## Charts: Model Accuracy vs. RL Flops

### Overview

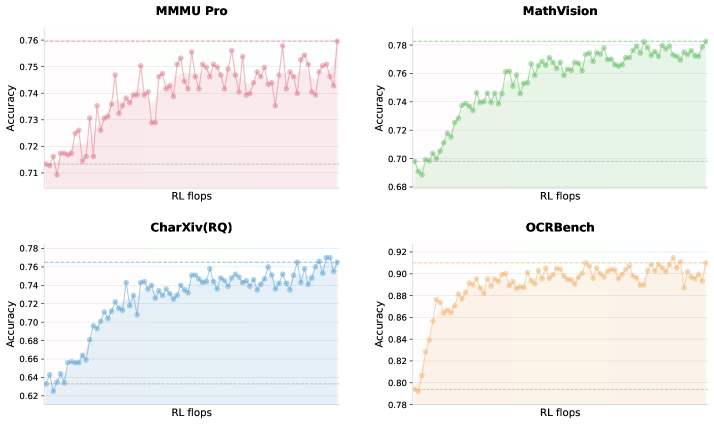

The image presents four separate line charts, each plotting "Accuracy" against "RL flops" for a different benchmark: MMMU Pro, MathVision, CharXiv(RQ), and OCRBench. Each chart shows how accuracy changes as RL flops increase. The charts are arranged in a 2x2 grid.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Labeled "RL flops". The scale appears to range from approximately 0 to 100 (units not specified).

* **Y-axis:** Labeled "Accuracy". The scale differs by panel; going by the approximate readings below, the plotted values span roughly 0.715 to 0.755 for MMMU Pro, 0.68 to 0.77 for MathVision, 0.64 to 0.75 for CharXiv(RQ), and 0.78 to 0.91 for OCRBench.

* **Data Series:** Each chart has a single line representing the accuracy trend.

* **Background:** Each chart has a lightly colored background, with a different color for each chart (pink for MMMU Pro, green for MathVision, blue for CharXiv(RQ), and orange for OCRBench).

### Detailed Analysis

**1. MMMU Pro (Top-Left)**

* **Line Color:** Pink

* **Trend:** The line generally slopes upward, but with significant fluctuations. It starts at approximately 0.715 accuracy at around 0 RL flops and reaches a peak of approximately 0.755 accuracy at around 80 RL flops, then declines slightly.

* **Data Points (approximate; RL flops, accuracy):**
  * (0, 0.715)
  * (20, 0.73)
  * (40, 0.745)
  * (60, 0.75)
  * (80, 0.755)
  * (100, 0.745)

**2. MathVision (Top-Right)**

* **Line Color:** Green

* **Trend:** The line shows a consistent upward trend, with less fluctuation than MMMU Pro. It starts at approximately 0.68 accuracy at around 0 RL flops and increases to approximately 0.77 accuracy at around 100 RL flops.

* **Data Points (approximate; RL flops, accuracy):**
  * (0, 0.68)
  * (20, 0.71)
  * (40, 0.73)
  * (60, 0.75)
  * (80, 0.76)
  * (100, 0.77)

**3. CharXiv(RQ) (Bottom-Left)**

* **Line Color:** Light Blue

* **Trend:** The line shows an upward trend, but with significant oscillations. It begins at approximately 0.64 accuracy at around 0 RL flops and reaches a peak of approximately 0.75 accuracy at around 60 RL flops, then fluctuates around that level.

* **Data Points (approximate; RL flops, accuracy):**
  * (0, 0.64)
  * (20, 0.68)
  * (40, 0.72)
  * (60, 0.75)
  * (80, 0.73)
  * (100, 0.74)

**4. OCRBench (Bottom-Right)**

* **Line Color:** Orange

* **Trend:** The line rises steeply at first and then levels off. It starts at approximately 0.78 accuracy at around 0 RL flops, increases to approximately 0.91 accuracy at around 40 RL flops, and then plateaus with a slight downward drift (to about 0.88 at 100 RL flops).

* **Data Points (approximate; RL flops, accuracy):**
  * (0, 0.78)
  * (20, 0.85)
  * (40, 0.91)
  * (60, 0.90)
  * (80, 0.89)
  * (100, 0.88)
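The approximate readings above can be gathered into one structure so the four benchmarks are easy to compare. The following is a minimal Python sketch, assuming the eyeballed values listed in this section (the underlying data is not given, and the RL flops units are unknown); the names `READINGS` and `summarize` are hypothetical. It reports each benchmark's starting accuracy, peak, final accuracy, and net gain.

```python
# Approximate (RL flops, accuracy) readings transcribed from the four panels.
# Assumption: these are eyeballed values, not the underlying data; flops units are unknown.
READINGS = {
    "MMMU Pro":    [(0, 0.715), (20, 0.73), (40, 0.745), (60, 0.75), (80, 0.755), (100, 0.745)],
    "MathVision":  [(0, 0.68), (20, 0.71), (40, 0.73), (60, 0.75), (80, 0.76), (100, 0.77)],
    "CharXiv(RQ)": [(0, 0.64), (20, 0.68), (40, 0.72), (60, 0.75), (80, 0.73), (100, 0.74)],
    "OCRBench":    [(0, 0.78), (20, 0.85), (40, 0.91), (60, 0.90), (80, 0.89), (100, 0.88)],
}


def summarize(points):
    """Return start accuracy, peak (flops, accuracy), final accuracy, and net gain."""
    start = points[0][1]
    final = points[-1][1]
    peak = max(points, key=lambda p: p[1])  # first reading with the highest accuracy
    return {"start": start, "peak": peak, "final": final, "net_gain": round(final - start, 3)}


for name, points in READINGS.items():
    s = summarize(points)
    print(f"{name:12s} start={s['start']:.3f} peak={s['peak'][1]:.3f}@{s['peak'][0]} "
          f"final={s['final']:.3f} net_gain={s['net_gain']:+.3f}")
```

With these readings, OCRBench peaks at 40 RL flops and CharXiv(RQ) at 60, while MathVision is still rising at the last reading.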

### Key Observations

* OCRBench demonstrates the fastest initial accuracy gains with increasing RL flops.

* MathVision shows the most consistent and stable accuracy improvement.

* MMMU Pro's curve fluctuates the most and shows the smallest overall gain (about 0.715 to 0.745), so its accuracy responds least predictably to additional RL flops.

* CharXiv(RQ) improves substantially (from about 0.64 to 0.75 by 60 RL flops) but oscillates around that level afterward.
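Two of the observations above can be checked against the approximate readings with simple arithmetic: the accuracy gained between 0 and 40 RL flops (early speed) and the number of consecutive readings where accuracy goes down (a crude consistency measure). This is a minimal Python sketch under the assumption that the six eyeballed readings per panel are representative; the helper names are hypothetical. Six coarse samples cannot capture the fluctuations between readings described in the Trend bullets, so this does not confirm or refute the volatility claims.

```python
# Approximate (RL flops, accuracy) readings; assumption: eyeballed from the panels.
READINGS = {
    "MMMU Pro":    [(0, 0.715), (20, 0.73), (40, 0.745), (60, 0.75), (80, 0.755), (100, 0.745)],
    "MathVision":  [(0, 0.68), (20, 0.71), (40, 0.73), (60, 0.75), (80, 0.76), (100, 0.77)],
    "CharXiv(RQ)": [(0, 0.64), (20, 0.68), (40, 0.72), (60, 0.75), (80, 0.73), (100, 0.74)],
    "OCRBench":    [(0, 0.78), (20, 0.85), (40, 0.91), (60, 0.90), (80, 0.89), (100, 0.88)],
}


def gain_until(points, x_end):
    """Accuracy gained between the first reading and the reading at x_end."""
    lookup = dict(points)
    return round(lookup[x_end] - points[0][1], 3)


def count_declines(points):
    """Number of consecutive reading pairs where accuracy goes down."""
    return sum(1 for (_, a), (_, b) in zip(points, points[1:]) if b < a)


early = {name: gain_until(p, 40) for name, p in READINGS.items()}
fastest = max(early, key=early.get)
declines = {name: count_declines(p) for name, p in READINGS.items()}

print(early)     # early gains: 0.03, 0.05, 0.08, 0.13
print(fastest)   # benchmark with the largest early gain
print(declines)  # MathVision is the only series with no decline
```

On these readings OCRBench gains about 0.13 in the first 40 units, and MathVision never declines between readings, which matches its description as the most consistent curve.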

### Interpretation

The charts track accuracy on four benchmarks as the compute spent on reinforcement learning (RL flops) increases. The differing slopes and fluctuations suggest that additional RL compute translates into accuracy with different efficiency and stability on each benchmark. OCRBench benefits most in the early stage, quickly reaching high accuracy (about 0.91). MathVision improves steadily and reliably. MMMU Pro's fluctuating accuracy may indicate that performance on it is more sensitive to the specific training process or data distribution. CharXiv(RQ) improves substantially, but with considerable variability.

The data suggest that increasing RL flops does not guarantee higher accuracy: MMMU Pro drops from about 0.755 to 0.745 between 80 and 100 RL flops, CharXiv(RQ) dips after 60, and OCRBench declines slightly after its peak at 40. Gains from additional compute therefore likely depend on the training setup and data, not on compute alone. OCRBench's leveling off indicates diminishing returns beyond roughly 40 RL flops, where further compute yields no further improvement. Charts like these are typically used to evaluate how efficiently additional RL compute converts into accuracy.
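The diminishing-returns point can be made concrete by computing the marginal gain (accuracy change per unit of RL flops) between consecutive readings. The following is a minimal Python sketch, assuming the approximate OCRBench readings listed earlier and evenly spaced flops in unspecified units; `marginal_gains` and `first_nonpositive_segment` are hypothetical helpers. The rate is expressed in accuracy per (unknown) RL-flops unit.

```python
# Approximate OCRBench readings (RL flops, accuracy); assumption: eyeballed from the panel.
OCRBENCH = [(0, 0.78), (20, 0.85), (40, 0.91), (60, 0.90), (80, 0.89), (100, 0.88)]


def marginal_gains(points):
    """Accuracy change per unit of RL flops for each consecutive pair of readings."""
    return [
        (x0, x1, round((a1 - a0) / (x1 - x0), 4))
        for (x0, a0), (x1, a1) in zip(points, points[1:])
    ]


def first_nonpositive_segment(points):
    """Start of the first interval where extra RL flops no longer raise accuracy."""
    for x0, _, rate in marginal_gains(points):
        if rate <= 0:
            return x0
    return None  # accuracy rose across every interval


for x0, x1, rate in marginal_gains(OCRBENCH):
    print(f"{x0:>3}-{x1:<3} flops: {rate:+.4f} accuracy per flop unit")

print(first_nonpositive_segment(OCRBENCH))
```

On these readings the rate falls from +0.0035 to +0.0030 and then turns slightly negative (-0.0005) from 40 RL flops onward, so the "plateau" is, more precisely, a mild decline.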