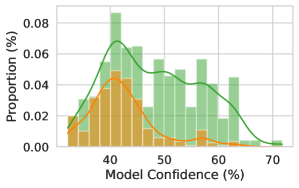

## Histogram with Overlaid Density Curves: Model Confidence Distribution

### Overview

The image displays a histogram comparing the distribution of model confidence percentages for correct and incorrect predictions. Two density curves (green and orange) are overlaid on the histogram bars, representing the proportion of predictions at each confidence level. The x-axis represents model confidence (40–70%), and the y-axis represents proportion (%).
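The figure does not say how the density curves were produced. A common choice for curves like these is a Gaussian kernel density estimate (KDE). The sketch below assumes that technique; the function name `make_kde` and the normal-reference bandwidth rule (h = 1.06 · sd · n^(-1/5)) are illustrative choices, not details taken from the source.

```python
import math
import statistics


def make_kde(samples, bandwidth=None):
    """Return a function estimating the density of `samples` at a point x.

    Assumption: a Gaussian kernel. If no bandwidth is given, the
    normal-reference rule of thumb h = 1.06 * sd * n^(-1/5) is used,
    which needs at least two non-identical samples.
    """
    n = len(samples)
    if bandwidth is None:
        bandwidth = 1.06 * statistics.stdev(samples) * n ** (-1 / 5)
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive; samples may be constant")
    # Normalising constant so the estimate integrates to 1
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))

    def density(x):
        # Sum of Gaussian bumps, one centred on each sample
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)

    return density
```

Evaluating `make_kde(correct_confidences)` on a grid from 40 to 70 would trace a curve comparable to the green one; `correct_confidences` is a hypothetical list of per-prediction confidences in percent.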

### Components/Axes

- **X-axis**: Model Confidence (%)
  - Range: 40% to 70%
  - Tick marks at 40, 50, 60, 70

- **Y-axis**: Proportion (%)
  - Range: 0.00% to 0.08%
  - Tick marks at 0.00, 0.02, 0.04, 0.06, 0.08

- **Legend**:
  - Green line: "Correct Predictions"
  - Orange line: "Incorrect Predictions"
  - Positioned in the top-right corner
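The y-axis is labeled as a percentage, yet its values stay below 0.08. That is consistent with a density-normalized histogram, where each bar's height is count / (n · bin_width) so that the bar areas sum to 1. The sketch below assumes that normalization (as produced, for example, by matplotlib's `hist(..., density=True)`); the helper name `density_histogram` is hypothetical.

```python
def density_histogram(values, lo, hi, bin_width):
    """Bar heights normalised so that sum(height * bin_width) == 1.

    Only values in [lo, hi] are counted; a value equal to `hi`
    is placed in the last bin.
    """
    n_bins = round((hi - lo) / bin_width)
    counts = [0] * n_bins
    for v in values:
        if lo <= v <= hi:
            i = min(int((v - lo) / bin_width), n_bins - 1)
            counts[i] += 1
    total = sum(counts)
    # Divide by both the in-range count and the bin width
    return [c / (total * bin_width) for c in counts]
```

As a rough check, a triangular curve peaking at 0.07 over a 30-point base encloses ½ × 30 × 0.07 ≈ 1.05, close to the unit area a density must have.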

### Detailed Analysis

1. **Green Curve (Correct Predictions)**:
   - Peaks at ~50% confidence with a proportion of ~0.07%.
   - Declines roughly symmetrically on either side, approaching ~0.00% at 40% and 70%.
   - Histogram bars (green) are tallest near 50%, indicating that correct predictions cluster around this confidence level.

2. **Orange Curve (Incorrect Predictions)**:
   - Peaks at ~45% confidence with a proportion of ~0.05%.
   - Declines more gradually, remaining above zero until ~60%.
   - Histogram bars (orange) are shorter and skewed toward lower confidence (40–50%).

3. **Distribution Trends**:
   - Correct predictions dominate the higher confidence bins (50–60%), while incorrect predictions are concentrated in the lower bins (40–50%).
   - Both curves taper off sharply beyond ~60%, leaving minimal proportions between 60% and 70%; the x-axis starts at 40%, so the distributions below that point are not shown.
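The peak locations and spreads above are read off the plot by eye. If the raw per-prediction confidences were available, they could be quantified per group as sketched below; the function `summarize` and its default bin width of 2.5 points are hypothetical choices, and the reported mode depends on that bin width.

```python
import statistics
from collections import Counter


def summarize(confidences, bin_width=2.5):
    """Modal bin centre, mean and standard deviation of confidences (in %).

    Needs at least two values for the standard deviation.
    """
    # Count how many confidences fall in each bin of width `bin_width`
    bins = Counter(int(c // bin_width) for c in confidences)
    modal_bin, _ = bins.most_common(1)[0]
    return {
        "mode": (modal_bin + 0.5) * bin_width,
        "mean": statistics.fmean(confidences),
        "stdev": statistics.stdev(confidences),
    }
```

Calling `summarize` on the correct and incorrect groups separately would turn "peaks at ~50% vs ~45%" into measured values, and a larger `stdev` for the incorrect group would support the observation that its curve declines more gradually.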

### Key Observations

- Correct predictions peak at a higher confidence (~50%) than incorrect ones (~45%).

- Beyond 50%, the correct-prediction curve falls off more steeply than the incorrect-prediction curve, which declines gradually toward ~60%.

- The histogram bars indicate that correct predictions are more frequent than incorrect ones in the 50–60% confidence range, where incorrect predictions are comparatively rare.
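The comparison of the 50–60% range can be made numeric by reporting, for each group, both the share of its predictions in the range and the raw count. The two can disagree when one group is much larger than the other, so it helps to report both. The function name `range_summary` is hypothetical.

```python
def range_summary(correct, incorrect, lo, hi):
    """Share and count of each group's predictions with lo <= confidence < hi."""

    def count(group):
        return sum(lo <= c < hi for c in group)

    def share(group):
        # Fraction of the group's own predictions that fall in the range
        return count(group) / len(group) if group else 0.0

    return {
        "correct_share": share(correct),
        "incorrect_share": share(incorrect),
        "correct_count": count(correct),
        "incorrect_count": count(incorrect),
    }
```

For example, `range_summary(correct_conf, incorrect_conf, 50, 60)` (with hypothetical input lists) quantifies the claim that correct predictions are more frequent in the 50–60% range.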

### Interpretation

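The separation between the two curves suggests that the model's confidence carries some signal about correctness: correct predictions peak at a higher confidence (~50%) than incorrect ones (~45%). This figure alone does not establish that the model is well calibrated, however. Calibration means that among predictions made at confidence *p*, roughly a fraction *p* turn out to be correct; checking it requires the accuracy within each confidence bin, which depends on the raw numbers of correct and incorrect predictions in that bin rather than on the shapes of the two curves. The orange curve's slower decline toward 60% shows that some misclassified cases receive confidence comparable to typical correct predictions, which may indicate overconfidence on those cases. Because both curves fall off sharply beyond 60% and approach zero by 70%, the model rarely produces high-confidence outputs. Better confidence estimation, particularly in the overlapping 45–60% region, could make the scores more reliable for separating correct from incorrect predictions.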
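Whether the model is well calibrated can be checked directly if the raw (confidence, correctness) pairs are available. A standard approach is a reliability table with equal-width bins, summarized by the expected calibration error (ECE): the count-weighted average gap between each bin's accuracy and its mean confidence. The sketch below assumes confidences in percent and ten equal-width bins; the function name is hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE with equal-width bins over [0, 100]% confidence.

    confidences: model confidence in percent; correct: matching booleans.
    Returns (ece_in_percentage_points, rows), where each row is
    (bin_low, mean_confidence, accuracy_percent, count).
    """
    width = 100 / n_bins
    bins = [[] for _ in range(n_bins)]
    for c, ok in zip(confidences, correct):
        i = min(int(c // width), n_bins - 1)  # 100% goes into the top bin
        bins[i].append((c, ok))
    n = len(confidences)
    ece, rows = 0.0, []
    for i, members in enumerate(bins):
        if not members:
            continue  # empty bins contribute nothing
        mean_conf = sum(c for c, _ in members) / len(members)
        acc = 100 * sum(ok for _, ok in members) / len(members)
        # Weight each bin's |accuracy - confidence| gap by its share of predictions
        ece += len(members) / n * abs(acc - mean_conf)
        rows.append((i * width, mean_conf, acc, len(members)))
    return ece, rows
```

A small ECE, with each bin's accuracy close to its mean confidence, would support a calibration claim; the normalized curves in the figure cannot supply this on their own.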