## Bar Chart: Model Accuracy by Subject

### Overview

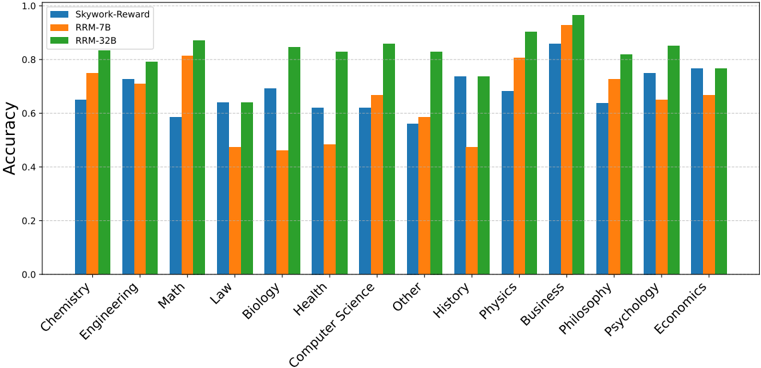

The image is a grouped bar chart comparing the accuracy of three models (Skywork-Reward, RRM-7B, and RRM-32B) across 14 subject categories. The y-axis represents accuracy, ranging from 0.0 to 1.0. The x-axis lists the subjects: Chemistry, Engineering, Math, Law, Biology, Health, Computer Science, Other, History, Physics, Business, Philosophy, Psychology, and Economics. Each subject has three bars, one per model.

### Components/Axes

* **Title:** (Inferred) Model Accuracy by Subject

* **Y-axis:**
  * **Label:** Accuracy
  * **Scale:** 0.0 to 1.0, with tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis:**
  * **Label:** Subjects
  * **Categories:** Chemistry, Engineering, Math, Law, Biology, Health, Computer Science, Other, History, Physics, Business, Philosophy, Psychology, Economics

* **Legend:** Located in the top-left corner.
  * **Skywork-Reward:** Blue
  * **RRM-7B:** Orange
  * **RRM-32B:** Green
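
The layout described above (three bars per subject, centered on one tick per category) can be sketched as a small position calculation. This is only an illustration of how such a grouped layout is commonly computed, not the code that produced the figure; the function name `grouped_bar_offsets` and the `group_width` default of 0.8 are assumptions.

```python
def grouped_bar_offsets(n_groups, n_series, group_width=0.8):
    """Compute x-centers for a grouped bar chart.

    Returns (bar_width, positions), where positions[s][g] is the x-center
    of series s's bar in group g. Groups sit on integer ticks 0..n_groups-1.
    """
    bar_width = group_width / n_series
    positions = []
    for s in range(n_series):
        # Shift each series so a group's bars are centered on its tick.
        shift = (s - (n_series - 1) / 2) * bar_width
        positions.append([g + shift for g in range(n_groups)])
    return bar_width, positions


# 14 subjects, 3 models (Skywork-Reward, RRM-7B, RRM-32B).
bar_width, positions = grouped_bar_offsets(n_groups=14, n_series=3)
```

With matplotlib, each `positions[s]` could then be passed to `ax.bar(positions[s], values, width=bar_width, label=...)`, followed by `ax.set_ylim(0, 1)` and `ax.legend(loc="upper left")` to match the axis range and legend placement listed here.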

### Detailed Analysis

The values below are approximate readings from the chart for each model, by subject:

* **Chemistry:**
  * Skywork-Reward (Blue): ~0.65
  * RRM-7B (Orange): ~0.75
  * RRM-32B (Green): ~0.80

* **Engineering:**
  * Skywork-Reward (Blue): ~0.72
  * RRM-7B (Orange): ~0.70
  * RRM-32B (Green): ~0.78

* **Math:**
  * Skywork-Reward (Blue): ~0.58
  * RRM-7B (Orange): ~0.78
  * RRM-32B (Green): ~0.82

* **Law:**
  * Skywork-Reward (Blue): ~0.64
  * RRM-7B (Orange): ~0.64
  * RRM-32B (Green): ~0.83

* **Biology:**
  * Skywork-Reward (Blue): ~0.64
  * RRM-7B (Orange): ~0.47
  * RRM-32B (Green): ~0.83

* **Health:**
  * Skywork-Reward (Blue): ~0.62
  * RRM-7B (Orange): ~0.70
  * RRM-32B (Green): ~0.82

* **Computer Science:**
  * Skywork-Reward (Blue): ~0.66
  * RRM-7B (Orange): ~0.62
  * RRM-32B (Green): ~0.82

* **Other:**
  * Skywork-Reward (Blue): ~0.56
  * RRM-7B (Orange): ~0.58
  * RRM-32B (Green): ~0.66

* **History:**
  * Skywork-Reward (Blue): ~0.75
  * RRM-7B (Orange): ~0.47
  * RRM-32B (Green): ~0.74

* **Physics:**
  * Skywork-Reward (Blue): ~0.68
  * RRM-7B (Orange): ~0.74
  * RRM-32B (Green): ~0.81

* **Business:**
  * Skywork-Reward (Blue): ~0.80
  * RRM-7B (Orange): ~0.82
  * RRM-32B (Green): ~0.90

* **Philosophy:**
  * Skywork-Reward (Blue): ~0.84
  * RRM-7B (Orange): ~0.90
  * RRM-32B (Green): ~0.94

* **Psychology:**
  * Skywork-Reward (Blue): ~0.64
  * RRM-7B (Orange): ~0.72
  * RRM-32B (Green): ~0.81

* **Economics:**
  * Skywork-Reward (Blue): ~0.77
  * RRM-7B (Orange): ~0.67
  * RRM-32B (Green): ~0.67

### Key Observations

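* RRM-32B (Green) has the highest accuracy in 12 of the 14 subjects. The exceptions are History (~0.74 vs. ~0.75 for Skywork-Reward) and Economics (~0.67 vs. ~0.77 for Skywork-Reward).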

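* RRM-7B (Orange) is below RRM-32B (Green) in every subject except Economics, where the two tie at ~0.67. Against Skywork-Reward (Blue) the picture is mixed: RRM-7B is higher in 8 subjects, tied in Law, and lower in 5 (Engineering, Biology, Computer Science, History, and Economics).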

* Accuracy varies substantially across subjects for all models: roughly 0.56 to 0.84 for Skywork-Reward, 0.47 to 0.90 for RRM-7B, and 0.66 to 0.94 for RRM-32B.

* Philosophy and Business have the two highest accuracy scores for every model (~0.80 to ~0.94).

* Biology and History are the weakest subjects for RRM-7B, at ~0.47 each.
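
The comparisons above can be checked mechanically against the approximate values listed under Detailed Analysis. The following is a minimal sketch; the helper names (`top_models`, `head_to_head`, `mean_accuracy`) are hypothetical, and the numbers are visual readings from the chart rather than exact results, so near-ties such as History should be treated with caution.

```python
MODELS = ("Skywork-Reward", "RRM-7B", "RRM-32B")

# Approximate accuracies read from the chart, in MODELS order.
SCORES = {
    "Chemistry": (0.65, 0.75, 0.80),
    "Engineering": (0.72, 0.70, 0.78),
    "Math": (0.58, 0.78, 0.82),
    "Law": (0.64, 0.64, 0.83),
    "Biology": (0.64, 0.47, 0.83),
    "Health": (0.62, 0.70, 0.82),
    "Computer Science": (0.66, 0.62, 0.82),
    "Other": (0.56, 0.58, 0.66),
    "History": (0.75, 0.47, 0.74),
    "Physics": (0.68, 0.74, 0.81),
    "Business": (0.80, 0.82, 0.90),
    "Philosophy": (0.84, 0.90, 0.94),
    "Psychology": (0.64, 0.72, 0.81),
    "Economics": (0.77, 0.67, 0.67),
}


def top_models(scores):
    """Map each subject to the model(s) with the highest accuracy (ties kept)."""
    result = {}
    for subject, row in scores.items():
        best = max(row)
        result[subject] = [m for m, v in zip(MODELS, row) if v == best]
    return result


def head_to_head(scores, a, b):
    """Count subjects where model a is above, tied with, or below model b."""
    ia, ib = MODELS.index(a), MODELS.index(b)
    wins = sum(row[ia] > row[ib] for row in scores.values())
    ties = sum(row[ia] == row[ib] for row in scores.values())
    return wins, ties, len(scores) - wins - ties


def mean_accuracy(scores):
    """Unweighted mean accuracy per model across all subjects."""
    n = len(scores)
    return {m: sum(row[i] for row in scores.values()) / n
            for i, m in enumerate(MODELS)}


if __name__ == "__main__":
    tops = top_models(SCORES)
    print(sum(t == ["RRM-32B"] for t in tops.values()))      # 12
    print(head_to_head(SCORES, "RRM-7B", "Skywork-Reward"))  # (8, 1, 5)
    print(head_to_head(SCORES, "RRM-32B", "RRM-7B"))         # (13, 1, 0)
    for model, mean in mean_accuracy(SCORES).items():
        print(f"{model}: {mean:.3f}")
```

The unweighted mean treats every subject equally; if the subjects contain different numbers of questions, which the chart does not show, an overall accuracy figure would differ.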

### Interpretation

The bar chart compares the accuracy of three models across 14 subject categories. RRM-32B has the highest accuracy in 12 of them, which suggests it is the strongest model overall, although Skywork-Reward edges it out in History (~0.75 vs. ~0.74) and leads clearly in Economics (~0.77 vs. ~0.67). Accuracy also depends on the subject for every model, possibly because of the nature of the subject matter or the training data used for each model. RRM-7B's low scores in Biology and History (~0.47 each) could indicate specific weaknesses in its architecture or training. The high accuracy in Philosophy and Business might reflect the nature of the questions or the availability of relevant training data. The chart alone cannot distinguish between these explanations; doing so would require examining the models' architectures, training data, and the specific questions used for evaluation.