
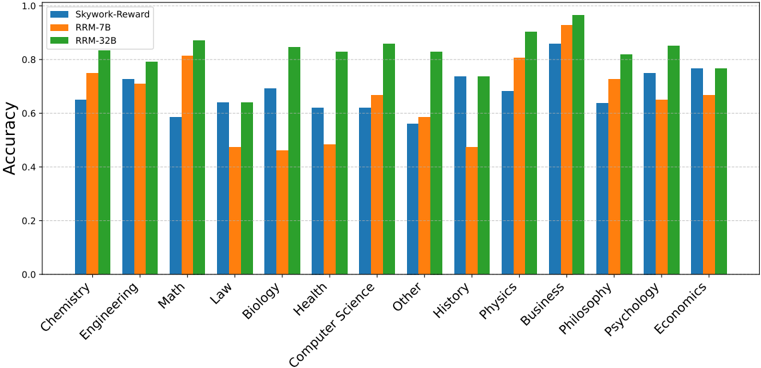

## Bar Chart: Accuracy Comparison Across Disciplines

### Overview

This image presents a bar chart comparing the accuracy of three models (Skywork-Reward, RRM-7B, and RRM-32B) across fourteen disciplines. Accuracy is shown on the y-axis, ranging from 0.0 to 1.0, while the x-axis lists the disciplines: Chemistry, Engineering, Math, Law, Biology, Health, Computer Science, Other, History, Physics, Business, Philosophy, Psychology, and Economics. Each discipline has three bars, one per model.

### Components/Axes

* **X-axis:** Disciplines (Chemistry, Engineering, Math, Law, Biology, Health, Computer Science, Other, History, Physics, Business, Philosophy, Psychology, Economics)

* **Y-axis:** Accuracy (Scale from 0.0 to 1.0)

* **Legend:**
  * Skywork-Reward (Light Blue)
  * RRM-7B (Orange)
  * RRM-32B (Green)

### Detailed Analysis

The chart consists of 14 groups of three bars, one for each discipline. The following are approximate accuracy values, read from the chart, with uncertainty due to bar width and visual estimation:

| Discipline | Skywork-Reward | RRM-7B | RRM-32B |
| --- | --- | --- | --- |
| Chemistry | ~0.68 | ~0.74 | ~0.76 |
| Engineering | ~0.72 | ~0.78 | ~0.80 |
| Math | ~0.62 | ~0.82 | ~0.88 |
| Law | ~0.70 | ~0.84 | ~0.89 |
| Biology | ~0.66 | ~0.72 | ~0.82 |
| Health | ~0.45 | ~0.62 | ~0.80 |
| Computer Science | ~0.64 | ~0.70 | ~0.86 |
| Other | ~0.56 | ~0.66 | ~0.88 |
| History | ~0.76 | ~0.78 | ~0.84 |
| Physics | ~0.58 | ~0.86 | ~0.92 |
| Business | ~0.86 | ~0.90 | ~0.94 |
| Philosophy | ~0.68 | ~0.76 | ~0.92 |
| Psychology | ~0.72 | ~0.78 | ~0.86 |
| Economics | ~0.64 | ~0.70 | ~0.76 |

**Trends:**

* **RRM-32B** has the highest accuracy in every discipline; its bar is the tallest in each group.

* **RRM-7B** outperforms **Skywork-Reward** in every discipline by the estimates above, although the margin is as small as ~0.02 in History.

* **Skywork-Reward** has the lowest accuracy in every discipline.

* The largest gaps between the best and worst model appear in **Health** (~0.35), **Physics** (~0.34), and **Other** (~0.32).

* The smallest gaps (~0.08) appear in **Chemistry**, **Engineering**, **History**, and **Business**.
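The gap statements in this list can be checked directly against the estimated values. The following is a minimal sketch, assuming the approximate accuracies from the Detailed Analysis section are taken at face value; the names `ACCURACY` and `spread` are illustrative and not part of the source.

```python
# Approximate accuracies read from the chart (Detailed Analysis values),
# ordered as (Skywork-Reward, RRM-7B, RRM-32B). These are visual estimates.
ACCURACY = {
    "Chemistry": (0.68, 0.74, 0.76),
    "Engineering": (0.72, 0.78, 0.80),
    "Math": (0.62, 0.82, 0.88),
    "Law": (0.70, 0.84, 0.89),
    "Biology": (0.66, 0.72, 0.82),
    "Health": (0.45, 0.62, 0.80),
    "Computer Science": (0.64, 0.70, 0.86),
    "Other": (0.56, 0.66, 0.88),
    "History": (0.76, 0.78, 0.84),
    "Physics": (0.58, 0.86, 0.92),
    "Business": (0.86, 0.90, 0.94),
    "Philosophy": (0.68, 0.76, 0.92),
    "Psychology": (0.72, 0.78, 0.86),
    "Economics": (0.64, 0.70, 0.76),
}


def spread(scores):
    """Gap between the best and worst model in one discipline."""
    # Rounding to two decimals hides floating-point noise in the subtraction.
    return round(max(scores) - min(scores), 2)


spreads = {name: spread(scores) for name, scores in ACCURACY.items()}

# Disciplines ordered from widest to narrowest gap.
ranked = sorted(spreads, key=spreads.get, reverse=True)
largest = ranked[:3]

# Every discipline that ties for the narrowest gap, in chart order.
smallest_gap = min(spreads.values())
smallest = [name for name in ACCURACY if spreads[name] == smallest_gap]

# Ordering checks: is RRM-32B the best, and RRM-7B ahead of Skywork-Reward?
rrm32b_always_best = all(s[2] == max(s) for s in ACCURACY.values())
rrm7b_beats_skywork = all(s[1] > s[0] for s in ACCURACY.values())

print("Largest gaps:", [(name, spreads[name]) for name in largest])
print("Smallest gaps:", [(name, spreads[name]) for name in smallest])
print("RRM-32B best everywhere:", rrm32b_always_best)
print("RRM-7B above Skywork-Reward everywhere:", rrm7b_beats_skywork)
```

Because the inputs are read off the chart by eye, differences of a few hundredths between disciplines should not be treated as meaningful.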

### Key Observations

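* RRM-32B reaches roughly 0.80 or higher in 12 of the 14 disciplines (Chemistry and Economics are the exceptions at ~0.76) and peaks at ~0.94 in Business.

* Skywork-Reward's accuracy is lowest in "Health" (~0.45), its only value below 0.5, suggesting a potential weakness in this domain.

* The difference in accuracy between RRM-7B and RRM-32B varies widely: it is largest in Other (~0.22), Health (~0.18), and Computer Science and Philosophy (~0.16 each), and smallest in Chemistry and Engineering (~0.02 each).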
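The threshold and model-size observations can be recomputed from the same estimates. This is a rough sketch under the assumption that the read-off values are accurate to about two decimals; `RRM`, `THRESHOLD`, and `gains` are hypothetical names chosen for illustration.

```python
# (RRM-7B, RRM-32B) approximate accuracies per discipline, as estimated
# from the chart in the Detailed Analysis section.
RRM = {
    "Chemistry": (0.74, 0.76),
    "Engineering": (0.78, 0.80),
    "Math": (0.82, 0.88),
    "Law": (0.84, 0.89),
    "Biology": (0.72, 0.82),
    "Health": (0.62, 0.80),
    "Computer Science": (0.70, 0.86),
    "Other": (0.66, 0.88),
    "History": (0.78, 0.84),
    "Physics": (0.86, 0.92),
    "Business": (0.90, 0.94),
    "Philosophy": (0.76, 0.92),
    "Psychology": (0.78, 0.86),
    "Economics": (0.70, 0.76),
}

THRESHOLD = 0.80  # illustrative cut-off for "high" accuracy

# Disciplines where the larger model stays below the threshold.
below_threshold = [d for d, (_, big) in RRM.items() if big < THRESHOLD]

# Accuracy gained by moving from RRM-7B to RRM-32B, rounded to hide
# floating-point noise.
gains = {d: round(big - small, 2) for d, (small, big) in RRM.items()}
top_gains = sorted(gains, key=gains.get, reverse=True)[:2]
min_gain = min(gains.values())

print("RRM-32B below", THRESHOLD, "in:", below_threshold)
print("Largest 7B -> 32B gains:", [(d, gains[d]) for d in top_gains])
print("Smallest 7B -> 32B gain:", min_gain)
```

A different cut-off changes the count: with estimates this coarse, values sitting exactly on the threshold (Engineering and Health at ~0.80) could fall on either side.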

### Interpretation

The data suggests that RRM-32B is more accurate than both RRM-7B and Skywork-Reward in all fourteen disciplines shown, by estimated margins ranging from about 0.02 to about 0.35. This is consistent with larger model size (7B to 32B parameters) improving performance, although the chart alone cannot separate the effect of size from other differences between the models. RRM-7B also scores above Skywork-Reward in every discipline, which may indicate that the architecture or training data of the RRM models is more effective. The varying gaps across disciplines suggest that the models' strengths and weaknesses are domain-specific. The low accuracy of Skywork-Reward in "Health" could be due to specialized terminology or a lack of relevant training data in that field, but the chart does not show the cause. Overall, the chart points to model size and training data as important factors in achieving high accuracy, and to a possible need for domain-specific optimization. Among the three models tested, RRM-32B appears to be the most robust across disciplines.