## Diagram: Thinking States vs. Chain-of-Thought

### Overview

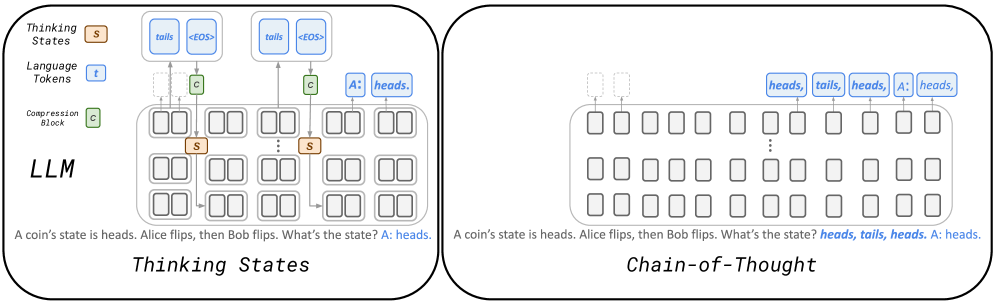

The image is a comparative diagram of two approaches to language model processing, "Thinking States" and "Chain-of-Thought", applied by a Large Language Model (LLM) to the same simple coin flip problem. In the "Thinking States" panel, the intermediate reasoning appears to be held in internal states, and only the final answer is emitted as language tokens. In the "Chain-of-Thought" panel, the intermediate steps are written out as language tokens before the final answer.

### Components/Axes

**Left Side: Thinking States**

* **Title:** Thinking States

* **LLM:** Indicates the use of a Large Language Model.

* **Thinking States (Legend):** Represented by an orange box labeled "s".

* **Language Tokens (Legend):** Represented by a blue box labeled "t".

* **Compression Block (Legend):** Represented by a green box labeled "c".

* **Internal State Representation:** A grid of rounded rectangles, some containing the "s" label (orange). Arrows indicate the flow of information between these states.

* **Input Tokens:** "tails" and "<EOS>" (end of sequence) in blue boxes.

* **Compression Blocks:** Green boxes labeled "c" connected to the input tokens and internal states.

* **Output:** "A: heads." in a blue box.

* **Text:** "A coin's state is heads. Alice flips, then Bob flips. What's the state? A: heads."

**Right Side: Chain-of-Thought**

* **Title:** Chain-of-Thought

* **Processing Units:** A grid of rounded rectangles, similar to the "Thinking States" diagram, but without internal labels.

* **Input Tokens:** Represented by dashed boxes above the grid.

* **Output Tokens:** "heads, tails, heads. A: heads." in blue boxes.

* **Text:** "A coin's state is heads. Alice flips, then Bob flips. What's the state? heads, tails, heads. A: heads."

### Detailed Analysis

**Thinking States:**

* The LLM grid carries explicit internal structure: orange "s" states inside the grid and green "c" compression blocks connected to the input tokens and internal states.

* The "s" (orange) labels within the grid likely represent the model's internal "thinking states" as it processes the input.

* The "c" (green) compression blocks suggest a mechanism for compressing or summarizing information.

* The arrows show a more intricate flow of information than in the Chain-of-Thought panel.

* The model directly outputs "A: heads."

**Chain-of-Thought:**

* The diagram shows a simpler, more direct flow: the intermediate reasoning appears in the output tokens rather than in labeled internal states.

* The grid of rounded rectangles represents processing units, but without explicit internal state labels.

* The model outputs a sequence of tokens, "heads, tails, heads. A: heads.", in which the first three tokens are the intermediate reasoning steps (the coin's state initially, after Alice's flip, and after Bob's flip).
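
To make the coin flip trace concrete, here is a minimal Python sketch that reproduces the two output strings shown in the diagram. It only illustrates the task and the difference in what is emitted; it is not how either method works inside a model. The function names (`flip`, `coin_trace`, `chain_of_thought_output`, `direct_output`) are hypothetical and chosen for this example.

```python
def flip(state: str) -> str:
    """Return the opposite coin face."""
    return "tails" if state == "heads" else "heads"


def coin_trace(initial: str, flips: int) -> list[str]:
    """List the coin's state before any flip and after each flip."""
    trace = [initial]
    for _ in range(flips):
        trace.append(flip(trace[-1]))
    return trace


def chain_of_thought_output(initial: str, flips: int) -> str:
    """Emit every intermediate state as text, then the answer."""
    trace = coin_trace(initial, flips)
    return f"{', '.join(trace)}. A: {trace[-1]}."


def direct_output(initial: str, flips: int) -> str:
    """Emit only the answer; intermediate states stay out of the text."""
    return f"A: {coin_trace(initial, flips)[-1]}."


# Alice flips, then Bob flips: two flips starting from heads.
print(chain_of_thought_output("heads", 2))  # heads, tails, heads. A: heads.
print(direct_output("heads", 2))            # A: heads.
```

Both functions compute the same trace; they differ only in whether the intermediate states are written to the output, which mirrors the contrast between the two panels.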

### Key Observations

* The "Thinking States" approach emphasizes internal state management and compression.

* The "Chain-of-Thought" approach emphasizes explicit, sequential reasoning steps.

* Both approaches aim to solve the same problem but use different mechanisms.

### Interpretation

The diagram illustrates two different ways an LLM can work through the same problem. "Thinking States" suggests that the intermediate reasoning is kept in an abstract internal representation, so the output contains only "A: heads." "Chain-of-Thought" makes the reasoning transparent by writing each step ("heads, tails, heads") as visible tokens before the answer. The choice between these approaches (or a hybrid) can affect the model's performance, interpretability, and ability to generalize to new tasks. The "Thinking States" approach might be more efficient for certain tasks, while the "Chain-of-Thought" approach might be easier to debug and understand. The coin flip example is a simplified illustration, but the same contrast applies to more complex reasoning tasks.