## Line Chart: Accuracy vs. Number of Layers in Recurrence

### Overview

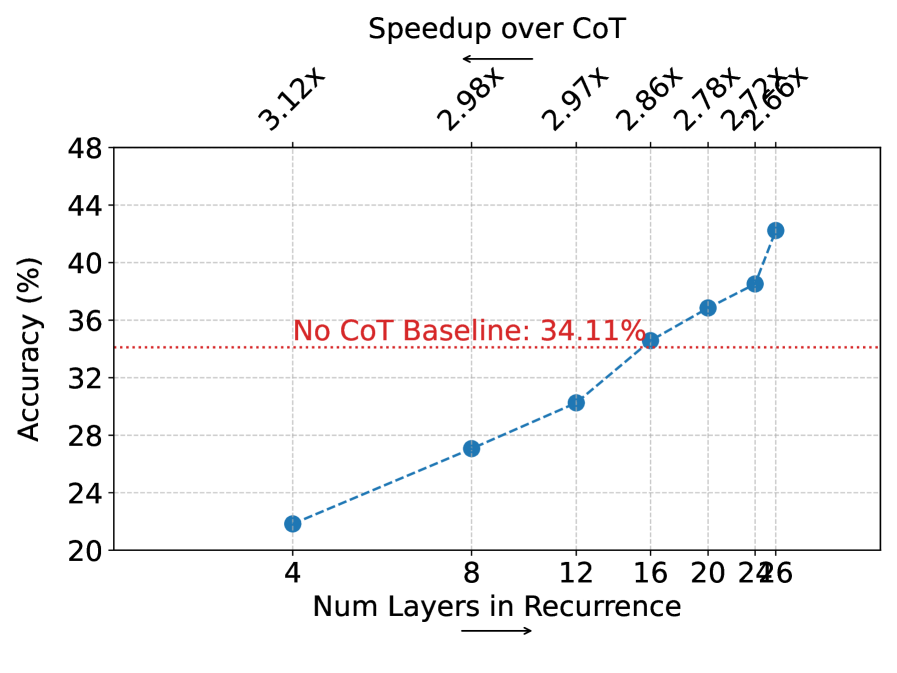

The image is a line chart plotting accuracy (%) against the number of layers in recurrence. It also displays the speedup over CoT (Chain of Thought) for each number of layers. A horizontal line marks the "No CoT Baseline" accuracy.

### Components/Axes

* **X-axis:** "Num Layers in Recurrence". The axis ranges from approximately 4 to 26, with tick marks at 4, 8, 12, 16, 20, and 24. An arrow points to the right, indicating the direction of increasing layers.

* **Y-axis:** "Accuracy (%)". The axis ranges from 20% to 48%, with tick marks at 20, 24, 28, 32, 36, 40, 44, and 48.

* **Title:** "Speedup over CoT" is displayed above the chart.

* **Horizontal Line:** A dotted red line represents the "No CoT Baseline: 34.11%".

* **Data Series:** A blue dashed line connects data points representing the accuracy for different numbers of layers.

* **Speedup Values:** Numerical values (e.g., 3.12x, 2.98x) are displayed above the chart, corresponding to specific data points.

### Detailed Analysis

* **No CoT Baseline:** The red dotted line is at 34.11% accuracy.

* **Data Series (Accuracy vs. Num Layers):** The blue dashed line shows an upward trend, indicating that accuracy generally increases with the number of layers in recurrence.
    * At 4 layers, the accuracy is approximately 22%.
    * At 8 layers, the accuracy is approximately 27%.
    * At 12 layers, the accuracy is approximately 31%.
    * At 16 layers, the accuracy is approximately 33%.
    * At 20 layers, the accuracy is approximately 37%.
    * At 24 layers, the accuracy is approximately 39%.
    * At 26 layers, the accuracy is approximately 43%.

* **Speedup over CoT:**
    * At 4 layers, the speedup is 3.12x.
    * At 8 layers, the speedup is 2.98x.
    * At 12 layers, the speedup is 2.97x.
    * At 16 layers, the speedup is 2.86x.
    * At 20 layers, the speedup is 2.78x.
    * At 24 layers, the speedup is 2.72x.
    * At 26 layers, the speedup is 2.66x.
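
The readings listed above can be collected into a small script to check where the curve crosses the baseline. This is a minimal sketch: the accuracy values are the approximate visual estimates from this description, not exact figures, and the names `first_layer_above`, `layers`, `accuracy`, and `speedup` are illustrative choices rather than anything taken from the chart's source.

```python
# Values transcribed from the chart description.
# Accuracies are approximate visual readings, not exact measurements.
NO_COT_BASELINE = 34.11  # percent, from the dotted red line

layers = [4, 8, 12, 16, 20, 24, 26]
accuracy = [22, 27, 31, 33, 37, 39, 43]               # percent (approximate)
speedup = [3.12, 2.98, 2.97, 2.86, 2.78, 2.72, 2.66]  # x over CoT


def first_layer_above(baseline, layers, accuracy):
    """Return the smallest listed layer count whose accuracy exceeds baseline."""
    for n, acc in zip(layers, accuracy):
        if acc > baseline:
            return n
    return None  # no listed configuration beats the baseline


crossover = first_layer_above(NO_COT_BASELINE, layers, accuracy)
print(f"First listed configuration above the baseline: {crossover} layers")

# Per-configuration margin against the baseline, alongside the speedup.
for n, acc, s in zip(layers, accuracy, speedup):
    margin = acc - NO_COT_BASELINE
    print(f"{n:>2} layers: {margin:+6.2f} points vs. baseline, {s:.2f}x speedup")
```

Under these readings, the configurations with 16 or fewer layers sit below the baseline, and those with 20 or more sit above it.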

### Key Observations

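* The accuracy increases at every listed point as the number of layers in recurrence increases, from approximately 22% at 4 layers to approximately 43% at 26 layers.

* The speedup over CoT decreases as the number of layers in recurrence increases, from 3.12x at 4 layers to 2.66x at 26 layers.

* The accuracy crosses the "No CoT Baseline" (34.11%) between 16 layers (approximately 33%) and 20 layers (approximately 37%). At 26 layers (approximately 43%), it is about 9 percentage points above the baseline.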

### Interpretation

The chart suggests that increasing the number of layers in recurrence improves the accuracy of the model, at the cost of a lower speedup over the Chain of Thought (CoT) method. The trade-off is uneven: accuracy roughly doubles between 4 and 26 layers (approximately 22% to 43%), while the speedup falls only from 3.12x to 2.66x, a relative decline of about 15%. Recurrence helps only once enough layers are included: configurations with 16 or fewer layers fall below the "No CoT Baseline" of 34.11%, whereas the 26-layer configuration exceeds it by about 9 percentage points while still running more than 2.6x faster than CoT.
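
The size of this trade-off can be made concrete with a short calculation. The sketch below uses only the endpoint readings from this description (the accuracies are approximate), and the variable names are illustrative.

```python
# Endpoint readings from the chart description; accuracies are approximate.
acc_4, acc_26 = 22.0, 43.0            # percent at 4 and 26 layers
speedup_4, speedup_26 = 3.12, 2.66    # x over CoT at 4 and 26 layers

# Absolute accuracy gain, in percentage points.
accuracy_gain_points = acc_26 - acc_4

# Relative decline in speedup, as a percentage of the 4-layer speedup.
speedup_drop_pct = (speedup_4 - speedup_26) / speedup_4 * 100

print(f"Accuracy gain: {accuracy_gain_points:.0f} points")
print(f"Relative speedup decline: {speedup_drop_pct:.1f}%")
```

Because the accuracy figures are read off the plot, the results should be treated as rough magnitudes rather than exact values.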