TECHNICAL ASSET FINGERPRINT

7226bc6a0d1f3d9af6cdeee8

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: Evaluation Accuracy and Training Reward vs. Training Steps for Different Algorithms

### Overview

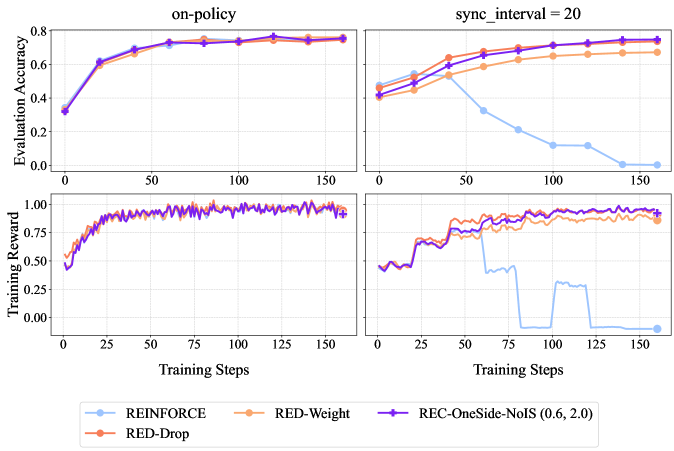

The image presents four line graphs arranged in a 2x2 grid. The graphs depict the performance of different reinforcement learning algorithms, namely REINFORCE, RED-Weight, REC-OneSide-NoIS (0.6, 2.0), and RED-Drop, across varying training steps. The top row displays "Evaluation Accuracy" while the bottom row displays "Training Reward". The left column shows results for "on-policy" training, and the right column shows results for "sync_interval = 20".

### Components/Axes

**General:**

* **X-axis (all plots):** Training Steps, ranging from 0 to 150. Increments are marked at 0, 25, 50, 75, 100, 125, and 150.

* **Legend (bottom):** Located below the four plots.

    * REINFORCE (light blue)

    * RED-Drop (orange-red)

    * RED-Weight (light orange)

    * REC-OneSide-NoIS (0.6, 2.0) (purple)

**Top-Left Plot (on-policy, Evaluation Accuracy):**

* **Title:** on-policy

* **Y-axis:** Evaluation Accuracy, ranging from 0.0 to 0.8, with increments of 0.2.

**Top-Right Plot (sync_interval = 20, Evaluation Accuracy):**

* **Title:** sync_interval = 20

* **Y-axis:** Evaluation Accuracy, ranging from 0.0 to 0.8, with increments of 0.2.

**Bottom-Left Plot (on-policy, Training Reward):**

* **Y-axis:** Training Reward, ranging from 0.00 to 1.00, with increments of 0.25.

**Bottom-Right Plot (sync_interval = 20, Training Reward):**

* **Y-axis:** Training Reward, ranging from 0.00 to 1.00, with increments of 0.25.

### Detailed Analysis

**Top-Left Plot (on-policy, Evaluation Accuracy):**

* **REINFORCE (light blue):** Starts at approximately 0.35, increases sharply to around 0.65 by step 25, and then plateaus around 0.75.

* **RED-Drop (orange-red):** Starts at approximately 0.35, increases sharply to around 0.60 by step 25, and then plateaus around 0.75.

* **RED-Weight (light orange):** Starts at approximately 0.35, increases sharply to around 0.65 by step 25, and then plateaus around 0.75.

* **REC-OneSide-NoIS (0.6, 2.0) (purple):** Starts at approximately 0.35, increases sharply to around 0.70 by step 25, and then plateaus around 0.78.

**Top-Right Plot (sync_interval = 20, Evaluation Accuracy):**

* **REINFORCE (light blue):** Starts at approximately 0.50, increases slightly to around 0.55 by step 25, then decreases steadily to approximately 0.05 by step 150.

* **RED-Drop (orange-red):** Starts at approximately 0.40, increases steadily to around 0.70 by step 75, and then plateaus around 0.70.

* **RED-Weight (light orange):** Starts at approximately 0.50, increases steadily to around 0.65 by step 75, and then plateaus around 0.65.

* **REC-OneSide-NoIS (0.6, 2.0) (purple):** Starts at approximately 0.45, increases steadily to around 0.75 by step 75, and then plateaus around 0.78.

**Bottom-Left Plot (on-policy, Training Reward):**

* **REINFORCE (light blue):** Starts at approximately 0.55, increases sharply to around 0.90 by step 25, and then fluctuates around 0.95.

* **RED-Drop (orange-red):** Starts at approximately 0.55, increases sharply to around 0.85 by step 25, and then fluctuates around 0.95.

* **RED-Weight (light orange):** Starts at approximately 0.55, increases sharply to around 0.90 by step 25, and then fluctuates around 0.95.

* **REC-OneSide-NoIS (0.6, 2.0) (purple):** Starts at approximately 0.45, increases sharply to around 0.90 by step 25, and then fluctuates around 0.95.

**Bottom-Right Plot (sync_interval = 20, Training Reward):**

* **REINFORCE (light blue):** Starts at approximately 0.60, increases to around 0.70 by step 25, then drops sharply and fluctuates between 0.00 and 0.25 after step 75.

* **RED-Drop (orange-red):** Starts at approximately 0.60, increases steadily to around 0.90 by step 50, and then fluctuates around 0.95.

* **RED-Weight (light orange):** Starts at approximately 0.60, increases steadily to around 0.85 by step 50, and then fluctuates around 0.95.

* **REC-OneSide-NoIS (0.6, 2.0) (purple):** Starts at approximately 0.55, increases steadily to around 0.85 by step 50, and then fluctuates around 0.95.

### Key Observations

* In the "on-policy" setting, all algorithms achieve similar performance in terms of both evaluation accuracy and training reward.

* In the "sync_interval = 20" setting, REINFORCE performs significantly worse than the other algorithms in both evaluation accuracy and training reward. The other three algorithms (RED-Weight, REC-OneSide-NoIS, and RED-Drop) maintain relatively high performance.

* The "sync_interval = 20" setting seems to negatively impact REINFORCE's ability to learn and maintain a high reward.

### Interpretation

The data suggests that the "sync_interval = 20" setting introduces a challenge that REINFORCE struggles to overcome, while RED-Weight, REC-OneSide-NoIS, and RED-Drop are more robust to this condition. This could be due to the way REINFORCE updates its policy, making it more sensitive to delayed or infrequent synchronization. The other algorithms may employ techniques that mitigate the impact of asynchronous updates. The "on-policy" setting, where updates are more frequent and synchronized, allows REINFORCE to perform comparably to the other algorithms. The REC-OneSide-NoIS algorithm consistently achieves slightly higher evaluation accuracy than the other algorithms.
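One way to picture the "delayed or infrequent synchronization" mentioned above is to count how many learner updates the sampling policy lags behind. The following is a minimal sketch under an assumption the chart does not confirm: that `sync_interval = 20` means the sampler receives fresh weights every 20 training steps, and that "on-policy" corresponds to an interval of 1. `policy_lag` and `mean_lag` are hypothetical helper names.

```python
def policy_lag(step: int, sync_interval: int) -> int:
    """Number of learner updates since the sampler last received fresh weights.

    Assumption (not stated in the chart): weights are copied to the sampler
    at steps 0, sync_interval, 2 * sync_interval, ...
    """
    if sync_interval < 1:
        raise ValueError("sync_interval must be >= 1")
    return step % sync_interval


def mean_lag(total_steps: int, sync_interval: int) -> float:
    """Average lag over steps 0 .. total_steps - 1."""
    lags = [policy_lag(s, sync_interval) for s in range(total_steps)]
    return sum(lags) / len(lags)


# With an interval of 1 the sampler is never stale; with 20 it lags by up to 19 updates.
print(mean_lag(160, 1))     # 0.0
print(mean_lag(160, 20))    # 9.5
print(policy_lag(39, 20))   # 19
```

Under this reading, an algorithm that assumes its samples come from the current policy would be training on data that is, on average, several updates old, which is one plausible source of the degradation seen for REINFORCE.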


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Charts: Training Performance Comparison

### Overview

The image presents a comparison of training performance for different reinforcement learning algorithms. It consists of two columns of charts, one labeled "on-policy" and the other "sync_interval = 20". Each column contains two charts: one displaying "Evaluation Accuracy" versus "Training Steps", and the other displaying "Training Reward" versus "Training Steps". Four algorithms are compared: REINFORCE, RED-Weight, RED-Drop, and REC-OneSide-NoIS (0.6, 2.0).

### Components/Axes

* **X-axis (all charts):** Training Steps, ranging from 0 to 150.

* **Y-axis (top charts):** Evaluation Accuracy, ranging from 0 to 0.8.

* **Y-axis (bottom charts):** Training Reward, ranging from 0 to 1.0.

* **Legend:** Located at the bottom center of the image.

    * REINFORCE (Light Blue)

    * RED-Weight (Orange)

    * REC-OneSide-NoIS (0.6, 2.0) (Purple)

    * RED-Drop (Brown)

### Detailed Analysis

**On-Policy Column:**

* **Evaluation Accuracy:**

    * REINFORCE (Light Blue): Starts at approximately 0.15, increases rapidly to around 0.65 by step 25, and plateaus around 0.7 with minor fluctuations.

    * RED-Weight (Orange): Starts at approximately 0.15, increases to around 0.5 by step 25, and plateaus around 0.6 with minor fluctuations.

    * REC-OneSide-NoIS (0.6, 2.0) (Purple): Starts at approximately 0.15, increases steadily to around 0.7 by step 50, and remains relatively stable around 0.7.

    * RED-Drop (Brown): Starts at approximately 0.15, increases to around 0.5 by step 25, and plateaus around 0.6 with minor fluctuations.

* **Training Reward:**

    * REINFORCE (Light Blue): Fluctuates around 0.8-0.9 throughout the training process.

    * RED-Weight (Orange): Fluctuates around 0.8-0.9 throughout the training process.

    * REC-OneSide-NoIS (0.6, 2.0) (Purple): Fluctuates around 0.7-0.9 throughout the training process, with a dip around step 25.

    * RED-Drop (Brown): Fluctuates around 0.7-0.9 throughout the training process.

**Sync\_interval = 20 Column:**

* **Evaluation Accuracy:**

    * REINFORCE (Light Blue): Starts at approximately 0.2, increases to around 0.5 by step 50, and then declines to around 0.2 by step 150.

    * RED-Weight (Orange): Starts at approximately 0.4, increases to around 0.6 by step 50, and then declines to around 0.3 by step 150.

    * REC-OneSide-NoIS (0.6, 2.0) (Purple): Starts at approximately 0.4, increases to around 0.7 by step 50, and remains relatively stable around 0.6-0.7.

    * RED-Drop (Brown): Starts at approximately 0.4, increases to around 0.6 by step 50, and then declines sharply to around 0.1 by step 150.

* **Training Reward:**

    * REINFORCE (Light Blue): Fluctuates around 0.7-0.8 throughout the training process.

    * RED-Weight (Orange): Fluctuates around 0.8-0.9 throughout the training process.

    * REC-OneSide-NoIS (0.6, 2.0) (Purple): Fluctuates around 0.7-0.8 throughout the training process.

    * RED-Drop (Brown): Exhibits a large drop in reward around step 75, falling from approximately 0.8 to 0.0, and remains low for the rest of the training process.

### Key Observations

* In the "on-policy" setting, all algorithms achieve relatively high and stable evaluation accuracy and training reward. REINFORCE and REC-OneSide-NoIS perform slightly better in terms of evaluation accuracy.

* In the "sync\_interval = 20" setting, the performance of REINFORCE and RED-Drop deteriorates significantly over time, while REC-OneSide-NoIS maintains a relatively stable performance. RED-Weight also shows a decline, but less drastic than REINFORCE and RED-Drop.

* RED-Drop exhibits a catastrophic failure in the "sync\_interval = 20" setting, with a sudden drop in both evaluation accuracy and training reward around step 75.

### Interpretation

The charts demonstrate the impact of the synchronization interval on the training performance of different reinforcement learning algorithms. In the "on-policy" setting, where updates are applied immediately, all algorithms perform reasonably well. However, when a synchronization interval of 20 is introduced, the performance of REINFORCE and RED-Drop degrades significantly, suggesting that these algorithms are sensitive to delayed updates.

REC-OneSide-NoIS appears to be more robust to delayed updates, maintaining a stable performance even with a synchronization interval of 20. The chart alone does not reveal the mechanism behind this robustness; neither the algorithm's name nor its (0.6, 2.0) parameters are explained in the figure. The catastrophic failure of RED-Drop in the "sync\_interval = 20" setting suggests that this algorithm is particularly vulnerable to the effects of delayed updates, potentially due to instability in the learning process.

The difference in performance between the two settings highlights the importance of considering the synchronization interval when designing and training reinforcement learning algorithms. The choice of synchronization interval should be based on the specific characteristics of the algorithm and the environment.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Line Charts: Comparative Performance of Reinforcement Learning Algorithms

### Overview

The image displays a 2x2 grid of line charts comparing the performance of four reinforcement learning algorithms across two different training conditions. The top row measures "Evaluation Accuracy," and the bottom row measures "Training Reward" over the course of "Training Steps." The left column represents an "on-policy" condition, while the right column represents a condition with "sync_interval = 20."

### Components/Axes

* **Titles:**

    * Top-left chart: "on-policy"

    * Top-right chart: "sync_interval = 20"

* **Y-Axis Labels:**

    * Top row (both charts): "Evaluation Accuracy" (Scale: 0.0 to 0.8)

    * Bottom row (both charts): "Training Reward" (Scale: 0.00 to 1.00)

* **X-Axis Label (All Charts):** "Training Steps" (Scale: 0 to 150, with major ticks at 0, 25, 50, 75, 100, 125, 150)

* **Legend (Bottom Center):**

    * **REINFORCE:** Light blue line with circle markers.

    * **RED-Weight:** Orange line with diamond markers.

    * **RED-Drop:** Red line with square markers.

    * **REC-OneSide-NoIS (0.6, 2.0):** Purple line with plus (+) markers.

### Detailed Analysis

**Top-Left Chart: Evaluation Accuracy (on-policy)**

* **Trend:** All four algorithms show a strong, similar upward trend, converging to high accuracy.

* **Data Points (Approximate):**

    * All lines start near 0.35 at step 0.

    * By step 25, all are clustered around 0.60-0.65.

    * By step 75, all are tightly grouped between 0.70-0.75.

    * From step 100 to 150, all lines plateau and remain very close, ending near 0.75-0.78.

**Top-Right Chart: Evaluation Accuracy (sync_interval = 20)**

* **Trend:** The REINFORCE algorithm shows a dramatic decline, while the other three maintain high performance.

* **Data Points (Approximate):**

    * **REINFORCE (Light Blue):** Starts ~0.45, peaks ~0.55 at step 40, then declines sharply. It falls below 0.20 by step 100 and approaches 0.00 by step 150.

    * **RED-Weight (Orange):** Starts ~0.45, rises steadily to ~0.70 by step 100, and plateaus near 0.72.

    * **RED-Drop (Red) & REC-OneSide-NoIS (Purple):** Both start ~0.40, follow a very similar upward path, and converge near 0.75-0.78 by step 150, slightly outperforming RED-Weight.

**Bottom-Left Chart: Training Reward (on-policy)**

* **Trend:** All algorithms show rapid initial learning and converge to a high, stable reward.

* **Data Points (Approximate):**

    * All lines start near 0.50.

    * They rise sharply, reaching ~0.90 by step 50.

    * From step 50 to 150, all lines fluctuate in a tight band between approximately 0.90 and 1.00, showing stable convergence.

**Bottom-Right Chart: Training Reward (sync_interval = 20)**

* **Trend:** REINFORCE collapses to near-zero reward, while the other algorithms maintain high reward.

* **Data Points (Approximate):**

    * **REINFORCE (Light Blue):** Starts ~0.50, rises to ~0.75 by step 50, then crashes. It drops to near 0.00 by step 80, shows a brief, small recovery around step 100, then falls back to 0.00.

    * **RED-Weight (Orange):** Starts ~0.50, rises to ~0.90 by step 75, and remains stable between 0.85-0.95.

    * **RED-Drop (Red) & REC-OneSide-NoIS (Purple):** Both follow a nearly identical path, starting ~0.50, rising to ~0.95 by step 75, and maintaining a high reward between 0.90-1.00.

### Key Observations

1. **Algorithm Divergence:** The "sync_interval = 20" condition causes a catastrophic performance collapse for the REINFORCE algorithm in both evaluation accuracy and training reward, while the other three methods (RED-Weight, RED-Drop, REC-OneSide-NoIS) remain robust.

2. **Method Similarity:** RED-Drop and REC-OneSide-NoIS (0.6, 2.0) perform almost identically across all four charts, suggesting similar underlying behavior or effectiveness in these scenarios.

3. **Stability vs. Instability:** The "on-policy" condition leads to stable, convergent learning for all methods. The "sync_interval = 20" condition introduces instability that only REINFORCE succumbs to.

4. **Performance Ceiling:** Under stable conditions (on-policy), all methods appear to reach a similar performance ceiling (~0.75 accuracy, ~0.95 reward).
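The "catastrophic collapse" in the first observation can be made concrete with a simple check on the approximate readings. The sketch below is illustrative only: `has_collapsed` is a hypothetical helper, the 0.5 threshold is an arbitrary assumption, and the data points are the rough evaluation-accuracy values quoted above for the "sync_interval = 20" chart, not exact measurements.

```python
from typing import List, Tuple

Reading = Tuple[int, float]  # (training step, approximate value read off the chart)


def has_collapsed(readings: List[Reading], drop_fraction: float = 0.5) -> bool:
    """Return True if the last reading is below drop_fraction of the peak.

    The 0.5 default is an arbitrary illustrative threshold, not from the source.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    ordered = sorted(readings)  # tuples sort by step first
    peak = max(value for _, value in ordered)
    final = ordered[-1][1]
    return final < drop_fraction * peak


# Approximate evaluation-accuracy readings for sync_interval = 20.
reinforce = [(0, 0.45), (40, 0.55), (150, 0.00)]
red_weight = [(0, 0.45), (100, 0.70), (150, 0.72)]

print(has_collapsed(reinforce))   # True
print(has_collapsed(red_weight))  # False
```

Comparing the final value against the peak, rather than against the starting value, flags a run that first learned and then degraded, which is the pattern described for REINFORCE.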

### Interpretation

This data demonstrates a critical vulnerability in the standard REINFORCE algorithm when training is synchronized at intervals (sync_interval=20), likely due to issues with stale gradients or policy lag. The other three algorithms, which presumably incorporate mechanisms to handle off-policy or delayed updates (implied by names like "RED" and "REC"), show significant robustness to this synchronization delay.

The near-identical performance of RED-Drop and REC-OneSide-NoIS suggests that their specific technical differences may not be consequential for this particular task and set of conditions. The charts suggest that for distributed or synchronized training setups, using one of the more robust variants (RED or REC family) helps prevent the complete training failure exhibited by REINFORCE. The "on-policy" results serve as a control, showing that all four algorithms can learn the task when no synchronization delay is present.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Algorithm Performance Comparison

### Overview

The image contains four line graphs arranged in a 2x2 grid, comparing the performance of four reinforcement learning algorithms (REINFORCE, RED-Drop, RED-Weight, REC-OneSide-NoIS) across different training metrics. The graphs show evaluation accuracy and training reward trends over training steps, with distinct performance patterns emerging between the algorithms.

### Components/Axes

1. **Top-Left Graph**

    - **Title**: "on-policy"

    - **Y-Axis**: Evaluation Accuracy (0.0 to 0.8)

    - **X-Axis**: Training Steps (0 to 150)

    - **Legend**:

        - Blue: REINFORCE

        - Orange: RED-Drop

        - Purple: REC-OneSide-NoIS (0.6, 2.0)

        - Red: RED-Weight

2. **Top-Right Graph**

    - **Title**: "sync_interval = 20"

    - **Y-Axis**: Evaluation Accuracy (0.0 to 0.8)

    - **X-Axis**: Training Steps (0 to 150)

    - **Legend**: Same as top-left graph

3. **Bottom-Left Graph**

    - **Title**: "Training Reward"

    - **Y-Axis**: Training Reward (0.0 to 1.0)

    - **X-Axis**: Training Steps (0 to 150)

    - **Legend**: Same as top-left graph

4. **Bottom-Right Graph**

    - **Title**: "Training Reward"

    - **Y-Axis**: Training Reward (0.0 to 1.0)

    - **X-Axis**: Training Steps (0 to 150)

    - **Legend**: Same as top-left graph

### Detailed Analysis

#### Top-Left Graph ("on-policy")

- **Trend**: All algorithms show upward trajectories, plateauing near 0.75–0.8 evaluation accuracy.

- **Data Points**:

    - REINFORCE (blue): Starts at ~0.35, peaks at ~0.78 by 150 steps.

    - RED-Drop (orange): Starts at ~0.38, peaks at ~0.77.

    - REC-OneSide-NoIS (purple): Starts at ~0.32, peaks at ~0.79.

    - RED-Weight (red): Starts at ~0.36, peaks at ~0.78.

#### Top-Right Graph ("sync_interval = 20")

- **Trend**: REINFORCE (blue) drops sharply after 50 steps, while others improve.

- **Data Points**:

    - REINFORCE: Starts at ~0.45, drops to ~0.15 by 150 steps.

    - RED-Drop: Starts at ~0.42, peaks at ~0.72.

    - REC-OneSide-NoIS: Starts at ~0.40, peaks at ~0.76.

    - RED-Weight: Starts at ~0.44, peaks at ~0.74.

#### Bottom-Left Graph ("Training Reward")

- **Trend**: All algorithms show gradual improvement with minor fluctuations.

- **Data Points**:

    - REINFORCE: Starts at ~0.5, peaks at ~0.95.

    - RED-Drop: Starts at ~0.52, peaks at ~0.98.

    - REC-OneSide-NoIS: Starts at ~0.55, peaks at ~0.97.

    - RED-Weight: Starts at ~0.53, peaks at ~0.96.

#### Bottom-Right Graph ("Training Reward")

- **Trend**: REINFORCE (blue) exhibits erratic drops, while others stabilize.

- **Data Points**:

    - REINFORCE: Starts at ~0.5, drops to ~0.0 by 150 steps.

    - RED-Drop: Starts at ~0.52, stabilizes at ~0.95.

    - REC-OneSide-NoIS: Starts at ~0.55, stabilizes at ~0.94.

    - RED-Weight: Starts at ~0.53, stabilizes at ~0.93.

### Key Observations

1. **On-Policy Performance**: All algorithms achieve high evaluation accuracy (~0.75–0.8) under on-policy training, with REC-OneSide-NoIS slightly outperforming others.

2. **Sync Interval Impact**: REINFORCE’s evaluation accuracy collapses under sync_interval = 20, while other algorithms maintain performance.

3. **Training Reward Variance**: REINFORCE shows unstable training rewards in sync_interval scenarios, whereas RED-Drop and REC-OneSide-NoIS maintain consistent rewards.

### Interpretation

- **Algorithm Robustness**: RED-Drop and REC-OneSide-NoIS demonstrate superior stability across training scenarios, suggesting better generalization.

- **REINFORCE Limitations**: REINFORCE’s performance degrades significantly under sync_interval = 20 while matching the other algorithms on-policy, indicating sensitivity to infrequent synchronization rather than an inability to learn the task.

- **Training Reward Correlation**: Higher training rewards align with better evaluation accuracy. This also holds for REINFORCE under sync_interval = 20, where both metrics collapse together (accuracy to ~0.15, reward to ~0.0).

- **Practical Implications**: Algorithms with adaptive mechanisms (e.g., RED-Drop, REC-OneSide-NoIS) may be preferable for real-world applications requiring stable training under varying conditions.

*Note: All values are approximate, derived from visual inspection of the graphs.*
