## Line Chart: Mean Success Rate Across Checkpoints

### Overview

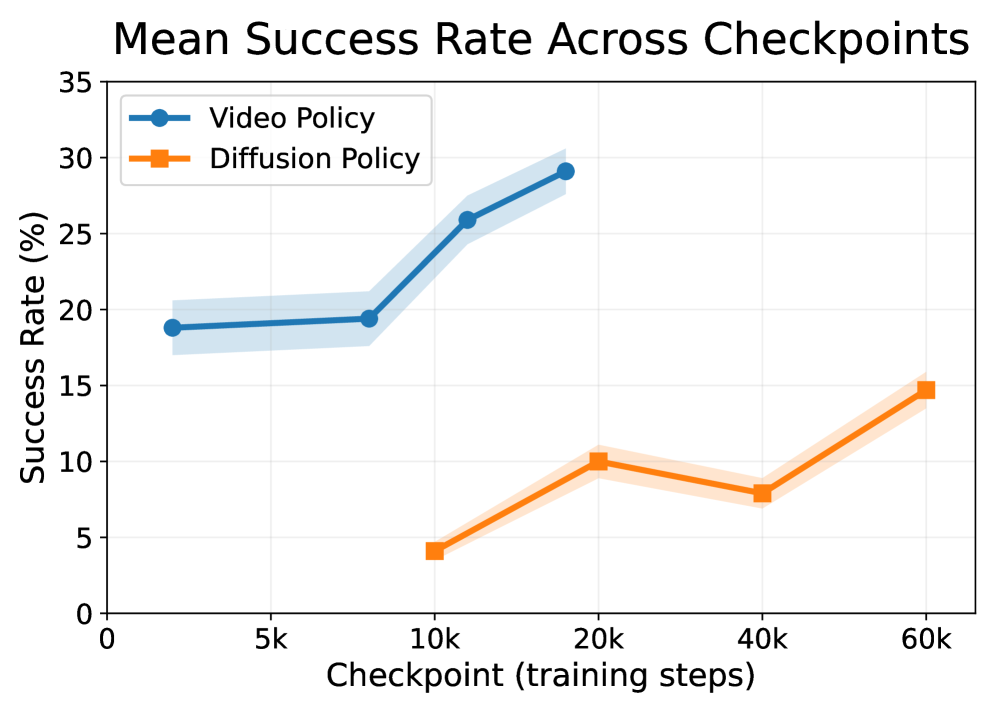

The image displays a line chart comparing the performance of two machine learning policies, "Video Policy" and "Diffusion Policy," over the course of training. The chart plots the mean success rate (as a percentage) against the number of training steps, marked at specific checkpoints. Both lines include shaded regions, likely representing confidence intervals or standard deviation around the mean.

### Components/Axes

* **Chart Title:** "Mean Success Rate Across Checkpoints" (centered at the top).

* **Y-Axis:** Labeled "Success Rate (%)". The scale runs from 0 to 35, with major tick marks at intervals of 5 (0, 5, 10, 15, 20, 25, 30, 35).

* **X-Axis:** Labeled "Checkpoint (training steps)". The scale is non-linear, with labeled checkpoints at 0, 5k, 10k, 20k, 40k, and 60k steps.

* **Legend:** Positioned in the top-left corner of the chart area.

    * **Video Policy:** Represented by a solid blue line with circular markers.

    * **Diffusion Policy:** Represented by a solid orange line with square markers.

* **Data Series:** Two lines with associated shaded error bands.

    * The **Video Policy (blue)** line has a light blue shaded region.

    * The **Diffusion Policy (orange)** line has a light orange shaded region.

### Detailed Analysis

**Video Policy (Blue Line with Circles):**

* **Trend:** Rises at every listed checkpoint. The increase is nearly flat from 0 to 10k steps (~0.5 points), steepest between 10k and 20k steps (~6.5 points), and slower again between 20k and 40k steps (~3 points).

* **Data Points (Approximate):**

    * At 0 steps: ~19%

    * At 10k steps: ~19.5%

    * At 20k steps: ~26%

    * At 40k steps: ~29%

* The shaded band is relatively narrow, suggesting lower variance in performance at each checkpoint.

**Diffusion Policy (Orange Line with Squares):**

* **Trend:** Shows an overall upward trend but with more variability. Performance increases from 10k to 20k steps, dips at 40k steps, and then rises again by 60k steps.

* **Data Points (Approximate):**

    * At 10k steps: ~4%

    * At 20k steps: ~10%

    * At 40k steps: ~8%

    * At 60k steps: ~15%

* The shaded band is wider than that of the Video Policy, indicating higher variance or uncertainty in the mean success rate.
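The trend descriptions above can be checked by computing the change in success rate per 10k training steps between consecutive checkpoints. The following is a minimal sketch that uses only the approximate values listed above; the dictionary names and the `rate_per_10k` helper are illustrative and do not come from the chart or its source.

```python
# Approximate success rates (%) read off the chart, keyed by training step.
# Only the data points listed in this description are included.
video_policy = {0: 19.0, 10_000: 19.5, 20_000: 26.0, 40_000: 29.0}
diffusion_policy = {10_000: 4.0, 20_000: 10.0, 40_000: 8.0, 60_000: 15.0}


def rate_per_10k(series):
    """Return (start_step, end_step, change in points per 10k steps) per segment."""
    steps = sorted(series)
    segments = []
    for start, end in zip(steps, steps[1:]):
        delta = series[end] - series[start]
        segments.append((start, end, delta / ((end - start) / 10_000)))
    return segments


video_rates = rate_per_10k(video_policy)
diffusion_rates = rate_per_10k(diffusion_policy)

for name, rates in (("Video Policy", video_rates), ("Diffusion Policy", diffusion_rates)):
    for start, end, rate in rates:
        print(f"{name}: {start // 1000}k -> {end // 1000}k: {rate:+.2f} points per 10k steps")
```

With these approximate readings, the Video Policy's rate is positive in every segment and largest between 10k and 20k steps, while the Diffusion Policy's rate is negative only between 20k and 40k steps.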

### Key Observations

1. **Performance Gap:** The Video Policy consistently achieves a higher mean success rate than the Diffusion Policy at all comparable checkpoints (10k, 20k, 40k steps).

2. **Learning Trajectory:** The Video Policy's curve rises at every listed checkpoint, with most of its gain occurring between 10k and 20k steps. The Diffusion Policy's curve is less smooth, regressing from ~10% to ~8% between 20k and 40k steps before recovering to ~15% at 60k steps.

3. **Data Availability:** The Video Policy has a data point at the 0-step checkpoint, while the Diffusion Policy's first recorded point is at 10k steps.

4. **Uncertainty:** The wider error bands for the Diffusion Policy suggest its performance is less consistent across training runs or evaluation episodes compared to the Video Policy.
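The performance gap in the first observation can be quantified at the checkpoints where both policies have a listed data point. This is a minimal sketch based on the approximate values read off the chart; the variable names are illustrative, and the numbers inherit the reading error of the chart itself.

```python
# Approximate success rates (%) read off the chart, keyed by training step.
video_policy = {0: 19.0, 10_000: 19.5, 20_000: 26.0, 40_000: 29.0}
diffusion_policy = {10_000: 4.0, 20_000: 10.0, 40_000: 8.0, 60_000: 15.0}

# Compare only the checkpoints for which both policies have a listed value.
shared = sorted(set(video_policy) & set(diffusion_policy))
gaps = {step: video_policy[step] - diffusion_policy[step] for step in shared}

for step, gap in gaps.items():
    print(f"{step // 1000}k steps: Video Policy leads by {gap:.1f} points")
```

Under these readings the gap is positive at all three shared checkpoints and is largest at 40k steps, where the Diffusion Policy dips.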

### Interpretation

The chart shows a clear advantage for the "Video Policy" over the "Diffusion Policy" on this specific task, as measured by mean success rate. At its first recorded checkpoint the Video Policy is already well above the Diffusion Policy's first recorded value (~19% at 0 steps versus ~4% at 10k steps). Over the shared range of 10k to 40k steps it also gains more (about 9.5 points versus about 4), and its narrower shaded band suggests more consistent performance.

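The dip in the Diffusion Policy's performance at 40k steps (from ~10% to ~8%) is the one departure from its otherwise upward trend. It could indicate a period of training instability, such as catastrophic forgetting, overfitting to a specific subset of data, or a difficult phase in the optimization landscape. Because the Diffusion Policy's shaded band is comparatively wide, a drop of roughly 2 points may also reflect evaluation noise rather than a real regression. The recovery to ~15% by 60k steps suggests that training was not permanently derailed.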

The absence of a 0-step checkpoint for the Diffusion Policy might imply it was initialized differently or that its baseline performance was not measured. Overall, the data suggests that for the evaluated task and within the observed training duration, the Video Policy is the more effective and robust approach. The shaded regions emphasize that while the mean trends are clear, there is inherent variability in the performance of both methods, more so for the Diffusion Policy.