## Bar Chart: MMLU Accuracy Comparison

### Overview

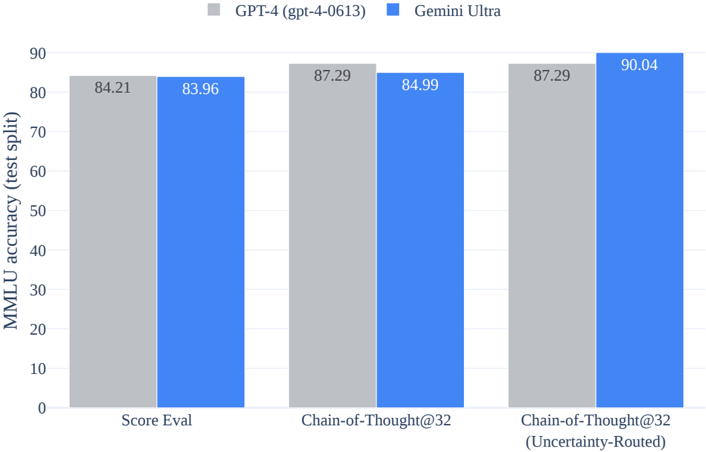

The image is a bar chart comparing the MMLU (Massive Multitask Language Understanding) accuracy of two language models: GPT-4 (gpt-4-0613) and Gemini Ultra. Accuracy is shown under three evaluation methods: "Score Eval", "Chain-of-Thought@32", and "Chain-of-Thought@32 (Uncertainty-Routed)". The y-axis represents MMLU accuracy (test split), ranging from 0 to 90.

### Components/Axes

* **Title:** None explicitly provided in the image.

* **X-axis:** Categorical axis with three categories: "Score Eval", "Chain-of-Thought@32", and "Chain-of-Thought@32 (Uncertainty-Routed)".

* **Y-axis:** Numerical axis labeled "MMLU accuracy (test split)" ranging from 0 to 90, with increments of 10.

* **Legend:** Located at the top of the chart.

    * Gray bar: GPT-4 (gpt-4-0613)

    * Blue bar: Gemini Ultra

### Detailed Analysis

The chart presents a comparison of MMLU accuracy between GPT-4 and Gemini Ultra across three evaluation methods.

* **Score Eval:**

    * GPT-4 (gray bar): 84.21

    * Gemini Ultra (blue bar): 83.96

* **Chain-of-Thought@32:**

    * GPT-4 (gray bar): 87.29

    * Gemini Ultra (blue bar): 84.99

* **Chain-of-Thought@32 (Uncertainty-Routed):**

    * GPT-4 (gray bar): 87.29

    * Gemini Ultra (blue bar): 90.04
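The values listed above can be held in a small lookup table to check which model leads under each method and by how much. This is a minimal sketch: the numbers are transcribed from the bar labels, while the names `ACCURACY` and `leader_and_gap` are hypothetical and chosen only for illustration.

```python
# Accuracy values transcribed from the bar labels described above.
ACCURACY = {
    "Score Eval": {
        "GPT-4 (gpt-4-0613)": 84.21,
        "Gemini Ultra": 83.96,
    },
    "Chain-of-Thought@32": {
        "GPT-4 (gpt-4-0613)": 87.29,
        "Gemini Ultra": 84.99,
    },
    "Chain-of-Thought@32 (Uncertainty-Routed)": {
        "GPT-4 (gpt-4-0613)": 87.29,
        "Gemini Ultra": 90.04,
    },
}


def leader_and_gap(scores):
    """Return (leading model, gap in accuracy points) for one method."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    (best_name, best), (_, runner_up) = ranked[0], ranked[1]
    # Round to two decimals to hide floating-point noise in the subtraction.
    return best_name, round(best - runner_up, 2)


for method, scores in ACCURACY.items():
    name, gap = leader_and_gap(scores)
    print(f"{method}: {name} leads by {gap:.2f} points")
```

Keying the table by method first mirrors the chart's x-axis grouping, so each inner dictionary corresponds to one pair of bars.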

### Key Observations

* For "Score Eval", GPT-4 (84.21) is marginally ahead of Gemini Ultra (83.96), a gap of 0.25 points.

* For "Chain-of-Thought@32", GPT-4 (87.29) leads Gemini Ultra (84.99) by 2.30 points.

* For "Chain-of-Thought@32 (Uncertainty-Routed)", Gemini Ultra (90.04) leads GPT-4 (87.29) by 2.75 points.

* Gemini Ultra's 90.04 on "Chain-of-Thought@32 (Uncertainty-Routed)" is the highest value in the chart, while GPT-4's score is the same (87.29) in both Chain-of-Thought@32 settings.

### Interpretation

The bar chart shows the MMLU accuracy of GPT-4 and Gemini Ultra under three evaluation conditions. Uncertainty routing raises Gemini Ultra's accuracy from 84.99 to 90.04, a gain of 5.05 points, whereas GPT-4's accuracy is 87.29 in both Chain-of-Thought@32 settings. As a result, Gemini Ultra surpasses GPT-4 only in the uncertainty-routed setting, where it leads by 2.75 points. The "Score Eval" results are within 0.25 points of each other, indicating similar performance under that method. Under plain "Chain-of-Thought@32", GPT-4 leads by 2.30 points.
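The arithmetic behind this interpretation can be checked with a short sketch that computes each model's change in accuracy from one method to the next. Treating the three methods as an ordered sequence (in the left-to-right order of the x-axis) is an assumption made here for illustration; the names `METHODS`, `ACCURACY_BY_MODEL`, and `stepwise_gains` are hypothetical.

```python
# Methods in the left-to-right order of the chart's x-axis.
METHODS = [
    "Score Eval",
    "Chain-of-Thought@32",
    "Chain-of-Thought@32 (Uncertainty-Routed)",
]

# Accuracy per model, aligned with METHODS (values from the chart).
ACCURACY_BY_MODEL = {
    "GPT-4 (gpt-4-0613)": [84.21, 87.29, 87.29],
    "Gemini Ultra": [83.96, 84.99, 90.04],
}


def stepwise_gains(scores):
    """Return the change in accuracy between each pair of adjacent methods."""
    # Round to two decimals to hide floating-point noise in the subtraction.
    return [round(after - before, 2) for before, after in zip(scores, scores[1:])]


for model, scores in ACCURACY_BY_MODEL.items():
    for step, gain in zip(METHODS[1:], stepwise_gains(scores)):
        print(f"{model}: {gain:+.2f} points when moving to {step}")
```

Under this ordering, the last step isolates the effect of uncertainty routing on top of Chain-of-Thought@32 for each model.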