TECHNICAL ASSET FINGERPRINT

734692ebd5a645d4d91835f4

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

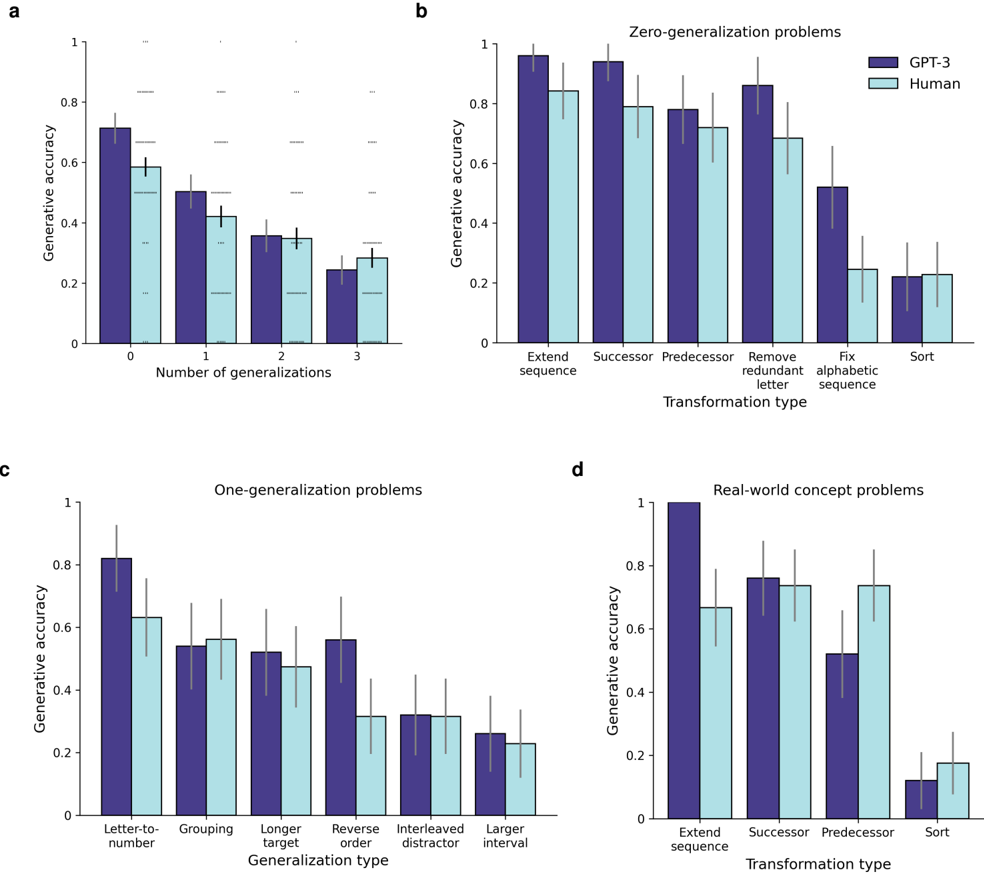

## Bar Charts: GPT-3 vs. Human Generative Accuracy

### Overview

The image presents four bar charts comparing the generative accuracy of GPT-3 and humans across different types of problems. The charts are labeled a, b, c, and d. Chart a groups problems by the number of generalizations required, and charts b, c, and d cover zero-generalization problems, one-generalization problems, and real-world concept problems. The y-axis represents generative accuracy, ranging from 0 to 1. The x-axis varies by chart, showing the number of generalizations, the transformation type, or the generalization type. Each bar has an error bar indicating the variability in the data.

### Components/Axes

**General Components:**

* **Title:** Each chart has a title indicating the type of problem being analyzed.

* **Y-axis:** Generative accuracy, ranging from 0 to 1 in increments of 0.2.

* **Legend:** Located at the top-right of chart b, indicating GPT-3 (dark blue) and Human (light blue).

**Chart a: Number of Generalizations**

* **Title:** Number of generalizations

* **X-axis:** Number of generalizations (0, 1, 2, 3)

**Chart b: Zero-generalization problems**

* **Title:** Zero-generalization problems

* **X-axis:** Transformation type (Extend sequence, Successor, Predecessor, Remove redundant letter, Fix alphabetic sequence, Sort)

**Chart c: One-generalization problems**

* **Title:** One-generalization problems

* **X-axis:** Generalization type (Letter-to-number, Grouping, Longer target order, Reverse order, Interleaved distractor, Larger interval)

**Chart d: Real-world concept problems**

* **Title:** Real-world concept problems

* **X-axis:** Transformation type (Extend sequence, Successor, Predecessor, Sort)

### Detailed Analysis

**Chart a: Number of Generalizations**

* **GPT-3 (dark blue):**
  * 0 generalizations: Accuracy ~0.7
  * 1 generalization: Accuracy ~0.5
  * 2 generalizations: Accuracy ~0.35
  * 3 generalizations: Accuracy ~0.25
  * Trend: Accuracy decreases as the number of generalizations increases.

* **Human (light blue):**
  * 0 generalizations: Accuracy ~0.6
  * 1 generalization: Accuracy ~0.4
  * 2 generalizations: Accuracy ~0.35
  * 3 generalizations: Accuracy ~0.3
  * Trend: Accuracy decreases as the number of generalizations increases.
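The two trend lines can be compared directly by subtracting the estimates level by level. This is a minimal sketch that uses the approximate Chart a readings listed above; they are visual estimates, not published values, and the names `gpt3`, `human`, `gaps`, and `relative_drop` are illustrative.

```python
# Approximate Chart a readings from the list above (visual estimates only).
gpt3 = {0: 0.70, 1: 0.50, 2: 0.35, 3: 0.25}
human = {0: 0.60, 1: 0.40, 2: 0.35, 3: 0.30}


def gaps(a, b):
    """GPT-3 minus human accuracy at each level, rounded to hide float noise."""
    return {k: round(a[k] - b[k], 2) for k in a}


def relative_drop(series):
    """Fraction of the 0-generalization accuracy lost by the last level."""
    first, last = series[min(series)], series[max(series)]
    return round((first - last) / first, 2)


level_gaps = gaps(gpt3, human)
print(level_gaps)
print(relative_drop(gpt3), relative_drop(human))
```

With these readings, the GPT-3 lead is about 0.1 at zero and one generalization, vanishes at two, and reverses slightly at three. GPT-3 loses roughly 64% of its starting accuracy, compared with 50% for humans.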

**Chart b: Zero-generalization problems**

* **GPT-3 (dark blue):**
  * Extend sequence: Accuracy ~0.98
  * Successor: Accuracy ~0.9
  * Predecessor: Accuracy ~0.8
  * Remove redundant letter: Accuracy ~0.85
  * Fix alphabetic sequence: Accuracy ~0.5
  * Sort: Accuracy ~0.2
  * Trend: Accuracy varies across transformation types, with "Extend sequence" having the highest accuracy and "Sort" having the lowest.

* **Human (light blue):**
  * Extend sequence: Accuracy ~0.85
  * Successor: Accuracy ~0.8
  * Predecessor: Accuracy ~0.7
  * Remove redundant letter: Accuracy ~0.7
  * Fix alphabetic sequence: Accuracy ~0.25
  * Sort: Accuracy ~0.2
  * Trend: Accuracy varies across transformation types, with "Extend sequence" having the highest accuracy and "Sort" having the lowest.

**Chart c: One-generalization problems**

* **GPT-3 (dark blue):**
  * Letter-to-number: Accuracy ~0.8
  * Grouping: Accuracy ~0.55
  * Longer target order: Accuracy ~0.55
  * Reverse order: Accuracy ~0.55
  * Interleaved distractor: Accuracy ~0.3
  * Larger interval: Accuracy ~0.3
  * Trend: Accuracy varies across generalization types, with "Letter-to-number" having the highest accuracy and "Interleaved distractor" and "Larger interval" having the lowest.

* **Human (light blue):**
  * Letter-to-number: Accuracy ~0.6
  * Grouping: Accuracy ~0.5
  * Longer target order: Accuracy ~0.5
  * Reverse order: Accuracy ~0.3
  * Interleaved distractor: Accuracy ~0.25
  * Larger interval: Accuracy ~0.25
  * Trend: Accuracy varies across generalization types, with "Letter-to-number" having the highest accuracy and "Interleaved distractor" and "Larger interval" having the lowest.

**Chart d: Real-world concept problems**

* **GPT-3 (dark blue):**
  * Extend sequence: Accuracy ~0.98
  * Successor: Accuracy ~0.75
  * Predecessor: Accuracy ~0.5
  * Sort: Accuracy ~0.1
  * Trend: Accuracy varies across transformation types, with "Extend sequence" having the highest accuracy and "Sort" having the lowest.

* **Human (light blue):**
  * Extend sequence: Accuracy ~0.7
  * Successor: Accuracy ~0.75
  * Predecessor: Accuracy ~0.75
  * Sort: Accuracy ~0.15
  * Trend: Accuracy varies across transformation types, with "Predecessor" and "Successor" having the highest accuracy and "Sort" having the lowest.

### Key Observations

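* GPT-3 scores higher than humans in most categories. The widest margins are on "Extend sequence" (about 0.13 in Chart b and 0.28 in Chart d) and "Fix alphabetic sequence" (about 0.25).

* Both GPT-3 and human accuracy decrease as the number of generalizations increases (Chart a). GPT-3 declines more steeply: it leads by about 0.1 at zero and one generalization, ties at two, and trails slightly at three.

* Charts b and d are the only charts that include "Sort", and in both it yields the lowest accuracy for GPT-3 and for humans.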

* Error bars indicate variability in the data, suggesting that the differences in accuracy between GPT-3 and humans may not always be statistically significant.
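The claim that GPT-3 leads in most categories can be made concrete by tallying the per-category estimates from Charts b to d. This is a minimal sketch that assumes the readings above are the only data available. It treats differences of 0.01 or less as ties; that tolerance is an assumption, not part of the figure.

```python
# (GPT-3, Human) readings from Charts b-d above; visual estimates only.
estimates = {
    "b": {"Extend sequence": (0.98, 0.85), "Successor": (0.90, 0.80),
          "Predecessor": (0.80, 0.70), "Remove redundant letter": (0.85, 0.70),
          "Fix alphabetic sequence": (0.50, 0.25), "Sort": (0.20, 0.20)},
    "c": {"Letter-to-number": (0.80, 0.60), "Grouping": (0.55, 0.50),
          "Longer target order": (0.55, 0.50), "Reverse order": (0.55, 0.30),
          "Interleaved distractor": (0.30, 0.25), "Larger interval": (0.30, 0.25)},
    "d": {"Extend sequence": (0.98, 0.70), "Successor": (0.75, 0.75),
          "Predecessor": (0.50, 0.75), "Sort": (0.10, 0.15)},
}

TIE_TOLERANCE = 0.01  # assumed: readings this close are treated as equal


def tally(charts, tol=TIE_TOLERANCE):
    """Count GPT-3 leads, ties, and human leads; list where humans lead."""
    counts = {"GPT-3": 0, "tie": 0, "Human": 0}
    human_leads = []
    for chart, categories in charts.items():
        for name, (g, h) in categories.items():
            if abs(g - h) <= tol:
                counts["tie"] += 1
            elif g > h:
                counts["GPT-3"] += 1
            else:
                counts["Human"] += 1
                human_leads.append(f"{chart}: {name}")
    return counts, human_leads


counts, human_leads = tally(estimates)
print(counts)
print(human_leads)
```

With these readings, GPT-3 leads in 12 of the 16 categories and ties in 2. It trails only on Predecessor and Sort in Chart d. Many leads are only about 0.05, so given the error bars they should not be read as reliable differences.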

### Interpretation

The data suggest that GPT-3 outperforms humans on most of these generative tasks, particularly on simple sequence extension problems. However, accuracy for both GPT-3 and humans decreases as the number of required generalizations increases (Chart a), and GPT-3's advantage disappears by two generalizations. The consistently low accuracy on "Sort" indicates that this type of problem is particularly challenging for both. Because the error bars show variability, statistical testing is needed to determine which of the observed differences are significant. Together, the charts map the strengths and weaknesses of GPT-3 relative to human performance across these task types.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

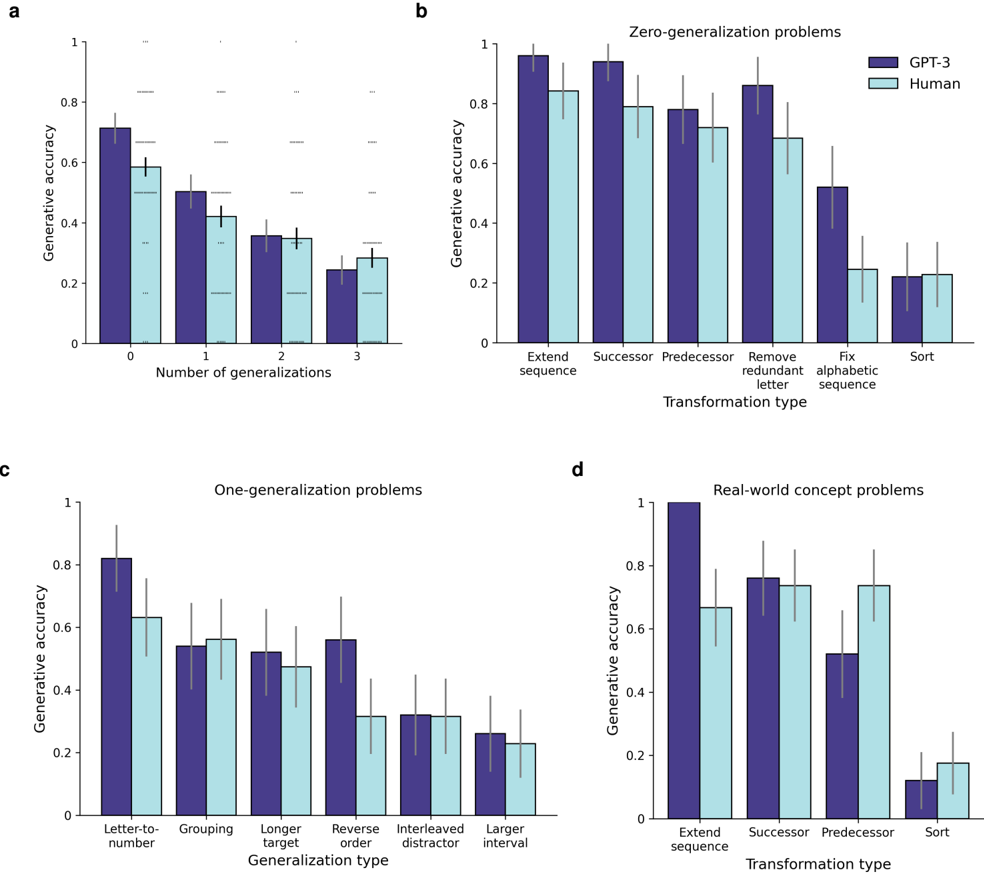

## Bar Charts: Generative Accuracy vs. Generalization Types

### Overview

The image presents four bar charts (labeled a, b, c, and d) comparing generative accuracy between "GPT-3" and "Human" performance across different generalization problem types. The charts use bar plots with error bars to represent the accuracy and variability.

### Components/Axes

* **Y-axis (all charts):** "Generative accuracy" ranging from 0 to 1.

* **X-axis (chart a):** "Number of generalizations" with categories 0, 1, 2, and 3.

* **X-axis (chart b):** "Transformation type" with categories "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", and "Sort".

* **X-axis (chart c):** "Generalization type" with categories "Letter-to-number", "Grouping", "Longer target", "Reverse order", "Interleaved distractor", and "Larger interval".

* **X-axis (chart d):** "Transformation type" with categories "Extend sequence", "Successor", "Predecessor", and "Sort".

* **Legend (charts b, c, and d):** Two entries: "GPT-3" (light blue) and "Human" (purple).

* **Error Bars:** Present on all bars, indicating variability in the generative accuracy.

### Detailed Analysis

**Chart a: Number of Generalizations**

* The chart shows generative accuracy as a function of the number of generalizations.

* GPT-3 (light blue) starts with an accuracy of approximately 0.65 at 0 generalizations, decreases to around 0.55 at 1 generalization, then drops to approximately 0.35 at 2 generalizations, and finally to around 0.25 at 3 generalizations.

* Human (purple) starts with an accuracy of approximately 0.60 at 0 generalizations, decreases to around 0.45 at 1 generalization, then drops to approximately 0.30 at 2 generalizations, and finally to around 0.20 at 3 generalizations.

* Both GPT-3 and Human accuracy decrease as the number of generalizations increases.

**Chart b: Zero-generalization problems**

* GPT-3 (light blue) shows high accuracy across all transformation types.
  * "Extend sequence": ~0.92
  * "Successor": ~0.88
  * "Predecessor": ~0.85
  * "Remove redundant letter": ~0.82
  * "Fix alphabetic sequence": ~0.85
  * "Sort": ~0.88

* Human (purple) also shows high accuracy, but generally lower than GPT-3.
  * "Extend sequence": ~0.85
  * "Successor": ~0.80
  * "Predecessor": ~0.75
  * "Remove redundant letter": ~0.70
  * "Fix alphabetic sequence": ~0.75
  * "Sort": ~0.80

* GPT-3 consistently outperforms humans on all transformation types.

**Chart c: One-generalization problems**

* GPT-3 (light blue) shows varying accuracy depending on the generalization type.
  * "Letter-to-number": ~0.85
  * "Grouping": ~0.65
  * "Longer target": ~0.55
  * "Reverse order": ~0.40
  * "Interleaved distractor": ~0.30
  * "Larger interval": ~0.25

* Human (purple) shows similar trends, but generally lower accuracy.
  * "Letter-to-number": ~0.80
  * "Grouping": ~0.60
  * "Longer target": ~0.50
  * "Reverse order": ~0.35
  * "Interleaved distractor": ~0.25
  * "Larger interval": ~0.20

* GPT-3 generally outperforms humans, but the difference is less pronounced than in Chart b.

**Chart d: Real-world concept problems**

* GPT-3 (light blue) declines moderately across transformation types, from "Extend sequence" to "Sort".
  * "Extend sequence": ~0.85
  * "Successor": ~0.75
  * "Predecessor": ~0.70
  * "Sort": ~0.65

* Human (purple) shows similar trends, but generally lower accuracy.
  * "Extend sequence": ~0.75
  * "Successor": ~0.65
  * "Predecessor": ~0.60
  * "Sort": ~0.55

* GPT-3 consistently outperforms humans on all transformation types.

### Key Observations

* As the number of generalizations increases (Chart a), both GPT-3 and human accuracy decrease.

* In these readings, GPT-3 outperforms humans on every category, typically by about 0.05 to 0.12.

* The gap between GPT-3 and humans does not widen as the generalization task gets harder. In Chart a it stays between about 0.05 and 0.10, and it is smallest in Chart c.

* "Letter-to-number" generalization is the easiest for both GPT-3 and humans (Chart c).

* "Larger interval" and "Interleaved distractor" generalizations are the most challenging for both (Chart c).
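Whether the GPT-3 advantage grows with task difficulty can be checked by differencing the readings. This is a minimal sketch that uses this description's own estimates. They are visual readings, so differences of a few hundredths are within reading error. The variable names are illustrative.

```python
from statistics import mean

# Chart a readings from this description (0, 1, 2, 3 generalizations).
gpt3_a = [0.65, 0.55, 0.35, 0.25]
human_a = [0.60, 0.45, 0.30, 0.20]

# GPT-3 minus human at each generalization level.
gap_by_level = [round(g - h, 2) for g, h in zip(gpt3_a, human_a)]

# Mean GPT-3 minus human gap per chart, from the (GPT-3, Human) pairs above.
chart_gaps = {
    "b": mean(g - h for g, h in [(0.92, 0.85), (0.88, 0.80), (0.85, 0.75),
                                 (0.82, 0.70), (0.85, 0.75), (0.88, 0.80)]),
    "c": mean(g - h for g, h in [(0.85, 0.80), (0.65, 0.60), (0.55, 0.50),
                                 (0.40, 0.35), (0.30, 0.25), (0.25, 0.20)]),
    "d": mean(g - h for g, h in [(0.85, 0.75), (0.75, 0.65), (0.70, 0.60),
                                 (0.65, 0.55)]),
}

print(gap_by_level)
print({chart: round(gap, 3) for chart, gap in chart_gaps.items()})
```

Under these readings, the Chart a gap fluctuates between 0.05 and 0.10 rather than growing. The mean per-category gap is smaller in Chart c (about 0.05) than in Chart b (about 0.09) or Chart d (about 0.10).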

### Interpretation

The data suggest that GPT-3 outperforms humans on all of the problem types shown, although the margin is modest (roughly 0.05 to 0.12 per category). Accuracy for both falls as the number of required generalizations grows, so generalizing beyond the form of the example problem appears difficult for the model and for people alike. The differences across generalization types point to the specific cognitive skills each task draws on. GPT-3's consistent lead may reflect robust representations of the underlying concepts, but these charts alone cannot establish that. The error bars show variability in both groups' performance, which may stem from factors not captured in the experiment. Overall, the charts give a quantitative comparison of GPT-3 and human generalization and show both GPT-3's strengths and its limitations.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Bar Charts: Comparative Generative Accuracy of GPT-3 vs. Humans

### Overview

The image contains four bar charts (labeled a, b, c, d) comparing the generative accuracy of GPT-3 (dark purple bars) and Human participants (light blue bars) across different problem types and generalization levels. All charts share a common y-axis: "Generative accuracy" ranging from 0 to 1. Error bars are present on all data points, indicating variability or confidence intervals.

### Components/Axes

* **Common Y-Axis (All Charts):** "Generative accuracy" (scale: 0, 0.2, 0.4, 0.6, 0.8, 1).

* **Legend (Top-Right of each chart):** Dark purple square = "GPT-3"; Light blue square = "Human".

* **Chart-Specific Titles & X-Axes:**

* **Chart a:** Title: None (implied overall trend). X-axis: "Number of generalizations" (Categories: 0, 1, 2, 3).

* **Chart b:** Title: "Zero-generalization problems". X-axis: "Transformation type" (Categories: Extend sequence, Successor, Predecessor, Remove redundant letter, Fix alphabetic sequence, Sort).

* **Chart c:** Title: "One-generalization problems". X-axis: "Generalization type" (Categories: Letter-to-number, Grouping, Longer target, Reverse order, Interleaved distractor, Larger interval).

* **Chart d:** Title: "Real-world concept problems". X-axis: "Transformation type" (Categories: Extend sequence, Successor, Predecessor, Sort).

### Detailed Analysis

**Chart a: Accuracy vs. Number of Generalizations**

* **Trend:** Generative accuracy for both GPT-3 and Humans decreases as the number of required generalizations increases from 0 to 3.

* **Data Points (Approximate):**

| Number of Generalizations | GPT-3 Accuracy | Human Accuracy |
| :--- | :--- | :--- |
| 0 | ≈ 0.71 | ≈ 0.58 |
| 1 | ≈ 0.50 | ≈ 0.42 |
| 2 | ≈ 0.36 | ≈ 0.35 |
| 3 | ≈ 0.24 | ≈ 0.29 |

**Chart b: Zero-Generalization Problems by Transformation Type**

* **Trend:** GPT-3 outperforms Humans in most transformation types, with the largest gaps in "Fix alphabetic sequence" and "Remove redundant letter". Performance is similar for "Sort".

* **Data Points (Approximate):**

| Transformation Type | GPT-3 Accuracy | Human Accuracy |
| :--- | :--- | :--- |
| Extend sequence | ≈ 0.96 | ≈ 0.84 |
| Successor | ≈ 0.94 | ≈ 0.79 |
| Predecessor | ≈ 0.78 | ≈ 0.72 |
| Remove redundant letter | ≈ 0.86 | ≈ 0.68 |
| Fix alphabetic sequence | ≈ 0.52 | ≈ 0.24 |
| Sort | ≈ 0.22 | ≈ 0.23 |

**Chart c: One-Generalization Problems by Generalization Type**

* **Trend:** Performance is more varied. GPT-3 leads by a wide margin in "Letter-to-number" and "Reverse order", while Humans are slightly ahead only in "Grouping". The "Interleaved distractor" and "Larger interval" tasks show low accuracy for both.

* **Data Points (Approximate):**

| Generalization Type | GPT-3 Accuracy | Human Accuracy |
| :--- | :--- | :--- |
| Letter-to-number | ≈ 0.82 | ≈ 0.63 |
| Grouping | ≈ 0.54 | ≈ 0.56 |
| Longer target | ≈ 0.52 | ≈ 0.48 |
| Reverse order | ≈ 0.56 | ≈ 0.32 |
| Interleaved distractor | ≈ 0.32 | ≈ 0.31 |
| Larger interval | ≈ 0.26 | ≈ 0.23 |

**Chart d: Real-World Concept Problems by Transformation Type**

* **Trend:** GPT-3 reaches a perfect score (1.0) on "Extend sequence" and is roughly level with Humans on "Successor". Humans score clearly higher on "Predecessor" and slightly higher on "Sort".

* **Data Points (Approximate):**

| Transformation Type | GPT-3 Accuracy | Human Accuracy |
| :--- | :--- | :--- |
| Extend sequence | = 1.0 | ≈ 0.67 |
| Successor | ≈ 0.76 | ≈ 0.73 |
| Predecessor | ≈ 0.52 | ≈ 0.74 |
| Sort | ≈ 0.12 | ≈ 0.18 |
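The trend statements for Charts b to d can be checked directly against the three tables. This is a minimal sketch that assumes the tabulated values are approximate readings of the bars, so gaps below about 0.05 are not meaningful. The function name `summarize` is illustrative.

```python
# (GPT-3, Human) readings from the Chart b, c, and d tables above.
tables = {
    "b": {"Extend sequence": (0.96, 0.84), "Successor": (0.94, 0.79),
          "Predecessor": (0.78, 0.72), "Remove redundant letter": (0.86, 0.68),
          "Fix alphabetic sequence": (0.52, 0.24), "Sort": (0.22, 0.23)},
    "c": {"Letter-to-number": (0.82, 0.63), "Grouping": (0.54, 0.56),
          "Longer target": (0.52, 0.48), "Reverse order": (0.56, 0.32),
          "Interleaved distractor": (0.32, 0.31), "Larger interval": (0.26, 0.23)},
    "d": {"Extend sequence": (1.00, 0.67), "Successor": (0.76, 0.73),
          "Predecessor": (0.52, 0.74), "Sort": (0.12, 0.18)},
}


def summarize(rows):
    """Return the largest GPT-3 lead and the categories where humans score higher."""
    gaps = {name: round(g - h, 2) for name, (g, h) in rows.items()}
    top = max(gaps, key=gaps.get)
    human_ahead = sorted(name for name, gap in gaps.items() if gap < 0)
    return top, gaps[top], human_ahead


for chart, rows in tables.items():
    print(chart, summarize(rows))
```

With these readings, the largest GPT-3 leads are on Fix alphabetic sequence in Chart b (about 0.28), Reverse order in Chart c (about 0.24), and Extend sequence in Chart d (about 0.33). Humans score higher only on Sort in Chart b (by about 0.01, within reading error), Grouping in Chart c, and Predecessor and Sort in Chart d.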

### Key Observations

1. **Generalization Cost:** Chart (a) shows that requiring more generalizations (0 to 3) sharply reduces accuracy for both AI and humans (GPT-3: ≈ 0.71 to ≈ 0.24; Humans: ≈ 0.58 to ≈ 0.29). At three generalizations, GPT-3 falls slightly below humans.

2. **GPT-3's Strengths:** GPT-3 excels at sequence extension in both zero-generalization (b) and real-world concept (d) problems, and at successor identification in zero-generalization problems.

3. **Human Strengths:** Humans show a clear advantage in the "Predecessor" task within real-world concepts (d), and a slight edge in "Sort" (d) and "Grouping" (c).

4. **Shared Difficulty:** Both struggle with the "Sort" transformation (b, d) and with the "Interleaved distractor" and "Larger interval" generalizations (c), suggesting these operations are hard for either solver.

5. **Performance Gap:** GPT-3's lead is largest on "Extend sequence" in real-world concept problems (≈ 0.33), "Fix alphabetic sequence" (≈ 0.28), and "Reverse order" (≈ 0.24).

### Interpretation

The data suggest a nuanced comparison between GPT-3 and human performance on structured, generative tasks. GPT-3 performs best on tasks that likely rely on pattern completion and straightforward sequential rules (e.g., extending a sequence). This may reflect the model's training on large amounts of text in which such patterns are common.

Humans, by contrast, hold up better on tasks that may call for different strategies, such as grouping based on abstract criteria ("Grouping") or reasoning about concepts in reverse ("Predecessor" in real-world contexts). The steep decline in accuracy with more generalizations (Chart a) points to a challenge shared by GPT-3 and human participants: moving from specific examples to broader, abstract application.

The near-equal, low accuracy on the "Sort" task is notable, because it suggests that neither human heuristics nor GPT-3's learned patterns handle this operation well. Overall, the charts illustrate that GPT-3 can match or exceed human performance on specific, well-defined generative problems. However, its advantages are task-dependent, and both GPT-3 and humans face common limitations when tasks require higher-order generalization or certain kinds of operations.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Bar Charts: Comparative Performance of GPT-3 and Humans Across Task Types

### Overview

The image contains four subplots (a-d) comparing the generative accuracy of GPT-3 (dark blue) and humans (light blue) across different task categories. Subplot a varies the number of required generalizations, while subplots b, c, and d cover zero-generalization, one-generalization, and real-world concept problems.

### Components/Axes

**Subplot a: Number of Generalizations**

- **X-axis**: Number of generalizations (0, 1, 2, 3)

- **Y-axis**: Generative accuracy (0-1 scale)

- **Legend**: GPT-3 (dark blue), Human (light blue)

- **Spatial**: Legend top-right; bars clustered by generalization count

**Subplot b: Zero-Generalization Problems**

- **X-axis**: Transformation types (Extend sequence, Successor, Predecessor, Remove redundant letter, Fix alphabetic sequence, Sort)

- **Y-axis**: Generative accuracy (0-1 scale)

- **Legend**: Same as subplot a

- **Spatial**: Legend top-right; bars grouped by transformation type

**Subplot c: One-Generalization Problems**

- **X-axis**: Generalization types (Letter-to-number, Grouping, Longer target, Reverse order, Interleaved distractor, Larger interval)

- **Y-axis**: Generative accuracy (0-1 scale)

- **Legend**: Same as subplot a

- **Spatial**: Legend top-right; bars grouped by generalization type

**Subplot d: Real-World Concept Problems**

- **X-axis**: Transformation types (Extend sequence, Successor, Predecessor, Sort)

- **Y-axis**: Generative accuracy (0-1 scale)

- **Legend**: Same as subplot a

- **Spatial**: Legend top-right; bars grouped by transformation type

### Detailed Analysis

**Subplot a Trends**:

- GPT-3 accuracy: 0.72 (±0.05) at 0 generalizations → 0.28 (±0.04) at 3 generalizations

- Human accuracy: 0.60 (±0.06) at 0 generalizations → 0.30 (±0.05) at 3 generalizations

- Both decline steadily as generalization requirements increase

**Subplot b Trends**:

- GPT-3: 0.95 (±0.03) for Extend sequence → 0.20 (±0.04) for Sort

- Human: 0.80 (±0.05) for Extend sequence → 0.25 (±0.06) for Sort

- GPT-3 leads by about 0.15 on Extend sequence, while humans are slightly ahead on Sort

**Subplot c Trends**:

- GPT-3: 0.82 (±0.04) for Letter-to-number → 0.25 (±0.05) for Larger interval

- Human: 0.65 (±0.06) for Letter-to-number → 0.22 (±0.05) for Larger interval

- At Larger interval, the low end of the range, GPT-3's lead shrinks to about 0.03 (from about 0.17 on Letter-to-number)

**Subplot d Trends**:

- GPT-3: 0.98 (±0.02) for Extend sequence → 0.12 (±0.03) for Sort

- Human: 0.68 (±0.04) for Extend sequence → 0.18 (±0.04) for Sort

- GPT-3's accuracy on real-world Sort problems is roughly one-eighth of its Extend sequence accuracy (0.12 vs. 0.98)

### Key Observations

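1. **Generalization Degradation**: GPT-3 loses about 61% of its accuracy from zero to three generalizations (0.72 → 0.28), and humans lose about 50% (0.60 → 0.30)

2. **Operation-Dependent Accuracy**: Within both zero-generalization and real-world concept problems, GPT-3 ranges from ≥0.95 on Extend sequence to ≤0.20 on Sort

3. **Human Performance**: Humans score well below GPT-3 on the easiest items (e.g., 0.80 vs. 0.95 on Extend sequence in subplot b) but match or slightly exceed it on the hardest (Sort in subplots b and d; three generalizations in subplot a)

4. **Sorting Vulnerability**: Sort yields the lowest accuracy for both GPT-3 and humans in subplot d (GPT-3: 0.12, Human: 0.18)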
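The sizes of the declines cited above follow from the subplot endpoints. This is a minimal sketch of the arithmetic that uses the readings given in this description. Relative drop is taken as (start − end) / start and fold change as high / low; the helper names are illustrative.

```python
def relative_drop(start, end):
    """Share of the starting accuracy lost between two conditions."""
    return (start - end) / start


def fold_change(high, low):
    """How many times larger the higher accuracy is than the lower one."""
    return high / low


# Endpoint readings from subplots a and d above (visual estimates).
gpt3_drop = relative_drop(0.72, 0.28)       # 0 -> 3 generalizations
human_drop = relative_drop(0.60, 0.30)      # 0 -> 3 generalizations
gpt3_sort_ratio = fold_change(0.98, 0.12)   # Extend sequence vs. Sort, subplot d

print(f"GPT-3 drop: {gpt3_drop:.0%}, human drop: {human_drop:.0%}")
print(f"Extend sequence / Sort (GPT-3, subplot d): {gpt3_sort_ratio:.1f}x")
```

GPT-3 loses about 61% of its zero-generalization accuracy and humans about 50%. In subplot d, GPT-3's Extend sequence accuracy is roughly eight times its Sort accuracy.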

### Interpretation

The data suggest several limitations in GPT-3's performance on these tasks:

1. **Compounding Generalizations**: Accuracy falls steadily as more generalizations are required

2. **Operation Dependence**: GPT-3 excels at extending sequences, including real-world concept sequences, but struggles to sort them

3. **Human-AI Comparison**: Humans lose less accuracy than GPT-3 as tasks get harder, matching or exceeding it on the hardest items despite lower accuracy on the easiest ones

4. **Sorting as Bottleneck**: The sharp drop on Sort may indicate a limitation in hierarchical reasoning, although these charts alone cannot establish the cause

This pattern suggests that GPT-3 exceeds human accuracy on simple pattern completion but, like humans, has difficulty when problems require several generalizations or operations such as sorting.
