# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

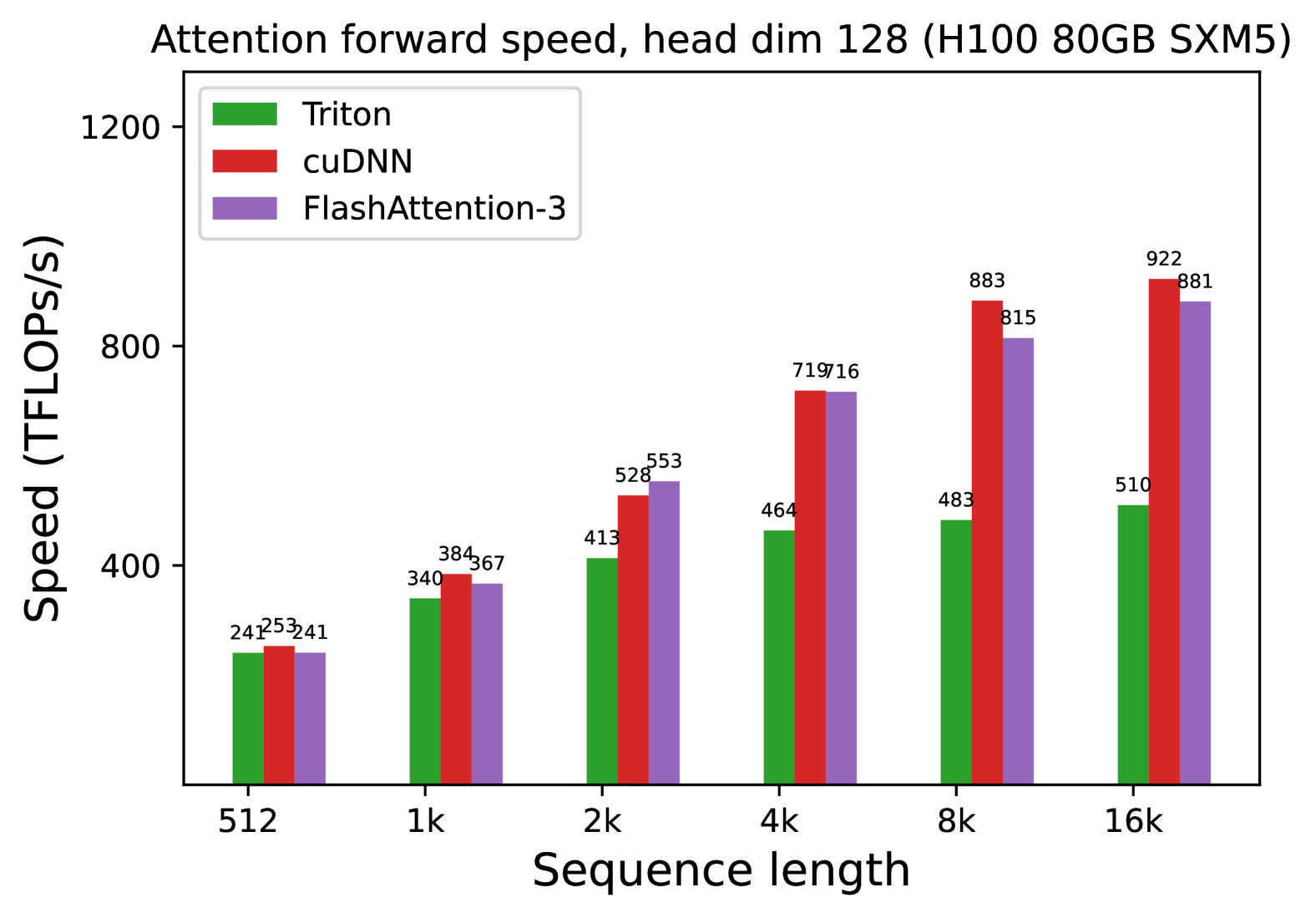

* **Title:** Attention forward speed, head dim 128 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Operation:** Attention forward pass.

* **Parameter:** Head dimension fixed at 128.

## 2. Chart Metadata

* **Type:** Grouped Bar Chart.

* **X-Axis Label:** Sequence length (six categories, each double the previous: 512, 1k, 2k, 4k, 8k, 16k).

* **Y-Axis Label:** Speed (TFLOPS/s).

* **Y-Axis Scale:** 0 to 1200, with major ticks at 400, 800, and 1200.

* **Legend Placement:** Top-left [x ≈ 0.05, y ≈ 0.95].

## 3. Legend and Series Identification

The chart compares three software implementations/kernels:

1. **Triton** (Green): Represented by the leftmost bar in each group.

2. **cuDNN** (Red): Represented by the middle bar in each group.

3. **FlashAttention-3** (Purple): Represented by the rightmost bar in each group.

## 4. Data Table Extraction

The following table reconstructs the numerical values displayed above each bar in the chart. All values are in TFLOPS/s.

| Sequence Length | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :---: | :---: | :---: |
| **512** | 241 | 253 | 241 |
| **1k** | 340 | 384 | 367 |
| **2k** | 413 | 528 | 553 |
| **4k** | 464 | 719 | 716 |
| **8k** | 483 | 883 | 815 |
| **16k** | 510 | 922 | 881 |
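For downstream analysis it can help to hold the extracted values in a small data structure. The following is a minimal sketch, not part of the original benchmark: the names `SEQ_LENS`, `SPEEDS`, and `leader_per_length` are illustrative assumptions, and the numbers are transcribed from the table above.

```python
# Throughput in TFLOPS/s, transcribed from the extracted table.
# All names here are hypothetical helpers for illustration only.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Triton": [241, 340, 413, 464, 483, 510],
    "cuDNN": [253, 384, 528, 719, 883, 922],
    "FlashAttention-3": [241, 367, 553, 716, 815, 881],
}


def leader_per_length(seq_lens, speeds):
    """Return {sequence length: (fastest implementation, its speed)}."""
    leaders = {}
    for i, seq_len in enumerate(seq_lens):
        # Pick the implementation with the highest value in column i.
        name = max(speeds, key=lambda impl: speeds[impl][i])
        leaders[seq_len] = (name, speeds[name][i])
    return leaders


LEADERS = leader_per_length(SEQ_LENS, SPEEDS)
for seq_len, (name, tflops) in LEADERS.items():
    print(f"{seq_len:>4}: {name} ({tflops} TFLOPS/s)")
```

Keeping one list per implementation mirrors the table's columns, so each list index corresponds to one bar group in the chart.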

## 5. Trend Analysis and Observations

### Component Isolation: Trend Verification

* **Triton (Green):** Rises monotonically from 241 to 510 TFLOPS/s, but with diminishing gains: throughput grows by about 41% from 512 to 1k and by only about 6% from 8k to 16k. It ties FlashAttention-3 for the lowest throughput at 512 (241 TFLOPS/s) and is the slowest implementation at every longer sequence length, plateauing well below the other two.

* **cuDNN (Red):** Shows a strong upward slope. It is the fastest implementation at every sequence length except 2k, where FlashAttention-3 leads (553 vs. 528 TFLOPS/s); its margin at 4k is negligible (719 vs. 716 TFLOPS/s). It reaches the highest value in the chart, 922 TFLOPS/s at 16k.

* **FlashAttention-3 (Purple):** Shows a strong upward slope, closely tracking cuDNN. It leads cuDNN only at 2k (553 vs. 528 TFLOPS/s), is effectively tied at 4k (716 vs. 719 TFLOPS/s), and trails at 8k (815 vs. 883 TFLOPS/s, about 8% lower) and 16k (881 vs. 922 TFLOPS/s, about 4% lower).
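The shape of each trend can be checked by computing the throughput ratio between consecutive sequence lengths (each step doubles the length). This is a minimal sketch using the extracted values; `gain_per_doubling` and `GAINS` are hypothetical names introduced for illustration.

```python
# Throughput in TFLOPS/s at 512, 1k, 2k, 4k, 8k, 16k (from the extracted table).
SPEEDS = {
    "Triton": [241, 340, 413, 464, 483, 510],
    "cuDNN": [253, 384, 528, 719, 883, 922],
    "FlashAttention-3": [241, 367, 553, 716, 815, 881],
}


def gain_per_doubling(values):
    """Ratio of each value to the previous one (one ratio per doubling)."""
    return [later / earlier for earlier, later in zip(values, values[1:])]


GAINS = {impl: gain_per_doubling(vals) for impl, vals in SPEEDS.items()}
for impl, ratios in GAINS.items():
    formatted = ", ".join(f"{r:.2f}x" for r in ratios)
    print(f"{impl}: {formatted}")
```

A ratio close to 1.0 means throughput has nearly stopped growing with sequence length, which is how a plateau shows up in these numbers.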

### Key Findings

* **Scaling:** All three methods show improved TFLOPS/s as sequence length increases, indicating better hardware utilization at larger scales.

* **Performance Leaders:** At sequence lengths of 4k and above, cuDNN and FlashAttention-3 each deliver at least 1.5× Triton's throughput. At 16k the ratios are about 1.8× and 1.7× respectively (922 and 881 TFLOPS/s vs. 510 TFLOPS/s).

* **Peak Performance:** The maximum recorded speed is **922 TFLOPS/s** achieved by **cuDNN** at a sequence length of 16k.
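The headline figures above can be recomputed from the extracted values. The sketch below is illustrative only; `speedup_over`, `RATIOS`, and `PEAK` are hypothetical names, and the data is copied from the table in Section 4.

```python
# Throughput in TFLOPS/s, transcribed from the extracted table.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Triton": [241, 340, 413, 464, 483, 510],
    "cuDNN": [253, 384, 528, 719, 883, 922],
    "FlashAttention-3": [241, 367, 553, 716, 815, 881],
}


def speedup_over(baseline, speeds, index):
    """Throughput of every other implementation relative to `baseline`."""
    base = speeds[baseline][index]
    return {
        impl: vals[index] / base
        for impl, vals in speeds.items()
        if impl != baseline
    }


# Ratios at the longest sequence length (last column, 16k).
RATIOS = speedup_over("Triton", SPEEDS, len(SEQ_LENS) - 1)

# Largest single value in the table, with its implementation and length.
PEAK = max(
    ((impl, SEQ_LENS[i], v) for impl, vals in SPEEDS.items() for i, v in enumerate(vals)),
    key=lambda entry: entry[2],
)

for impl, ratio in RATIOS.items():
    print(f"{impl} vs Triton at 16k: {ratio:.2f}x")
print(f"Peak: {PEAK[2]} TFLOPS/s ({PEAK[0]} at {PEAK[1]})")
```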