## Diagram: LLM Training Pipeline Flowchart

### Overview

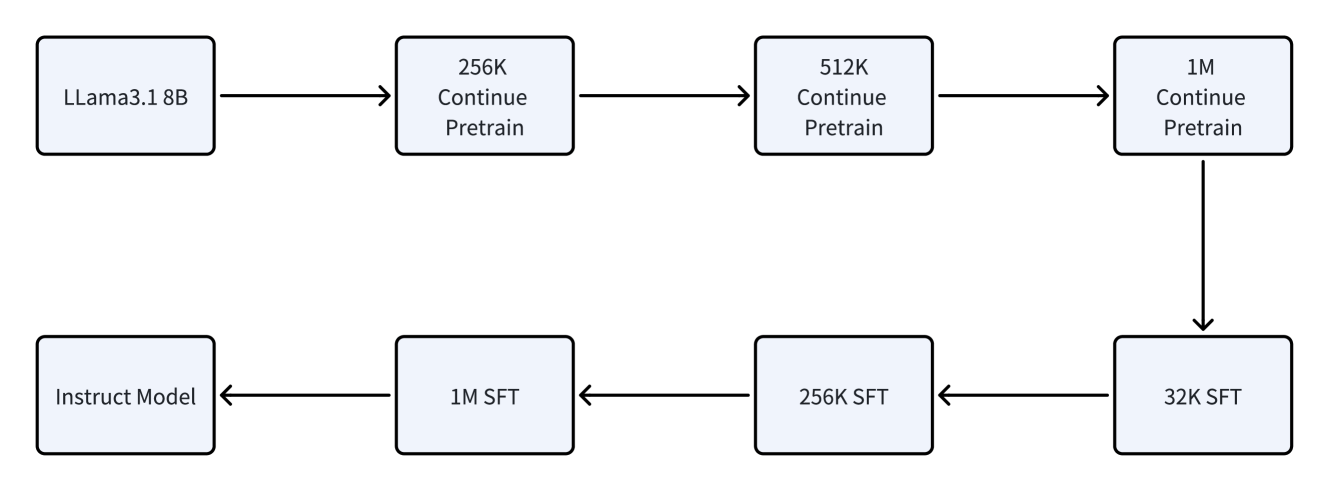

The image displays a flowchart illustrating a multi-stage training pipeline for a large language model (LLM). The process begins with a base model and progresses through phases of continued pretraining at increasing context lengths, followed by supervised fine-tuning (SFT) at varying context lengths, culminating in a final "Instruct Model." The flow is directional, moving from left to right in the top row, then down, and finally from right to left in the bottom row.

### Components

The diagram consists of eight rectangular boxes with rounded corners, connected by directional arrows. Each box contains text describing a model or a training stage. The arrows indicate the sequence and flow of the process.

**Box Content (in order of flow):**

1. **Top Row, Leftmost:** `LLama3.1 8B`

2. **Top Row, Second:** `256K Continue Pretrain`

3. **Top Row, Third:** `512K Continue Pretrain`

4. **Top Row, Rightmost:** `1M Continue Pretrain`

5. **Bottom Row, Rightmost:** `32K SFT`

6. **Bottom Row, Second from Right:** `256K SFT`

7. **Bottom Row, Third from Right:** `1M SFT`

8. **Bottom Row, Leftmost:** `Instruct Model`

**Flow Direction:**

* The top row flows sequentially from left to right: Box 1 → Box 2 → Box 3 → Box 4.

* A single arrow points downward from Box 4 (top-right) to Box 5 (bottom-right).

* The bottom row flows sequentially from right to left: Box 5 → Box 6 → Box 7 → Box 8.
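The layout described above can be sketched as a small data structure. This is only an illustrative encoding of the diagram, not code from the pipeline's authors: the names `Box`, `BOXES`, `edges`, and `row_crossings` are hypothetical, and the labels are copied verbatim from the boxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    number: int  # position in flow order, matching the numbered list above
    row: str     # "top" or "bottom"
    label: str   # text shown inside the box, copied verbatim


BOXES = [
    Box(1, "top", "LLama3.1 8B"),
    Box(2, "top", "256K Continue Pretrain"),
    Box(3, "top", "512K Continue Pretrain"),
    Box(4, "top", "1M Continue Pretrain"),
    Box(5, "bottom", "32K SFT"),
    Box(6, "bottom", "256K SFT"),
    Box(7, "bottom", "1M SFT"),
    Box(8, "bottom", "Instruct Model"),
]


def edges(boxes):
    """Return (source, target) box numbers for consecutive boxes in flow order."""
    ordered = sorted(boxes, key=lambda b: b.number)
    return [(a.number, b.number) for a, b in zip(ordered, ordered[1:])]


def row_crossings(boxes):
    """Return the edges whose two endpoints sit in different rows."""
    by_number = {b.number: b for b in boxes}
    return [(s, t) for s, t in edges(boxes) if by_number[s].row != by_number[t].row]


print(edges(BOXES))          # seven arrows connecting eight boxes
print(row_crossings(BOXES))  # the single downward arrow
```

Encoding the flow as one ordered chain reflects that the diagram has no branches: every box has at most one incoming and one outgoing arrow.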

### Detailed Analysis

The pipeline describes a two-phase training process:

**Phase 1: Continued Pretraining (Top Row)**

* **Starting Point:** The base model is `LLama3.1 8B`.

* **Process:** The model undergoes "Continue Pretrain" in three successive stages.

* **Key Variable:** The context length (likely measured in tokens) increases at each stage: `256K` → `512K` → `1M`. This suggests the model is being progressively adapted to handle much longer sequences of text.

**Phase 2: Supervised Fine-Tuning (SFT) (Bottom Row)**

* **Process:** Following the final pretraining stage, the model enters a series of "SFT" (Supervised Fine-Tuning) stages.

* **Key Variable:** The context length varies during fine-tuning: `32K` → `256K` → `1M`. The sequence starts with a shorter context (`32K`) and increases back to the maximum (`1M`).

* **End Product:** The final output of the entire pipeline is labeled `Instruct Model`, indicating a model fine-tuned to follow instructions.
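To make the context lengths concrete, the stage labels can be parsed into token counts. The sketch below assumes binary multipliers (K = 1,024 and M = 1,048,576); the diagram does not state whether `K` and `M` are binary or decimal, and the helper name `parse_stage` is hypothetical.

```python
import re

# Assumption: binary multipliers. The diagram does not say whether
# "K" means 1,000 or 1,024 tokens.
UNIT = {"K": 1024, "M": 1024 * 1024}


def parse_stage(label):
    """Split a label such as '256K SFT' into (context_tokens, stage_name)."""
    match = re.fullmatch(r"(\d+)([KM])\s+(.+)", label)
    if match is None:
        raise ValueError(f"no context length in label: {label!r}")
    count, unit, name = match.groups()
    return int(count) * UNIT[unit], name


for label in ["256K Continue Pretrain", "512K Continue Pretrain",
              "1M Continue Pretrain", "32K SFT", "256K SFT", "1M SFT"]:
    tokens, name = parse_stage(label)
    print(f"{name:>18}: {tokens:>9,} tokens")
```

Labels without a context length, such as `LLama3.1 8B` and `Instruct Model`, raise `ValueError` here because they name models rather than training stages.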

### Key Observations

1. **Context Length Scaling:** The primary technical detail communicated is the scaling of the model's context window. Continued pretraining runs at 256K, 512K, and 1M (1 million) token contexts, and SFT runs at 32K, 256K, and 1M.

2. **Two-Phase Structure:** The process is clearly divided into a pretraining extension phase and a fine-tuning phase.

3. **Context Reset at SFT:** Context length increases monotonically within each phase, but not across the pipeline as a whole: after pretraining reaches `1M`, SFT restarts at `32K` before scaling back up to `1M`. This could indicate a strategy in which the model is first fine-tuned on shorter, potentially higher-quality instruction data before being adapted to the full long-context capability.

4. **Model Origin:** The starting point is specified as `LLama3.1 8B`, identifying the base model architecture and size (8 billion parameters).
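The context-length pattern in these observations can be checked mechanically. The following is a minimal sketch using the values read from the diagram, expressed in units of K tokens with `1M` written as 1024 (an assumption about the unit convention); the variable and function names are hypothetical.

```python
# Context lengths per phase, in K tokens. Writing 1M as 1024K is an
# assumption; the diagram does not define its units.
PRETRAIN = [256, 512, 1024]
SFT = [32, 256, 1024]


def is_increasing(values):
    """True if every value is strictly larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


pipeline = PRETRAIN + SFT
# Adjacent pairs where the context length shrinks from one stage to the next.
drops = [(a, b) for a, b in zip(pipeline, pipeline[1:]) if b < a]

print(is_increasing(PRETRAIN))  # each phase on its own
print(is_increasing(SFT))
print(is_increasing(pipeline))  # the pipeline as a whole
print(drops)                    # where the context length decreases
```

The only decrease falls at the boundary between the two phases, where the context length goes from 1M in the last pretraining stage to 32K in the first SFT stage.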

### Interpretation

This flowchart documents a multi-stage training recipe for a long-context, instruction-following model. Its structure suggests that continued pretraining on long sequences is not treated as sufficient by itself: producing a usable "Instruct Model" also takes a dedicated fine-tuning phase, which is itself run at multiple context lengths.

The sequence implies a logical progression:

1. **Foundation:** Start with a capable base model (`LLama3.1 8B`).

2. **Capability Expansion:** Systematically extend its core ability to process very long documents (up to 1M tokens) through continued pretraining.

3. **Alignment & Refinement:** Fine-tune the model to follow instructions, a process that also involves re-exposing it to varying context lengths, possibly to ensure the instruction-following behavior is robust across the entire supported context window.

The diagram serves as a high-level technical summary, answering the question: "What were the key stages and context lengths used to train this specific instruct model?" It outlines the pipeline's structure only; reproducing the training would also require details the diagram does not show, such as the training data and hyperparameters.