## Comparison Diagram: Model Performance on "Las Meninas" Question

### Overview

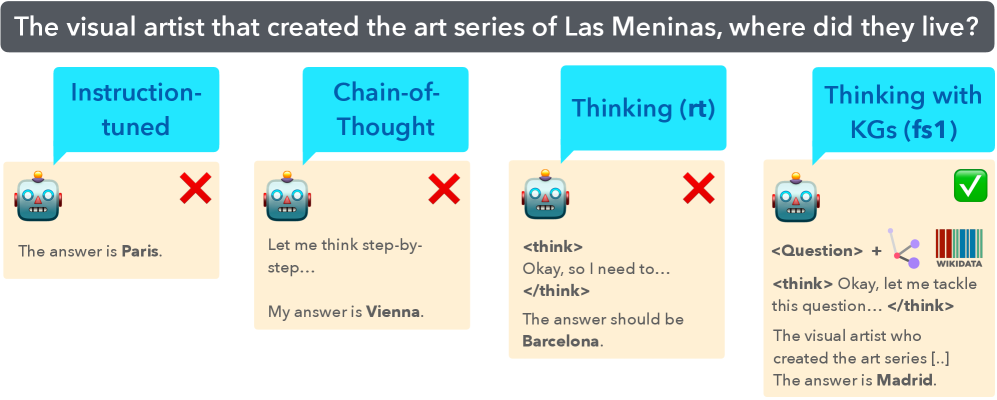

The image presents a comparison of four different models' performance on the question: "The visual artist that created the art series of Las Meninas, where did they live?". Each model's approach and answer are displayed, along with an indication of whether the answer was correct (green checkmark) or incorrect (red 'X').

### Components/Axes

* **Title:** "The visual artist that created the art series of Las Meninas, where did they live?"

* **Models (from left to right):**

    * Instruction-tuned

    * Chain-of-Thought

    * Thinking (rt)

    * Thinking with KGs (fs1)

* **Visual Elements:**

    * Robot emoji representing the model.

    * Speech bubble above each robot indicating the model's name/type.

    * Text box below each robot showing the model's reasoning and answer.

    * Red 'X' or green checkmark indicating an incorrect or correct answer, respectively.

    * For "Thinking with KGs (fs1)", there are icons representing "Question", a knowledge graph, and "Wikidata".

### Detailed Analysis

**1. Instruction-tuned:**

* **Model Type:** Instruction-tuned

* **Reasoning/Answer:** "The answer is Paris."

* **Result:** Incorrect (Red 'X' in the top-right)

**2. Chain-of-Thought:**

* **Model Type:** Chain-of-Thought

* **Reasoning/Answer:** "Let me think step-by-step... My answer is Vienna."

* **Result:** Incorrect (Red 'X' in the top-right)

**3. Thinking (rt):**

* **Model Type:** Thinking (rt)

* **Reasoning/Answer:**

    * "\<think> Okay, so I need to... \</think>"

    * "The answer should be Barcelona."

* **Result:** Incorrect (Red 'X' in the top-right)

**4. Thinking with KGs (fs1):**

* **Model Type:** Thinking with KGs (fs1)

* **Reasoning/Answer:**

    * "\<Question> + [Knowledge Graph Icon] + [Wikidata Icon]"

    * "\<think> Okay, let me tackle this question... \</think>"

    * "The visual artist who created the art series [...] The answer is Madrid."

* **Result:** Correct (Green checkmark in the top-right)

### Key Observations

* Three out of the four models provided incorrect answers.

* The "Thinking with KGs (fs1)" model, which incorporates knowledge graphs and Wikidata, was the only one to provide the correct answer (Madrid).

* The models that failed provided the following answers: Paris, Vienna, and Barcelona.

### Interpretation

The diagram suggests that external knowledge sources (knowledge graphs and Wikidata) help with questions that require specific factual information. In this example, the "Thinking with KGs (fs1)" model is the only one that reaches the correct answer, which is consistent with the idea that structured knowledge bases can improve the accuracy of language models on question-answering tasks, although a single question cannot establish this in general. The other three models, which rely on instruction tuning, chain-of-thought prompting, or unaided thinking, each name a different wrong city, highlighting the limits of these approaches when a question requires accessing and reasoning over external knowledge.
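The question in the figure is a two-hop lookup: first find the creator of the art series, then find where that creator lived. A minimal sketch of that traversal over a toy in-memory triple store might look like the following. The triples, the relation names `creator` and `residence`, the placeholder `ARTIST_X`, and the helper functions are all illustrative assumptions; they are not real Wikidata identifiers and not the fs1 method itself. Only the final answer, Madrid, comes from the figure.

```python
from typing import Optional

# Toy triples for illustration only (hypothetical; not real Wikidata statements).
# ARTIST_X is a placeholder, since the figure does not name the artist.
TRIPLES = [
    ("Las Meninas (art series)", "creator", "ARTIST_X"),
    ("ARTIST_X", "residence", "Madrid"),
]


def follow(subject: str, relation: str) -> Optional[str]:
    """Return the first object linked to `subject` by `relation`, or None."""
    for s, r, o in TRIPLES:
        if s == subject and r == relation:
            return o
    return None


def answer_two_hop(entity: str, first: str, second: str) -> Optional[str]:
    """Follow `first` from `entity`, then `second` from the intermediate node."""
    middle = follow(entity, first)
    if middle is None:
        return None  # the first hop is missing, so no answer can be derived
    return follow(middle, second)


answer = answer_two_hop("Las Meninas (art series)", "creator", "residence")
print(answer)
```

A model without access to such a path has to recall both hops from its parameters, which is one plausible reading of why the three unaided models each name a different city.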