## Line Chart: Accuracy vs. Epochs for Three Methods

### Overview

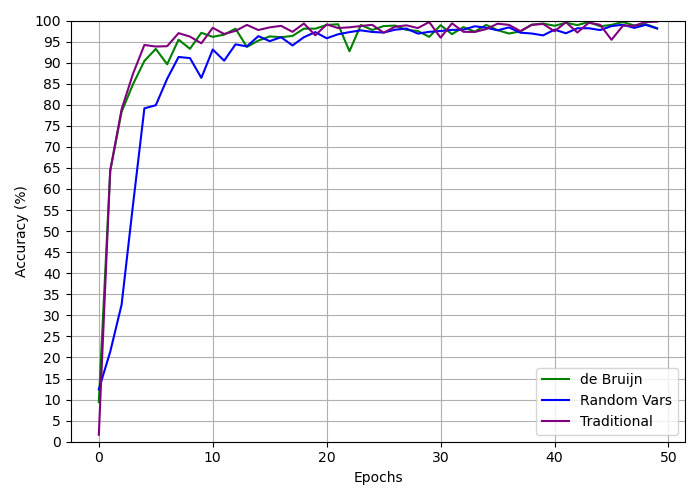

The line chart compares accuracy (in percent) over 50 training epochs for three methods: "de Bruijn", "Random Vars", and "Traditional". All three rise rapidly at first and then level off at a stable accuracy near 100%.

### Components/Axes

* **Chart Type:** Line chart with multiple series.

* **X-Axis:** Labeled "Epochs". Scale ranges from 0 to 50, with major tick marks every 10 units (0, 10, 20, 30, 40, 50).

* **Y-Axis:** Labeled "Accuracy (%)". Scale ranges from 0 to 100, with major tick marks every 5 units (0, 5, 10, ..., 95, 100).

* **Legend:** Located in the bottom-right corner of the chart area. It contains three entries:

    * A green line labeled "de Bruijn".
    * A blue line labeled "Random Vars".
    * A purple line labeled "Traditional".

* **Grid:** A light gray grid is present, aligned with the major ticks on both axes.

### Detailed Analysis

**Trend Verification & Data Points:**

1. **Traditional (Purple Line):**

    * **Trend:** Shows the steepest initial ascent, reaching high accuracy fastest. After the initial rise, it exhibits minor fluctuations but maintains a very high accuracy.

    * **Key Points (Approximate):**

        * Epoch 0: ~2%
        * Epoch 5: ~94%
        * Epoch 10: ~98%
        * Epoch 20: ~99%
        * Epoch 30: ~98%
        * Epoch 40: ~99%
        * Epoch 50: ~99%

2. **de Bruijn (Green Line):**

    * **Trend:** Rises rapidly, slightly slower than the Traditional method initially. It converges to a high accuracy level similar to the Traditional method but shows a notable dip around epoch 22.

    * **Key Points (Approximate):**

        * Epoch 0: ~2%
        * Epoch 5: ~92%
        * Epoch 10: ~96%
        * Epoch 20: ~98%
        * Epoch 22 (Dip): ~93%
        * Epoch 30: ~98%
        * Epoch 40: ~99%
        * Epoch 50: ~99%

3. **Random Vars (Blue Line):**

    * **Trend:** Has the slowest initial rise of the three. It takes more epochs to reach the 90%+ accuracy range but eventually converges to a high accuracy plateau similar to that of the other two methods.

    * **Key Points (Approximate):**

        * Epoch 0: ~2%
        * Epoch 5: ~79%
        * Epoch 10: ~93%
        * Epoch 20: ~97%
        * Epoch 30: ~98%
        * Epoch 40: ~98%
        * Epoch 50: ~99%
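The approximate readings listed above can be collected into a small data structure and checked against the described trends. The following is a minimal sketch, not exported chart data: the values are the eyeballed key points, and the names `READINGS` and `largest_drop` are hypothetical helpers introduced only for illustration.

```python
# Approximate (epoch, accuracy %) readings taken from the key points above.
# These are visual estimates, not the underlying data behind the chart.
READINGS = {
    "Traditional": [(0, 2), (5, 94), (10, 98), (20, 99), (30, 98), (40, 99), (50, 99)],
    "de Bruijn": [(0, 2), (5, 92), (10, 96), (20, 98), (22, 93), (30, 98), (40, 99), (50, 99)],
    "Random Vars": [(0, 2), (5, 79), (10, 93), (20, 97), (30, 98), (40, 98), (50, 99)],
}


def largest_drop(points):
    """Return (drop, epoch) for the biggest decrease between consecutive readings.

    `drop` is in percentage points and `epoch` is where the lower value was read.
    Returns (0, None) if accuracy never decreases between readings.
    """
    best_drop, best_epoch = 0, None
    for (_, prev_acc), (epoch, acc) in zip(points, points[1:]):
        drop = prev_acc - acc
        if drop > best_drop:
            best_drop, best_epoch = drop, epoch
    return best_drop, best_epoch


for name, points in READINGS.items():
    drop, epoch = largest_drop(points)
    final_acc = points[-1][1]
    print(f"{name}: final ~{final_acc}%, largest drop {drop} points (epoch {epoch})")
```

Because the readings are sparse and approximate, this only confirms that the listed points agree with the described trends; it cannot detect fluctuations that fall between the sampled epochs.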

### Key Observations

* **Convergence Speed:** The "Traditional" method reaches high accuracy (>90%) the fastest, within ~5 epochs (~94% at epoch 5). The "de Bruijn" method is only slightly behind (~92% at epoch 5), while the "Random Vars" method is the slowest, crossing 90% around epoch 8-9 (~79% at epoch 5).

* **Final Accuracy:** All three methods converge to nearly the same final accuracy, approximately 98-99% from epoch 30 onward.

* **Stability:** The "Traditional" and "Random Vars" lines are relatively smooth after convergence, varying by only about one percentage point. The "de Bruijn" line shows a distinct, temporary drop of roughly five points around epoch 22 before recovering.

* **Initial Conditions:** All methods start from a very low accuracy (near 0-2%) at epoch 0.
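The convergence-speed comparison above can be made concrete by estimating where each curve first crosses 90%. The sketch below assumes straight-line interpolation between the approximate readings up to epoch 10; `EARLY_READINGS` and `first_crossing` are hypothetical names used for illustration. Since the real curves bend rather than run straight between points, the results are rough estimates only.

```python
# Approximate (epoch, accuracy %) readings for the first 10 epochs,
# taken from the key points listed in the Detailed Analysis section.
EARLY_READINGS = {
    "Traditional": [(0, 2), (5, 94), (10, 98)],
    "de Bruijn": [(0, 2), (5, 92), (10, 96)],
    "Random Vars": [(0, 2), (5, 79), (10, 93)],
}


def first_crossing(points, threshold):
    """Estimate the epoch at which accuracy first reaches `threshold`.

    Uses linear interpolation between consecutive readings (an assumption;
    the actual curves are not linear). Returns None if never reached.
    """
    for (e0, a0), (e1, a1) in zip(points, points[1:]):
        if a0 < threshold <= a1:
            return e0 + (e1 - e0) * (threshold - a0) / (a1 - a0)
    return None


for name, points in EARLY_READINGS.items():
    epoch = first_crossing(points, 90)
    print(f"{name}: reaches 90% at roughly epoch {epoch:.1f}")
```

Under this assumption, "Traditional" and "de Bruijn" both cross 90% just before epoch 5, and "Random Vars" crosses near epoch 9, which agrees with the 8-9 range read from the chart. With readings only every five epochs, the gap between the first two methods is too small to resolve reliably.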

### Interpretation

This chart compares training efficiency and final performance for three distinct approaches. The data suggests that the **"Traditional" method is the most efficient in the early stages of training**, achieving high accuracy in fewer epochs. This could imply that it uses a more effective optimization strategy or has a better inductive bias for the task at hand.

The **"Random Vars" method, while ultimately reaching the same performance ceiling, requires a longer "warm-up" period**. This might indicate a less directed search of the parameter space initially. The **"de Bruijn" method presents an interesting case of high efficiency coupled with a momentary instability** (the dip at epoch 22), which could be an artifact of the specific training run, a learning rate issue, or a characteristic of the method itself.

The most significant finding is that **given sufficient training time (epochs), all three methods achieve near-perfect accuracy** on this particular task. This implies the task may not be sufficiently complex to differentiate the ultimate capacity of these models, or that all three methods are fundamentally sound. The key differentiator is therefore **training speed and stability**, not final accuracy. For applications where training time or computational cost is critical, the "Traditional" method would be the preferred choice based on this data.