## Line Chart: AI Model Accuracy During Training

### Overview

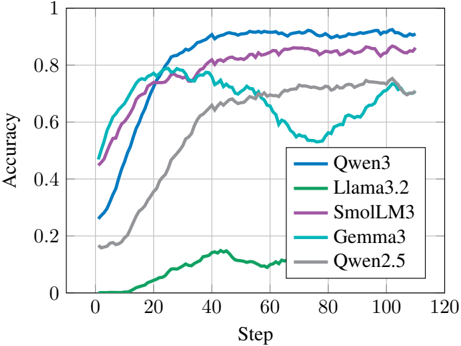

The image is a line chart comparing the training accuracy of five different AI models over a series of training steps. The chart plots "Accuracy" on the vertical axis against "Step" on the horizontal axis, showing the learning progression of each model.

### Components/Axes

* **Chart Type:** Multi-line chart.

* **X-Axis (Horizontal):**

  * **Label:** "Step"

  * **Scale:** Linear, ranging from 0 to 120.

  * **Major Ticks:** 0, 20, 40, 60, 80, 100, 120.

* **Y-Axis (Vertical):**

  * **Label:** "Accuracy"

  * **Scale:** Linear, ranging from 0 to 1.

  * **Major Ticks:** 0, 0.2, 0.4, 0.6, 0.8, 1.

* **Legend:**

  * **Placement:** Bottom-right corner of the chart area.

  * **Content:** A box listing five models with corresponding colored lines:

    1. **Qwen3** - Blue line

    2. **Llama3.2** - Green line

    3. **SmolLM3** - Purple line

    4. **Gemma3** - Cyan/Teal line

    5. **Qwen2.5** - Gray line

### Detailed Analysis

The chart tracks the accuracy of each model from step 0 to approximately step 110, except for Llama3.2, whose line ends near step 65.

**1. Qwen3 (Blue Line):**

* **Trend:** Shows a strong, consistent upward trend, plateauing near the top of the chart.

* **Data Points (Approximate):**

  * Starts at ~0.28 accuracy at step 0.

  * Rises steeply, crossing 0.8 accuracy around step 30.

  * Reaches a plateau between ~0.90 and ~0.93 from step 50 onward, maintaining the highest accuracy among all models.

**2. Llama3.2 (Green Line):**

* **Trend:** Remains very low throughout, with a minor, brief increase in the middle.

* **Data Points (Approximate):**

  * Starts near 0 accuracy.

  * Begins a slow rise around step 10, peaking at approximately 0.15 accuracy near step 45.

  * Declines back towards 0.10 by step 65, where the line ends.

**3. SmolLM3 (Purple Line):**

* **Trend:** Shows a steady, strong upward trend. It starts above Qwen3 but is overtaken during the early steps, after which it follows Qwen3 closely from slightly below.

* **Data Points (Approximate):**

  * Starts at ~0.45 accuracy at step 0.

  * Rises consistently, crossing 0.8 accuracy around step 40.

  * Plateaus in the range of ~0.83 to ~0.87 from step 60 onward.

**4. Gemma3 (Cyan/Teal Line):**

* **Trend:** Exhibits a distinctive "dip and recovery" pattern.

* **Data Points (Approximate):**

  * Starts at ~0.48 accuracy at step 0.

  * Rises quickly to a local peak of ~0.78 around step 25.

  * Declines significantly, bottoming out at ~0.53 around step 75.

  * Recovers, ending at ~0.70 accuracy by step 110.

**5. Qwen2.5 (Gray Line):**

* **Trend:** Shows a steady, moderate upward trend that plateaus in the middle range.

* **Data Points (Approximate):**

  * Starts at ~0.18 accuracy at step 0.

  * Rises steadily, crossing 0.6 accuracy around step 40.

  * Plateaus between ~0.70 and ~0.75 from step 60 onward.
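The approximate readings above can be collected into a small data structure for rough comparisons between models at a given step. The following is a minimal sketch, not a reconstruction of the underlying data: the names `CURVES` and `accuracy_at` are hypothetical, plateau ranges are collapsed to their midpoints, plateaus are assumed to hold until step 110, and Llama3.2 is assumed to sit at exactly 0 until step 10.

```python
from bisect import bisect_right

# Approximate (step, accuracy) anchors taken from the description above.
# Assumptions: plateau ranges use their midpoints, plateaus extend to step 110,
# and Llama3.2 is treated as exactly 0.0 until step 10.
CURVES = {
    "Qwen3":    [(0, 0.28), (30, 0.80), (50, 0.915), (110, 0.915)],
    "Llama3.2": [(0, 0.00), (10, 0.00), (45, 0.15), (65, 0.10)],
    "SmolLM3":  [(0, 0.45), (40, 0.80), (60, 0.85), (110, 0.85)],
    "Gemma3":   [(0, 0.48), (25, 0.78), (75, 0.53), (110, 0.70)],
    "Qwen2.5":  [(0, 0.18), (40, 0.60), (60, 0.725), (110, 0.725)],
}


def accuracy_at(model, step):
    """Linearly interpolate accuracy at `step`.

    Returns None when `step` lies outside the range plotted for `model`.
    """
    points = CURVES[model]
    steps = [s for s, _ in points]
    if step < steps[0] or step > steps[-1]:
        return None
    i = bisect_right(steps, step) - 1  # index of the anchor at or before `step`
    if i == len(points) - 1:
        return points[-1][1]
    (s0, a0), (s1, a1) = points[i], points[i + 1]
    return a0 + (a1 - a0) * (step - s0) / (s1 - s0)


if __name__ == "__main__":
    for name in CURVES:
        print(name, accuracy_at(name, 50))
```

Linear interpolation between anchors is only a convenience here; the true curves between the listed points are not recoverable from this description.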

### Key Observations

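1. **Performance Hierarchy:** From about step 50 onward, Qwen3 leads, followed by SmolLM3. Qwen2.5 and Gemma3 come next: Gemma3 falls below Qwen2.5 during its dip (~0.53 versus ~0.70 around step 75) and returns to roughly the same level (~0.70) only by step 110. Llama3.2 stays far below the other four for as long as its line is plotted (to about step 65).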

2. **Convergence:** Qwen3 and SmolLM3 show similar learning curves and both level off at high accuracy (~0.90 to ~0.93 and ~0.83 to ~0.87, respectively), with Qwen3 maintaining a slight lead.

3. **Anomaly - Gemma3:** Gemma3 is the only model whose accuracy drops substantially mid-training (from ~0.78 near step 25 to ~0.53 near step 75) before recovering. This pattern may indicate instability in its training process or a specific difficulty in the data or optimization at those steps; the chart alone cannot distinguish between these causes.

4. **Underperformance - Llama3.2:** Llama3.2 demonstrates very poor learning on this specific task, failing to achieve meaningful accuracy compared to the other models.

5. **Stability:** Qwen3, SmolLM3, and Qwen2.5 show relatively stable plateaus after their initial learning phase, which is consistent with converged training.
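The ranking and the Gemma3 dip noted in these observations can be checked mechanically against the approximate readings. The sketch below is illustrative only: `SERIES`, `max_drawdown`, and `DIP_THRESHOLD` are hypothetical names, the values are the approximate accuracies listed in the Detailed Analysis (plateau ranges collapsed to midpoints), and the 0.10 cutoff for a "significant" drop is an arbitrary assumption.

```python
# Approximate accuracies in step order, taken from the Detailed Analysis.
# Assumption: plateau ranges are collapsed to their midpoints.
SERIES = {
    "Qwen3":    [0.28, 0.80, 0.915],
    "Llama3.2": [0.00, 0.15, 0.10],        # last reading is near step 65
    "SmolLM3":  [0.45, 0.80, 0.85],
    "Gemma3":   [0.48, 0.78, 0.53, 0.70],
    "Qwen2.5":  [0.18, 0.60, 0.725],
}

DIP_THRESHOLD = 0.10  # hypothetical cutoff for calling a drop "significant"


def max_drawdown(values):
    """Return the largest drop from a running peak to any later value."""
    peak = values[0]
    worst = 0.0
    for v in values:
        peak = max(peak, v)
        worst = max(worst, peak - v)
    return worst


# Rank by the last available reading. Llama3.2's last reading comes from an
# earlier step than the others, so its position is only indicative.
ranking = sorted(SERIES, key=lambda m: SERIES[m][-1], reverse=True)
unstable = [m for m in SERIES if max_drawdown(SERIES[m]) > DIP_THRESHOLD]

if __name__ == "__main__":
    print("Ranking by last reading:", ranking)
    print("Models with a large mid-run drop:", unstable)
```

With these values, only Gemma3 exceeds the drop threshold (a peak-to-trough fall of about 0.25); Llama3.2's decline of about 0.05 does not.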

### Interpretation

This chart likely visualizes a benchmark or a specific training task for comparing large language models. The data suggests:

* **Model Capability:** Qwen3 and SmolLM3 are the most capable models for this particular task, demonstrating both fast learning and high final accuracy.

* **Training Dynamics:** The stark difference in curves shows that the models learn this task very differently. Architecture, training data, or hyperparameters could each contribute, but the chart does not identify which. Gemma3's dip is a warning sign for its training stability on this task.

* **Task Suitability:** Llama3.2's near-flat curve (never above ~0.15) suggests it may be poorly suited to this task as configured, or that its training run encountered a failure mode (e.g., loss spike, optimization divergence).

* **Evolution:** Comparing Qwen3 (newer) to Qwen2.5 (older) shows a clear generational improvement on this task in both learning speed and final performance: Qwen3 crosses 0.8 around step 30 and plateaus at ~0.90 to ~0.93, while Qwen2.5 plateaus at ~0.70 to ~0.75.
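One rough way to quantify the "learning speed" comparison is to estimate the step at which each curve first reaches a common accuracy threshold. The sketch below assumes linear interpolation between the approximate readings from the Detailed Analysis; `first_step_reaching`, `QWEN3`, and `QWEN25` are hypothetical names, and plateau ranges are collapsed to midpoints.

```python
# Approximate (step, accuracy) anchors from the Detailed Analysis.
# Assumption: plateau ranges are collapsed to their midpoints.
QWEN3 = [(0, 0.28), (30, 0.80), (50, 0.915)]
QWEN25 = [(0, 0.18), (40, 0.60), (60, 0.725)]


def first_step_reaching(points, threshold):
    """Estimate the first step at which accuracy reaches `threshold`.

    Uses linear interpolation between consecutive anchors and returns None
    if the threshold is never reached within the listed points.
    """
    if points[0][1] >= threshold:
        return float(points[0][0])
    for (s0, a0), (s1, a1) in zip(points, points[1:]):
        if a0 < threshold <= a1:
            return s0 + (threshold - a0) * (s1 - s0) / (a1 - a0)
    return None


if __name__ == "__main__":
    for label, pts in (("Qwen3", QWEN3), ("Qwen2.5", QWEN25)):
        print(label, first_step_reaching(pts, 0.6))
```

Under these assumptions, Qwen3 reaches 0.6 accuracy at roughly step 18, while Qwen2.5 reaches it around step 40 and never reaches 0.8. The exact steps depend on the interpolation and should be read as indicative only.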

**Language Note:** All text in the image is in English. No other languages are present.