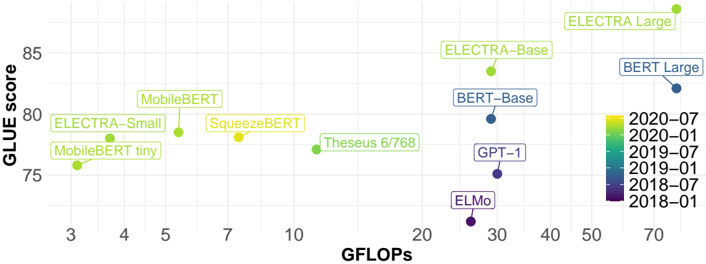

## Scatter Plot: GLUE Score vs. GFLOPS for Various Language Models

### Overview

The image is a scatter plot comparing the GLUE (General Language Understanding Evaluation) score of various language models against their computational cost in GFLOPS. Although the axis label reads GFLOPS, the quantity is most plausibly a count of floating-point operations (in billions) needed to run the model, not a per-second rate. The data points are color-coded by the year and month of the model's release, ranging from 2018-01 to 2020-07. The plot illustrates the trade-off between model performance (GLUE score) and computational cost (GFLOPS).

### Components/Axes

* **X-axis:** GFLOPS (billions of floating-point operations). Tick marks at 3, 4, 5, 7, 10, 20, 30, 40, 50, and 70; the uneven spacing of the tick values suggests a logarithmic scale.

* **Y-axis:** GLUE score. Tick marks at 75, 80, and 85. The plotted points extend slightly beyond the labeled ticks, from roughly 72 (ELMo) to roughly 86 (ELECTRA Large).

* **Data Points:** Each data point represents a language model. The position of the point indicates its GFLOPS and GLUE score.

* **Labels:** Each data point is labeled with the name of the language model.

* **Legend:** Located on the right side of the plot. The color gradient represents the release date of the models, ranging from dark purple (2018-01) to bright yellow (2020-07). The legend entries are:

    * 2020-07 (Yellow)

    * 2020-01 (Light Green)

    * 2019-07 (Green)

    * 2019-01 (Teal)

    * 2018-07 (Blue)

    * 2018-01 (Dark Purple)
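
As a rough illustration of how a release month maps onto the legend's color gradient, the sketch below converts a `YYYY-MM` label into a fractional position between the two ends of the scale. It assumes the gradient is linear in time, which the description does not confirm; the names `SCALE_START`, `SCALE_END`, and `color_position` are hypothetical helpers introduced only for this example.

```python
from datetime import date

# Endpoints of the color scale as read from the legend.
# Assumption: the gradient is linear in calendar time between them.
SCALE_START = date(2018, 1, 1)
SCALE_END = date(2020, 7, 1)


def months_between(start, end):
    """Whole months from start to end (negative if end is earlier)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def color_position(year, month):
    """Fractional position on the color scale, clamped to [0, 1].

    0.0 corresponds to the dark purple end (2018-01),
    1.0 to the bright yellow end (2020-07).
    """
    span = months_between(SCALE_START, SCALE_END)
    offset = months_between(SCALE_START, date(year, month, 1))
    return min(1.0, max(0.0, offset / span))


# Print the position of each legend entry on the scale.
for label in ["2018-01", "2018-07", "2019-01", "2019-07", "2020-01", "2020-07"]:
    year, month = map(int, label.split("-"))
    print(label, round(color_position(year, month), 2))
```

Under this assumption the six legend entries fall at evenly spaced positions, which is consistent with the half-year steps between them.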

### Detailed Analysis

* **MobileBERT tiny:** Located at approximately (3, 76). Color is light green, corresponding to approximately 2020-01.

* **ELECTRA-Small:** Located at approximately (4, 78). Color is light green, corresponding to approximately 2020-01.

* **MobileBERT:** Located at approximately (5.5, 79). Color is light green, corresponding to approximately 2020-01.

* **SqueezeBERT:** Located at approximately (7, 78.5). Color is yellow-green, corresponding to approximately 2020-04.

* **Theseus 6/768:** Located at approximately (11, 77). Color is green, corresponding to approximately 2019-07.

* **ELMo:** Located at approximately (27, 72). Color is dark purple, corresponding to approximately 2018-01.

* **GPT-1:** Located at approximately (29, 75). Color is purple-blue, corresponding to approximately 2018-07.

* **BERT-Base:** Located at approximately (32, 79.5). Color is blue, corresponding to approximately 2018-07.

* **ELECTRA-Base:** Located at approximately (37, 83). Color is light green, corresponding to approximately 2020-01.

* **ELECTRA Large:** Located at approximately (52, 86). Color is yellow-green, corresponding to approximately 2020-04.

* **BERT Large:** Located at approximately (68, 83). Color is blue, corresponding to approximately 2018-07.
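
To make the cost/score trade-off concrete, the following sketch records the approximate coordinates listed above and extracts the models that are not dominated, meaning no other model is both cheaper and better. The values are the approximate readings from this description, not published figures, and `READINGS` and `pareto_frontier` are hypothetical names used only for illustration.

```python
# Approximate (GFLOPS, GLUE) readings transcribed from the list above.
# These are eyeballed positions, not exact published numbers.
READINGS = {
    "MobileBERT tiny": (3.0, 76.0),
    "ELECTRA-Small": (4.0, 78.0),
    "MobileBERT": (5.5, 79.0),
    "SqueezeBERT": (7.0, 78.5),
    "Theseus 6/768": (11.0, 77.0),
    "ELMo": (27.0, 72.0),
    "GPT-1": (29.0, 75.0),
    "BERT-Base": (32.0, 79.5),
    "ELECTRA-Base": (37.0, 83.0),
    "ELECTRA Large": (52.0, 86.0),
    "BERT Large": (68.0, 83.0),
}


def pareto_frontier(points):
    """Return model names on the cost/score frontier, cheapest first.

    A model is kept only if its GLUE score is strictly higher than that
    of every model with the same or lower GFLOPS.
    """
    frontier = []
    best_glue = float("-inf")
    # Sort by cost ascending; on equal cost, visit the higher score first.
    ordered = sorted(points.items(), key=lambda kv: (kv[1][0], -kv[1][1]))
    for name, (_gflops, glue) in ordered:
        if glue > best_glue:
            frontier.append(name)
            best_glue = glue
    return frontier


print(pareto_frontier(READINGS))
```

Because the inputs are approximate, membership on the frontier for closely spaced points (for example MobileBERT versus BERT-Base) should be read as indicative only.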

### Key Observations

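* GLUE score generally increases with GFLOPS within a model family (for example, from ELECTRA-Small to ELECTRA Large), but the trend is not uniform across the plot: ELMo (approximately 27 GFLOPS, 72) and GPT-1 (approximately 29 GFLOPS, 75) score below MobileBERT tiny (approximately 3 GFLOPS, 76).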

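* Models released later (closer to 2020-07) tend to have higher GLUE scores for a given GFLOPS value, suggesting improvements in model efficiency over time. For example, ELECTRA-Base (approximately 37 GFLOPS, 83) scores about 3.5 points above BERT-Base (approximately 32 GFLOPS, 79.5) at a similar cost, and matches BERT Large (approximately 68 GFLOPS, 83) at roughly half the cost.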

* The ELECTRA models (Small, Base, and Large) show a clear progression in both GFLOPS and GLUE score.

* The BERT models (Base and Large) also show a progression, but they are older than the ELECTRA models.

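* MobileBERT and SqueezeBERT are designed for efficiency: MobileBERT (approximately 5.5 GFLOPS, 79) and SqueezeBERT (approximately 7 GFLOPS, 78.5) come within about one point of BERT-Base (approximately 32 GFLOPS, 79.5) at roughly a fifth of the cost.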
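
One way to check how strong the overall cost/score trend is would be a Spearman rank correlation over the approximate coordinates given under Detailed Analysis. The sketch below is a minimal standard-library version, assuming those eyeballed readings; `average_ranks` and `spearman` are hypothetical helper names, and the result inherits the imprecision of the readings.

```python
from math import sqrt

# Approximate (GFLOPS, GLUE) readings, in the order listed under
# Detailed Analysis. Eyeballed values, not exact published numbers.
READINGS = [
    (3.0, 76.0), (4.0, 78.0), (5.5, 79.0), (7.0, 78.5), (11.0, 77.0),
    (27.0, 72.0), (29.0, 75.0), (32.0, 79.5), (37.0, 83.0),
    (52.0, 86.0), (68.0, 83.0),
]


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / sqrt(var_x * var_y)


gflops = [g for g, _ in READINGS]
glue = [s for _, s in READINGS]
print(f"Spearman rank correlation: {spearman(gflops, glue):.2f}")
```

With these readings the correlation comes out positive but well below 1, which fits the picture of a trend that holds within model families while older, costlier models such as ELMo and GPT-1 fall below it.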

### Interpretation

The scatter plot shows the trade-off between model performance (GLUE score) and computational cost (GFLOPS). The color-coding by release date indicates that efficiency has improved over time: newer models tend to reach a higher GLUE score at a given GFLOPS value, which is consistent with advances in model architecture and training techniques. Compact models such as MobileBERT and SqueezeBERT illustrate this at the low-cost end of the plot, reaching GLUE scores close to that of BERT-Base with far fewer operations. Overall, the plot suggests that progress in NLP over this period came from better efficiency as well as from larger models.