TECHNICAL ASSET FINGERPRINT

74ab283729b353c5805f0968

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

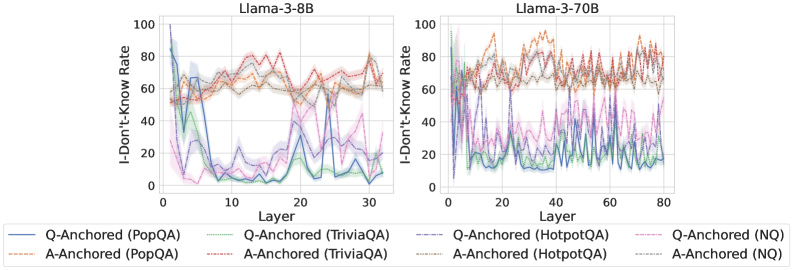

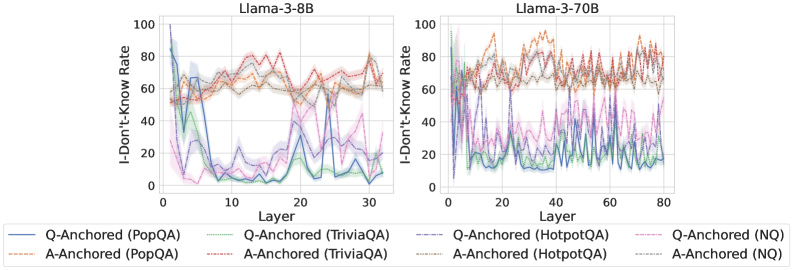

## Chart Type: Line Graphs Comparing "I-Don't-Know" Rate

### Overview

The image presents two line graphs side-by-side, comparing the "I-Don't-Know" rate across different layers of two language models: Llama-3-8B (left) and Llama-3-70B (right). Each graph plots the "I-Don't-Know" rate (y-axis) against the layer number (x-axis) for various question-answering datasets (PopQA, TriviaQA, HotpotQA, and NQ), with both question-anchored (Q-Anchored) and answer-anchored (A-Anchored) approaches. The shaded regions around each line represent the uncertainty or variance in the data.
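The description does not say how the shaded regions were computed. As a minimal sketch, assuming they show the mean plus or minus one standard deviation across repeated runs, a band could be derived as below. The function name `shaded_band`, the `width` parameter, and the sample values are hypothetical and are not read from the figure.

```python
from statistics import mean, pstdev


def shaded_band(runs, width=1.0):
    """Return (mean, lower, upper) per layer for a list of per-run curves.

    runs:  list of equal-length lists, one I-Don't-Know rate (0-100) per layer.
    width: number of standard deviations covered by the band (an assumption).
    """
    if not runs or len({len(r) for r in runs}) != 1:
        raise ValueError("runs must be non-empty and of equal length")
    band = []
    for layer_values in zip(*runs):
        m = mean(layer_values)
        s = pstdev(layer_values)
        # Clip to the 0-100 range used on the y-axis.
        band.append((m, max(0.0, m - width * s), min(100.0, m + width * s)))
    return band


# Illustrative values only, not taken from the chart.
runs = [[90.0, 40.0, 10.0], [80.0, 50.0, 20.0]]
band = shaded_band(runs)
```

Each tuple in `band` gives the centre line and the lower and upper edges of the shaded region for one layer.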

### Components/Axes

* **Titles:**

    * Left Graph: "Llama-3-8B"

    * Right Graph: "Llama-3-70B"

* **Y-Axis:**

    * Label: "I-Don't-Know Rate"

    * Scale: 0 to 100, with tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis:**

    * Label: "Layer"

    * Left Graph Scale: 0 to 30, with tick marks every 10 units.

    * Right Graph Scale: 0 to 80, with tick marks every 20 units.

* **Legend:** Located at the bottom of the image, describing the lines:

    * Blue solid line: "Q-Anchored (PopQA)"

    * Brown dashed line: "A-Anchored (PopQA)"

    * Green dotted line: "Q-Anchored (TriviaQA)"

    * Brown dotted line: "A-Anchored (TriviaQA)"

    * Red dashed line: "Q-Anchored (HotpotQA)"

    * Brown dotted line: "A-Anchored (HotpotQA)"

    * Purple dotted line: "Q-Anchored (NQ)"

    * Brown dotted line: "A-Anchored (NQ)"

### Detailed Analysis

#### Llama-3-8B (Left Graph)

* **Q-Anchored (PopQA) (Blue solid line):** Starts at approximately 85-90% at layer 0, drops sharply to around 5-10% by layer 10, and then fluctuates between 5% and 30% for the remaining layers.

* **A-Anchored (PopQA) (Brown dashed line):** Starts at approximately 50% at layer 0, rises to around 60-70% and remains relatively stable with minor fluctuations.

* **Q-Anchored (TriviaQA) (Green dotted line):** Starts at approximately 60% at layer 0, drops to around 5-10% by layer 10, and then fluctuates between 5% and 20% for the remaining layers.

* **A-Anchored (TriviaQA) (Brown dotted line):** Starts at approximately 50% at layer 0, rises to around 60-70% and remains relatively stable with minor fluctuations.

* **Q-Anchored (HotpotQA) (Red dashed line):** Starts at approximately 60% at layer 0, rises to around 70-80% and remains relatively stable with minor fluctuations.

* **A-Anchored (HotpotQA) (Brown dotted line):** Starts at approximately 50% at layer 0, rises to around 60-70% and remains relatively stable with minor fluctuations.

* **Q-Anchored (NQ) (Purple dotted line):** Starts at approximately 60% at layer 0, drops to around 5-10% by layer 10, and then fluctuates between 5% and 20% for the remaining layers.

* **A-Anchored (NQ) (Brown dotted line):** Starts at approximately 50% at layer 0, rises to around 60-70% and remains relatively stable with minor fluctuations.

#### Llama-3-70B (Right Graph)

* **Q-Anchored (PopQA) (Blue solid line):** Starts at approximately 20% at layer 0, fluctuates between 10% and 40% for the remaining layers.

* **A-Anchored (PopQA) (Brown dashed line):** Starts at approximately 70% at layer 0, fluctuates between 60% and 90% for the remaining layers.

* **Q-Anchored (TriviaQA) (Green dotted line):** Starts at approximately 20% at layer 0, fluctuates between 10% and 30% for the remaining layers.

* **A-Anchored (TriviaQA) (Brown dotted line):** Starts at approximately 70% at layer 0, fluctuates between 60% and 80% for the remaining layers.

* **Q-Anchored (HotpotQA) (Red dashed line):** Starts at approximately 70% at layer 0, fluctuates between 60% and 90% for the remaining layers.

* **A-Anchored (HotpotQA) (Brown dotted line):** Starts at approximately 70% at layer 0, fluctuates between 60% and 80% for the remaining layers.

* **Q-Anchored (NQ) (Purple dotted line):** Starts at approximately 40% at layer 0, fluctuates between 20% and 50% for the remaining layers.

* **A-Anchored (NQ) (Brown dotted line):** Starts at approximately 70% at layer 0, fluctuates between 60% and 80% for the remaining layers.

### Key Observations

* For Llama-3-8B, the Q-Anchored approach for PopQA, TriviaQA, and NQ datasets shows a significant drop in the "I-Don't-Know" rate in the initial layers, while the A-Anchored approach remains relatively stable.

* For Llama-3-70B, the "I-Don't-Know" rates fluctuate more across layers for all datasets and anchoring methods compared to Llama-3-8B.

* The "I-Don't-Know" rate is generally higher for A-Anchored methods compared to Q-Anchored methods, especially for PopQA, TriviaQA, and NQ datasets in Llama-3-8B.

* HotpotQA consistently shows a higher "I-Don't-Know" rate compared to other datasets for both models and anchoring methods.
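The "significant drop in the initial layers" noted above can be made concrete by locating the first layer at which a curve falls below a chosen threshold. The following is a small sketch under that assumption. The helper name `first_layer_below`, the threshold of 20, and the sample curve are hypothetical and are not values extracted from the graphs.

```python
def first_layer_below(rates, threshold):
    """Return the index of the first layer whose rate is below threshold.

    rates: list of I-Don't-Know rates (0-100), one per layer.
    Returns None if the curve never drops below the threshold.
    """
    for layer, rate in enumerate(rates):
        if rate < threshold:
            return layer
    return None


# Illustrative curve only: high at layer 0, then a sharp early drop.
q_anchored = [88.0, 60.0, 35.0, 12.0, 9.0, 18.0]
drop_layer = first_layer_below(q_anchored, threshold=20.0)

# A curve that stays high never crosses the threshold.
a_anchored = [50.0, 62.0, 66.0, 64.0, 68.0, 65.0]
no_drop = first_layer_below(a_anchored, threshold=20.0)
```

Applied to each curve, this would separate lines that drop early from lines that stay elevated throughout.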

### Interpretation

The graphs illustrate how the "I-Don't-Know" rate varies across different layers of the Llama-3-8B and Llama-3-70B language models when answering questions from various datasets using question-anchored (Q-Anchored) and answer-anchored (A-Anchored) approaches.

The sharp drop in the "I-Don't-Know" rate for Q-Anchored methods in Llama-3-8B suggests that, with the question as the anchor, the model resolves questions from the PopQA, TriviaQA, and NQ datasets within roughly the first 10 layers. The relatively stable "I-Don't-Know" rate for A-Anchored methods indicates that the model may find it more challenging to answer questions when starting from the answer.

The higher "I-Don't-Know" rates and greater fluctuations in Llama-3-70B suggest that this larger model may be more sensitive to the specific layer and anchoring method used. The consistently high "I-Don't-Know" rate for HotpotQA indicates that this dataset may contain more complex or ambiguous questions that the models struggle to answer.

The differences in "I-Don't-Know" rates between the two models and across datasets and anchoring methods highlight the importance of carefully selecting the appropriate model, dataset, and anchoring method for a given question-answering task. The data suggests that smaller models may be more efficient for certain tasks, while larger models may be necessary for more complex questions.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: I-Don't-Know Rate vs. Layer for Llama Models

### Overview

The image presents two line charts, side-by-side, displaying the "I-Don't-Know Rate" against the "Layer" number for two different Llama models: Llama-3-8B and Llama-3-70B. Each chart contains multiple lines representing different question-answering datasets and anchoring methods. The charts aim to visualize how the rate of the model responding with "I-Don't-Know" changes as the model's layer depth increases.
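The write-up treats the "I-Don't-Know Rate" as the percentage of responses in which the model says it does not know. A minimal sketch of that calculation follows, assuming responses are available as plain strings per layer and that a simple substring match identifies an "I don't know" answer. The function names, the `marker` string, and the sample responses are all hypothetical; the source does not describe how the rate was actually measured.

```python
def idk_rate(responses, marker="i don't know"):
    """Percentage (0-100) of responses containing the marker phrase."""
    if not responses:
        raise ValueError("responses must not be empty")
    hits = sum(marker in r.lower() for r in responses)
    return 100.0 * hits / len(responses)


def idk_rate_by_layer(responses_by_layer):
    """Apply idk_rate to each layer's list of responses."""
    return [idk_rate(responses) for responses in responses_by_layer]


# Illustrative responses only: two layers, four questions each.
responses_by_layer = [
    ["I don't know", "I don't know.", "Paris", "i don't know"],
    ["Paris", "I don't know", "Rome", "Oslo"],
]
rates = idk_rate_by_layer(responses_by_layer)
```

The resulting list has one value per layer, which is the quantity plotted on the y-axis against the layer index.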

### Components/Axes

* **X-axis:** "Layer" - Ranges from 0 to 30 for Llama-3-8B and 0 to 80 for Llama-3-70B. The axis is linearly scaled with gridlines.

* **Y-axis:** "I-Don't-Know Rate" - Ranges from 0 to 100, representing the percentage of times the model responds with "I-Don't-Know". The axis is linearly scaled with gridlines.

* **Title (Left Chart):** "Llama-3-8B"

* **Title (Right Chart):** "Llama-3-70B"

* **Legend:** Located at the bottom of the image, spanning both charts. It identifies the different lines by dataset and anchoring method.

    * Q-Anchored (PopQA) - Blue solid line

    * A-Anchored (PopQA) - Orange dashed line

    * Q-Anchored (TriviaQA) - Purple solid line

    * A-Anchored (TriviaQA) - Brown dashed line

    * Q-Anchored (HotpotQA) - Green solid line

    * A-Anchored (HotpotQA) - Red dashed line

    * Q-Anchored (NQ) - Cyan solid line

    * A-Anchored (NQ) - Magenta dashed line

### Detailed Analysis or Content Details

**Llama-3-8B (Left Chart):**

* **Q-Anchored (PopQA):** Starts at approximately 80, rapidly decreases to around 10-15 by layer 5, then fluctuates between 10 and 25 until layer 30.

* **A-Anchored (PopQA):** Starts at approximately 80, decreases to around 50 by layer 5, then fluctuates between 50 and 70 until layer 30.

* **Q-Anchored (TriviaQA):** Starts at approximately 80, decreases to around 20 by layer 5, then fluctuates between 20 and 35 until layer 30.

* **A-Anchored (TriviaQA):** Starts at approximately 80, decreases to around 60 by layer 5, then fluctuates between 60 and 75 until layer 30.

* **Q-Anchored (HotpotQA):** Starts at approximately 80, decreases to around 10 by layer 5, then fluctuates between 10 and 20 until layer 30.

* **A-Anchored (HotpotQA):** Starts at approximately 80, decreases to around 50 by layer 5, then fluctuates between 50 and 70 until layer 30.

* **Q-Anchored (NQ):** Starts at approximately 80, decreases to around 10 by layer 5, then fluctuates between 10 and 20 until layer 30.

* **A-Anchored (NQ):** Starts at approximately 80, decreases to around 50 by layer 5, then fluctuates between 50 and 70 until layer 30.

**Llama-3-70B (Right Chart):**

* **Q-Anchored (PopQA):** Starts at approximately 80, decreases to around 20 by layer 10, then fluctuates between 20 and 40 until layer 80.

* **A-Anchored (PopQA):** Starts at approximately 80, decreases to around 50 by layer 10, then fluctuates between 50 and 70 until layer 80.

* **Q-Anchored (TriviaQA):** Starts at approximately 80, decreases to around 30 by layer 10, then fluctuates between 30 and 50 until layer 80.

* **A-Anchored (TriviaQA):** Starts at approximately 80, decreases to around 60 by layer 10, then fluctuates between 60 and 80 until layer 80.

* **Q-Anchored (HotpotQA):** Starts at approximately 80, decreases to around 20 by layer 10, then fluctuates between 20 and 40 until layer 80.

* **A-Anchored (HotpotQA):** Starts at approximately 80, decreases to around 50 by layer 10, then fluctuates between 50 and 70 until layer 80.

* **Q-Anchored (NQ):** Starts at approximately 80, decreases to around 20 by layer 10, then fluctuates between 20 and 40 until layer 80.

* **A-Anchored (NQ):** Starts at approximately 80, decreases to around 50 by layer 10, then fluctuates between 50 and 70 until layer 80.

### Key Observations

* Both models exhibit a significant drop in "I-Don't-Know Rate" in the initial layers (0-5).

* The "I-Don't-Know Rate" generally stabilizes after the initial drop, fluctuating within a certain range for the remaining layers.

* "A-Anchored" methods consistently show higher "I-Don't-Know Rates" than "Q-Anchored" methods across all datasets for both models.

* The 70B model generally exhibits a lower "I-Don't-Know Rate" than the 8B model, especially in the later layers.

* The PopQA, TriviaQA, HotpotQA, and NQ datasets all show similar trends, though the specific values differ.
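The claim that A-Anchored curves sit above Q-Anchored curves can be checked numerically by taking the per-layer difference between the two. The sketch below assumes both curves are sampled at the same layers. The name `anchoring_gap` and the sample values are hypothetical and only approximate the shape described above.

```python
def anchoring_gap(q_rates, a_rates):
    """Return (mean gap, whether A exceeds Q at every layer).

    q_rates, a_rates: I-Don't-Know rates (0-100) at the same layers.
    The gap at each layer is the A-Anchored rate minus the Q-Anchored rate.
    """
    if not q_rates or len(q_rates) != len(a_rates):
        raise ValueError("curves must be non-empty and of equal length")
    gaps = [a - q for q, a in zip(q_rates, a_rates)]
    return sum(gaps) / len(gaps), all(g > 0 for g in gaps)


# Illustrative values only, not read from the chart.
q_rates = [70.0, 20.0, 15.0, 25.0]
a_rates = [75.0, 60.0, 65.0, 70.0]
mean_gap, a_always_higher = anchoring_gap(q_rates, a_rates)
```

A positive mean gap together with `a_always_higher` being true would correspond to the "consistently higher" pattern stated in the observations.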

### Interpretation

The charts show that the "I-Don't-Know Rate" falls sharply over the first few layers of each model. Note that the x-axis is the layer index within a fixed model, not a count of layers added to it. This suggests that the early layers are crucial for retrieving basic knowledge and reducing uncertainty. Beyond that point, deeper layers do not significantly decrease the "I-Don't-Know Rate," indicating that the effect plateaus.

The consistent difference between "Q-Anchored" and "A-Anchored" methods suggests that the way questions are anchored (whether based on the question itself or the answer) influences the model's confidence. "A-Anchored" methods, which likely rely more on the provided answer context, result in a higher "I-Don't-Know Rate," potentially because they are more sensitive to ambiguous or incomplete information.

The lower "I-Don't-Know Rate" for the 70B model compared to the 8B model highlights the benefits of scaling model size. Larger models are better able to generalize and provide answers, even when faced with challenging or ambiguous questions. The fluctuations in the "I-Don't-Know Rate" after the initial drop could be due to the complexity of the datasets and the inherent limitations of the models. The datasets themselves may contain questions that are genuinely difficult or require specialized knowledge, leading to increased uncertainty.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: I-Don't-Know Rate vs. Layer for Llama-3 Models

### Overview

The image displays two side-by-side line charts comparing the "I-Don't-Know Rate" across the layers of two different-sized language models: Llama-3-8B (left) and Llama-3-70B (right). The charts analyze model uncertainty on four question-answering (QA) datasets under two different prompting conditions ("Q-Anchored" and "A-Anchored").

### Components/Axes

* **Chart Titles:** "Llama-3-8B" (left chart), "Llama-3-70B" (right chart).

* **Y-Axis (Both Charts):** Label: "I-Don't-Know Rate". Scale: 0 to 100, with major tick marks at 0, 20, 40, 60, 80, 100.

* **X-Axis (Left Chart - Llama-3-8B):** Label: "Layer". Scale: 0 to 30, with major tick marks at 0, 10, 20, 30.

* **X-Axis (Right Chart - Llama-3-70B):** Label: "Layer". Scale: 0 to 80, with major tick marks at 0, 20, 40, 60, 80.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, differentiating lines by color and style (solid vs. dashed).

| Style | Color | Dataset | Condition |
| :--- | :--- | :--- | :--- |
| Solid | Blue | PopQA | Q-Anchored |
| Solid | Green | TriviaQA | Q-Anchored |
| Solid | Purple | HotpotQA | Q-Anchored |
| Solid | Pink | NQ | Q-Anchored |
| Dashed | Orange | PopQA | A-Anchored |
| Dashed | Red | TriviaQA | A-Anchored |
| Dashed | Brown | HotpotQA | A-Anchored |
| Dashed | Gray | NQ | A-Anchored |

### Detailed Analysis

**Chart 1: Llama-3-8B (Left)**

* **Q-Anchored Lines (Solid):** All four solid lines exhibit a similar, dramatic trend. They start at a very high I-Don't-Know Rate (approximately 80-95) at Layer 0. There is a sharp, precipitous drop within the first 5 layers, falling to rates between ~5 and ~30. After this initial drop, the lines fluctuate significantly across the remaining layers (5-30), with no clear upward or downward trend, oscillating mostly between 5 and 40. The blue (PopQA) and green (TriviaQA) lines generally remain at the lower end of this range.

* **A-Anchored Lines (Dashed):** In stark contrast, the four dashed lines show a much more stable and elevated pattern. They begin at a moderate rate (approximately 50-65) at Layer 0. They show a slight, gradual increase over the first ~15 layers, peaking around 70-80. From layer 15 to 30, they fluctuate but remain consistently high, mostly between 60 and 80. The orange (PopQA) and red (TriviaQA) dashed lines are often the highest.

**Chart 2: Llama-3-70B (Right)**

* **Q-Anchored Lines (Solid):** The pattern is more volatile than in the 8B model. The lines start high (70-100) at Layer 0 and drop sharply within the first 10 layers, similar to the 8B model. However, the subsequent fluctuations are more extreme and frequent across the entire 80-layer span. The rates frequently spike and dip between ~5 and ~50. The purple (HotpotQA) line shows particularly high volatility.

* **A-Anchored Lines (Dashed):** These lines also start high (60-90) and remain elevated throughout. They exhibit significant volatility, with frequent peaks and troughs across all layers, generally staying within the 50-90 range. The orange (PopQA) and red (TriviaQA) dashed lines again frequently register the highest rates, often peaking above 80.

### Key Observations

1. **Anchoring Effect:** The most prominent pattern is the stark difference between Q-Anchored (solid) and A-Anchored (dashed) conditions. A-Anchored prompting consistently results in a much higher "I-Don't-Know Rate" across all layers for both model sizes.

2. **Early Layer Drop:** Both models show a dramatic decrease in uncertainty (I-Don't-Know Rate) for Q-Anchored prompts within the first 5-10 layers.

3. **Model Size & Volatility:** The larger Llama-3-70B model exhibits greater volatility in its uncertainty rates across deeper layers compared to the 8B model, for both prompting conditions.

4. **Dataset Variation:** PopQA (blue/orange) and TriviaQA (green/red) often represent the extremes within their respective line groups (Q-Anchored or A-Anchored).
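The volatility comparison in observation 3 can be quantified in several ways. One simple option, used here purely as an assumption, is the mean absolute change between consecutive layers after the initial drop. The function name `volatility`, the `start` parameter, and the sample curves are hypothetical.

```python
def volatility(rates, start=0):
    """Mean absolute layer-to-layer change from layer `start` onward.

    rates: I-Don't-Know rates (0-100), one per layer.
    start: first layer to include, e.g. to skip the initial drop.
    """
    tail = rates[start:]
    if len(tail) < 2:
        raise ValueError("need at least two layers after start")
    steps = [abs(b - a) for a, b in zip(tail, tail[1:])]
    return sum(steps) / len(steps)


# Illustrative curves only: both drop after layer 0, one then wobbles more.
smaller_model = [90.0, 20.0, 22.0, 18.0, 21.0]
larger_model = [90.0, 20.0, 40.0, 10.0, 35.0]

v_small = volatility(smaller_model, start=1)
v_large = volatility(larger_model, start=1)
```

Skipping the first layers with `start` keeps the early drop from dominating the measure, so the comparison reflects only the later fluctuations.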

### Interpretation

This data suggests that the method of prompting ("anchoring") has a profound and consistent impact on a model's expressed uncertainty, more so than the specific layer or even the model size. "A-Anchored" prompts (likely providing an answer anchor) lead the model to express high uncertainty ("I-Don't-Know") throughout its processing layers. Conversely, "Q-Anchored" prompts (likely providing only a question anchor) cause the model to rapidly reduce its expressed uncertainty in the early layers, after which uncertainty fluctuates at a lower level.

The increased volatility in the larger 70B model might indicate more complex internal processing or specialization across its many layers. The early-layer drop in the Q-Anchored condition could represent a phase where the model quickly commits to a knowledge retrieval pathway, reducing its initial "I don't know" stance. The persistent high rate in the A-Anchored condition is counter-intuitive and warrants investigation—it may reflect a failure mode where providing an answer context somehow triggers a more conservative, "I don't know" response strategy. The chart effectively visualizes how model behavior and self-assessed confidence are not static but evolve dynamically through the network's depth and are highly sensitive to input framing.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: I-Don't-Know Rate Across Layers for Llama-3-8B and Llama-3-70B Models

### Overview

The image contains two line graphs comparing the "I-Don't-Know Rate" (IDK Rate) across transformer layers for two Llama-3 variants: 8B (8B parameters) and 70B (70B parameters). The graphs visualize performance across four datasets: PopQA, TriviaQA, HotpotQA, and NQ, differentiated by Q-Anchored (question-focused) and A-Anchored (answer-focused) configurations. Data is presented with shaded confidence intervals.

### Components/Axes

- **X-Axis (Layer)**:

    - Llama-3-8B: 0–30 layers

    - Llama-3-70B: 0–80 layers

- **Y-Axis (I-Don't-Know Rate)**: 0–100% scale

- **Legend**:

    - Position: Bottom center

    - Entries:

        - **Q-Anchored (PopQA)**: Solid blue

        - **A-Anchored (PopQA)**: Dashed orange

        - **Q-Anchored (TriviaQA)**: Dotted green

        - **A-Anchored (TriviaQA)**: Dash-dot purple

        - **Q-Anchored (HotpotQA)**: Solid red

        - **A-Anchored (HotpotQA)**: Dotted pink

        - **Q-Anchored (NQ)**: Dashed gray

        - **A-Anchored (NQ)**: Dotted cyan

### Detailed Analysis

#### Llama-3-8B (Left Graph)

- **Q-Anchored (PopQA)**: Starts at ~95% IDK in layer 0, drops sharply to ~10% by layer 10, then fluctuates between 10–30%.

- **A-Anchored (PopQA)**: Starts at ~60%, dips to ~20% by layer 15, then stabilizes near 30–40%.

- **Q-Anchored (TriviaQA)**: Peaks at ~80% in layer 5, drops to ~10% by layer 20, then oscillates between 10–40%.

- **A-Anchored (TriviaQA)**: Starts at ~50%, rises to ~70% by layer 10, then declines to ~30% by layer 30.

- **Q-Anchored (HotpotQA)**: Begins at ~70%, spikes to ~90% in layer 5, then stabilizes at 40–60%.

- **A-Anchored (HotpotQA)**: Starts at ~50%, rises to ~80% by layer 10, then declines to ~40%.

- **Q-Anchored (NQ)**: Starts at ~85%, drops to ~20% by layer 10, then fluctuates between 10–30%.

- **A-Anchored (NQ)**: Starts at ~60%, rises to ~80% by layer 15, then declines to ~40%.

#### Llama-3-70B (Right Graph)

- **Q-Anchored (PopQA)**: Starts at ~90%, drops to ~15% by layer 20, then fluctuates between 10–30%.

- **A-Anchored (PopQA)**: Starts at ~55%, rises to ~70% by layer 40, then declines to ~50%.

- **Q-Anchored (TriviaQA)**: Peaks at ~85% in layer 10, drops to ~20% by layer 40, then oscillates between 10–40%.

- **A-Anchored (TriviaQA)**: Starts at ~45%, rises to ~75% by layer 30, then declines to ~50%.

- **Q-Anchored (HotpotQA)**: Begins at ~65%, spikes to ~95% in layer 20, then stabilizes at 50–70%.

- **A-Anchored (HotpotQA)**: Starts at ~50%, rises to ~85% by layer 50, then declines to ~60%.

- **Q-Anchored (NQ)**: Starts at ~80%, drops to ~10% by layer 30, then fluctuates between 5–25%.

- **A-Anchored (NQ)**: Starts at ~55%, rises to ~80% by layer 60, then declines to ~50%.

### Key Observations

1. **Model Size Impact**: Llama-3-70B shows more pronounced fluctuations in IDK rates compared to Llama-3-8B, suggesting larger models may struggle more with certain datasets in specific layers.

2. **Dataset Variability**:

    - **HotpotQA** consistently shows the highest IDK rates, especially in Q-Anchored configurations.

    - **NQ** exhibits the most dramatic drops in IDK rates for Q-Anchored models.

3. **Anchoring Effects**:

    - Q-Anchored models generally show steeper initial drops in IDK rates but higher volatility in later layers.

    - A-Anchored models maintain higher IDK rates in mid-layers (e.g., layers 20–50 for Llama-3-70B).

4. **Confidence Intervals**: Shaded regions indicate uncertainty, with wider bands in Llama-3-70B, particularly for TriviaQA and HotpotQA.
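The dataset comparison above (for example, HotpotQA showing the highest IDK rates) amounts to ranking curves by their average level. A minimal sketch of such a ranking follows, assuming each dataset's curve is available as a list of per-layer rates. The helper `rank_by_mean_rate` and the numbers are hypothetical and chosen only to mirror the ordering described, not measured from the graphs.

```python
def rank_by_mean_rate(curves):
    """Rank datasets by mean I-Don't-Know rate, highest first.

    curves: dict mapping dataset name to a non-empty list of rates (0-100).
    Returns a list of (name, mean_rate) tuples.
    """
    means = {name: sum(values) / len(values) for name, values in curves.items()}
    return sorted(means.items(), key=lambda item: item[1], reverse=True)


# Illustrative Q-Anchored curves only, three layers each.
curves = {
    "PopQA": [90.0, 10.0, 20.0],
    "HotpotQA": [70.0, 90.0, 50.0],
    "NQ": [80.0, 20.0, 14.0],
}
ranking = rank_by_mean_rate(curves)
```

A mean over all layers hides where in the network the differences arise, so a per-layer comparison would be needed to support statements about specific layer ranges.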

### Interpretation

The data suggests that:

- **Q-Anchored models** (question-focused) may prioritize early-layer processing for certain datasets (e.g., PopQA, NQ), while **A-Anchored models** (answer-focused) show delayed but sustained IDK rates in mid-layers.

- The **HotpotQA dataset** poses the greatest challenge, with IDK rates exceeding 80% in multiple layers for both model sizes.

- Llama-3-70B’s larger layer count (80 vs. 32) correlates with more complex IDK patterns, potentially reflecting deeper contextual analysis but also greater uncertainty in specific layers.

- The **NQ dataset** demonstrates the most effective Q-Anchored performance, with IDK rates dropping below 20% in later layers for Llama-3-8B.

This analysis highlights trade-offs between model scale, anchoring strategies, and dataset-specific challenges in knowledge retrieval tasks.
