TECHNICAL ASSET FINGERPRINT

74ab45407877b8099a65cba0

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

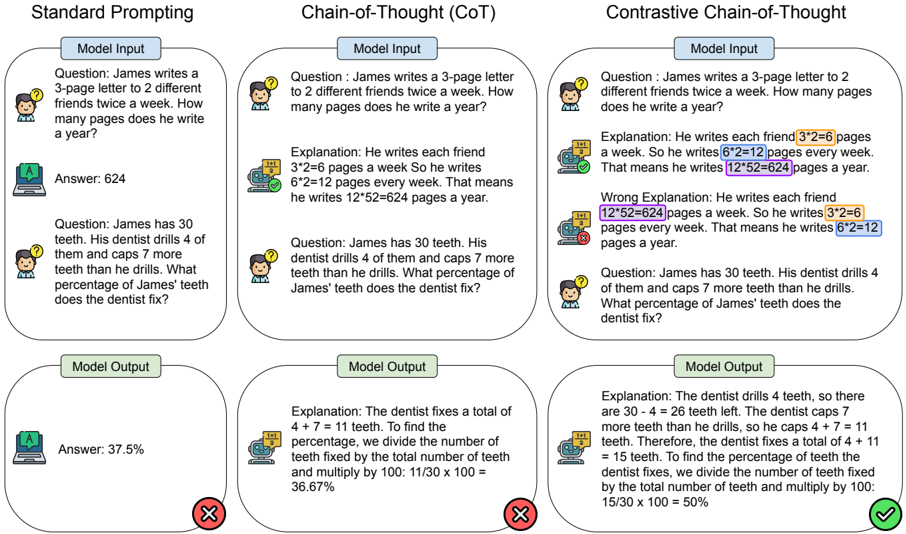

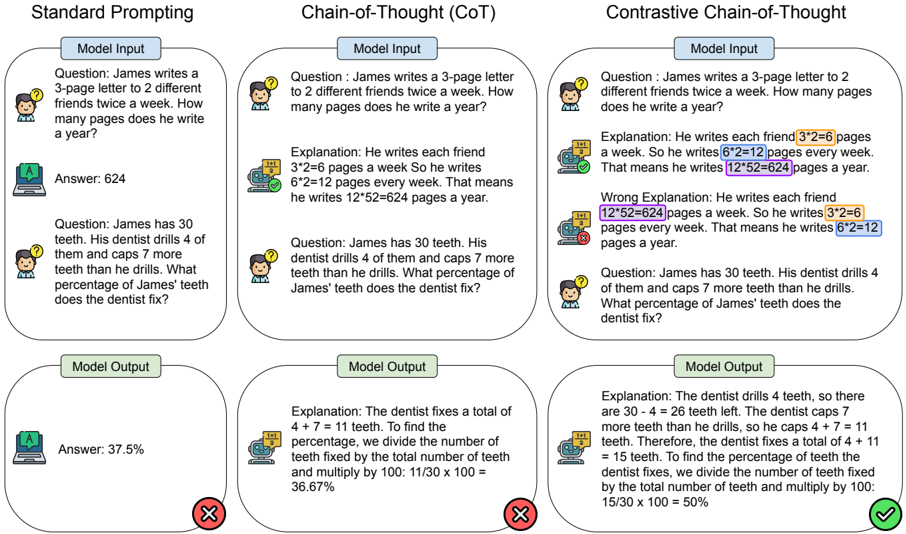

## Chart/Diagram Type: Model Comparison

### Overview

The image presents a comparison of three different prompting methods for a language model: Standard Prompting, Chain-of-Thought (CoT), and Contrastive Chain-of-Thought. Each method is evaluated on two questions. The image shows the model input, the model's reasoning (if any), and the model output, indicating whether the answer is correct or incorrect.

### Components/Axes

* **Titles:**

* Standard Prompting (top-left)

* Chain-of-Thought (CoT) (top-center)

* Contrastive Chain-of-Thought (top-right)

* **Sections (for each method):**

* Model Input (top)

* Model Output (bottom)

* **Elements within each section:**

* Question (text)

* Answer/Explanation (text)

* Correctness indicator (green checkmark or red X)

### Detailed Analysis

**1. Standard Prompting:**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* Answer: 624

* Correctness: Correct (implied, no explicit indicator)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James' teeth does the dentist fix?"

* Answer: 37.5%

* Correctness: Incorrect (Red X)

**2. Chain-of-Thought (CoT):**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* Explanation: "He writes each friend 3\*2=6 pages a week. So he writes 6\*2=12 pages every week. That means he writes 12\*52=624 pages a year."

* Correctness: Correct (Green Checkmark)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James' teeth does the dentist fix?"

* Explanation: "The dentist fixes a total of 4+7 = 11 teeth. To find the percentage, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 11/30 x 100 = 36.67%"

* Correctness: Incorrect (Red X)

**3. Contrastive Chain-of-Thought:**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* Explanation: "He writes each friend 3\*2=6 pages a week. So he writes 6\*2=12 pages every week. That means he writes 12\*52=624 pages a year."

* Wrong Explanation: "He writes each friend 12\*52=624 pages a week. So he writes 3\*2=6 pages every week. That means he writes 6\*2=12 pages a year."

* Correctness: Correct (Green Checkmark)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James' teeth does the dentist fix?"

* Explanation: "The dentist drills 4 teeth, so there are 30 - 4 = 26 teeth left. The dentist caps 7 more teeth than he drills, so he caps 4 + 7 = 11 teeth. Therefore, the dentist fixes a total of 4 + 11 = 15 teeth. To find the percentage of teeth the dentist fixes, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 15/30 x 100 = 50%"

* Correctness: Correct (Green Checkmark)
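
The arithmetic quoted in the explanations above can be checked directly. The following is a minimal sketch that replays both word problems step by step, plus the shortcut taken in the Chain-of-Thought explanation for the second question. The variable names are illustrative and do not come from the image.

```python
# Problem 1: a 3-page letter, 2 friends, twice a week, 52 weeks a year.
pages_per_friend_per_week = 3 * 2                      # 3 pages, twice a week
pages_per_week = pages_per_friend_per_week * 2         # 2 friends
pages_per_year = pages_per_week * 52

# Problem 2: 30 teeth, 4 drilled, caps "7 more teeth than he drills".
total_teeth = 30
drilled = 4
capped = drilled + 7                                   # 11 capped teeth
fixed = drilled + capped                               # 15 teeth fixed in total
percent_fixed = fixed / total_teeth * 100

# The CoT explanation stops at 4 + 7 = 11 and treats that as the total fixed.
flawed_fixed = drilled + 7
flawed_percent = round(flawed_fixed / total_teeth * 100, 2)

print(pages_per_year, percent_fixed, flawed_percent)
```

The three printed values correspond to the 624, 50% and 36.67% figures quoted in the explanations.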

### Key Observations

* Standard prompting and Chain-of-Thought prompting both show the correct answer (624) for the first question, but both give an incorrect answer to the second (37.5% and 36.67%, respectively).

* Contrastive Chain-of-Thought is the only method that correctly answers the second question.

* The "wrong explanation" in Contrastive Chain-of-Thought highlights the model's ability to consider alternative, incorrect reasoning paths.

### Interpretation

The image demonstrates the effectiveness of Chain-of-Thought and Contrastive Chain-of-Thought prompting in improving the reasoning capabilities of language models. By providing intermediate steps and considering alternative explanations, these methods can lead to more accurate and reliable answers, especially for complex problems. The contrastive approach seems particularly effective in avoiding errors in the second question, suggesting that explicitly considering incorrect reasoning paths can help the model arrive at the correct solution.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Comparison of Prompting Techniques: Standard, CoT, and Contrastive CoT

### Overview

The image presents a comparison of three different prompting techniques for Large Language Models (LLMs): Standard Prompting, Chain-of-Thought (CoT) Prompting, and Contrastive Chain-of-Thought (Contrastive CoT) Prompting. Each technique is demonstrated with two example questions, showing the "Model Input", "Explanation" (where applicable), and "Model Output". The results are visually indicated with checkmarks (correct) and crosses (incorrect).

### Components/Axes

The image is divided into three columns, one for each prompting technique. Each column is further divided into two sections, each representing a question-answer pair. Within each section, there are three sub-sections: "Model Input", "Explanation" (for CoT and Contrastive CoT), and "Model Output". Visual cues (checkmarks and crosses) indicate the correctness of the "Model Output".

### Detailed Analysis

**Column 1: Standard Prompting**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* **Model Input:** The question is directly stated.

* **Model Output:** "Answer: 624" (marked with a checkmark)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James’ teeth does the dentist fix?"

* **Model Input:** The question is directly stated.

* **Model Output:** "Answer: 37.5%" (marked with a cross)

**Column 2: Chain-of-Thought (CoT) Prompting**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* **Model Input:** The question is directly stated.

* **Explanation:** "Explanation: He writes each friend 3\*2=6 pages a week. So he writes 6\*2=12 pages every week. That means he writes 12\*52=624 pages a year."

* **Model Output:** (No explicit output shown, but the explanation leads to the correct answer) (marked with a checkmark)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James’ teeth does the dentist fix?"

* **Model Input:** The question is directly stated.

* **Explanation:** "Explanation: The dentist fixes a total of 4 + 7 = 11 teeth. To find the percentage, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 11/30 x 100 = 36.67%"

* **Model Output:** (No explicit output shown; the explanation ends at 36.67%, which is incorrect) (marked with a cross)

**Column 3: Contrastive Chain-of-Thought (Contrastive CoT) Prompting**

* **Question 1:** "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* **Model Input:** The question is directly stated.

* **Explanation:** "Explanation: James writes each friend 3\*2=6 pages a week. So he writes 6\*2=12 pages every week. That means he writes 12\*52=624 pages a year. Wrong Explanation: He writes each friend 12\*52=624 pages a week. So he writes 3\*2=6 pages every week. That means he writes 6\*2=12 pages a year."

* **Model Output:** (No explicit output shown, but the explanation leads to the correct answer) (marked with a checkmark)

* **Question 2:** "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James’ teeth does the dentist fix?"

* **Model Input:** The question is directly stated.

* **Explanation:** "Explanation: The dentist drills 4 teeth, so there are 30 - 4 = 26 teeth left. The dentist caps 7 more teeth than he drills, so he caps 4 + 7 = 11 teeth. Therefore, the dentist fixes a total of 4 + 11 = 15 teeth. To find the percentage of teeth the dentist fixes, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 15/30 x 100 = 50%"

* **Model Output:** (No explicit output shown, but the explanation leads to the correct answer) (marked with a checkmark)

### Key Observations

* Contrastive CoT is the only technique that reaches the correct answer (50%) on the second question; all three show the correct answer (624) for the first.

* CoT Prompting fails on the second question, providing an incorrect percentage (36.67% instead of 50%).

* Contrastive CoT includes a "Wrong Explanation" alongside the correct one, demonstrating the model's ability to identify and contrast incorrect reasoning paths.

* The checkmarks and crosses clearly indicate the success rate of each prompting technique.

### Interpretation

The image demonstrates the effectiveness of different prompting techniques for LLMs. Standard prompting can work well for simple problems, but struggles with more complex reasoning. Chain-of-Thought prompting improves reasoning ability but can still lead to errors, as seen in the second question. Contrastive Chain-of-Thought prompting appears to be the most robust, as it not only provides a correct explanation but also highlights potential pitfalls in reasoning, leading to a higher success rate. The inclusion of a "Wrong Explanation" in Contrastive CoT is a key feature, allowing the model to self-critique and refine its reasoning process. This suggests that explicitly contrasting correct and incorrect reasoning paths can significantly improve the reliability of LLM outputs. The image highlights the importance of prompt engineering in eliciting accurate and reliable responses from LLMs.
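
To make the difference between the three techniques concrete, here is a minimal sketch of how the three prompt formats might be assembled from one demonstration. The field labels ("Question", "Explanation", "Wrong Explanation", "Answer") follow the image as described; the `Demo` class, the `build_prompt` function, the `style` names, and the exact line layout are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Demo:
    """One worked example placed in the prompt (hypothetical container)."""
    question: str
    answer: str
    explanation: str = ""
    wrong_explanation: str = ""


def build_prompt(demo: Demo, new_question: str, style: str) -> str:
    """Assemble a prompt in 'standard', 'cot', or 'contrastive' style."""
    if style not in ("standard", "cot", "contrastive"):
        raise ValueError(f"unknown style: {style}")
    lines = [f"Question: {demo.question}"]
    if style in ("cot", "contrastive"):
        lines.append(f"Explanation: {demo.explanation}")
    if style == "contrastive":
        lines.append(f"Wrong Explanation: {demo.wrong_explanation}")
    lines.append(f"Answer: {demo.answer}")
    lines.append("")  # blank line between the demonstration and the new question
    lines.append(f"Question: {new_question}")
    return "\n".join(lines)


demo = Demo(
    question="James writes a 3-page letter to 2 different friends twice a week. "
             "How many pages does he write a year?",
    answer="624",
    explanation="He writes each friend 3*2=6 pages a week. So he writes 6*2=12 "
                "pages every week. That means he writes 12*52=624 pages a year.",
    wrong_explanation="He writes each friend 12*52=624 pages a week. So he writes "
                      "3*2=6 pages every week. That means he writes 6*2=12 pages a year.",
)
teeth_question = ("James has 30 teeth. His dentist drills 4 of them and caps 7 more "
                  "teeth than he drills. What percentage of James' teeth does the dentist fix?")

standard = build_prompt(demo, teeth_question, "standard")
cot = build_prompt(demo, teeth_question, "cot")
contrastive = build_prompt(demo, teeth_question, "contrastive")
print(contrastive)
```

The only difference between the three prompts is which explanation lines accompany the demonstration, which mirrors the column-by-column structure of the image.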


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Diagram: Comparison of Prompting Techniques for AI Models

### Overview

The image is a technical diagram comparing three different methods for prompting AI models to solve math word problems. It is structured as a three-column layout, with each column representing a distinct prompting technique: "Standard Prompting," "Chain-of-Thought (CoT)," and "Contrastive Chain-of-Thought." Each column contains two example problems, showing the "Model Input" (the prompt given to the AI) and the resulting "Model Output." Visual icons (a person with a question mark, a robot, and a laptop) and colored highlights are used to differentiate elements and indicate correctness.

### Components/Axes

The diagram is organized into three vertical panels, each with a clear header:

1. **Left Panel:** "Standard Prompting"

2. **Center Panel:** "Chain-of-Thought (CoT)"

3. **Right Panel:** "Contrastive Chain-of-Thought"

Within each panel, the flow is vertical:

* **Top Section:** "Model Input" box containing the problem statement and, for CoT methods, an explanation.

* **Bottom Section:** "Model Output" box containing the final answer or explanation.

* **Icons:** A person icon (👤) precedes the user's question. A robot icon (🤖) precedes the model's explanation. A laptop icon (💻) precedes the model's final answer.

* **Correctness Indicators:** A red "X" (❌) marks incorrect model outputs. A green checkmark (✅) marks a correct model output.

* **Text Highlights:** In the "Contrastive Chain-of-Thought" column, specific numerical steps in explanations are highlighted with colored backgrounds (yellow, purple, blue, green) to draw attention to the reasoning process.

### Detailed Analysis

**Column 1: Standard Prompting**

* **Example 1 Input:**

* Question: "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

* **Example 1 Output:**

* Answer: "624"

* Status: Marked with a red X (❌).

* **Example 2 Input:**

* Question: "James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James' teeth does the dentist fix?"

* **Example 2 Output:**

* Answer: "37.5%"

* Status: Marked with a red X (❌).

**Column 2: Chain-of-Thought (CoT)**

* **Example 1 Input:**

* Question: (Identical to Standard Prompting Example 1)

* Explanation: "He writes each friend 3\*2=6 pages a week. So he writes 6\*2=12 pages every week. That means he writes 12\*52=624 pages a year."

* **Example 1 Output:**

* Explanation: (Identical to the input explanation)

* Status: Marked with a red X (❌).

* **Example 2 Input:**

* Question: (Identical to Standard Prompting Example 2)

* **Example 2 Output:**

* Explanation: "The dentist fixes a total of 4 + 7 = 11 teeth. To find the percentage, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 11/30 x 100 = 36.67%"

* Status: Marked with a red X (❌).

**Column 3: Contrastive Chain-of-Thought**

* **Example 1 Input:**

* Question: (Identical to Standard Prompting Example 1)

* **Correct Explanation:** "He writes each friend **3\*2=6** pages a week. So he writes **6\*2=12** pages every week. That means he writes **12\*52=624** pages a year." (Highlights: yellow, purple, blue)

* **Wrong Explanation:** "He writes each friend **12\*52=624** pages a week. So he writes **3\*2=6** pages every week. That means he writes **6\*2=12** pages a year." (Highlights: blue, yellow, purple)

* **Example 1 Output:**

* (No separate output box shown for this example in the diagram).

* **Example 2 Input:**

* Question: (Identical to Standard Prompting Example 2)

* **Example 2 Output:**

* Explanation: "The dentist drills 4 teeth, so there are 30 - 4 = 26 teeth left. The dentist caps 7 more teeth than he drills, so he caps 4 + 7 = 11 teeth. Therefore, the dentist fixes a total of 4 + 11 = 15 teeth. To find the percentage of teeth the dentist fixes, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 15/30 x 100 = 50%"

* Status: Marked with a green checkmark (✅).
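
The highlight order reported for the first example (yellow, purple, blue in the correct explanation; blue, yellow, purple in the wrong one) suggests that the wrong explanation reuses the same three equations in a rotated order. The sketch below reproduces that wrong explanation from the correct one. It is an illustration of the pattern visible in the diagram, not a claim about how the figure's authors generated it; `rotate_equations` and the `EQUATION` pattern are hypothetical names.

```python
import re

# Matches simple products such as "3*2=6" or "12*52=624".
EQUATION = re.compile(r"\d+\*\d+=\d+")


def rotate_equations(explanation: str, shift: int = 1) -> str:
    """Return the explanation with its equations rotated right by `shift` slots."""
    equations = EQUATION.findall(explanation)
    if len(equations) < 2:
        return explanation  # nothing to reorder
    shift %= len(equations)
    rotated = equations[-shift:] + equations[:-shift]
    replacements = iter(rotated)
    # Substitute each original equation, in order, with the next rotated one.
    return EQUATION.sub(lambda match: next(replacements), explanation)


correct = ("He writes each friend 3*2=6 pages a week. So he writes 6*2=12 pages "
           "every week. That means he writes 12*52=624 pages a year.")
wrong = rotate_equations(correct)
print(wrong)
```

With the default shift of one slot, the output matches the "Wrong Explanation" text transcribed above: the surrounding sentence is unchanged, so the reasoning reads fluently but no longer makes sense.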

### Key Observations

1. **Progression of Complexity:** The prompting techniques evolve from simple question → answer (Standard), to question → reasoning → answer (CoT), to question → reasoning with contrastive examples → answer (Contrastive CoT).

2. **Error Patterns:** The "Standard Prompting" and basic "Chain-of-Thought" methods produce incorrect answers for the teeth percentage problem (37.5% and 36.67% vs. the correct 50%). The error in the CoT explanation is a logical flaw: it treats the 7 in "7 more teeth than he drills" as the number of capped teeth and reports 4 + 7 = 11 fixed teeth. The correct reasoning first computes the caps (4 + 7 = 11) and then sums all fixed teeth (4 drilled + 11 capped = 15).

3. **Visual Learning Cue:** The "Contrastive Chain-of-Thought" column uses colored highlights to visually link numerical steps between a correct and an incorrect explanation for the first problem, demonstrating the method's core idea of learning from contrast.

4. **Correctness Outcome:** Only the final output in the "Contrastive Chain-of-Thought" column (for the teeth problem) is marked as correct (✅), suggesting this method is presented as the most effective.
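
The correctness markers described above can be reproduced with a small checker. This is a sketch under one stated assumption: the final number appearing in a model output is taken to be its answer. The helper names `final_number` and `is_correct` are hypothetical.

```python
import re
from typing import Optional


def final_number(text: str) -> Optional[float]:
    """Return the last number in the text, or None if there is none."""
    matches = re.findall(r"\d+(?:\.\d+)?", text)
    return float(matches[-1]) if matches else None


def is_correct(output: str, expected: float, tol: float = 0.01) -> bool:
    """Compare the final number in `output` with the expected answer."""
    value = final_number(output)
    return value is not None and abs(value - expected) <= tol


# Model outputs for the teeth problem, as transcribed from the diagram.
outputs = {
    "Standard Prompting": "Answer: 37.5%",
    "Chain-of-Thought": "The dentist fixes a total of 4 + 7 = 11 teeth. "
                        "11/30 x 100 = 36.67%",
    "Contrastive Chain-of-Thought": "Therefore, the dentist fixes a total of "
                                    "4 + 11 = 15 teeth. 15/30 x 100 = 50%",
}

results = {name: is_correct(text, expected=50.0) for name, text in outputs.items()}
for name, ok in results.items():
    print(f"{name}: {'correct' if ok else 'incorrect'}")
```

Only the Contrastive Chain-of-Thought output passes the check, in line with the single green checkmark noted in the list above.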

### Interpretation

This diagram serves as a pedagogical or research illustration comparing AI prompting strategies. It argues visually that:

* **Standard Prompting** is prone to errors as the model jumps to an answer without showing work.

* **Chain-of-Thought Prompting** improves transparency by revealing the model's reasoning process but does not guarantee correctness, as the model can still make logical errors in its step-by-step explanation.

* **Contrastive Chain-of-Thought Prompting** is presented as a superior method. By providing the model with both a correct and an incorrect reasoning path (a "contrastive pair"), it appears to help the model avoid common pitfalls and arrive at the correct solution. The green checkmark on the final output implies this method successfully guides the model to the right answer (50%) where the others failed.

The underlying message is that how you frame a problem for an AI—especially by including examples of both right and wrong reasoning—significantly impacts its performance on tasks requiring multi-step logic. The diagram is likely from a paper or presentation advocating for the adoption of contrastive techniques in prompt engineering.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Comparison of Prompting Methods for Math Problem Solving

### Overview

The image compares three prompting methods for solving math problems: **Standard Prompting**, **Chain-of-Thought (CoT)**, and **Contrastive Chain-of-Thought**. Each method includes a question, model input, explanation, and model output with correctness indicators (✅/❌). The focus is on arithmetic reasoning and percentage calculations.

---

### Components/Axes

- **Columns**: Three vertical sections labeled:

1. **Standard Prompting**

2. **Chain-of-Thought (CoT)**

3. **Contrastive Chain-of-Thought**

- **Rows**: Each column contains:

- **Question**: A math problem (e.g., "James writes a 3-page letter...").

- **Model Input**: The problem statement.

- **Explanation**: Step-by-step reasoning (highlighted in Contrastive CoT).

- **Model Output**: Final answer with correctness feedback (✅/❌).

---

### Detailed Analysis

#### Standard Prompting

- **Question**:

1. James writes a 3-page letter to 2 friends twice a week. How many pages a year?

2. James has 30 teeth. Dentist drills 4, caps 7 more than he drills. What percentage fixed?

- **Model Input**: Direct problem statements.

- **Model Output**:

- Answer: 624 (✅).

- Answer: 37.5% (❌).

#### Chain-of-Thought (CoT)

- **Question**: Same as Standard Prompting.

- **Model Input**: Same as Standard Prompting.

- **Explanation**:

1. "He writes each friend 3×2=6 pages a week. So 6×2=12 pages weekly. 12×52=624 yearly."

2. "Dentist fixes 4+7=11 teeth. 11/30×100=36.67%."

- **Model Output**:

- Answer: 624 (✅).

- Answer: 36.67% (❌).

#### Contrastive Chain-of-Thought

- **Question**: Same as Standard Prompting.

- **Model Input**: Same as Standard Prompting.

- **Explanation**:

1. **Correct**: "3×2=6 pages per friend. 6×2=12 weekly. 12×52=624 yearly."

2. **Wrong**: "12×52=624 pages per friend weekly. 3×2=6 weekly. 6×2=12 yearly."

3. **Correct**: "Dentist fixes 4+11=15 teeth. 15/30×100=50%."

- **Model Output**:

- Answer: 624 (✅).

- Answer: 50% (✅).

---

### Key Observations

1. **Standard Prompting** gives the correct answer to the first question (624) but an incorrect one to the second (37.5%), and shows no reasoning.

2. **CoT** improves reasoning clarity but makes a reasoning error on the second question (36.67% instead of 50%).

3. **Contrastive CoT** explicitly contrasts correct/incorrect reasoning, leading to accurate answers (50%).

4. **Highlighted Errors**: In Contrastive CoT, incorrect steps (e.g., "12×52=624 pages weekly") are flagged to guide the model.

---

### Interpretation

- **Standard Prompting** is efficient but opaque, risking errors in complex reasoning.

- **CoT** enhances interpretability by breaking down steps but may still propagate mistakes.

- **Contrastive CoT** mitigates errors by contrasting valid/invalid reasoning paths, improving accuracy. In the example shown, it avoids the intermediate misstep that derails CoT on the percentage question: treating "7 more teeth than he drills" as 7 capped teeth rather than 4 + 7 = 11.

The image demonstrates how structured reasoning (CoT) and error contrast (Contrastive CoT) enhance model performance in mathematical problem-solving.
