## Diagram: Binary Classification Representation

### Overview

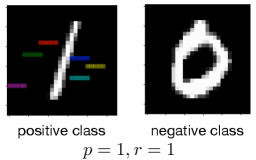

The image is a conceptual diagram illustrating two distinct classes in a binary classification problem. It visually contrasts the spatial distribution of data points belonging to a "positive class" versus a "negative class." The diagram includes a performance metric at the bottom.

### Components/Axes

The image is divided into two primary panels side-by-side on a black background, with a line of text centered below them.

1. **Left Panel (Positive Class):**

* **Visual Element:** A thick, white diagonal line runs from the bottom-left to the top-right. Intersecting this line are several short, horizontal colored bars.

* **Colors Observed (from top to bottom):** Red, Green, Blue, Purple.

* **Label:** The text "positive class" is centered directly below this panel.

2. **Right Panel (Negative Class):**

* **Visual Element:** A thick, white, irregular ring or oval shape with a black center. The shape is not a perfect circle and has a slightly jagged or pixelated edge.

* **Label:** The text "negative class" is centered directly below this panel.

3. **Bottom Text:**

* **Content:** The mathematical notation `p = 1, r = 1` is centered at the very bottom of the entire image, beneath both class labels.

### Detailed Analysis

* **Positive Class Representation:** The colored bars are spaced along the white diagonal line. If the bars are read as data points, they follow a linear structure, which suggests a feature space in which the positive class lies along a straight line. The different colors (red, green, blue, purple) may represent different sub-categories, features, or instances within the positive class, but no legend is provided to define them.

* **Negative Class Representation:** The data forms a continuous, ring-like shape. This represents a non-linear distribution in which the negative class occupies a region surrounding a central void. It is the classic configuration in which no single straight line can separate the ring from points lying inside its central void.

* **Performance Metric:** The text `p = 1, r = 1` is standard notation for **Precision (p)** and **Recall (r)**. A value of 1 for both indicates perfect classification performance on a given dataset: no false positives (Precision = 1) and no false negatives (Recall = 1).
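
To make the metric concrete, the following is a minimal sketch of how precision and recall are computed from true and predicted labels. The function name `precision_recall` and the label lists are illustrative assumptions; they are not taken from the diagram.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Return (precision, recall) for the given positive label.

    Hypothetical helper for illustration; labels are plain Python lists.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)  # true positives
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false positives
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # false negatives
    # Fall back to 0.0 when a denominator is zero (a convention chosen here).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Made-up labels: 1 = positive class, 0 = negative class.
y_true = [1, 1, 1, 0, 0, 0]

# Every prediction is correct, so p = 1 and r = 1 as in the diagram.
perfect = precision_recall(y_true, [1, 1, 1, 0, 0, 0])

# One false negative and one false positive lower both values to 2/3.
imperfect = precision_recall(y_true, [1, 1, 0, 1, 0, 0])
```

Both values reach 1 only when the false-positive and false-negative counts are both zero, which is the situation the caption `p = 1, r = 1` describes.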

### Key Observations

1. **Contrasting Geometries:** The core visual message is the stark contrast between a linearly organized class (positive) and a non-linear, ring-shaped class (negative).

2. **Perfect Scores:** The stated precision and recall of 1.0 imply that, for the data this diagram represents, a classifier has achieved flawless separation between these two geometrically distinct classes.

3. **Color Usage:** Colors are used only in the positive class panel, potentially to highlight individual data points or subgroups. The negative class is monochromatic (white).

4. **Spatial Layout:** The class labels are placed directly below their respective visual components. The performance metric is placed centrally at the bottom, acting as a summary for the entire classification result.

### Interpretation

This diagram is a pedagogical or conceptual illustration, not a plot of empirical data. Its purpose is to visually communicate the idea of class separability in machine learning.

* **What it demonstrates:** It shows that perfect classification (`p=1, r=1`) is achievable when two classes have fundamentally different and non-overlapping geometric structures in the feature space: one is a line, the other is a ring.

* **Relationship between elements:** The left and right panels are direct counterparts, one for each of the two classes a binary classifier must distinguish. The bottom text (`p=1, r=1`) is the quantitative result of successfully distinguishing between the two visual patterns shown above.

* **Underlying Message:** The image likely serves to explain a concept like the "kernel trick" in Support Vector Machines (SVMs) or the need for non-linear models. Read this way, it argues that while a simple linear structure (the white line) can describe the positive class, a more complex, non-linear boundary is required to enclose the negative class (the ring). The perfect scores suggest that with the right model or feature transformation, such distinct patterns can be perfectly classified.
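
The idea of a feature transformation can be sketched with a small, self-contained example. Everything in it is an assumption made for illustration: the diagram provides no coordinates, so the ring points, the hypothetical cluster inside the central void, and the threshold are invented here. The sketch only shows that a ring which no straight line can separate from its interior becomes separable by a single threshold once each point is mapped to its distance from the origin.

```python
import math


def circle_points(n, radius):
    """Return n evenly spaced points on a circle of the given radius (synthetic data)."""
    return [
        (radius * math.cos(2 * math.pi * k / n), radius * math.sin(2 * math.pi * k / n))
        for k in range(n)
    ]


def radial_feature(point):
    """Map a 2-D point to one non-linear feature: its distance from the origin."""
    x, y = point
    return math.hypot(x, y)


# Assumed geometry: a ring of radius 1.0 and a hypothetical cluster of radius 0.2
# sitting inside the ring's central void.
ring = circle_points(12, 1.0)
center = circle_points(12, 0.2)

# Assumed threshold, chosen between the two radii.
THRESHOLD = 0.6


def predict_is_ring(point):
    """Classify a point as 'ring' when its radial feature exceeds the threshold."""
    return radial_feature(point) > THRESHOLD


ring_hits = sum(predict_is_ring(p) for p in ring)      # ring points labeled as ring
center_hits = sum(predict_is_ring(p) for p in center)  # central points labeled as ring
```

In the original two-dimensional coordinates no single line puts all ring points on one side and all central points on the other; in the one-dimensional radial feature a single cut does. Kernel methods reach a similar effect without computing the transformed features explicitly.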