TECHNICAL ASSET FINGERPRINT

74cd194994af166f87308f45

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

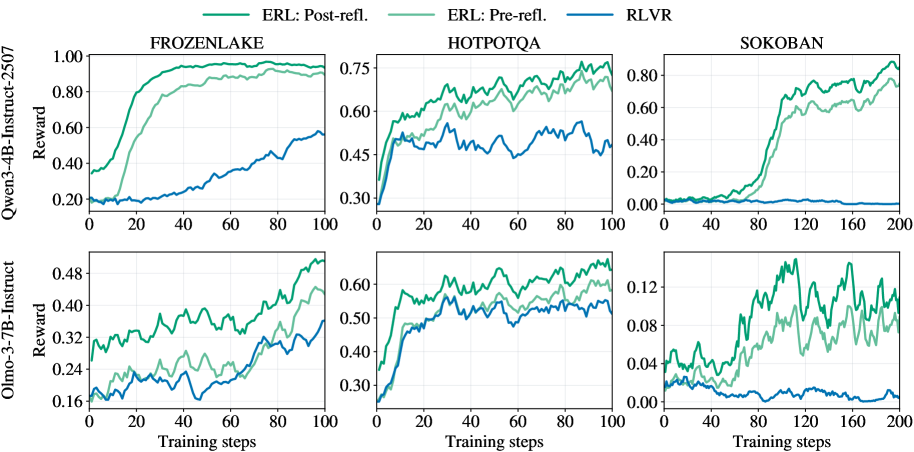

## Chart: Reward vs. Training Steps for Different Models and Environments

### Overview

The image presents six line charts arranged in a 2x3 grid. Each chart plots the reward achieved by three training methods (ERL: Post-refl., ERL: Pre-refl., and RLVR) against training steps in one of three environments (FROZENLAKE, HOTPOTQA, and SOKOBAN). The rows are grouped by base model (Qwen3-4B-Instruct-2507 on top, Olmo-3-7B-Instruct on the bottom).

### Components/Axes

* **Titles:**

* Top Row (Qwen3-4B-Instruct-2507): FROZENLAKE, HOTPOTQA, SOKOBAN

* Bottom Row (Olmo-3-7B-Instruct): FROZENLAKE, HOTPOTQA, SOKOBAN

* **Y-Axis (Reward):**

* Top Row (FROZENLAKE): Scale from 0.00 to 1.00, with markers at 0.20, 0.40, 0.60, 0.80, and 1.00.

* Top Row (HOTPOTQA): Scale from 0.30 to 0.75, with markers at 0.30, 0.45, 0.60, and 0.75.

* Top Row (SOKOBAN): Scale from 0.00 to 0.80, with markers at 0.00, 0.20, 0.40, 0.60, and 0.80.

* Bottom Row (FROZENLAKE): Scale from 0.16 to 0.48, with markers at 0.16, 0.24, 0.32, 0.40, and 0.48.

* Bottom Row (HOTPOTQA): Scale from 0.30 to 0.60, with markers at 0.30, 0.40, 0.50, and 0.60.

* Bottom Row (SOKOBAN): Scale from 0.00 to 0.12, with markers at 0.00, 0.04, 0.08, and 0.12.

* **X-Axis (Training steps):**

* FROZENLAKE and HOTPOTQA: Scale from 0 to 100, with ticks every 20 steps.

* SOKOBAN: Scale from 0 to 200, with ticks every 40 steps.

* **Labels:**

* Y-Axis Label (left side, vertical): "Reward" for both rows.

* Left of Top Row (vertical): "Qwen3-4B-Instruct-2507"

* Left of Bottom Row (vertical): "Olmo-3-7B-Instruct"

* X-Axis Label (bottom): "Training steps" for all charts.

* **Legend (top):**

* "ERL: Post-refl." (light green line)

* "ERL: Pre-refl." (green line)

* "RLVR" (blue line)

### Detailed Analysis

**Top Row (Qwen3-4B-Instruct-2507):**

* **FROZENLAKE:**

* ERL: Post-refl. (light green): Rapidly increases from ~0.20 to ~0.90 within the first 20 training steps, then plateaus around ~0.95.

* ERL: Pre-refl. (green): Increases from ~0.20 to ~0.80 within the first 20 training steps, then gradually increases to ~0.90, plateauing around ~0.90-0.95.

* RLVR (blue): Starts at ~0.20 and gradually increases to ~0.55 by 100 training steps.

* **HOTPOTQA:**

* ERL: Post-refl. (light green): Starts at ~0.40 and increases to ~0.70 with fluctuations.

* ERL: Pre-refl. (green): Starts at ~0.45 and increases to ~0.75 with fluctuations.

* RLVR (blue): Starts at ~0.30 and increases to ~0.55 with fluctuations.

* **SOKOBAN:**

* ERL: Post-refl. (light green): Starts at ~0.00, increases rapidly to ~0.70 around step 100, then fluctuates around ~0.70-0.80.

* ERL: Pre-refl. (green): Starts at ~0.00, increases rapidly to ~0.60 around step 100, then fluctuates around ~0.60-0.70.

* RLVR (blue): Remains relatively flat around ~0.00 for the entire training period.

**Bottom Row (Olmo-3-7B-Instruct):**

* **FROZENLAKE:**

* ERL: Post-refl. (light green): Starts at ~0.32 and increases to ~0.44 with fluctuations.

* ERL: Pre-refl. (green): Starts at ~0.30 and increases to ~0.48 with fluctuations.

* RLVR (blue): Starts at ~0.16 and increases to ~0.36 with fluctuations.

* **HOTPOTQA:**

* ERL: Post-refl. (light green): Starts at ~0.45 and increases to ~0.60 with fluctuations.

* ERL: Pre-refl. (green): Starts at ~0.40 and increases to ~0.55 with fluctuations.

* RLVR (blue): Starts at ~0.30 and increases to ~0.50 with fluctuations.

* **SOKOBAN:**

* ERL: Post-refl. (light green): Starts at ~0.02 and increases to ~0.14 with fluctuations.

* ERL: Pre-refl. (green): Starts at ~0.02 and increases to ~0.08 with fluctuations.

* RLVR (blue): Remains relatively flat around ~0.02 for the entire training period.
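The start and end values listed above can be turned into a per-method reward gain. The following is a minimal sketch for the Olmo-3-7B-Instruct row only; the numbers are the approximate readings from this description, not measured data, and the names `OLMO_READINGS` and `reward_gains` are hypothetical helpers introduced for illustration.

```python
# Approximate (start, end) rewards for the Olmo-3-7B-Instruct row, copied from
# the description above. These are eyeballed chart readings, not measurements.
OLMO_READINGS = {
    "FROZENLAKE": {
        "ERL: Post-refl.": (0.32, 0.44),
        "ERL: Pre-refl.": (0.30, 0.48),
        "RLVR": (0.16, 0.36),
    },
    "HOTPOTQA": {
        "ERL: Post-refl.": (0.45, 0.60),
        "ERL: Pre-refl.": (0.40, 0.55),
        "RLVR": (0.30, 0.50),
    },
    "SOKOBAN": {
        "ERL: Post-refl.": (0.02, 0.14),
        "ERL: Pre-refl.": (0.02, 0.08),
        "RLVR": (0.02, 0.02),  # described as flat around ~0.02
    },
}


def reward_gains(readings):
    """Return {environment: {method: end - start}}, rounded to two decimals."""
    return {
        env: {
            method: round(end - start, 2)
            for method, (start, end) in methods.items()
        }
        for env, methods in readings.items()
    }


gains = reward_gains(OLMO_READINGS)
for env, per_method in gains.items():
    for method, gain in per_method.items():
        print(f"{env:<10} {method:<16} +{gain:.2f}")
```

Gain and final reward are different quantities: with these readings, RLVR shows the largest gain on FROZENLAKE while still ending at the lowest reward, because it starts from a lower value.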

### Key Observations

* ERL models (both Post-refl and Pre-refl) generally outperform RLVR in all environments and with both base models (Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct).

* The FROZENLAKE environment shows the most significant performance difference between ERL and RLVR, especially with the Qwen3-4B-Instruct-2507 model.

* The SOKOBAN environment shows a delayed but significant increase in reward for ERL models, while RLVR remains consistently low.

* The Olmo-3-7B-Instruct model generally achieves lower rewards compared to the Qwen3-4B-Instruct-2507 model across all environments and algorithms.

* The "Post-refl" version of ERL generally performs slightly better than the "Pre-refl" version, although the difference is not always substantial.

### Interpretation

The data suggests that the ERL models, both with pre-reflection and post-reflection mechanisms, are more effective in learning and achieving higher rewards compared to the RLVR model across the tested environments. The FROZENLAKE environment appears to be particularly challenging for the RLVR model. The delayed performance increase in SOKOBAN for ERL models indicates a potential learning curve or a requirement for more exploration in that specific environment. The difference in performance between the Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct models highlights the impact of the base model architecture on the overall learning outcome. The slight advantage of "Post-refl" ERL over "Pre-refl" ERL suggests that the post-reflection mechanism might contribute to more efficient or effective learning.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

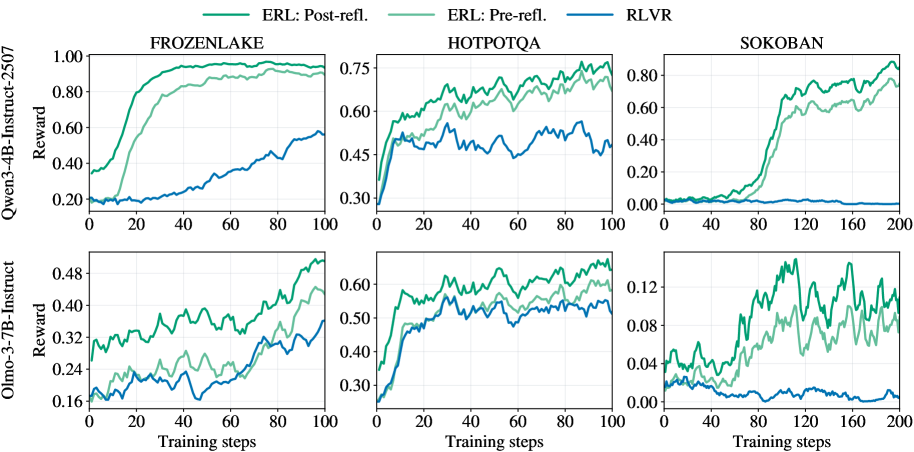

## Charts: Reinforcement Learning Reward Curves

### Overview

The image presents six line charts, arranged in a 2x3 grid, displaying reward curves for different reinforcement learning algorithms across three environments: FrozenLake, HotpotQA, and Sokoban. Two base models are compared: Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct. The algorithms are ERL (with pre- and post-reflection) and RLVR. The charts show how the reward changes with the number of training steps.

### Components/Axes

* **Y-axis (Vertical):** Reward. Scales vary per chart, ranging from approximately 0.00 to 1.00.

* **X-axis (Horizontal):** Training steps. Scales vary per chart, ranging from 0 to 200.

* **Lines:**

* ERL: Post-refl. (Light Green)

* ERL: Pre-refl. (Medium Turquoise)

* RLVR (Dark Blue)

* **Chart Titles (Top Row):** FROZENLAKE, HOTPOTQA, SOKOBAN

* **Y-axis Labels (Left Column):** Qwen3-4B-Instruct-2507 Reward, Olmo-3-7B-Instruct Reward

* **X-axis Labels (Bottom Row):** Training steps, Training steps, Training steps

### Detailed Analysis or Content Details

**1. FrozenLake (Qwen3-4B-Instruct-2507)**

* **Trend:** All three lines generally slope upwards, indicating increasing reward with training. ERL Post-refl. shows the steepest initial increase.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.20, reaches ~0.95 at 100 steps.

* ERL Pre-refl.: Starts at ~0.20, reaches ~0.75 at 100 steps.

* RLVR: Starts at ~0.25, reaches ~0.50 at 100 steps.

**2. HotpotQA (Qwen3-4B-Instruct-2507)**

* **Trend:** All lines fluctuate, but generally show an upward trend. ERL Post-refl. consistently achieves the highest reward.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.45, reaches ~0.75 at 100 steps.

* ERL Pre-refl.: Starts at ~0.40, reaches ~0.60 at 100 steps.

* RLVR: Starts at ~0.40, reaches ~0.50 at 100 steps.

**3. Sokoban (Qwen3-4B-Instruct-2507)**

* **Trend:** All lines show an upward trend, but with more significant fluctuations. ERL Post-refl. shows the most rapid increase towards the end of the training.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.05, reaches ~0.80 at 200 steps.

* ERL Pre-refl.: Starts at ~0.05, reaches ~0.40 at 200 steps.

* RLVR: Starts at ~0.02, reaches ~0.20 at 200 steps.

**4. FrozenLake (Olmo-3-7B-Instruct)**

* **Trend:** Similar to the Qwen model, all lines increase with training. ERL Post-refl. shows the fastest initial growth.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.15, reaches ~0.48 at 100 steps.

* ERL Pre-refl.: Starts at ~0.15, reaches ~0.35 at 100 steps.

* RLVR: Starts at ~0.20, reaches ~0.30 at 100 steps.

**5. HotpotQA (Olmo-3-7B-Instruct)**

* **Trend:** All lines fluctuate and generally increase. ERL Post-refl. consistently has the highest reward.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.30, reaches ~0.60 at 100 steps.

* ERL Pre-refl.: Starts at ~0.30, reaches ~0.50 at 100 steps.

* RLVR: Starts at ~0.30, reaches ~0.40 at 100 steps.

**6. Sokoban (Olmo-3-7B-Instruct)**

* **Trend:** All lines show an upward trend with fluctuations. ERL Post-refl. demonstrates the most significant increase towards the end of training.

* **Data Points (approximate):**

* ERL Post-refl.: Starts at ~0.02, reaches ~0.12 at 200 steps.

* ERL Pre-refl.: Starts at ~0.02, reaches ~0.08 at 200 steps.

* RLVR: Starts at ~0.01, reaches ~0.06 at 200 steps.

### Key Observations

* ERL Post-refl. consistently outperforms ERL Pre-refl. and RLVR across all environments and both base models.

* The performance gap between the algorithms is most pronounced in the Sokoban environment.

* The Olmo-3-7B-Instruct model generally achieves lower rewards than the Qwen3-4B-Instruct-2507 model, especially in the Sokoban environment.

* All algorithms show diminishing returns as training progresses, with the rate of reward increase slowing down.

### Interpretation

The data suggests that the post-reflection technique significantly improves the performance of the ERL algorithm in reinforcement learning tasks. The consistent outperformance of ERL Post-refl. across different environments indicates that this technique is robust and effective. The lower rewards achieved by the Olmo-3-7B-Instruct model, particularly in Sokoban, could be due to several factors, including differences in model architecture, training data, or hyperparameter settings. The diminishing returns observed in all algorithms suggest that further training may not yield substantial improvements in performance. The fluctuations in reward curves, especially in HotpotQA, may indicate the stochastic nature of the environment or the learning process. The Sokoban environment appears to be the most challenging, as evidenced by the lower overall reward values and the greater variability in performance. This could be due to the complexity of the task or the difficulty of exploring the state space.
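Fluctuating reward curves like the ones described here are often easier to compare after smoothing. The sketch below uses a simple trailing moving average; the source does not say whether or how the plotted curves were smoothed, so the function `moving_average`, its `window` parameter, and the sample values are all assumptions made for illustration.

```python
def moving_average(values, window=3):
    """Trailing moving average.

    Each output point is the mean of the current value and up to
    `window - 1` preceding values; early points average over what exists.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Hypothetical noisy reward readings, invented purely for illustration.
noisy = [0.30, 0.42, 0.38, 0.50, 0.44, 0.52]
smooth = moving_average(noisy, window=3)
print([round(v, 3) for v in smooth])
```

A larger `window` suppresses more noise but also delays and flattens genuine jumps, such as a late sharp rise in reward.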

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Comparative Performance of ERL and RLVR Across Environments

### Overview

The image displays a set of six line charts arranged in a 2x3 grid. The charts compare the training performance (measured in "Reward") of three different reinforcement learning or training methods across three distinct environments. The comparison is conducted for two different base language models.

### Components/Axes

* **Grid Structure:** Two rows, three columns.

* **Row Labels (Y-axis titles for the entire row):**

* Top Row: `Qwen3-4B-Instruct-2507` (Y-axis label: `Reward`)

* Bottom Row: `Olmo-3-7B-Instruct` (Y-axis label: `Reward`)

* **Column Labels (Chart Titles):**

* Left Column: `FROZENLAKE`

* Middle Column: `HOTPOTQA`

* Right Column: `SOKOBAN`

* **X-axis:** Labeled `Training steps` for all charts. The scale varies:

* FROZENLAKE and HOTPOTQA charts: 0 to 100 steps.

* SOKOBAN charts: 0 to 200 steps.

* **Y-axis:** Labeled `Reward` for all charts. The scale and range differ significantly per chart and model.

* **Legend:** Located at the top center of the entire figure. It defines three data series:

* `ERL: Post-refl.` (Dark Green line)

* `ERL: Pre-refl.` (Light Green line)

* `RLVR` (Blue line)

### Detailed Analysis

**1. Top Row: Qwen3-4B-Instruct-2507 Model**

* **FROZENLAKE (Top-Left):**

* **Trend Verification:** Both ERL lines show a steep, sigmoidal increase, plateauing near the top. The RLVR line shows a slow, steady linear increase.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.35, rises sharply between steps 10-40, plateaus near 1.0 by step 60.

* `ERL: Pre-refl.`: Starts ~0.20, follows a similar but slightly delayed and lower trajectory than Post-refl., plateaus near 0.95.

* `RLVR`: Starts ~0.20, increases gradually to ~0.55 by step 100.

* **HOTPOTQA (Top-Middle):**

* **Trend Verification:** All three lines show an initial rapid rise followed by noisy, fluctuating plateaus. ERL lines consistently outperform RLVR.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.30, rises to ~0.70 by step 40, fluctuates between 0.65-0.75 thereafter.

* `ERL: Pre-refl.`: Follows a similar pattern to Post-refl. but is consistently lower, fluctuating between 0.60-0.70.

* `RLVR`: Starts ~0.30, rises to ~0.50 by step 20, then fluctuates noisily between 0.45-0.55.

* **SOKOBAN (Top-Right):**

* **Trend Verification:** ERL lines show a delayed but very sharp increase. RLVR remains near zero throughout.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Near 0 until step ~60, then rises sharply to ~0.80 by step 140, ending near 0.90.

* `ERL: Pre-refl.`: Follows a similar delayed rise but is lower, reaching ~0.75 by step 200.

* `RLVR`: Hovers near 0.00 for the entire 200 steps.

**2. Bottom Row: Olmo-3-7B-Instruct Model**

* **FROZENLAKE (Bottom-Left):**

* **Trend Verification:** All lines show a gradual, noisy upward trend. ERL lines are distinctly higher than RLVR.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.30, ends near 0.50.

* `ERL: Pre-refl.`: Starts ~0.18, ends near 0.44.

* `RLVR`: Starts ~0.18, ends near 0.36.

* **HOTPOTQA (Bottom-Middle):**

* **Trend Verification:** Similar pattern to the Qwen model on HOTPOTQA: rapid initial rise, then noisy plateaus with ERL leading.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Rises to ~0.60 by step 20, fluctuates between 0.55-0.65.

* `ERL: Pre-refl.`: Rises to ~0.55 by step 20, fluctuates between 0.50-0.60.

* `RLVR`: Rises to ~0.50 by step 20, fluctuates between 0.45-0.55.

* **SOKOBAN (Bottom-Right):**

* **Trend Verification:** ERL lines show high volatility with a general upward trend. RLVR shows a slight downward trend.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Highly volatile, ranging from ~0.04 to a peak near 0.14, ending around 0.10.

* `ERL: Pre-refl.`: Also volatile but generally lower than Post-refl., ranging from ~0.02 to 0.12.

* `RLVR`: Starts near 0.03, shows a slight decline, ending near 0.00.

### Key Observations

1. **Consistent Hierarchy:** In all six charts, the `ERL: Post-refl.` method (dark green) achieves the highest final reward, followed by `ERL: Pre-refl.` (light green), with `RLVR` (blue) performing the worst.

2. **Environment Difficulty:** The SOKOBAN environment appears to be the most challenging, especially for the RLVR method, which fails to learn (reward ~0) in both models. ERL methods show a significant learning delay in SOKOBAN with the Qwen model.

3. **Model Comparison:** The Qwen3-4B model achieves higher absolute reward values (e.g., near 1.0 in FROZENLAKE) compared to the Olmo-3-7B model (max ~0.50 in FROZENLAKE), suggesting the tasks or reward scales may differ, or the Qwen model is more capable for these specific tasks.

4. **Learning Dynamics:** ERL methods typically show faster initial learning (steeper slopes) and higher asymptotic performance than RLVR. The "Post-refl." variant consistently offers a performance boost over "Pre-refl.".
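The "consistent hierarchy" observation can be checked mechanically against the approximate end values. The sketch below covers only the three panels for which this description gives an explicit final value for every method; the values are eyeballed readings, and `FINAL_REWARDS`, `ranking`, and `hierarchy_holds` are hypothetical names introduced for illustration.

```python
# Ordering claimed in the observations above, best to worst.
EXPECTED_ORDER = ["ERL: Post-refl.", "ERL: Pre-refl.", "RLVR"]

# Approximate final rewards as read from this description (not measured data).
FINAL_REWARDS = {
    ("Qwen", "FROZENLAKE"): {"ERL: Post-refl.": 1.00, "ERL: Pre-refl.": 0.95, "RLVR": 0.55},
    ("Qwen", "SOKOBAN"): {"ERL: Post-refl.": 0.90, "ERL: Pre-refl.": 0.75, "RLVR": 0.00},
    ("Olmo", "FROZENLAKE"): {"ERL: Post-refl.": 0.50, "ERL: Pre-refl.": 0.44, "RLVR": 0.36},
}


def ranking(final_by_method):
    """Return method names sorted from highest to lowest final reward."""
    return sorted(final_by_method, key=final_by_method.get, reverse=True)


def hierarchy_holds(panels, expected=EXPECTED_ORDER):
    """Return {panel: True/False} for whether the ranking matches `expected`."""
    return {panel: ranking(values) == expected for panel, values in panels.items()}


for panel, ok in hierarchy_holds(FINAL_REWARDS).items():
    print(panel, "matches expected order" if ok else "differs")
```

The result depends entirely on the readings supplied. Running the same check on a different set of eyeballed values for the same figure may give a different answer for some panels, so it verifies a description's internal consistency rather than the underlying data.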

### Interpretation

The data strongly suggests that the **ERL (Evolutionary Reinforcement Learning) methodology, particularly with post-reflection ("Post-refl."), is significantly more effective than the RLVR baseline** for training the evaluated language models on these sequential decision-making and reasoning tasks (FROZENLAKE, SOKOBAN, HOTPOTQA).

The consistent performance gap indicates that the evolutionary and reflective components of ERL provide a more robust learning signal or exploration strategy. The dramatic failure of RLVR in SOKOBAN highlights its potential inadequacy for sparse-reward, long-horizon planning tasks, where ERL's population-based approach may excel. The volatility in the Olmo-3-7B SOKOBAN chart suggests less stable training for that model-environment-method combination. Overall, the charts present compelling evidence for the superiority of the proposed ERL framework over the RLVR alternative across diverse tasks and model architectures.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Reinforcement Learning Method Performance Comparison

### Overview

The image contains six line graphs comparing the performance of three reinforcement learning (RL) methods (ERL: Post-reflection, ERL: Pre-reflection, and RLVR) across three environments (FROZENLAKE, HOTPOTQA, SOKOBAN) at two training step milestones (100 and 200 steps). Reward values are plotted on the y-axis against training steps on the x-axis.

---

### Components/Axes

- **X-axis**: "Training steps" (ranges: 0–100 for top row, 0–200 for bottom row).

- **Y-axis**: "Reward" (scales vary by environment: 0–1.0 for FROZENLAKE/HOTPOTQA, 0–0.12 for SOKOBAN).

- **Legends**:

- Green: ERL: Post-reflection

- Light green: ERL: Pre-reflection

- Blue: RLVR

- **Graph Titles**: Environment names (FROZENLAKE, HOTPOTQA, SOKOBAN) with training step counts (100 or 200).

---

### Detailed Analysis

#### FROZENLAKE (100 steps)

- **ERL: Post-reflection** (green): Starts at ~0.2, rises sharply to ~0.9 by 100 steps.

- **ERL: Pre-reflection** (light green): Begins at ~0.1, increases gradually to ~0.7.

- **RLVR** (blue): Starts at ~0.1, climbs to ~0.45.

#### FROZENLAKE (200 steps)

- **ERL: Post-reflection**: Reaches ~0.95, plateauing near 1.0.

- **ERL: Pre-reflection**: Peaks at ~0.7, with minor fluctuations.

- **RLVR**: Stabilizes at ~0.5.

#### HOTPOTQA (100 steps)

- **ERL: Post-reflection**: Starts at ~0.3, rises to ~0.7 with minor dips.

- **ERL: Pre-reflection**: Begins at ~0.2, increases to ~0.6.

- **RLVR**: Starts at ~0.1, climbs to ~0.4.

#### HOTPOTQA (200 steps)

- **ERL: Post-reflection**: Peaks at ~0.75, with oscillations.

- **ERL: Pre-reflection**: Reaches ~0.6, with volatility.

- **RLVR**: Stabilizes at ~0.35.

#### SOKOBAN (100 steps)

- **ERL: Post-reflection**: Starts at ~0.05, rises to ~0.8 with fluctuations.

- **ERL: Pre-reflection**: Begins at ~0.02, increases to ~0.6.

- **RLVR**: Starts at ~0.0, climbs to ~0.2.

#### SOKOBAN (200 steps)

- **ERL: Post-reflection**: Peaks at ~0.9, with minor dips.

- **ERL: Pre-reflection**: Reaches ~0.7, with volatility.

- **RLVR**: Stabilizes at ~0.1.

---

### Key Observations

1. **ERL: Post-reflection** consistently outperforms other methods across all environments and training steps.

2. **ERL: Pre-reflection** performs better than RLVR but lags behind post-reflection.

3. **RLVR** shows the lowest performance, with slower convergence and lower reward ceilings.

4. **SOKOBAN** exhibits higher volatility in rewards, especially for ERL: Pre-reflection at 200 steps.

5. **FROZENLAKE** demonstrates the most stable and highest reward values for ERL: Post-reflection.

---

### Interpretation

The data suggests that **post-reflection in ERL** significantly enhances learning efficiency, likely due to iterative improvements from past experiences. Pre-reflection provides moderate gains but lacks the adaptive feedback loop of post-reflection. RLVR’s inferior performance may stem from its inability to incorporate reflection mechanisms.

Notably, **SOKOBAN’s complexity** (higher training steps and lower reward scales) amplifies the performance gap between methods, highlighting the importance of reflection in complex environments. The dip in ERL: Pre-reflection during HOTPOTQA (200 steps) could indicate overfitting or instability in early training phases. Overall, reflection-based methods (ERL) outperform non-reflective approaches (RLVR), emphasizing the value of meta-cognitive strategies in RL.
