TECHNICAL ASSET FINGERPRINT

74eaa74f893529605dcc3452


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Neural Network Architectures

### Overview

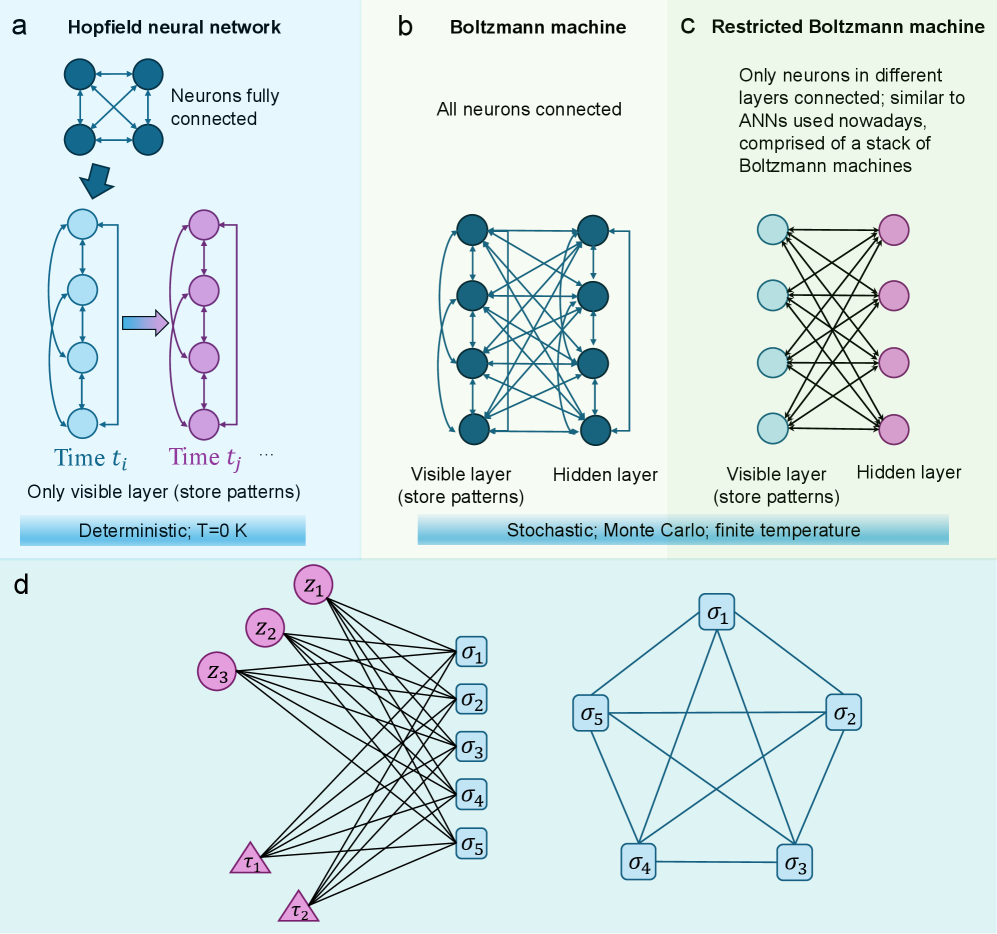

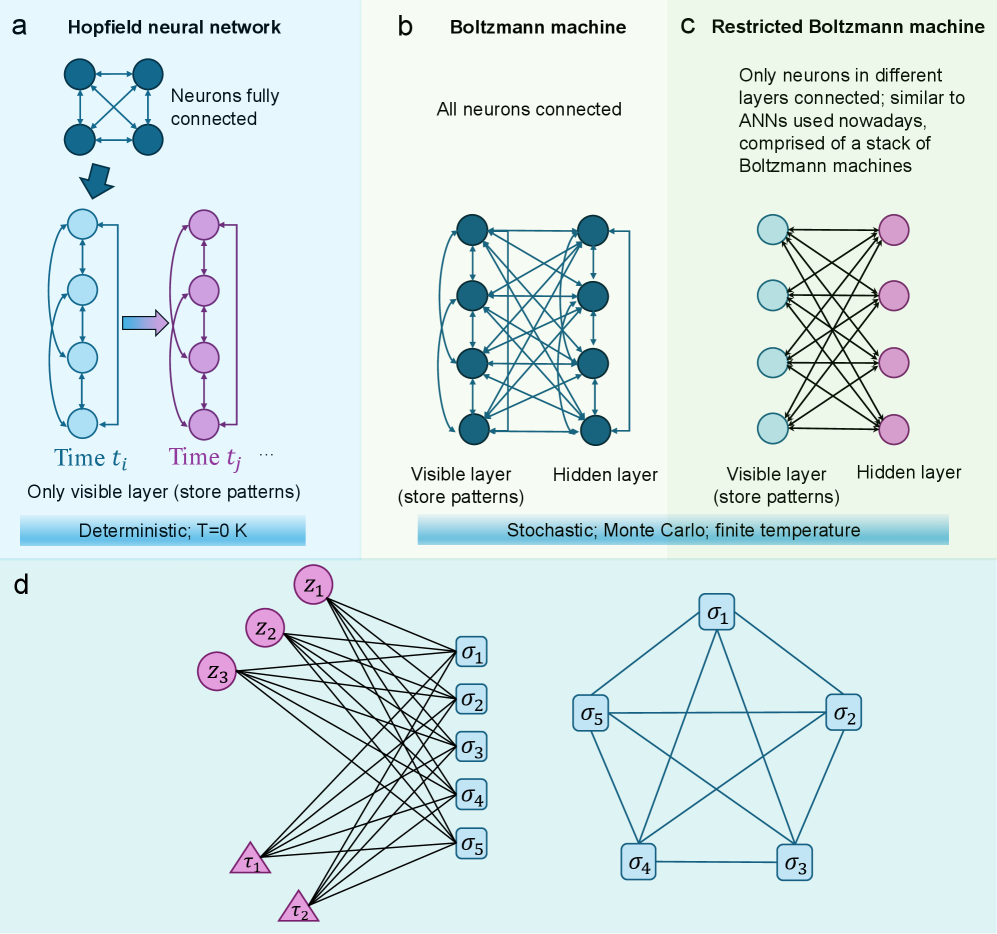

The image presents a comparative overview of different neural network architectures: Hopfield network, Boltzmann machine, Restricted Boltzmann machine, and a final unnamed network. Each architecture is illustrated with a diagram showing the connections between neurons and key characteristics.

### Components/Axes

* **Section a:** Hopfield neural network

* Diagram shows a fully connected network of neurons.

* A downward arrow indicates a transition to a layered structure.

* Two columns of neurons are labeled "Time t\_i" and "Time t\_j".

* Text: "Neurons fully connected", "Only visible layer (store patterns)", "Deterministic; T=0 K"

* **Section b:** Boltzmann machine

* Diagram shows a fully connected network of neurons with self-loops.

* Labels: "Visible layer (store patterns)", "Hidden layer"

* Text: "All neurons connected", "Stochastic; Monte Carlo; finite temperature"

* **Section c:** Restricted Boltzmann machine

* Diagram shows a bipartite network with connections only between layers.

* Labels: "Visible layer (store patterns)", "Hidden layer"

* Text: "Only neurons in different layers connected; similar to ANNs used nowadays, comprised of a stack of Boltzmann machines"

* **Section d:** Unnamed Network

* Two diagrams showing different network structures.

* The left diagram has three groups of nodes: z₁, z₂, z₃ in one layer, σ₁ to σ₅ in another, and two nodes labeled τ₁, τ₂.

* The right diagram has nodes labeled σ₁, σ₂, σ₃, σ₄, σ₅.

### Detailed Analysis

* **Hopfield Network (a):**

* Initial state: A fully connected network of approximately 4 neurons.

* Transition: Transforms into a layered structure with two columns, each containing approximately 4 neurons.

* Time t\_i: Neurons are light blue.

* Time t\_j: Neurons are purple.

* The network is deterministic and operates at absolute zero temperature (T=0 K).

* **Boltzmann Machine (b):**

* A fully connected network of approximately 4 neurons in each layer.

* All neurons are interconnected, including self-loops.

* Two layers are labeled "Visible layer" and "Hidden layer".

* The network is stochastic and uses Monte Carlo methods at a finite temperature.

* **Restricted Boltzmann Machine (c):**

* A bipartite network with two layers.

* The "Visible layer" contains approximately 4 light blue neurons.

* The "Hidden layer" contains approximately 4 purple neurons.

* Connections exist only between the visible and hidden layers.

* **Unnamed Network (d):**

* Left Diagram:

* Top layer: 3 purple nodes labeled z₁, z₂, z₃.

* Middle layer: 5 light blue square nodes labeled σ₁, σ₂, σ₃, σ₄, σ₅.

* Bottom layer: 2 purple triangle nodes labeled τ₁, τ₂.

* All top layer nodes are connected to all middle layer nodes.

* All middle layer nodes are connected to all bottom layer nodes.

* Right Diagram:

* 5 light blue square nodes labeled σ₁, σ₂, σ₃, σ₄, σ₅.

* Each node is connected to the other four nodes, forming a pentagon with all diagonals drawn (a complete graph).
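Panel a's deterministic, T = 0 behaviour can be made concrete with a short sketch. It assumes ±1 neurons, the standard Hebbian storage rule and sequential (asynchronous) sign updates in a fixed order. None of these specifics are stated in the figure, and the function names and patterns are hypothetical.

```python
def train_hebbian(patterns):
    """Hebbian rule (assumed): W_ij = (1/N) * sum over patterns of xi_i * xi_j, with W_ii = 0."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def recall(w, state, max_sweeps=20):
    """Deterministic (T = 0) asynchronous dynamics: s_i <- sign(sum_j W_ij s_j)."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):  # fixed visiting order keeps the whole run deterministic
            h = sum(w[i][j] * s[j] for j in range(n))
            new = s[i] if h == 0 else (1 if h > 0 else -1)  # keep the state on a tie
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:  # fixed point reached (a stored pattern or a spurious state)
            break
    return s


# Two hypothetical, mutually orthogonal 8-neuron patterns.
p1 = [1, 1, 1, 1, -1, -1, -1, -1]
p2 = [1, -1, 1, -1, 1, -1, 1, -1]
w = train_hebbian([p1, p2])
noisy = [-1] + p1[1:]          # p1 with its first neuron flipped
print(recall(w, noisy) == p1)  # True
```

The fixed update order is a simplification. Random asynchronous order is also common and still deterministic per update.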

### Key Observations

* The diagrams illustrate the evolution from fully connected networks (Hopfield, Boltzmann) to more structured, layered networks (Restricted Boltzmann).

* The transition from deterministic to stochastic models is highlighted.

* The unnamed network in section d shows a more complex architecture with different types of nodes and connections.

### Interpretation

The image provides a visual comparison of different neural network architectures, emphasizing their connectivity, layering, and operational characteristics. The progression from Hopfield to Boltzmann to Restricted Boltzmann machines demonstrates the evolution towards more structured and efficient network designs. The unnamed network in section d suggests further variations in network architecture, potentially involving different types of nodes and connections for specialized tasks. The distinction between deterministic and stochastic models highlights the different approaches to learning and inference in these networks.
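The deterministic/stochastic distinction can be sketched at the level of a single unit. The snippet assumes ±1 units and the heat-bath (Glauber) rule, which is one common Monte Carlo choice. The figure only says "Monte Carlo" and does not name a rule. As T approaches 0, the update probability collapses to the sign rule of the T = 0 Hopfield network.

```python
import math
import random


def prob_up(h, T):
    """Heat-bath rule (assumed) for a +/-1 unit with local field h at temperature T:
    P(s = +1) = 1 / (1 + exp(-2h/T)), written with tanh to avoid overflow."""
    if T == 0:
        # Zero-temperature limit: the deterministic sign rule (a tie is left at 0.5).
        return 1.0 if h > 0 else (0.0 if h < 0 else 0.5)
    return 0.5 * (1.0 + math.tanh(h / T))


def stochastic_update(h, T, rng):
    """Draw the new state of one unit from the heat-bath probability."""
    return 1 if rng.random() < prob_up(h, T) else -1


for T in (2.0, 0.5, 0.05, 0.0):
    print(T, round(prob_up(0.5, T), 4))
```

For a fixed positive field, the probability of the "+1" state rises toward 1 as T shrinks. At finite T the unit can still move against its field, which is what lets a Boltzmann machine explore states.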


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Neural Network Architectures

### Overview

The image presents a comparative diagram illustrating four different neural network architectures: Hopfield neural network, Boltzmann machine, Restricted Boltzmann machine, and a detailed depiction of a specific network structure labeled 'd'. The diagram focuses on the connectivity and layer structure of each network type.

### Components/Axes

The diagram is divided into four sections labeled 'a', 'b', 'c', and 'd'. Each section represents a different neural network architecture.

- **a (Hopfield Neural Network):** Depicts a network evolving over time (t<sub>i</sub> to t<sub>j</sub>). It highlights a "visible layer" that stores patterns. The text indicates it is "Deterministic; T=0 K".

- **b (Boltzmann Machine):** Shows a network with "Visible layer" and "Hidden layer". The text indicates it is "Stochastic; Monte Carlo; finite temperature".

- **c (Restricted Boltzmann Machine):** Similar to the Boltzmann machine, with "Visible layer" and "Hidden layer", but with restricted connectivity. The text states it is "similar to ANNs used nowadays, comprised of a stack of Boltzmann machines".

- **d:** A more complex network with nodes labeled z<sub>1</sub>, z<sub>2</sub>, z<sub>3</sub>, τ<sub>1</sub>, τ<sub>2</sub> and σ<sub>1</sub>, σ<sub>2</sub>, σ<sub>3</sub>, σ<sub>4</sub>, σ<sub>5</sub>. Connections between nodes are indicated by lines with arrowheads.

### Detailed Analysis

**a (Hopfield Neural Network):**

The network consists of interconnected nodes. At time t<sub>i</sub>, the network is in one state, and at time t<sub>j</sub>, it has evolved to a different state. The arrows indicate the direction of influence between neurons. The network is described as deterministic with a temperature of 0 Kelvin.

**b (Boltzmann Machine):**

The network has two layers: a visible layer and a hidden layer. All neurons are connected to each other. The network is described as stochastic, utilizing Monte Carlo methods, and operating at a finite temperature.
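Because the hidden units are part of the model, the probability of a visible pattern in a Boltzmann machine comes from summing the hidden units out of the Boltzmann distribution. This brute-force sketch makes several assumptions: ±1 units, a symmetric weight matrix with zero diagonal, and an energy with pairwise and bias terms. The weights are hypothetical. The 2^n enumeration is feasible only for toy sizes, which is why sampling methods such as Monte Carlo are used in practice.

```python
import itertools
import math


def energy(s, w, b):
    """E(s) = -sum_{i<j} W_ij s_i s_j - sum_i b_i s_i for +/-1 units (W symmetric)."""
    n = len(s)
    e = -sum(b[i] * s[i] for i in range(n))
    for i in range(n):
        for j in range(i + 1, n):
            e -= w[i][j] * s[i] * s[j]
    return e


def visible_marginal(w, b, n_visible, T=1.0):
    """Exact p(v) = sum_h exp(-E(v, h)/T) / Z by brute force over all 2^n states.
    Units 0..n_visible-1 are visible; the remaining (hidden) units are summed out."""
    totals = {}
    for s in itertools.product((-1, 1), repeat=len(b)):
        v = s[:n_visible]
        totals[v] = totals.get(v, 0.0) + math.exp(-energy(s, w, b) / T)
    z = sum(totals.values())  # partition function
    return {v: t / z for v, t in totals.items()}


# Hypothetical 2 visible + 2 hidden units, every pair coupled with weight 0.5, no biases.
n = 4
w = [[0.0 if i == j else 0.5 for j in range(n)] for i in range(n)]
b = [0.0] * n
p_v = visible_marginal(w, b, n_visible=2)
print(p_v[(1, 1)] > p_v[(1, -1)])  # True: positive couplings favour aligned visible units
```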

**c (Restricted Boltzmann Machine):**

Similar to the Boltzmann machine, it has a visible and hidden layer. However, the connections are restricted to only between different layers. It is described as being similar to modern Artificial Neural Networks (ANNs) and built from stacked Boltzmann machines.
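Restricting connections to pairs of units in different layers has a concrete consequence. Given the visible layer, the hidden units are conditionally independent, so p(h | v) factorizes into per-unit logistic terms. The sketch assumes binary 0/1 units and the standard RBM energy with visible biases a, hidden biases b and weights W, all with hypothetical values. It checks the factorized form against a brute-force computation from the joint distribution.

```python
import itertools
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def rbm_energy(v, h, W, a, b):
    """E(v, h) = -sum_i a_i v_i - sum_j b_j h_j - sum_ij v_i W_ij h_j (binary units)."""
    e = -sum(ai * vi for ai, vi in zip(a, v)) - sum(bj * hj for bj, hj in zip(b, h))
    e -= sum(v[i] * W[i][j] * h[j] for i in range(len(v)) for j in range(len(h)))
    return e


def p_hidden_given_visible(v, W, b):
    """No hidden-hidden edges, so p(h_j = 1 | v) = sigmoid(b_j + sum_i v_i W_ij) independently."""
    return [sigmoid(b[j] + sum(v[i] * W[i][j] for i in range(len(v)))) for j in range(len(b))]


def brute_force_conditional(v, h, W, a, b):
    """p(h | v) computed directly from the joint, for checking the factorized form."""
    num = math.exp(-rbm_energy(v, h, W, a, b))
    den = sum(math.exp(-rbm_energy(v, hh, W, a, b))
              for hh in itertools.product((0, 1), repeat=len(b)))
    return num / den


W = [[0.4, -0.7], [1.1, 0.2], [-0.3, 0.5]]  # hypothetical 3 visible x 2 hidden weights
a = [0.1, -0.2, 0.0]
b = [0.3, -0.1]
v = (1, 0, 1)
p = p_hidden_given_visible(v, W, b)
for h in itertools.product((0, 1), repeat=len(b)):
    factorized = math.prod(p[j] if h[j] else 1 - p[j] for j in range(len(b)))
    assert abs(factorized - brute_force_conditional(v, h, W, a, b)) < 1e-12
```

The same argument in the other direction gives a factorized p(v | h). That is what makes block Gibbs sampling (all hidden units at once, then all visible units) possible.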

**d:**

This network has two input nodes (τ<sub>1</sub>, τ<sub>2</sub>) connected to a set of hidden nodes (z<sub>1</sub>, z<sub>2</sub>, z<sub>3</sub>). The hidden nodes are then connected to a set of output nodes (σ<sub>1</sub>, σ<sub>2</sub>, σ<sub>3</sub>, σ<sub>4</sub>, σ<sub>5</sub>). The connections are directional, as indicated by the arrowheads. The network appears to be fully connected between the input and hidden layers and between the hidden and output layers.

### Key Observations

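- The diagram highlights the evolution of neural network architectures. It runs from the fully connected Hopfield network, through the layered but still fully connected Boltzmann machine, to the Restricted Boltzmann machine, whose connections are limited to pairs of units in different layers.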

- The Boltzmann and Restricted Boltzmann machines introduce the concept of hidden layers, which are absent in the Hopfield network.

- The Restricted Boltzmann machine introduces a restriction on connectivity, which is a key difference from the Boltzmann machine.

- Diagram 'd' presents a specific network configuration with labeled nodes and directional connections, suggesting a particular implementation or model.

### Interpretation

The diagram illustrates the progression of neural network design, moving from simpler, fully connected networks (Hopfield) to more complex, layered architectures (Boltzmann, Restricted Boltzmann). The introduction of hidden layers and restricted connectivity in the Boltzmann and Restricted Boltzmann machines allows for more sophisticated pattern recognition and representation learning. The diagram suggests that the Restricted Boltzmann machine is a precursor to modern ANNs. The specific network in 'd' likely represents a particular application or model within the broader framework of neural networks, showcasing a detailed connection scheme between input, hidden, and output layers. The inclusion of temperature parameters (T=0 K for Hopfield) and stochastic methods (Monte Carlo for Boltzmann) indicates the consideration of thermodynamic principles in the design and operation of these networks.
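The role of temperature can be illustrated by enumerating a small network's Boltzmann distribution. As T drops, probability concentrates on the minimum-energy states, which is the regime the T = 0 Hopfield description corresponds to. The sketch assumes ±1 units, no biases and Hebbian weights storing one hypothetical pattern. The two ground states are that pattern and its sign-flip.

```python
import itertools
import math


def ising_energy(s, w):
    """E(s) = -sum_{i<j} W_ij s_i s_j (no bias terms, by assumption)."""
    n = len(s)
    return -sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(i + 1, n))


def ground_state_mass(w, T):
    """Total Boltzmann probability of the minimum-energy states at temperature T > 0."""
    states = list(itertools.product((-1, 1), repeat=len(w)))
    energies = [ising_energy(s, w) for s in states]
    e_min = min(energies)
    # Shifting by e_min leaves the distribution unchanged and avoids overflow at small T.
    weights = [math.exp(-(e - e_min) / T) for e in energies]
    z = sum(weights)
    return sum(wt for wt, e in zip(weights, energies) if e == e_min) / z


# Hebbian weights storing the single hypothetical pattern p.
p = [1, -1, 1, -1]
w = [[0.0 if i == j else p[i] * p[j] / len(p) for j in range(len(p))] for i in range(len(p))]
for T in (10.0, 1.0, 0.05):
    print(T, round(ground_state_mass(w, T), 3))
```

At high T the mass approaches the uniform share 2/16. At low T nearly all probability sits on the stored pattern and its sign-flip.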


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Neural Network Architectures Comparison

### Overview

The image is a technical diagram comparing four types of neural network architectures, labeled **a**, **b**, **c**, and **d**. It visually explains the structural differences between Hopfield networks, Boltzmann machines, Restricted Boltzmann Machines (RBMs), and a more complex probabilistic graphical model. The diagram uses color-coded nodes and connection lines to illustrate connectivity patterns and includes descriptive text for each model.

### Components/Axes

The image is divided into four main panels:

* **Panel a (Top Left):** Titled "Hopfield neural network". Contains two sub-diagrams.

* **Panel b (Top Center):** Titled "Boltzmann machine". Contains one diagram.

* **Panel c (Top Right):** Titled "Restricted Boltzmann machine". Contains one diagram.

* **Panel d (Bottom):** Contains two unlabeled diagrams showing more complex network structures.

**Common Elements:**

* **Nodes:** Mostly represented as circles (panel d also uses squares and triangles). Colors are used to distinguish layers or types (e.g., dark blue, light blue, pink).

* **Connections:** Represented as lines between nodes. Arrows indicate directed connections; plain lines indicate undirected connections.

* **Text Labels:** Provide model names, descriptions of connectivity, layer functions, and operational characteristics.

### Detailed Analysis

#### **Panel a: Hopfield Neural Network**

* **Top Diagram:** Shows four dark blue circles arranged in a square, fully connected with bidirectional arrows. Label: "Neurons fully connected".

* **Bottom Diagram:** Shows two vertical chains of four light blue circles each, connected sequentially with downward arrows. An arrow points from the left chain to the right chain.

* Left chain label: "Time t_i"

* Right chain label: "Time t_j ..."

* **Descriptive Text:**

* "Only visible layer (store patterns)"

* "Deterministic; T=0 K" (where T likely represents temperature).

#### **Panel b: Boltzmann Machine**

* **Diagram:** Shows two vertical columns of four dark blue circles each. The left column is labeled "Visible layer (store patterns)" and the right "Hidden layer". Every node in the visible layer is connected to every node in the hidden layer, and all nodes within each layer are also fully connected to each other. Connections are undirected lines.

* **Descriptive Text:**

* "All neurons connected"

* "Stochastic; Monte Carlo; finite temperature"

#### **Panel c: Restricted Boltzmann Machine (RBM)**

* **Diagram:** Shows two vertical columns. The left column has four light blue circles labeled "Visible layer (store patterns)". The right column has four pink circles labeled "Hidden layer". Connections exist **only** between nodes in the visible layer and nodes in the hidden layer (a bipartite graph). There are no connections within the same layer.

* **Descriptive Text:**

* "Only neurons in different layers connected; similar to ANNs used nowadays, comprised of a stack of Boltzmann machines"

#### **Panel d: Complex Probabilistic Models**

* **Left Diagram:** A more intricate network.

* **Pink Circles (Top Left):** Labeled `z₁`, `z₂`, `z₃`. These connect to all blue squares.

* **Blue Squares (Right):** Labeled `σ₁`, `σ₂`, `σ₃`, `σ₄`, `σ₅`. These are fully interconnected with each other and also receive connections from the pink circles and pink triangles.

* **Pink Triangles (Bottom Left):** Labeled `τ₁`, `τ₂`. These connect to all blue squares.

* **Right Diagram:** A pentagon-shaped network.

* **Blue Squares (Vertices):** Labeled `σ₁`, `σ₂`, `σ₃`, `σ₄`, `σ₅`.

* **Connections:** Each `σ` node is connected to every other `σ` node, forming a complete graph (K₅). The connections are undirected lines.
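The connectivity differences described for panels a through d can be counted directly. This sketch assumes the node counts given above: four units per layer and five `σ` nodes. The node names are placeholders. It also confirms that the RBM's edge set is the Boltzmann machine's with all intra-layer edges removed.

```python
from itertools import combinations


def complete_graph(nodes):
    """Every pair of nodes connected (undirected), as described for panels a, b and d (right)."""
    return set(combinations(nodes, 2))


def bipartite_graph(left, right):
    """Edges only between the two layers, as described for the RBM in panel c."""
    return {(u, v) for u in left for v in right}


visible = ["v1", "v2", "v3", "v4"]  # placeholder names, four units per layer
hidden = ["h1", "h2", "h3", "h4"]
sigmas = ["s1", "s2", "s3", "s4", "s5"]

graphs = {
    "Hopfield (a)": complete_graph(visible),
    "Boltzmann machine (b)": complete_graph(visible + hidden),
    "RBM (c)": bipartite_graph(visible, hidden),
    "sigma pentagon (d, right)": complete_graph(sigmas),
}
for name, edges in graphs.items():
    print(f"{name}: {len(edges)} edges")

# The RBM is the Boltzmann machine with all within-layer edges removed.
removed = graphs["Boltzmann machine (b)"] - graphs["RBM (c)"]
assert removed == complete_graph(visible) | complete_graph(hidden)
```

The counts (6, 28, 16 and 10 edges) show how quickly full connectivity grows: n(n - 1)/2 edges for n units, versus n_v · n_h for a bipartite RBM.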

### Key Observations

1. **Connectivity Evolution:** The diagrams show a clear progression from fully connected networks (Hopfield, Boltzmann) to restricted, layer-based connectivity (RBM), which the figure describes as "similar to ANNs used nowadays".

2. **Deterministic vs. Stochastic:** A key distinction is made between the deterministic Hopfield network (operating at T=0 K) and the stochastic Boltzmann/RBM models (using Monte Carlo methods at finite temperature).

3. **Layer Function:** In models **a**, **b**, and **c**, the "visible layer" is explicitly noted to "store patterns," indicating its role as the input/observation layer.

4. **Structural Analogy:** Panel **d**'s right diagram (five `σ` nodes in a pentagon with every pair connected, K₅) has the same all-to-all connectivity as the Hopfield network in panel **a** (four nodes, K₄), though it is not the same graph. This suggests a similar fully connected pattern used in a different context.

5. **Model Complexity:** Panel **d**'s left diagram introduces multiple latent variable types (`z`, `τ`) influencing a set of interconnected observed variables (`σ`), representing a more advanced probabilistic graphical model.

### Interpretation

This diagram serves as a pedagogical tool to contrast foundational energy-based and probabilistic neural network models. It highlights the architectural constraints that define each model's capabilities and computational methods.

* **Hopfield Networks (a)** are presented as simple, deterministic associative memories with a single layer of fully connected neurons. They can store and retrieve patterns, but their capacity is limited (roughly 0.14N random patterns for N neurons under Hebbian learning), and their dynamics can settle into spurious states.

* **Boltzmann Machines (b)** introduce stochasticity and a separation into visible and hidden layers, allowing them to model more complex probability distributions, but their fully connected nature makes them computationally intractable for large systems.

* **Restricted Boltzmann Machines (c)** are shown as the critical simplification that enables practical learning. By removing intra-layer connections, they become tractable to train (e.g., using Contrastive Divergence) and can be stacked to form Deep Belief Networks, bridging the gap to modern deep learning.

* **Panel d** likely illustrates extensions or related models. The left diagram may represent a model with multiple types of latent variables (`z`, `τ`) influencing observed data (`σ`), common in advanced topic models or structured prediction tasks. The right diagram reinforces the concept of a fully connected graph, perhaps as a component within a larger model or to contrast with the restricted connectivity of RBMs.
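Contrastive Divergence, mentioned above as a way to train RBMs, can be sketched in a few lines. The code assumes binary units, CD-1 (a single Gibbs step for the negative phase), per-example updates and a toy dataset of two hypothetical patterns. The learning rate, epoch count and initialization are illustrative choices, not values from the source. Reconstruction error serves only as a rough progress signal.

```python
import math
import random


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def hidden_probs(v, W, b):
    return [sigmoid(b[j] + sum(v[i] * W[i][j] for i in range(len(v)))) for j in range(len(b))]


def visible_probs(h, W, a):
    return [sigmoid(a[i] + sum(W[i][j] * h[j] for j in range(len(h)))) for i in range(len(a))]


def cd1_step(v0, W, a, b, lr, rng):
    """One CD-1 update on a single binary example; modifies W, a, b in place."""
    ph0 = hidden_probs(v0, W, b)
    h0 = [1 if rng.random() < p else 0 for p in ph0]  # sample hidden units
    pv1 = visible_probs(h0, W, a)
    v1 = [1 if rng.random() < p else 0 for p in pv1]  # one-step reconstruction
    ph1 = hidden_probs(v1, W, b)
    for i in range(len(a)):
        for j in range(len(b)):
            W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])  # positive minus negative phase
        a[i] += lr * (v0[i] - v1[i])
    for j in range(len(b)):
        b[j] += lr * (ph0[j] - ph1[j])


def reconstruction_error(data, W, a, b):
    """Mean squared error of the mean-field reconstruction v -> p(h|v) -> p(v|h)."""
    total = 0.0
    for v in data:
        pv = visible_probs(hidden_probs(v, W, b), W, a)
        total += sum((vi - pi) ** 2 for vi, pi in zip(v, pv))
    return total / len(data)


rng = random.Random(0)
data = [[1, 1, 0, 0], [0, 0, 1, 1]]  # toy binary patterns (hypothetical)
W = [[rng.gauss(0.0, 0.01) for _ in range(2)] for _ in range(4)]  # 4 visible x 2 hidden
a, b = [0.0] * 4, [0.0] * 2
before = reconstruction_error(data, W, a, b)
for _ in range(2000):
    for v in data:
        cd1_step(v, W, a, b, lr=0.1, rng=rng)
after = reconstruction_error(data, W, a, b)
print(round(before, 3), round(after, 3))
```

The update needs only one conditional sampling step in each direction, which is feasible because of the RBM's bipartite structure.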

The overall narrative is one of increasing structural refinement for practical machine learning: moving from theoretically interesting but intractable fully connected models to restricted, layer-wise architectures that balance expressive power with computational feasibility, ultimately leading to the ANNs used today.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Neural Network Architectures Comparison

### Overview

The image compares four neural network architectures: Hopfield neural network (a), Boltzmann machine (b), Restricted Boltzmann machine (c), and a hybrid visible-hidden layer configuration (d). Each section includes diagrams of neuron connections, layer types, and operational characteristics.

### Components/Axes

- **Labels**:

- **a**: Hopfield neural network

- **b**: Boltzmann machine

- **c**: Restricted Boltzmann machine

- **d**: Hybrid visible-hidden layer configuration

- **Diagram Elements**:

- **Nodes**:

- Blue circles (visible layer, store patterns)

- Pink circles (hidden layer)

- Blue squares (σ₁–σ₅, hidden layer outputs)

- Pink triangles (τ₁–τ₂, input patterns)

- **Connections**:

- Fully connected (a, b)

- Restricted connections (c, d)

- **Text Annotations**:

- "Deterministic; T=0 K" (a)

- "Stochastic; Monte Carlo; finite temperature" (b)

- "Only neurons in different layers connected" (c)

### Detailed Analysis

#### Section a (Hopfield Neural Network)

- **Structure**: Fully connected neurons forming a single visible layer; there is no hidden layer.

- **Flow**: The network state at time *t<sub>i</sub>* is updated to a new state at time *t<sub>j</sub>*.

- **Key Features**:

- Deterministic operation (T=0 K).

- Visible layer stores patterns explicitly.
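The deterministic T = 0 dynamics of section a have a useful property. With symmetric weights and no self-connections, an asynchronous sign update never increases the energy E = -(1/2) Σ_ij W_ij s_i s_j, so the state settles into a fixed point. The sketch assumes ±1 units and random symmetric weights generated purely for illustration.

```python
import random


def hopfield_energy(s, w):
    """E = -(1/2) * sum_ij W_ij s_i s_j (symmetric W, zero diagonal assumed)."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def run_and_track_energy(w, s, sweeps=5):
    """Sequential sign updates; records the energy after every single-unit update."""
    s = list(s)
    n = len(s)
    trace = [hopfield_energy(s, w)]
    for _ in range(sweeps):
        for i in range(n):
            h = sum(w[i][j] * s[j] for j in range(n))
            if h != 0:  # on an exact tie, keep the current state
                s[i] = 1 if h > 0 else -1
            trace.append(hopfield_energy(s, w))
    return s, trace


rng = random.Random(1)
n = 6
w = [[0.0] * n for _ in range(n)]
for i in range(n):
    for j in range(i + 1, n):
        w[i][j] = w[j][i] = rng.gauss(0.0, 1.0)  # hypothetical symmetric couplings
s0 = [rng.choice((-1, 1)) for _ in range(n)]
final, trace = run_and_track_energy(w, s0)
print(all(e1 <= e0 + 1e-12 for e0, e1 in zip(trace, trace[1:])))  # True
```

Flipping s_i to sign(h_i) changes the energy by -2|h_i|, which is why the trace can only go down or stay flat.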

#### Section b (Boltzmann Machine)

- **Structure**: Fully connected visible and hidden layers.

- **Flow**: Stochastic interactions between layers via Monte Carlo sampling.

- **Key Features**:

- Finite temperature enables probabilistic pattern storage.

- No explicit input/output separation.
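The Monte Carlo sampling referred to for section b can be sketched with single-spin-flip Metropolis updates at a finite temperature. The figure does not say which Monte Carlo rule is meant, so Metropolis is an assumption here. The ±1 units and the three-unit couplings are hypothetical too. The sketch compares visit frequencies with the exact Boltzmann distribution.

```python
import itertools
import math
import random


def energy(s, w, b):
    n = len(s)
    pair = sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(i + 1, n))
    return -pair - sum(b[i] * s[i] for i in range(n))


def metropolis_frequencies(w, b, T, steps, seed=0):
    """Single-spin-flip Metropolis chain; returns how often each state was visited."""
    rng = random.Random(seed)
    n = len(b)
    s = [rng.choice((-1, 1)) for _ in range(n)]
    counts = {}
    for _ in range(steps):
        i = rng.randrange(n)
        h = b[i] + sum(w[i][j] * s[j] for j in range(n) if j != i)
        d_e = 2 * s[i] * h  # energy change if s_i is flipped
        if d_e <= 0 or rng.random() < math.exp(-d_e / T):
            s[i] = -s[i]  # accept the proposed flip
        key = tuple(s)
        counts[key] = counts.get(key, 0) + 1  # a rejected move counts the current state again
    return {k: c / steps for k, c in counts.items()}


def exact_distribution(w, b, T):
    states = list(itertools.product((-1, 1), repeat=len(b)))
    weights = [math.exp(-energy(s, w, b) / T) for s in states]
    z = sum(weights)
    return {s: wt / z for s, wt in zip(states, weights)}


w = [[0.0, 0.5, -0.3], [0.5, 0.0, 0.2], [-0.3, 0.2, 0.0]]  # hypothetical symmetric couplings
b = [0.1, 0.0, -0.2]
T = 1.0
est = metropolis_frequencies(w, b, T, steps=200_000)
ref = exact_distribution(w, b, T)
print(max(abs(est.get(s, 0.0) - p) for s, p in ref.items()))
```

For larger networks the exact reference is unavailable, and the sampled frequencies are what stand in for the Boltzmann distribution.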

#### Section c (Restricted Boltzmann Machine)

- **Structure**: Visible and hidden layers with **only inter-layer connections** (no intra-layer connections).

- **Flow**: Similar to modern ANNs, with visible layer storing patterns.

- **Key Features**:

- Restricted connectivity reduces complexity.

- Stacked RBMs form deep learning architectures.
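Stacking RBMs, as in deep belief networks, means feeding the hidden activations of one RBM in as the visible data of the next. The sketch below shows only this upward pass, using mean activations p(h = 1 | v). The layer sizes (4, 3 and 2 units) and the weights are hypothetical. In greedy layer-wise training, each layer would first be trained, for example with CD-1, on the outputs of the layer beneath it.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def rbm_up(v, W, b):
    """Mean hidden activations p(h_j = 1 | v) of one RBM layer (binary units assumed)."""
    return [sigmoid(b[j] + sum(v[i] * W[i][j] for i in range(len(v)))) for j in range(len(b))]


def stack_up(v, layers):
    """Each layer's hidden output becomes the next layer's visible input."""
    x = list(v)
    for W, b in layers:
        x = rbm_up(x, W, b)
    return x


# Hypothetical, untrained weights: 4 visible -> 3 hidden -> 2 hidden.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.4, -0.6], [-0.1, 0.2, 0.7], [0.0, -0.3, 0.2]], [0.0, 0.1, -0.1]),
    ([[0.8, -0.5], [-0.4, 0.6], [0.2, 0.3]], [0.0, 0.0]),
]
top = stack_up([1, 0, 1, 1], layers)
print([round(x, 3) for x in top])
```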

#### Section d (Hybrid Configuration)

- **Structure**: Visible layer (τ₁–τ₂) connected to hidden layer (σ₁–σ₅) via fully connected edges.

- **Flow**: Input patterns (τ) propagate through hidden layer (σ) with no feedback loops.

- **Key Features**:

- Simplified architecture compared to RBMs.

- No explicit temperature or stochasticity mentioned.

### Key Observations

1. **Deterministic vs. Stochastic**:

- Hopfield (a) operates deterministically (T=0 K), while Boltzmann machines (b, c) use stochastic sampling.

2. **Connectivity**:

- RBM (c) restricts connections to inter-layer, unlike fully connected Boltzmann machines (b).

3. **Pattern Storage**:

- Visible layers (a, b, c) explicitly store patterns, while hidden layers (b, c) learn implicit representations.

### Interpretation

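- **Hopfield Networks** (a) are well suited to associative memory tasks but scale poorly. Their storage capacity is only a small fraction of the number of neurons, and their deterministic dynamics can get trapped in spurious minima.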

- **Boltzmann Machines** (b) introduce stochasticity for better generalization but suffer from high computational cost.

- **RBMs** (c) address this by restricting connections, enabling efficient training and forming the basis of deep belief networks.

- **Section d** illustrates a simplified feedforward architecture, emphasizing direct input-to-output mapping without hidden layer interactions.

The progression from Hopfield to RBM reflects advancements in balancing memory capacity, computational efficiency, and scalability in neural networks.
