## Diagram: Neural Network Architectures Comparison

### Overview

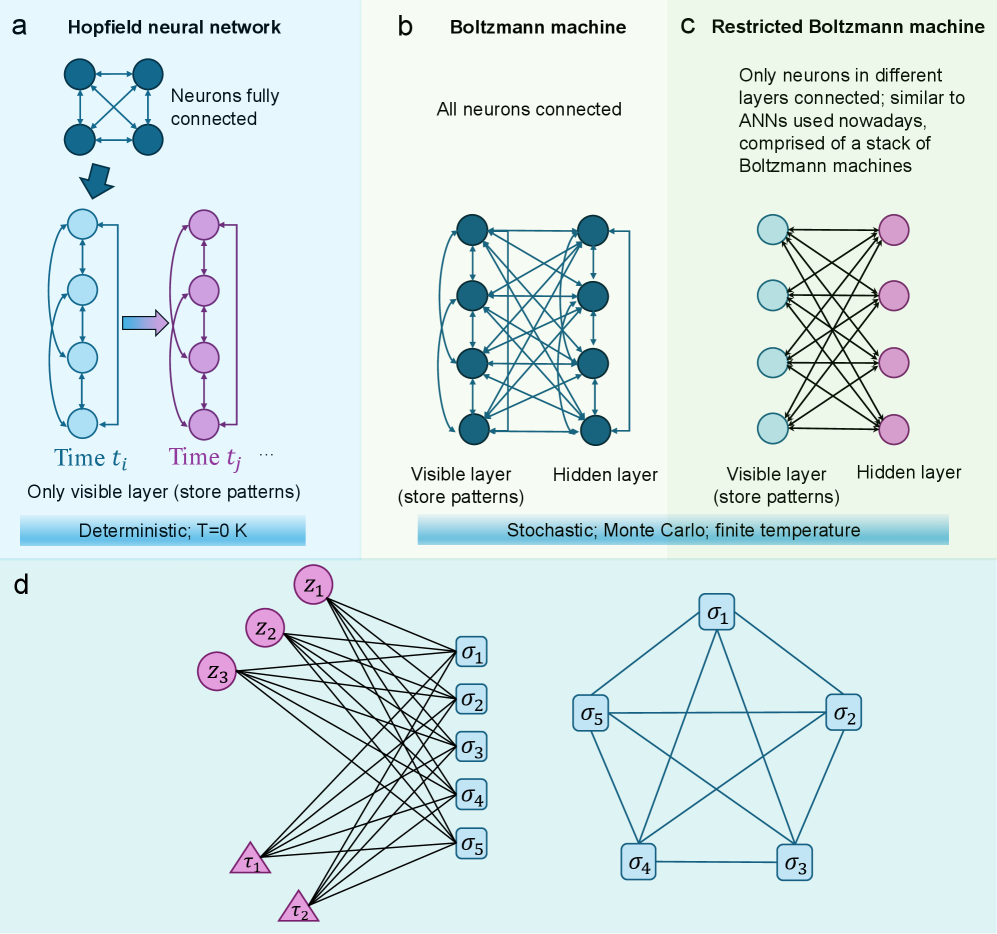

The image compares four neural network architectures: Hopfield neural network (a), Boltzmann machine (b), Restricted Boltzmann machine (c), and a hybrid visible-hidden layer configuration (d). Each section includes diagrams of neuron connections, layer types, and operational characteristics.

### Components/Axes

- **Labels**:

  - **a**: Hopfield neural network

  - **b**: Boltzmann machine

  - **c**: Restricted Boltzmann machine

  - **d**: Hybrid visible-hidden layer configuration

- **Diagram Elements**:

  - **Nodes**:

    - Blue circles (visible layer, store patterns)

    - Pink circles (hidden layer, store patterns)

    - Blue squares (σ₁–σ₅, hidden layer outputs)

    - Pink triangles (τ₁–τ₂, input patterns)

  - **Connections**:

    - Fully connected (a, b)

    - Restricted connections (c, d)

  - **Text Annotations**:

    - "Deterministic; T=0 K" (a)

    - "Stochastic; Monte Carlo; finite temperature" (b)

    - "Only neurons in different layers connected" (c)

### Detailed Analysis

#### Section a (Hopfield Neural Network)

- **Structure**: Fully connected neurons. A standard Hopfield network consists of a single layer of visible units and has no hidden layer.

- **Flow**: Input patterns (τ₁–τ₂) at time *t<sub>i</sub>* propagate to output patterns (σ₁–σ₅) at time *t<sub>j</sub>*.

- **Key Features**:

  - Deterministic operation (T=0 K).

  - Visible layer stores patterns explicitly.
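
The deterministic, zero-temperature operation noted for panel (a) can be sketched in a few lines. This is a minimal illustration, not the figure's own method: it assumes ±1 neurons, a Hebbian weight rule, and asynchronous sign updates, and the names `train_hebbian` and `recall` are hypothetical helpers introduced here.

```python
def train_hebbian(patterns):
    """Build a symmetric weight matrix from +/-1 patterns (assumed Hebbian rule)."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j] / n
    return w


def recall(w, state, max_sweeps=10):
    """Deterministic (T = 0) asynchronous updates: each neuron takes the sign of its local field."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            field = sum(w[i][j] * s[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != s[i]:
                s[i] = new_value
                changed = True
        if not changed:  # fixed point reached, nothing left to update
            break
    return s


# Store one pattern, corrupt a single neuron, and let the network relax.
stored = [1, -1, 1, -1, 1, -1, 1, -1]
w = train_hebbian([stored])
noisy = list(stored)
noisy[0] = -noisy[0]
recovered = recall(w, noisy)
```

Because there is no randomness in the update, the same starting state always relaxes to the same fixed point, which is what the "Deterministic; T=0 K" annotation describes.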

#### Section b (Boltzmann Machine)

- **Structure**: Fully connected visible and hidden layers.

- **Flow**: Stochastic interactions between layers via Monte Carlo sampling.

- **Key Features**:

  - Finite temperature enables probabilistic pattern storage.

  - No explicit input/output separation.
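
The stochastic, finite-temperature behaviour noted for panel (b) can be illustrated with a single Monte Carlo sweep. This is a sketch under assumptions: ±1 units, heat-bath (Glauber) updates with the Boltzmann constant set to 1, and a hypothetical helper `glauber_sweep`. It treats all units alike, since the visible/hidden distinction matters for learning rather than for sampling.

```python
import math
import random


def glauber_sweep(w, s, temperature, rng):
    """One in-place Monte Carlo sweep: each unit is resampled given its local field."""
    n = len(s)
    for i in range(n):
        field = sum(w[i][j] * s[j] for j in range(n) if j != i)
        # Heat-bath rule: P(s_i = +1) = (1 + tanh(field / T)) / 2
        p_up = 0.5 * (1.0 + math.tanh(field / temperature))
        s[i] = 1 if rng.random() < p_up else -1
    return s


# Illustrative weights built from one +/-1 pattern (an assumption, not from the figure).
pattern = [1, -1, 1, -1, 1, -1]
n = len(pattern)
w = [[0.0 if i == j else pattern[i] * pattern[j] / n for j in range(n)]
     for i in range(n)]

rng = random.Random(0)

# Near T = 0 the update is effectively deterministic and repairs the flipped unit.
cold = glauber_sweep(w, [-1, -1, 1, -1, 1, -1], temperature=0.01, rng=rng)

# At a high temperature the state keeps fluctuating between sweeps.
hot = [1] * n
for _ in range(5):
    glauber_sweep(w, hot, temperature=5.0, rng=rng)
```

Lowering `temperature` toward zero recovers the deterministic sign update of panel (a), which is one way to read the contrast between the two annotations.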

#### Section c (Restricted Boltzmann Machine)

- **Structure**: Visible and hidden layers with **only inter-layer connections** (no intra-layer connections).

- **Flow**: Similar to modern ANNs, with the visible layer storing patterns.

- **Key Features**:

  - Restricted connectivity reduces complexity.

  - Stacked RBMs form deep learning architectures.
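
One practical consequence of having only inter-layer connections is that every unit in a layer can be sampled independently once the other layer is fixed. The sketch below assumes binary 0/1 units with logistic activations; the layer sizes, the random weights, and the helpers `sample_hidden` and `sample_visible` are illustrative choices, not taken from the figure.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def sample_hidden(w, b_hidden, v, rng):
    """Sample all hidden units at once; they are independent given the visible layer."""
    probs = [sigmoid(b_hidden[j] + sum(v[i] * w[i][j] for i in range(len(v))))
             for j in range(len(b_hidden))]
    return [1 if rng.random() < p else 0 for p in probs], probs


def sample_visible(w, b_visible, h, rng):
    """Sample all visible units at once; they are independent given the hidden layer."""
    probs = [sigmoid(b_visible[i] + sum(w[i][j] * h[j] for j in range(len(h))))
             for i in range(len(b_visible))]
    return [1 if rng.random() < p else 0 for p in probs], probs


rng = random.Random(0)
n_visible, n_hidden = 5, 2  # arbitrary sizes chosen for the example

# w[i][j] couples visible unit i to hidden unit j; there are no intra-layer weights.
w = [[rng.gauss(0.0, 0.1) for _ in range(n_hidden)] for _ in range(n_visible)]
b_visible = [0.0] * n_visible
b_hidden = [0.0] * n_hidden

v0 = [1, 0, 1, 0, 1]
h0, h_probs = sample_hidden(w, b_hidden, v0, rng)   # visible -> hidden
v1, v_probs = sample_visible(w, b_visible, h0, rng)  # hidden -> visible
```

In a fully connected Boltzmann machine the units would have to be updated one at a time, so this layer-wise sampling is a plausible reading of "restricted connectivity reduces complexity".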

#### Section d (Hybrid Configuration)

- **Structure**: Visible layer (τ₁–τ₂) connected to hidden layer (σ₁–σ₅) via fully connected edges.

- **Flow**: Input patterns (τ) propagate through hidden layer (σ) with no feedback loops.

- **Key Features**:

  - Simplified architecture compared to RBMs.

  - No explicit temperature or stochasticity mentioned.

### Key Observations

1. **Deterministic vs. Stochastic**:

   - Hopfield (a) operates deterministically (T=0 K), while Boltzmann machines (b, c) use stochastic sampling.

2. **Connectivity**:

   - RBM (c) restricts connections to inter-layer, unlike fully connected Boltzmann machines (b).

3. **Pattern Storage**:

   - Visible layers (a, c, d) explicitly store patterns, while hidden layers (b, c) learn implicit representations.
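
The first two observations can be made concrete with the textbook energy functions of these models. The notation is an assumption introduced here and does not appear in the figure: $s_i$ are unit states, $w_{ij}$ are weights, $v_i$ and $h_j$ are visible and hidden units, $a_i$ and $b_j$ are biases, and the Boltzmann constant is set to 1.

$$
E_{\text{full}}(s) = -\frac{1}{2}\sum_{i \neq j} w_{ij}\, s_i s_j
$$

$$
E_{\text{RBM}}(v, h) = -\sum_i a_i v_i - \sum_j b_j h_j - \sum_{i,j} v_i\, w_{ij}\, h_j
$$

$$
P(s) \propto e^{-E(s)/T}
$$

In the fully connected case every pair of units contributes a term, whereas the RBM energy contains only visible-hidden products. As $T \to 0$ the distribution concentrates on energy minima, which corresponds to the deterministic regime of panel (a).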

### Interpretation

- **Hopfield Networks** (a) are well suited to associative memory tasks, but their storage capacity is limited: with Hebbian learning, a network of N neurons reliably stores only about 0.14N random patterns.

- **Boltzmann Machines** (b) introduce stochasticity for better generalization but suffer from high computational cost, because learning relies on Monte Carlo sampling over a fully connected network.

- **RBMs** (c) address this by restricting connections, enabling efficient training and forming the basis of deep belief networks.

- **Section d** illustrates a simplified feedforward architecture, emphasizing direct input-to-output mapping with no connections within the hidden layer.

The progression from Hopfield to RBM reflects advancements in balancing memory capacity, computational efficiency, and scalability in neural networks.