## Diagram: AI Response Confidence and Hallucination Flowchart

### Overview

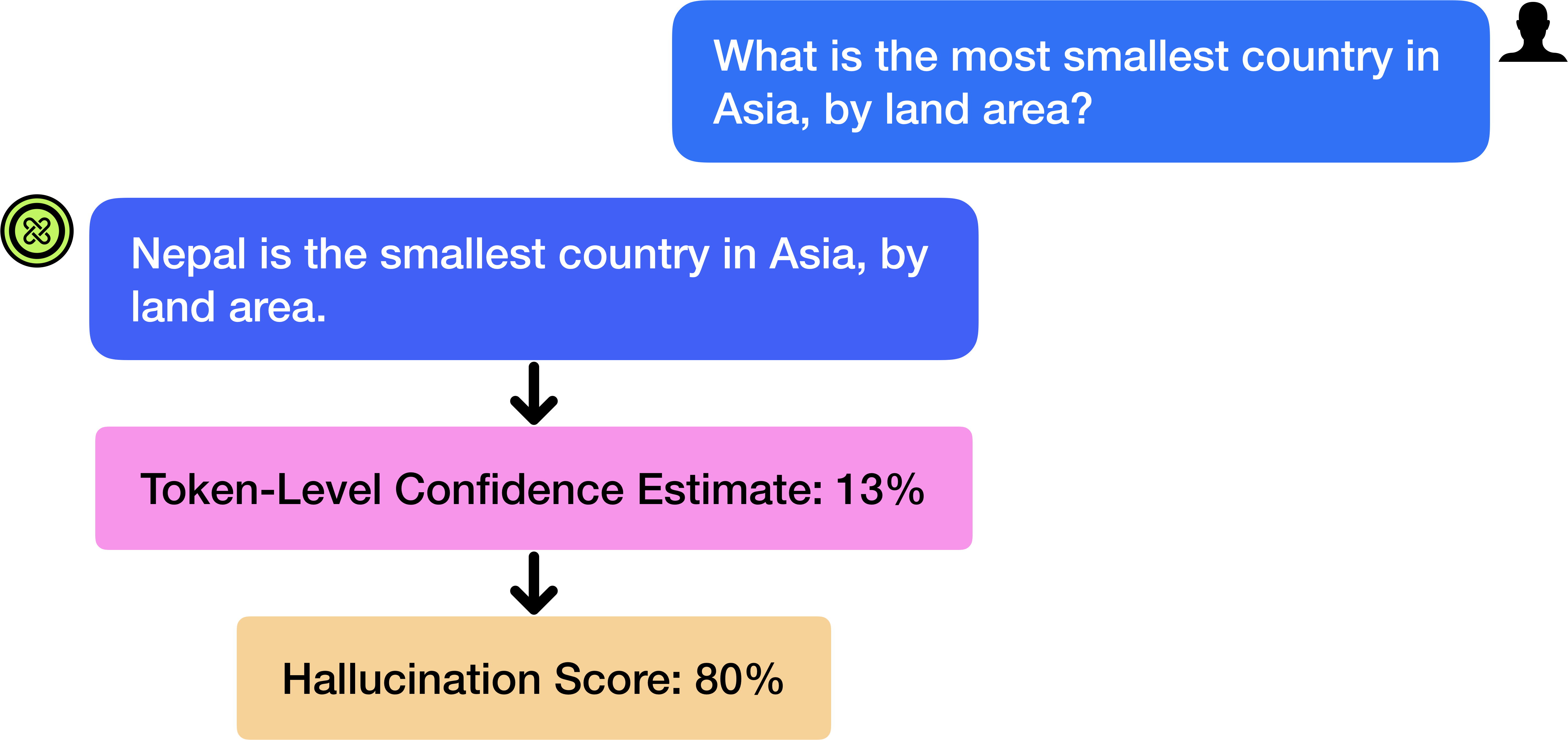

The image is a vertical flowchart diagram illustrating a conversational interaction between a user and an AI system, followed by a two-step evaluation of the AI's response. The diagram uses colored text bubbles and boxes connected by downward-pointing arrows to show the sequence of events. The primary language is English.

### Components

The diagram is composed of four main elements arranged vertically from top to bottom:

1. **User Query Bubble (Top-Right):** A blue, rounded rectangle containing the user's question. A black silhouette icon of a person's head and shoulders is positioned to its right.

2. **AI Response Bubble (Left-Aligned, Below User Query):** A blue, rounded rectangle containing the AI's answer. A circular green icon with a white, stylized "X" or knot symbol is positioned to its left.

3. **Token-Level Confidence Estimate Box (Centered, Below AI Response):** A pink, rounded rectangle.

4. **Hallucination Score Box (Centered, Bottom):** A light orange/tan, rounded rectangle.

Black, downward-pointing arrows connect the AI Response to the Confidence Estimate box, and the Confidence Estimate box to the Hallucination Score box, indicating the flow of analysis.

### Detailed Analysis

**Textual Content Transcription:**

* **User Query Bubble:**
    * Text: "What is the most smallest country in Asia, by land area?"
    * *Note: The query contains a grammatical error ("most smallest").*

* **AI Response Bubble:**
    * Text: "Nepal is the smallest country in Asia, by land area."

* **Token-Level Confidence Estimate Box:**
    * Text: "Token-Level Confidence Estimate: 13%"

* **Hallucination Score Box:**
    * Text: "Hallucination Score: 80%"

**Spatial and Relational Details:**

* The user's query is positioned in the upper right quadrant of the image.

* The AI's response is positioned below and to the left of the user's query, creating a staggered, conversational layout.

* The two evaluation metrics (Confidence Estimate and Hallucination Score) are centered horizontally below the AI response, forming a clear analytical pipeline.

* The flow is strictly top-to-bottom: Query → Response → Confidence Analysis → Hallucination Assessment.

### Key Observations

1. **Factual Inaccuracy:** The AI's response ("Nepal is the smallest country in Asia") is factually incorrect. The smallest country in Asia by land area is the Maldives (roughly 300 km²); Nepal, at roughly 147,000 km², is several hundred times larger.

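2. **Low Confidence, High Hallucination:** The diagram pairs the incorrect answer with a very low token-level confidence estimate (13%) and a high hallucination score (80%), which suggests that these metrics flagged the response as unreliable. The two values are not complements (13% + 80% ≠ 100%), so the hallucination score is evidently not just one minus the confidence estimate; the diagram does not state how either value is computed.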

3. **Grammatical Error in Query:** The user's input contains a double superlative ("most smallest"), which may be a test of the AI's ability to handle non-standard input or could be an unintentional error.

4. **Visual Coding:** The use of distinct colors (blue for dialogue, pink for confidence, orange for hallucination) and icons (user silhouette, AI logo) clearly differentiates the components of the process.
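The diagram does not say how the 13% token-level confidence estimate is derived. One common approach, assumed here purely for illustration, is to aggregate the model's per-token log-probabilities into a single number, for example as the geometric mean of the token probabilities. The function name `token_level_confidence` and the sample log-probabilities below are hypothetical and are not taken from the diagram.

```python
import math
from typing import Sequence


def token_level_confidence(token_logprobs: Sequence[float]) -> float:
    """Aggregate per-token log-probabilities into one confidence value.

    Assumption: confidence is the geometric mean of the token
    probabilities, i.e. exp(mean(log p_i)). The diagram does not
    specify the actual aggregation rule.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    mean_logprob = sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_logprob)


# Made-up values for illustration only: one very uncertain token
# (for instance the country name) among otherwise confident tokens.
example_logprobs = [math.log(0.05), math.log(0.9), math.log(0.8), math.log(0.6)]
confidence = token_level_confidence(example_logprobs)
print(f"Token-level confidence estimate: {confidence:.0%}")
```

A geometric mean is pulled down sharply by a single low-probability token, which fits the intuition that one doubtful token (such as the entity being named) can make a whole answer unreliable. Other aggregations, such as the minimum token probability, would be equally plausible.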

### Interpretation

This diagram illustrates an AI failure mode together with the metrics used to diagnose it. The AI gives a factually incorrect answer (a hallucination) to the user's query, and the two evaluation stages shown beneath the response assign it a very low token-level confidence estimate (13%) and a high hallucination score (80%).

The flowchart's purpose is likely to:

* **Explain a Concept:** Visually explain the relationship between an AI's generated output, its internal confidence estimation, and a calculated hallucination score.

* **Demonstrate a Problem:** Highlight the issue of AI hallucinations, where models generate plausible but false information.

* **Showcase a Solution/Metric:** Illustrate the utility of confidence and hallucination scoring as tools for flagging unreliable AI outputs after the model has already produced them. Here the high hallucination score acts as a red flag for a low-confidence, incorrect answer.

The diagram implies that while the AI can make mistakes, robust systems should incorporate mechanisms to detect and quantify the uncertainty and potential falsehood of their own responses, which is a critical step for building trustworthy AI applications.
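As a minimal sketch of such a detection mechanism, the two scores from the diagram can be compared against thresholds to decide whether a response should be flagged. The `ResponseAssessment` type, the `is_unreliable` function, and both threshold values are assumptions made for this example; the diagram shows only the scores, not any decision rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseAssessment:
    """One pass through the pipeline: query, response, and both scores."""
    query: str
    response: str
    token_confidence: float      # 0.0 to 1.0
    hallucination_score: float   # 0.0 to 1.0


def is_unreliable(
    assessment: ResponseAssessment,
    min_confidence: float = 0.5,      # hypothetical threshold
    max_hallucination: float = 0.5,   # hypothetical threshold
) -> bool:
    """Flag a response if either score crosses its threshold."""
    return (
        assessment.token_confidence < min_confidence
        or assessment.hallucination_score > max_hallucination
    )


# The values shown in the diagram.
diagram_example = ResponseAssessment(
    query="What is the most smallest country in Asia, by land area?",
    response="Nepal is the smallest country in Asia, by land area.",
    token_confidence=0.13,
    hallucination_score=0.80,
)

print(is_unreliable(diagram_example))  # True
```

In practice the thresholds would need to be calibrated against labeled examples, since a fixed cutoff of 0.5 is arbitrary.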