## Diagram: Attention Mechanism / Dependency Visualization

### Overview

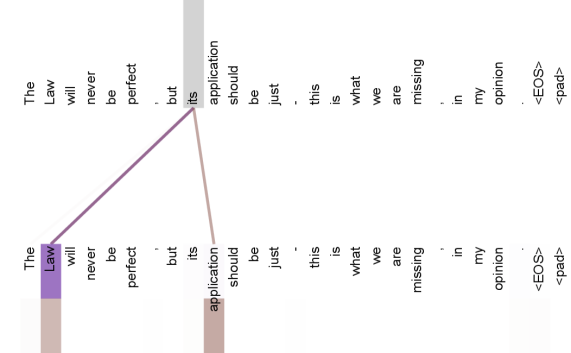

The image shows a visualization typical of Natural Language Processing (NLP) attention mechanisms or dependency parsing: a mapping between two identical sequences of tokens (words and punctuation). Colored lines connect one source token to the target tokens it is related to. The image is oriented vertically, so each sequence reads from top to bottom and the two sequences appear as parallel columns.

### Components/Axes

* **Text Sequence (Top Row/Left Column):** A sentence broken down into individual tokens.

    * Sequence: `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `-`, `this`, `is`, `what`, `we`, `are`, `missing`, `,`, `in`, `my`, `opinion`, `.`, `<EOS>`, `<pad>`

* **Text Sequence (Bottom Row/Right Column):** An identical copy of the sequence above.

    * Sequence: `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `-`, `this`, `is`, `what`, `we`, `are`, `missing`, `,`, `in`, `my`, `opinion`, `.`, `<EOS>`, `<pad>`

* **Connecting Lines (Attention Weights):**

    * Lines connect a specific token in the top sequence to specific tokens in the bottom sequence.

    * The lines originate from the word "its" in the top sequence.

* **Highlighting/Shading:**

    * **Source Token:** The word "its" in the top sequence is highlighted with a grey vertical bar.

    * **Target Tokens:** Two words in the bottom sequence are highlighted:

        1. "Law" (highlighted in purple).

        2. "application" (highlighted in a brownish-grey).

### Detailed Analysis

**Visual Flow & Connections:**

The diagram illustrates a relationship originating from the token **"its"** (index 8, 0-based) in the source sequence.

1. **Primary Connection (Purple):**

    * **Origin:** "its" (Top Sequence)

    * **Destination:** "Law" (Bottom Sequence, index 1)

    * **Visual Trend:** A thick purple line runs diagonally from "its" back to "Law". The thickness suggests a strong attention weight or dependency link: the pronoun "its" refers to, or attends strongly to, the noun "Law".

2. **Secondary Connection (Brown/Grey):**

    * **Origin:** "its" (Top Sequence)

    * **Destination:** "application" (Bottom Sequence, index 9)

    * **Visual Trend:** A thinner brownish-grey line runs from "its" forward to the next token, "application". This suggests a relationship in which "its" modifies "application" (as in "its application").
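
If line thickness encodes weight, the two connections above are simply the largest entries in the attention row for "its". The following is a minimal sketch of that reading. The weight values are hypothetical placeholders chosen for illustration (the figure does not report numbers), the helper name `strongest_targets` is made up for this example, and only the first ten tokens are used to keep it short.

```python
tokens = ["The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application"]

# HYPOTHETICAL weights for the source token "its" (one value per target token).
# Chosen for illustration only; they are not measured from the figure.
weights = [0.02, 0.55, 0.02, 0.02, 0.02, 0.02, 0.02, 0.03, 0.05, 0.25]

def strongest_targets(tokens, weights, k=2):
    """Return the k (token, index, weight) triples with the largest weights."""
    ranked = sorted(enumerate(weights), key=lambda pair: pair[1], reverse=True)
    return [(tokens[i], i, w) for i, w in ranked[:k]]

top = strongest_targets(tokens, weights)
for token, index, weight in top:
    print(f"its -> {token} (index {index}): {weight:.2f}")
```

With these placeholder values the ranking returns "Law" first and "application" second, mirroring the thick and thin lines described above.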

**Token Transcription:**

The full sentence transcribed from the visualization is:

"The Law will never be perfect , but its application should be just - this is what we are missing , in my opinion . <EOS> <pad>"

*Note: The tokens `<EOS>` (End Of Sentence) and `<pad>` (Padding) are standard NLP special tokens.*
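
The token indices quoted in this description can be checked directly against the transcription. The sketch below assumes the tokens are separated by single spaces exactly as transcribed; the helper name `first_index` is introduced here for illustration only.

```python
# Token string copied from the transcription above; tokens are whitespace-separated.
SENTENCE = (
    "The Law will never be perfect , but its application should be just - "
    "this is what we are missing , in my opinion . <EOS> <pad>"
)

tokens = SENTENCE.split()

def first_index(token: str) -> int:
    """Return the 0-based position of the first occurrence of `token`."""
    return tokens.index(token)

source = first_index("its")
targets = {name: first_index(name) for name in ("Law", "application")}

print(len(tokens))      # total token count, including <EOS> and <pad>
print(source, targets)  # 0-based positions of the source and target tokens
```

Note that `be` and `,` each occur twice in the sequence, so a lookup by token text only finds the first occurrence; the three tokens of interest here are unique.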

### Key Observations

* **Coreference Resolution:** The visualization is consistent with linguistic coreference: the possessive pronoun "its" is linked to its antecedent, "Law".

* **Syntactic Dependency:** It also captures the syntactic relationship between the possessive pronoun "its" and the noun it modifies, "application".

* **Weight Disparity:** The line to "Law" (purple) is drawn thicker than the line to "application" (brownish-grey), which suggests a larger weight. The different colors may indicate different "heads" in a multi-head attention model, or simply distinct dependency types.

* **Self-Attention Context:** Since the source and target sequences are identical, this is most likely a **Self-Attention** map, as used in Transformer architectures such as BERT or GPT.
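
To make the self-attention point concrete, here is a minimal sketch of scaled dot-product attention in which queries and keys both come from the same token sequence. It makes several assumptions: the embeddings are random placeholders rather than weights from any trained model, the learned query/key projections of a real Transformer are omitted, and the function names are chosen for this example. It will not reproduce the pattern in the figure; it only shows why the map is square and why each source token's weights sum to 1.

```python
import math
import random

tokens = ["The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application"]
d = 4  # embedding size, arbitrary for this sketch

random.seed(0)
# Placeholder embeddings: random numbers, NOT taken from a trained model.
X = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in tokens]

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention_weights(X):
    """Scaled dot-product attention weights with queries = keys = X."""
    scale = math.sqrt(len(X[0]))
    scores = [
        [sum(q * k for q, k in zip(qi, kj)) / scale for kj in X]
        for qi in X
    ]
    return [softmax(row) for row in scores]

A = self_attention_weights(X)

# One row per source token; the row for "its" holds its weight on every target token.
its_row = A[tokens.index("its")]
print(len(A), len(A[0]))  # square: same sequence on both sides
```

A visualization like the one described draws one line per entry of `its_row`, with thickness or opacity proportional to the weight.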

### Interpretation

This diagram demonstrates how a machine learning model (likely a Transformer-based NLP model) "understands" the context of the word **"its"**.

1. **Contextual Understanding:** The attention pattern links "its" to "Law" (the possessor) and to "application" (the thing possessed), which matches the correct reading of the sentence.

2. **Ambiguity Resolution:** In the sentence "The Law will never be perfect, but its application should be just...", a simple model might struggle to determine what "its" refers to. This visualization suggests the model has learned to look backwards in the sentence and find "Law" to resolve the pronoun.

3. **Technical Relevance:** This is a classic "attention map" used to debug or interpret deep learning models. Here it indicates that the model attends to the semantically relevant parts of the sentence when processing the word "its".