\n

## Diagram: Reinforcement Learning Process Flow with Confidence Scores

### Overview

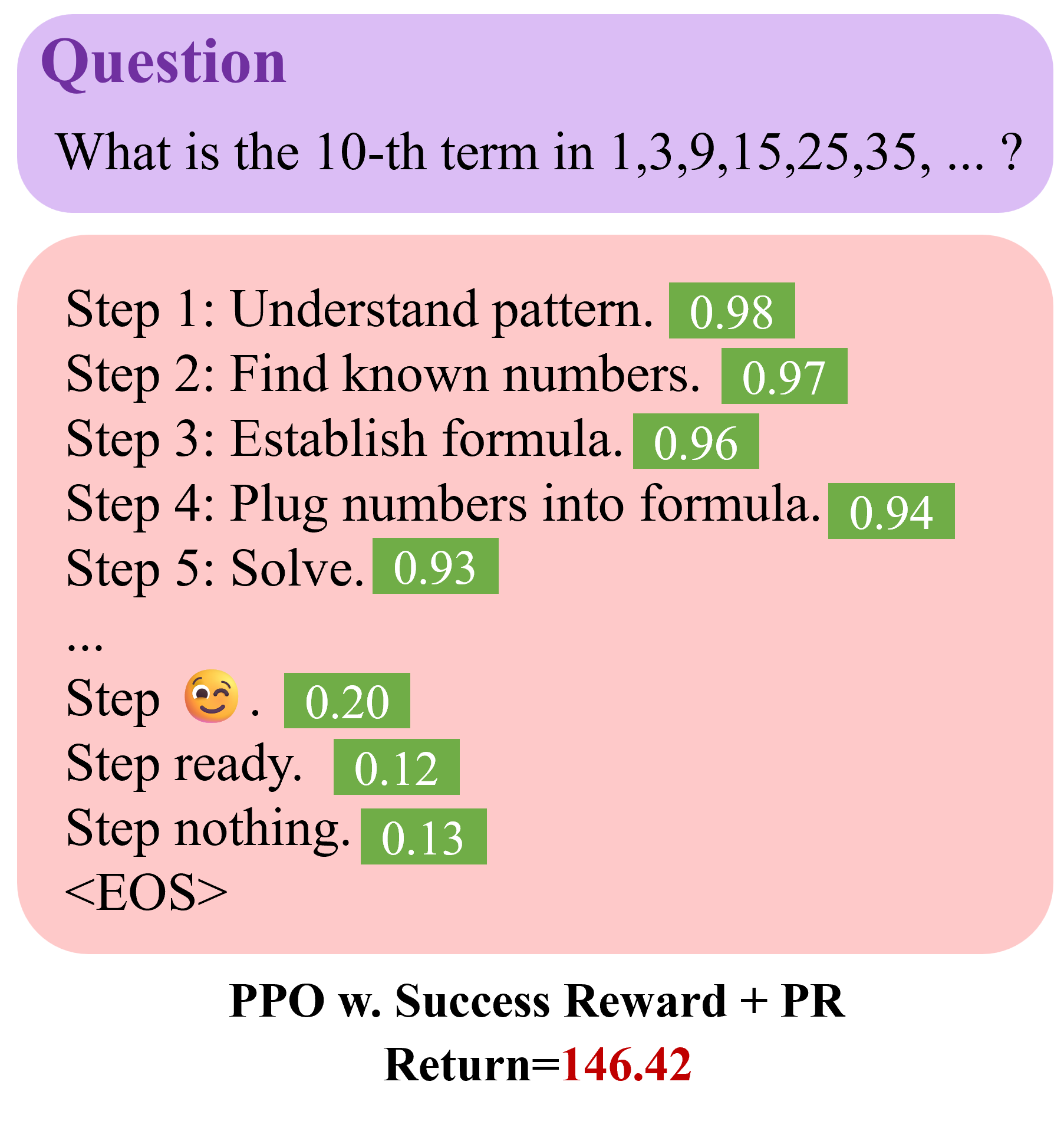

The image is a technical diagram illustrating a step-by-step process for solving a mathematical sequence problem, annotated with confidence scores (likely from a machine learning model). The diagram is structured as a flowchart with a question header, a main process section, and a footer indicating the reinforcement learning method and a final return value. The primary language is English.

### Components/Axes

The diagram is divided into three distinct visual regions:

1. **Header (Purple Rounded Rectangle):**

* **Title:** "Question" (in large, purple serif font).

* **Content:** The mathematical problem statement: "What is the 10-th term in 1,3,9,15,25,35, ... ?"

2. **Main Process Section (Pink Rounded Rectangle):**

* This section lists a sequence of steps, each followed by a numerical value in a green box. The steps are presented in a left-aligned list.

* **Steps and Associated Values:**

* Step 1: Understand pattern. `[0.98]`

* Step 2: Find known numbers. `[0.97]`

* Step 3: Establish formula. `[0.96]`

* Step 4: Plug numbers into formula. `[0.94]`

* Step 5: Solve. `[0.93]`

* `...` (ellipsis indicating omitted steps)

* Step 😉. `[0.20]` (The step label includes a "winking face with tongue" emoji 😉).

* Step ready. `[0.12]`

* Step nothing. `[0.13]`

* `<EOS>` (End of Sequence token).

3. **Footer (Text on light gray background):**

* **Method Label:** "PPO w. Success Reward + PR" (in bold, black serif font). "PPO" likely stands for Proximal Policy Optimization, a reinforcement learning algorithm.

* **Result:** "Return=146.42" (in bold, with the numerical value in red).

### Detailed Analysis

The diagram details a sequential decision-making process, where each step is assigned a confidence or probability score (the number in the green box). The process begins with high-confidence, logical steps for solving the math problem and transitions into lower-confidence, less defined, or terminal steps.

* **Trend Verification:** The confidence scores show a clear downward trend. The initial, well-defined problem-solving steps (1-5) have very high scores (0.93-0.98). After the ellipsis, the scores drop dramatically to the 0.12-0.20 range for the more ambiguous or terminal steps ("😉", "ready", "nothing").

### Key Observations

1. **Confidence Decay:** There is a stark contrast between the high confidence (>0.93) in the initial, logical reasoning steps and the low confidence (<0.21) in the later, less structured steps.

2. **Emoji as a Step:** The inclusion of "Step 😉." with a low score (0.20) is notable. It may represent a non-verbal, heuristic, or "gut-feeling" step in the model's reasoning process, or it could be a placeholder for an uninterpretable action.

3. **Terminal Steps:** The steps "ready" (0.12) and "nothing" (0.13) have the lowest scores and appear just before the `<EOS>` token, suggesting they are low-confidence termination or idle states.

4. **Final Return Value:** The footer reports a "Return" of `146.42`. In reinforcement learning, this is the cumulative reward obtained by the agent (the model) over the entire episode (solving the problem).

### Interpretation

This diagram visualizes the internal reasoning trace and associated confidence of an AI agent, trained with **PPO (Proximal Policy Optimization) using a Success Reward and possibly a Progress Reward (PR)**, as it attempts to solve a sequence prediction problem.

* **What it demonstrates:** The agent successfully identifies and executes a high-confidence, logical strategy for the core mathematical task (Steps 1-5). However, its process doesn't end cleanly after "Solve." It continues into a series of low-confidence, possibly redundant or exploratory actions ("😉", "ready", "nothing") before generating an end-of-sequence signal. This suggests the agent's policy may not be perfectly optimized to terminate immediately upon task completion.

* **Relationship between elements:** The high initial scores indicate the model's strong alignment with the correct problem-solving methodology. The low later scores highlight inefficiency or uncertainty in its termination policy. The final **Return=146.42** is the aggregate measure of performance, combining the reward for solving the problem (Success Reward) and potentially for making progress (PR), minus any penalties for the inefficient steps.

* **Notable anomaly:** The significant drop in confidence after the logical steps is the primary anomaly. It indicates a disconnect between the model's capability to perform the core task and its ability to recognize when the task is complete and stop acting. This is a common challenge in training sequential decision-making models.