
## Text Extraction: DeepSeek-R1 Reasoning Chain

### Overview

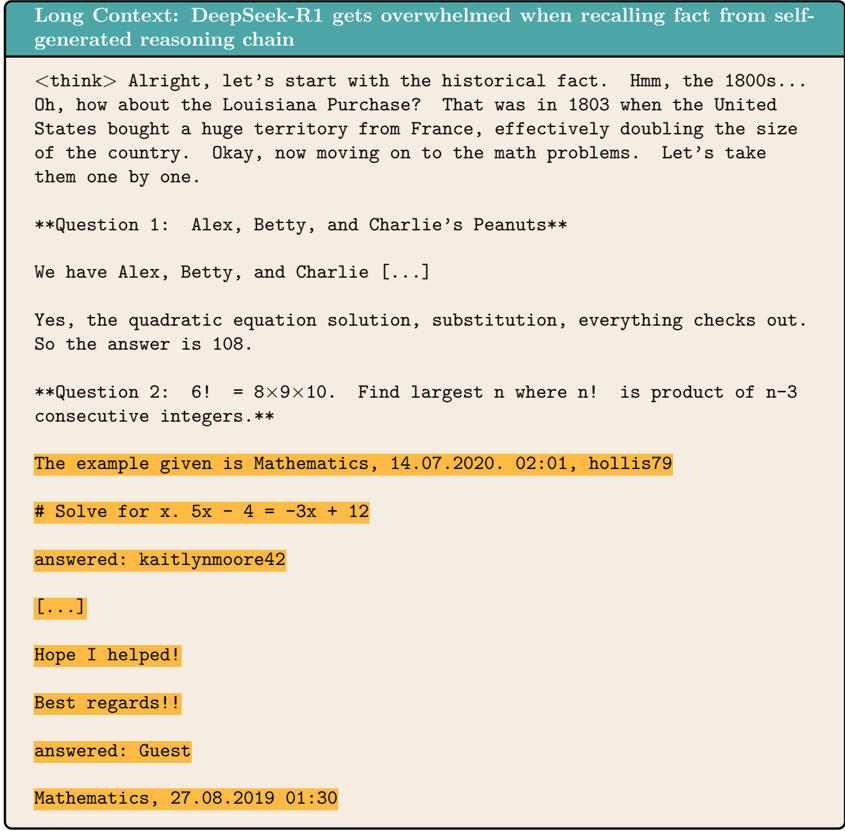

The image contains a transcript of a reasoning chain generated by the DeepSeek-R1 model. It appears to be a log of the model's thought process while answering questions, including a historical fact and mathematical problems. The text is presented in a conversational style, mimicking the model's internal monologue.

### Components/Axes

There are no axes or charts in this image. The components are primarily text blocks, formatted with markdown elements like bolding and headings. Key elements include:

* `<think>` tags: Denote the model's internal thought process.

* `**Question X:**` headings: Indicate the start of a new question.

* User names: `hollis79`, `kaitlynmoore42`, `Guest`

* Dates and times: `14.07.2020. 02:01`, `27.08.2019 01:30`

### Detailed Analysis or Content Details

The transcript can be broken down as follows:

1. **Initial Thought Process:**

* The model begins by recalling the Louisiana Purchase, stating it occurred in 1803 when the United States bought a large territory from France, effectively doubling the country's size.

* It then transitions to math problems.

2. **Question 1: Alex, Betty, and Charlie's Peanuts**

* The question is stated as "**Question 1: Alex, Betty, and Charlie's Peanuts**".

* The model restates the setup, naming the three people involved: Alex, Betty, and Charlie.

* It confirms its solution of the quadratic equation and the subsequent substitution, concluding that the answer is 108.

3. **Question 2: Factorial Problem**

* The question is stated as "**Question 2: 6! = 8 × 9 × 10. Find largest n where n! is product of n − 3 consecutive integers.**"

* An entry follows, labeled Mathematics, dated 14.07.2020. 02:01, and attributed to hollis79.

* The problem in that entry is a separate one: solve for x in 5x - 4 = -3x + 12.

* The answer is provided by kaitlynmoore42.

4. **Concluding Remarks:**

* The model states "Here I helped!".

* It adds "Best regards!!".

* The final answer is provided by Guest.

* The entry is labeled Mathematics and dated 27.08.2019 01:30.
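The two pieces of arithmetic quoted under Question 2 can be checked mechanically. The sketch below is not taken from the transcript: the helper names `consecutive_start` and `solve_linear` are hypothetical, and the brute-force search is only one simple way to test the factorial claim.

```python
from fractions import Fraction
from math import factorial, prod


def consecutive_start(n):
    """Return the smallest k with k * (k+1) * ... * (k+n-4) == n!, or None.

    Hypothetical helper: tries each starting value k in turn and stops as
    soon as the product of n - 3 consecutive integers exceeds n!.
    """
    if n < 4:
        raise ValueError("n must be at least 4 so that n - 3 >= 1")
    target = factorial(n)
    length = n - 3
    k = 1
    while True:
        product = prod(range(k, k + length))
        if product == target:
            return k
        if product > target:
            return None
        k += 1


def solve_linear(a, b, c, d):
    """Solve a*x + b == c*x + d for x (assumes a != c)."""
    return Fraction(d - b, a - c)


# The identity quoted in the question: 6! = 8 * 9 * 10.
print(factorial(6) == 8 * 9 * 10)

# Values of n in a small search window for which n! is a product of
# n - 3 consecutive positive integers (the window 4..39 is an assumption).
print([n for n in range(4, 40) if consecutive_start(n) is not None])

# The linear equation from the forum-style entry: 5x - 4 = -3x + 12.
print(solve_linear(5, -4, -3, 12))
```

Running the search over a bounded window only checks the claim within that window; it does not by itself prove that no larger n exists.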

### Key Observations

* The model demonstrates a step-by-step reasoning process, explicitly stating its thoughts.

* The transcript includes both factual recall (Louisiana Purchase) and problem-solving (math questions).

* The model acknowledges contributions from other users (kaitlynmoore42, Guest, hollis79).

* The formatting suggests a conversational or chat-based interface.

* The ellipsis "[...]" indicates that parts of the transcript have been omitted.

### Interpretation

This transcript provides insight into the reasoning capabilities of the DeepSeek-R1 model. It showcases the model's ability to:

* Access and recall factual information.

* Apply mathematical reasoning to solve problems.

* Present its thought process in a human-readable format.

* Integrate information from external sources (other users).

The use of `<think>` tags is particularly interesting, as it allows us to observe the model's internal monologue. This could be valuable for understanding how the model arrives at its conclusions and identifying potential biases or errors in its reasoning. The presence of omitted sections "[...]" suggests that the full reasoning chain may be more complex than what is presented here. The varying dates and times indicate that this is likely a compilation of interactions over a period of time. The model's concluding remarks ("Here I helped!", "Best regards!!") demonstrate an attempt to mimic human conversational patterns.
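For readers who want to inspect such a transcript programmatically, the following is a minimal sketch of separating the `<think>` portion from the rest of the text. It assumes the reasoning is closed with a matching `</think>` tag, which the image does not confirm; an unclosed block is treated as running to the end of the text. The function name `split_reasoning` and the sample string are hypothetical.

```python
import re

# Match from <think> up to the first </think>, or to the end of the text
# if the closing tag is missing (an assumption about truncated transcripts).
THINK_RE = re.compile(r"<think>(.*?)(?:</think>|\Z)", re.DOTALL)


def split_reasoning(text):
    """Return (list of reasoning blocks, remaining visible text)."""
    reasoning = [block.strip() for block in THINK_RE.findall(text)]
    visible = THINK_RE.sub("", text).strip()
    return reasoning, visible


# Hypothetical sample, loosely modeled on the historical fact in the transcript.
sample = "<think>The Louisiana Purchase occurred in 1803.</think>Answer: 1803"
blocks, answer = split_reasoning(sample)
print(blocks)
print(answer)
```

Keeping the reasoning blocks separate from the visible answer makes it easier to review each part on its own, for example when looking for the kinds of errors mentioned above.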