
## Diagram: AI Reasoning Chain with External References

### Overview

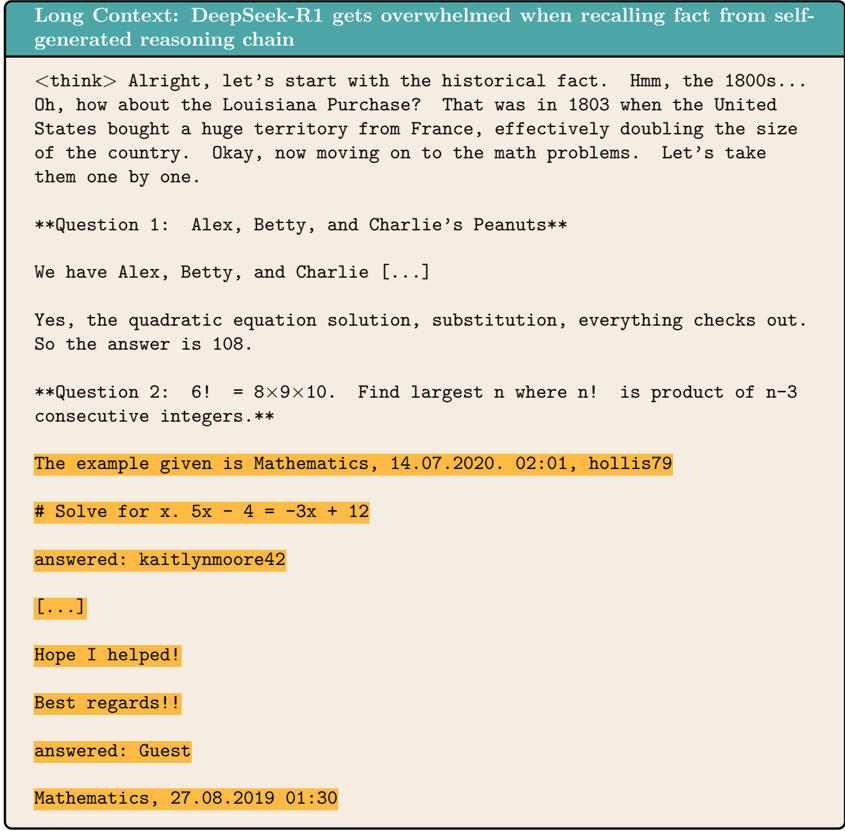

The image shows a long reasoning chain generated by the AI model DeepSeek-R1. It illustrates how the model, while trying to recall a fact from its own self-generated reasoning (inside `<think>` tags), becomes overwhelmed and drifts into highlighted text snippets that appear to come from a Q&A or forum platform.

### Components/Axes

The image is structured as a single, continuous text block with distinct visual sections:

1. **Header (Top Banner):** A teal-colored banner with white text stating the diagram's title.

2. **Main Content Area (Light Beige Background):** Contains the AI's generated reasoning process and the interspersed external text.

3. **Text Styling:**

    * **AI Reasoning Text:** Standard black, monospaced font.

    * **Highlighted External Text:** Text with an orange background highlight, indicating it is not part of the AI's original reasoning but has been inserted or recalled from another source.

### Detailed Analysis / Content Details

**1. Header Text:**

* **Position:** Top of the image, spanning the full width.

* **Text:** `Long Context: DeepSeek-R1 gets overwhelmed when recalling fact from self-generated reasoning chain`

**2. AI Reasoning Chain (Main Text Block):**

The AI's internal monologue begins with a `<think>` tag and proceeds as follows:

* It starts by recalling a historical fact about the 1800s, specifically the Louisiana Purchase of 1803.

* It then transitions to solving math problems.

* **Question 1:** Titled "Alex, Betty, and Charlie's Peanuts." The text is cut off (`[...]`), but the AI concludes the answer is 108 after verifying a quadratic equation solution.

* **Question 2:** Poses the problem: "6! = 8×9×10. Find largest n where n! is product of n-3 consecutive integers."
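
The identity quoted in Question 2, and the search the problem asks for, can be sanity-checked by brute force. The sketch below is only a bounded check, not a proof and not the model's own method; the function name `consecutive_start` and the search bound of 60 are assumptions made for illustration.

```python
from math import factorial, prod
from typing import Optional


def consecutive_start(n: int) -> Optional[int]:
    """Return a such that a * (a+1) * ... * (a+n-4) == n!, or None if no such a exists."""
    k = n - 3                # number of consecutive factors required
    target = factorial(n)
    a = 1
    while True:
        p = prod(range(a, a + k))   # product of k consecutive integers starting at a
        if p == target:
            return a
        if p > target:              # the product only grows with a, so stop here
            return None
        a += 1


# The identity quoted in the problem: 6! = 8 * 9 * 10
assert factorial(6) == 8 * 9 * 10

# Bounded search (the bound 60 is an arbitrary illustrative choice)
solutions = {n: consecutive_start(n) for n in range(4, 60)}
solutions = {n: a for n, a in solutions.items() if a is not None}
print(solutions)        # {4: 24, 6: 8, 7: 7, 23: 5}
print(max(solutions))   # 23
```

Within the searched range the largest such n is 23, since 23! = 5 × 6 × ... × 24. The n = 4 entry is the trivial case of a single factor.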

**3. Highlighted External Text Snippets (Orange Background):**

These snippets are interspersed within and after the AI's reasoning chain. They appear to be copied from a mathematics Q&A forum or similar platform.

* **Snippet 1:** `The example given is Mathematics, 14.07.2020. 02:01, hollis79`

* **Snippet 2:** `# Solve for x. 5x - 4 = -3x + 12`

* **Snippet 3:** `answered: kaitlynmoore42`

* **Snippet 4:** `[...]` (Ellipsis, indicating omitted content)

* **Snippet 5:** `Hope I helped!`

* **Snippet 6:** `Best regards!!`

* **Snippet 7:** `answered: Guest`

* **Snippet 8:** `Mathematics, 27.08.2019 01:30`

### Key Observations

1. **Context Contamination:** The AI's coherent reasoning about historical facts and math problems is directly interrupted and followed by unrelated, highlighted text from an external source.

2. **Source of Highlighted Text:** The highlighted snippets contain usernames (`hollis79`, `kaitlynmoore42`, `Guest`), timestamps, forum-style sign-offs ("Hope I helped!", "Best regards!!"), and a simple algebra problem. This strongly suggests they are scraped from a public Q&A website.

3. **Loss of Coherence:** After presenting "Question 2," the AI's own reasoning chain stops. The subsequent content is entirely the external, highlighted text, indicating a failure to continue its original task.

4. **Visual Coding:** The orange highlight is the primary visual cue used to differentiate the injected external data from the model's generated text.
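
The forum-style markers listed above (usernames after `answered:`, subject-plus-timestamp lines, sign-offs) are regular enough that a simple heuristic could flag them in model output. The following is a minimal sketch under that assumption; the pattern set and the function name `flag_forum_residue` are hypothetical, modeled only on the snippets quoted in this description, and are not part of the original figure or of any DeepSeek tooling.

```python
import re
from typing import List, Tuple

# Hypothetical patterns, derived only from the snippets quoted in this description.
FORUM_PATTERNS = {
    "answered_by": re.compile(r"\banswered:\s*\w+"),
    "subject_timestamp": re.compile(
        r"\b[A-Z][a-z]+,\s*\d{2}\.\d{2}\.\d{4}\.?\s*\d{2}:\d{2}"
    ),
    "sign_off": re.compile(r"\b(?:Hope I helped|Best regards)!+"),
}


def flag_forum_residue(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) pairs for lines that look like forum residue."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in FORUM_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


sample = "\n".join([
    "So the answer is 108.",
    "The example given is Mathematics, 14.07.2020. 02:01, hollis79",
    "answered: kaitlynmoore42",
    "Hope I helped!",
])
print(flag_forum_residue(sample))
# [(2, 'subject_timestamp'), (3, 'answered_by'), (4, 'sign_off')]
```

A heuristic like this would only catch residue that matches the listed markers; it says nothing about why the model produced it.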

### Interpretation

This diagram serves as a technical case study or failure mode analysis for a Large Language Model (LLM) operating with a long context window.

* **What it Demonstrates:** It visually argues that when an LLM like DeepSeek-R1 is tasked with complex, multi-step reasoning over a long context, it can become "overwhelmed." Instead of maintaining a clean, internal reasoning state, it begins to retrieve and output fragments that resemble its training data (e.g., internet forum posts) rather than continuing the logical task.

* **Relationship Between Elements:** The header states the thesis. The main text block provides the evidence: a clean start to reasoning that degrades into noise. The highlighted text acts as the "symptom" of the model's confusion, showing it has conflated its reasoning process with memorized patterns from its training corpus.

* **Implications:** This highlights a significant reliability challenge for long-form tasks. It suggests that a model's context can become "polluted" with irrelevant material, leading to output that is incoherent, factually incorrect, or reproduced from training data. In Peircean terms, the diagram is a *sign* (the visual evidence) of an *object* (the model's internal failure mode), and it yields an *interpretant* (the conclusion that long-context recall is fragile). The broader point is that a large context window alone does not guarantee robust reasoning; the model's ability to stay focused within that context is a separate, critical capability.