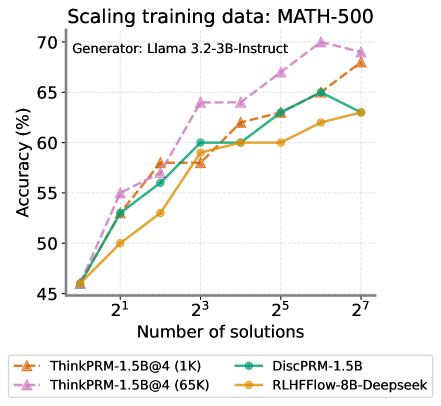

## Line Chart: Scaling training data: MATH-500

### Overview

This is a line chart showing how model performance ("Accuracy (%)") on the MATH-500 benchmark changes with the amount of data, measured as the "Number of solutions" and plotted on a logarithmic (base 2) x-axis. The chart compares four different methods or configurations. For all four, accuracy generally increases as the number of solutions increases, although a few steps are flat or slightly negative.

### Components/Axes

* **Chart Title:** "Scaling training data: MATH-500"

* **Subtitle/Generator:** "Generator: Llama 3.2-3B-Instruct"

* **Y-Axis:**

  * **Label:** "Accuracy (%)"

  * **Scale:** Linear, ranging from 45 to 70, with major tick marks at 5-unit intervals (45, 50, 55, 60, 65, 70).

* **X-Axis:**

  * **Label:** "Number of solutions"

  * **Scale:** Logarithmic (base 2), with labeled tick marks at 2¹ (2), 2³ (8), 2⁵ (32), and 2⁷ (128). The axis starts at approximately 2⁰ (1).

* **Legend:** Located at the bottom of the chart, outside the plot area. It contains four entries, each in a distinct color. The two ThinkPRM variants share a dashed line style with upward-pointing triangle markers, and the other two share a solid line style with circle markers.

  1. **ThinkPRM-1.5B@4 (1K):** Orange dashed line with upward-pointing triangle markers.

  2. **ThinkPRM-1.5B@4 (65K):** Pink (light purple) dashed line with upward-pointing triangle markers.

  3. **DiscPRM-1.5B:** Green solid line with circle markers.

  4. **RLHFFlow-8B-Deepseek:** Yellow solid line with circle markers.

* **Grid:** A light gray grid is present in the background of the plot area.

### Detailed Analysis

The chart plots four data series. Below is a breakdown of each series, its visual trend, and approximate data points extracted by aligning markers with the grid.

**1. ThinkPRM-1.5B@4 (65K) - Pink Dashed Line, Triangle Markers**

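* **Trend:** Shows the steepest and largest overall growth (from ~46% to ~69%). It starts level with the other methods at 2⁰, leads at 2¹, ties with ThinkPRM-1.5B@4 (1K) at 2², and is the clear top performer from 2³ solutions onward. Its lead over the next-best method is about 2.5 to 5 points from 2³ through 2⁶, narrowing to about 1 point at 2⁷.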

* **Data Points (Approximate):**

  * At ~2⁰ (1) solutions: ~46%

  * At 2¹ (2) solutions: ~55%

  * At 2² (4) solutions: ~58%

  * At 2³ (8) solutions: ~64%

  * At 2⁴ (16) solutions: ~64.5%

  * At 2⁵ (32) solutions: ~67%

  * At 2⁶ (64) solutions: ~70% (Peak)

  * At 2⁷ (128) solutions: ~69%

**2. ThinkPRM-1.5B@4 (1K) - Orange Dashed Line, Triangle Markers**

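* **Trend:** Shows strong overall growth, from ~46% to ~68%, with a plateau between 2² and 2³ (~58% at both). It stays within about 2 points of DiscPRM-1.5B through 2⁶, then pulls about 5 points ahead of it at 2⁷, where it comes within about 1 point of ThinkPRM-1.5B@4 (65K).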

* **Data Points (Approximate):**

  * At ~2⁰ (1) solutions: ~46%

  * At 2¹ (2) solutions: ~53%

  * At 2² (4) solutions: ~58%

  * At 2³ (8) solutions: ~58%

  * At 2⁴ (16) solutions: ~62%

  * At 2⁵ (32) solutions: ~63%

  * At 2⁶ (64) solutions: ~65%

  * At 2⁷ (128) solutions: ~68%

**3. DiscPRM-1.5B - Green Solid Line, Circle Markers**

* **Trend:** Rises from ~46% to a peak of ~65% at 2⁶, then dips to ~63% at 2⁷; its overall gain is smaller than that of either ThinkPRM variant. It stays within about 2 points of ThinkPRM-1.5B@4 (1K) through 2⁶ but finishes about 5 points behind it.

* **Data Points (Approximate):**

  * At ~2⁰ (1) solutions: ~46%

  * At 2¹ (2) solutions: ~53%

  * At 2² (4) solutions: ~56%

  * At 2³ (8) solutions: ~60%

  * At 2⁴ (16) solutions: ~60%

  * At 2⁵ (32) solutions: ~63%

  * At 2⁶ (64) solutions: ~65%

  * At 2⁷ (128) solutions: ~63% (Note: This point appears to dip slightly from the previous point.)

**4. RLHFFlow-8B-Deepseek - Yellow Solid Line, Circle Markers**

* **Trend:** Improves steadily but modestly, from ~46% to ~63%, an overall gain (about 17 points) similar to that of DiscPRM-1.5B. After the shared starting point at 2⁰, it is the lowest or tied-for-lowest method at every data point.

* **Data Points (Approximate):**

  * At ~2⁰ (1) solutions: ~46%

  * At 2¹ (2) solutions: ~50%

  * At 2² (4) solutions: ~53%

  * At 2³ (8) solutions: ~58%

  * At 2⁴ (16) solutions: ~60%

  * At 2⁵ (32) solutions: ~60%

  * At 2⁶ (64) solutions: ~62%

  * At 2⁷ (128) solutions: ~63%
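
The per-series trends above can be compared on a common footing by fitting a straight line to accuracy against log₂(number of solutions), which gives a growth rate in points per doubling. The sketch below does this with ordinary least squares on the approximate readings listed above; it is illustrative only, the readings are estimates from the plot rather than the underlying data, and `SERIES` and `slope_per_doubling` are hypothetical names.

```python
# Sketch: least-squares slope of accuracy vs. log2(number of solutions).
# Assumption: these are the approximate readings listed above, not the
# underlying experimental data. Index i corresponds to 2**i solutions.
SERIES = {
    "ThinkPRM-1.5B@4 (65K)": [46, 55, 58, 64, 64.5, 67, 70, 69],
    "ThinkPRM-1.5B@4 (1K)": [46, 53, 58, 58, 62, 63, 65, 68],
    "DiscPRM-1.5B": [46, 53, 56, 60, 60, 63, 65, 63],
    "RLHFFlow-8B-Deepseek": [46, 50, 53, 58, 60, 60, 62, 63],
}


def slope_per_doubling(ys: list[float]) -> float:
    """Ordinary least-squares slope, in accuracy points per doubling of solutions."""
    xs = range(len(ys))  # log2(1), log2(2), ..., log2(128) = 0..7
    x_mean = sum(xs) / len(ys)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx


if __name__ == "__main__":
    for name, ys in SERIES.items():
        gain = ys[-1] - ys[0]  # total change from 2^0 to 2^7
        print(f"{name:24s} slope={slope_per_doubling(ys):.2f} pts/doubling, gain={gain:+.1f} pts")
```

With these readings, the fitted slopes in the order of `SERIES` are about 3.14, 2.77, 2.38, and 2.40 points per doubling, and the total gains are 23, 22, 17, and 17 points, so by this measure DiscPRM-1.5B and RLHFFlow-8B-Deepseek improve at nearly the same rate.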

### Key Observations

1. **Positive Scaling Trend:** All four methods are more accurate at 2⁷ (128) solutions than at 2⁰ (1), by roughly 17 to 23 points, indicating a positive scaling relationship on the MATH-500 benchmark. The improvement is not strictly monotonic; several curves plateau or dip between adjacent points.

2. **Performance Hierarchy:** Across most of the range, the ordering is roughly `ThinkPRM-1.5B@4 (65K)` > `ThinkPRM-1.5B@4 (1K)` ≈ `DiscPRM-1.5B` > `RLHFFlow-8B-Deepseek`, although the lower three lines overlap at several points (for example, at 2³, 2⁴, and 2⁷). The gap between the top and bottom methods grows from 0 at 2⁰ to about 8 points at 2⁶, then narrows to about 6 points at 2⁷.

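3. **Diminishing Returns:** Gains are largest over the first two doublings (about 7 to 12 points from 2⁰ to 2²) and much smaller over the last two (about 0 to 5 points from 2⁵ to 2⁷), suggesting diminishing returns from adding more data. `ThinkPRM-1.5B@4 (1K)` is the exception to late flattening, gaining about 3 points between 2⁶ and 2⁷, while `ThinkPRM-1.5B@4 (65K)` and `DiscPRM-1.5B` both decrease slightly at the final data point.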

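4. **Crossovers:** The `ThinkPRM-1.5B@4 (1K)` (orange) and `DiscPRM-1.5B` (green) lines interleave through 2⁶: orange is ahead at 2² and 2⁴, green is ahead at 2³, and they are roughly tied elsewhere. Orange pulls clearly ahead only at 2⁷ (~68% vs. ~63%), suggesting it benefits more from additional data at the largest scale.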

5. **Initial Convergence:** All models start at nearly the same accuracy (~46%) when trained on the smallest dataset (~1 solution), but their performance diverges rapidly as data increases.
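
The arithmetic behind these observations can be re-derived from the approximate values in the Detailed Analysis. The sketch below computes the top-to-bottom spread at each point, the gains over the first and last two doublings, and the indices where the orange and green lines swap order. It is illustrative only: the inputs are readings from the plot, and `SERIES`, `top_bottom_gap`, `early_late_gains`, and `sign_flips` are hypothetical helpers, not part of any published code.

```python
# Assumption: approximate readings from the data points listed above,
# not the underlying experimental data. Index i corresponds to 2**i solutions.
SERIES = {
    "ThinkPRM-1.5B@4 (65K)": [46, 55, 58, 64, 64.5, 67, 70, 69],
    "ThinkPRM-1.5B@4 (1K)": [46, 53, 58, 58, 62, 63, 65, 68],
    "DiscPRM-1.5B": [46, 53, 56, 60, 60, 63, 65, 63],
    "RLHFFlow-8B-Deepseek": [46, 50, 53, 58, 60, 60, 62, 63],
}


def top_bottom_gap(series: dict[str, list[float]]) -> list[float]:
    """Spread between the best and worst method at each x position."""
    columns = zip(*series.values())  # one tuple of four accuracies per x
    return [max(col) - min(col) for col in columns]


def early_late_gains(ys: list[float]) -> tuple[float, float]:
    """Gain over the first two doublings (2^0->2^2) and the last two (2^5->2^7)."""
    return ys[2] - ys[0], ys[7] - ys[5]


def sign_flips(a: list[float], b: list[float]) -> list[int]:
    """Indices where a - b changes sign relative to the last non-tied point."""
    flips, prev = [], 0
    for i, (x, y) in enumerate(zip(a, b)):
        s = (x > y) - (x < y)  # +1 if a leads, -1 if b leads, 0 if tied
        if s == 0:
            continue  # ties neither start nor end a lead
        if prev != 0 and s != prev:
            flips.append(i)
        prev = s
    return flips


if __name__ == "__main__":
    print("top-bottom gap:", top_bottom_gap(SERIES))
    for name, ys in SERIES.items():
        print(f"{name}: early/late gains = {early_late_gains(ys)}")
    print("1K vs DiscPRM sign flips at indices:",
          sign_flips(SERIES["ThinkPRM-1.5B@4 (1K)"], SERIES["DiscPRM-1.5B"]))
```

With these readings, the gap comes out as 0, 5, 5, 6, 4.5, 7, 8, and 6 points, and the only order swaps between the 1K variant and DiscPRM-1.5B occur at indices 3 and 4 (2³ and 2⁴). Because each reading could be off by a point or so, small differences like these should be treated as approximate.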

### Interpretation

This chart compares four approaches applied to the same base generator model (Llama 3.2-3B-Instruct) for mathematical reasoning. Judging by their names ("ThinkPRM," "DiscPRM," and "RLHFFlow"), these likely involve different reward models or training objectives; the "PRM" in "ThinkPRM" and "DiscPRM" suggests process reward models.

The data suggests that **`ThinkPRM-1.5B@4` is the most effective approach among those tested** for improving mathematical accuracy: the 65K variant leads from 2³ solutions onward, and the 1K variant nearly matches it at 2⁷. The "1K" and "65K" in the legend are not positions on the x-axis, which ends at 128 solutions; they most likely denote the amount of data behind each variant. ThinkPRM's stronger performance suggests that its underlying training strategy (possibly involving more sophisticated process supervision or thinking-step reward modeling) extracts more learning signal from the available data.

The `RLHFFlow-8B-Deepseek` model is the largest in the comparison (8B parameters vs. 1.5B for the others, judging by the names), yet it is the lowest or tied-for-lowest performer at every point after 2⁰. This suggests that **model size alone is not the primary driver of performance on this task**; the training methodology and data quality/quantity appear to be more decisive factors, at least for this benchmark and generator. The result cuts against a simple "bigger is better" view and highlights the importance of algorithmic innovation in training.

The flattening of most curves at the high end suggests that, for this specific task and generator, simply adding more solution data of the same type may yield limited further gains. Further improvements might require higher-quality data, more advanced training techniques, or changes to the base generator model itself. The slight dips for `ThinkPRM-1.5B@4 (65K)` and `DiscPRM-1.5B` at 128 solutions could be noise or an early sign of overfitting to the training data distribution.