## Screenshot: Multimodal AI Cooking Assistant Interaction Log

### Overview

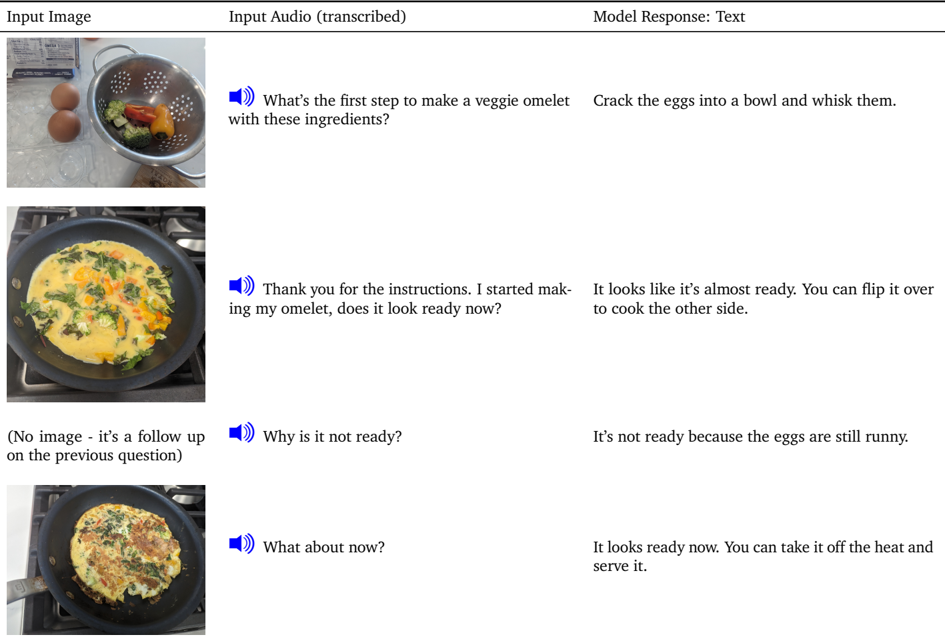

The image is a screenshot of a table documenting a four-turn, multimodal interaction between a user and an AI assistant. In it, the AI processes a sequence of images and transcribed audio queries to guide the user through making a veggie omelet. The table has three columns: "Input Image," "Input Audio (transcribed)," and "Model Response: Text."

### Components/Axes

The table structure is the primary component, with the following headers:

- **Column 1 Header:** `Input Image`

- **Column 2 Header:** `Input Audio (transcribed)`

- **Column 3 Header:** `Model Response: Text`

The table contains four rows, each representing a distinct turn in the conversation.

### Detailed Analysis

**Row 1:**

- **Input Image:** A photograph showing two brown eggs on a white surface next to a metal colander. The colander contains chopped vegetables, including red and yellow bell peppers and broccoli florets.

- **Input Audio (transcribed):** `What's the first step to make a veggie omelet with these ingredients?` (Accompanied by a blue speaker icon 🔊).

- **Model Response: Text:** `Crack the eggs into a bowl and whisk them.`

**Row 2:**

- **Input Image:** A photograph of a black frying pan on a gas stovetop. Inside the pan is a partially cooked omelet, yellow with visible green and orange vegetable pieces embedded in it.

- **Input Audio (transcribed):** `Thank you for the instructions. I started making my omelet, does it look ready now?` (Accompanied by a blue speaker icon 🔊).

- **Model Response: Text:** `It looks like it's almost ready. You can flip it over to cook the other side.`

**Row 3:**

- **Input Image:** No image provided (follow-up on the previous question).

- **Input Audio (transcribed):** `Why is it not ready?` (Accompanied by a blue speaker icon 🔊).

- **Model Response: Text:** `It's not ready because the eggs are still runny.`

**Row 4:**

- **Input Image:** A photograph of the same frying pan on the stovetop. The omelet now appears fully set, with a golden-brown, slightly crispy surface.

- **Input Audio (transcribed):** `What about now?` (Accompanied by a blue speaker icon 🔊).

- **Model Response: Text:** `It looks ready now. You can take it off the heat and serve it.`
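The four rows above can also be captured as structured records, which makes the missing image in Row 3 explicit. The following is a minimal sketch: the `Turn` class, its field names, and the short image descriptions are illustrative assumptions, not something shown in the screenshot. The audio and response strings are copied from the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Turn:
    """One table row. Class and field names are hypothetical."""
    image: Optional[str]  # short description of the photo, or None if the row has no image
    audio: str            # transcribed user query
    response: str         # model's text response


LOG = [
    Turn("eggs and chopped vegetables",
         "What's the first step to make a veggie omelet with these ingredients?",
         "Crack the eggs into a bowl and whisk them."),
    Turn("partially cooked omelet in pan",
         "Thank you for the instructions. I started making my omelet, "
         "does it look ready now?",
         "It looks like it's almost ready. You can flip it over to cook the other side."),
    Turn(None,
         "Why is it not ready?",
         "It's not ready because the eggs are still runny."),
    Turn("fully set omelet in pan",
         "What about now?",
         "It looks ready now. You can take it off the heat and serve it."),
]

# 1-based row numbers of turns that carry no image.
turns_without_image = [i + 1 for i, t in enumerate(LOG) if t.image is None]
print(turns_without_image)  # [3]
```

Using `Optional[str]` for the image field keeps the audio-only turn in the same sequence as the others instead of treating it as a special case.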

### Key Observations

1. **Sequential Logic:** The interaction follows a clear, logical cooking sequence: first-step instruction from the pictured ingredients → initial doneness assessment → follow-up explanation → final doneness confirmation.

2. **Multimodal Integration:** The AI's responses are directly informed by the visual content of the images (e.g., judging the omelet "almost ready" and its eggs "still runny" for the Row 2 photo, then "ready" for the Row 4 photo) and by the context of the audio conversation.

3. **Visual Progression:** The images show a clear transformation of the food from raw ingredients to a finished dish, and the AI's doneness judgments track that progression.

4. **Conversational Context:** The third row has no image, yet the response cites a visual detail (the eggs being "still runny"). This suggests the AI carries over both the Row 2 image and the prior dialogue as context, rather than requiring a new image on every turn.
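One plausible way for an application to handle a turn without an image is to fall back to the most recent earlier image. The sketch below illustrates that idea only. The screenshot does not reveal how the model actually maintains context, and `latest_image_index` and the image labels are hypothetical names.

```python
from typing import Optional, Sequence


def latest_image_index(images: Sequence[Optional[str]], turn: int) -> Optional[int]:
    """Return the 0-based index of the most recent turn at or before `turn`
    that has an image, or None if no such turn exists."""
    for i in range(turn, -1, -1):
        if images[i] is not None:
            return i
    return None


# One entry per table row; None marks the row with no image.
images = ["ingredients", "omelet, partially cooked", None, "omelet, fully set"]

# Row 3 (index 2) has no image, so the lookup falls back to Row 2 (index 1).
print(latest_image_index(images, 2))  # 1
```

Under this assumption, the Row 3 question "Why is it not ready?" would be answered against the Row 2 photo, which matches the "still runny" explanation in the table.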

### Interpretation

This screenshot serves as a technical demonstration of a multimodal AI agent's capabilities in a practical, real-world task. It showcases several key functions:

- **Visual Question Answering (VQA):** The AI interprets images to identify ingredients and assess the state of a cooking process.

- **Contextual Dialogue:** It maintains a coherent, multi-turn conversation, resolving elliptical follow-up questions ("Why is it not ready?", "What about now?") against earlier turns.

- **Procedural Reasoning:** The AI provides step-by-step instructions and justifies its assessments (e.g., explaining *why* the omelet isn't ready), moving beyond simple description to applied reasoning.

- **Uncertainty Handling:** The model's language ("It looks like it's almost ready," "It looks ready now") is hedged, framing each judgment as an appearance-based assessment rather than a certainty. This matters for real-world applications where visual inputs are ambiguous.

The interaction suggests a system designed to bridge the gap between digital instruction and physical execution, using periodic visual feedback to guide a user through a manual task. The absence of an image in the third turn is particularly notable: it illustrates the model relying on conversational memory and inference over earlier inputs rather than on a newly supplied image.