## Screenshot: Dialogue with Image and Audio Input

### Overview

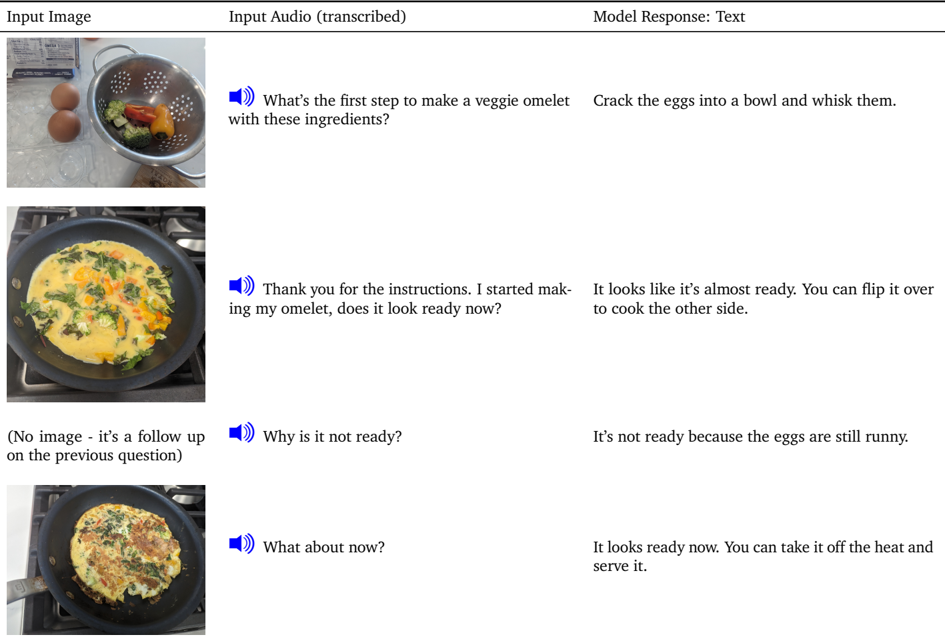

The image depicts a conversational interface where a user interacts with a model using images, transcribed audio, and text responses. The dialogue revolves around preparing a veggie omelet, with the model providing step-by-step guidance based on visual and auditory inputs.

### Components/Axes

- **Input Image**: Visual context for the cooking process (e.g., ingredients, cooking stages).

- **Input Audio (transcribed)**: User queries or statements related to the task.

- **Model Response: Text**: Answers or instructions generated by the model.

### Detailed Analysis

1. **First Interaction**

- **Input Image**: A bowl containing chopped vegetables (broccoli, bell pepper) and two eggs.

- **Input Audio**: *"What’s the first step to make a veggie omelet with these ingredients?"*

- **Model Response**: *"Crack the eggs into a bowl and whisk them."*

2. **Second Interaction**

- **Input Image**: A frying pan with a partially cooked omelet containing vegetables.

- **Input Audio**: *"Thank you for the instructions. I started making my omelet, does it look ready now?"*

- **Model Response**: *"It looks like it’s almost ready. You can flip it over to cook the other side."*

3. **Follow-Up Interaction (No Image)**

- **Input Audio**: *"Why is it not ready?"*

- **Model Response**: *"It’s not ready because the eggs are still runny."*

4. **Final Interaction**

- **Input Image**: A fully cooked omelet in a frying pan.

- **Input Audio**: *"What about now?"*

- **Model Response**: *"It looks ready now. You can take it off the heat and serve it."*

### Key Observations

- The model adapts its responses to the user’s progress, using visual cues (e.g., egg runniness) to assess readiness.

- The dialogue progresses from ingredient preparation to cooking completion, with the model acting as a real-time guide.

- No numerical data or trends are present; the focus is on procedural guidance.

### Interpretation

The interaction demonstrates a multimodal AI system capable of interpreting visual and auditory inputs to provide context-aware instructions. The model’s ability to reference visual states (e.g., "eggs are runny") suggests integration of image analysis with natural language processing. This setup could be applied to cooking tutorials, troubleshooting, or other step-by-step tasks requiring real-time feedback.