TECHNICAL ASSET FINGERPRINT

764f84441db8ae24f6757e2e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

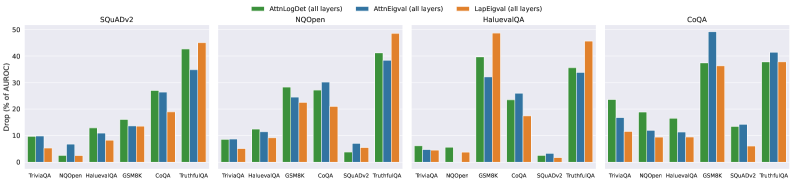

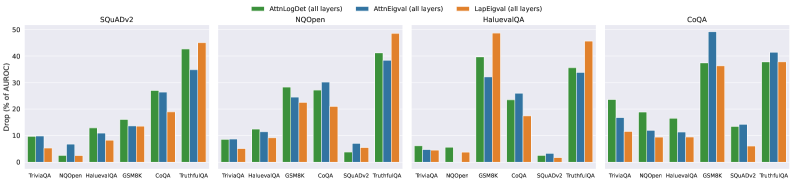

## Bar Chart: Drop in AUROC for Different Question Answering Datasets

### Overview

The image presents four grouped bar charts comparing the drop in Area Under the Receiver Operating Characteristic curve (AUROC) across question answering datasets for three methods: AttnLogDet, AttnEigval, and LapEigval. Each chart is titled with one dataset (SQuADv2, NQOpen, HaluevalQA, or CoQA), and its x-axis lists the other datasets, with one bar per method showing the AUROC drop for that pairing.

### Components/Axes

* **Y-axis:** "Drop (% of AUROC)" with a scale from 0 to 50, incrementing by 10.

* **X-axis:** Dataset names used as categories within each chart (TriviaQA, NQOpen, HaluevalQA, GSM8K, CoQA, SQuADv2, TruthfulQA). Each chart omits the dataset named in its own title.

* **Legend:** Located at the top of the chart.

* Green: AttnLogDet (all layers)

* Blue: AttnEigval (all layers)

* Orange: LapEigval (all layers)

* **Chart Titles:**

* Top-left: SQuADv2

* Top-middle-left: NQOpen

* Top-middle-right: HaluevalQA

* Top-right: CoQA
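The y-axis quantity can be illustrated with a minimal sketch. It assumes that "Drop (% of AUROC)" is a relative drop between a reference AUROC and the AUROC measured in a shifted setting; the description does not state the exact formula, and the function name `auroc_drop_percent` is hypothetical.

```python
def auroc_drop_percent(reference_auroc: float, shifted_auroc: float) -> float:
    """Relative AUROC drop in percent (assumed definition, not confirmed by the figure)."""
    if reference_auroc <= 0:
        raise ValueError("reference_auroc must be positive")
    # Positive result: the shifted setting scores lower than the reference.
    return 100.0 * (reference_auroc - shifted_auroc) / reference_auroc


# Hypothetical numbers, chosen only to show the arithmetic.
drop = auroc_drop_percent(reference_auroc=0.80, shifted_auroc=0.60)
print(f"{drop:.1f}% drop")
```

Under this assumed definition, a bar at 25 on the y-axis would mean the AUROC fell by a quarter of its reference value.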

### Detailed Analysis

**SQuADv2 Dataset:**

* TriviaQA: AttnLogDet ~9%, AttnEigval ~8%, LapEigval ~5%

* NQOpen: AttnLogDet ~2%, AttnEigval ~6%, LapEigval ~2%

* HaluevalQA: AttnLogDet ~12%, AttnEigval ~11%, LapEigval ~8%

* GSM8K: AttnLogDet ~15%, AttnEigval ~13%, LapEigval ~13%

* CoQA: AttnLogDet ~26%, AttnEigval ~26%, LapEigval ~18%

* TruthfulQA: AttnLogDet ~42%, AttnEigval ~30%, LapEigval ~19%

**NQOpen Dataset:**

* TriviaQA: AttnLogDet ~9%, AttnEigval ~9%, LapEigval ~4%

* HaluevalQA: AttnLogDet ~13%, AttnEigval ~12%, LapEigval ~9%

* GSM8K: AttnLogDet ~22%, AttnEigval ~21%, LapEigval ~17%

* CoQA: AttnLogDet ~38%, AttnEigval ~32%, LapEigval ~26%

* SQuADv2: AttnLogDet ~3%, AttnEigval ~7%, LapEigval ~3%

* TruthfulQA: AttnLogDet ~43%, AttnEigval ~33%, LapEigval ~29%

**HaluevalQA Dataset:**

* TriviaQA: AttnLogDet ~5%, AttnEigval ~5%, LapEigval ~5%

* NQOpen: AttnLogDet ~2%, AttnEigval ~1%, LapEigval ~1%

* GSM8K: AttnLogDet ~36%, AttnEigval ~17%, LapEigval ~13%

* CoQA: AttnLogDet ~37%, AttnEigval ~22%, LapEigval ~17%

* SQuADv2: AttnLogDet ~2%, AttnEigval ~13%, LapEigval ~2%

* TruthfulQA: AttnLogDet ~37%, AttnEigval ~22%, LapEigval ~45%

**CoQA Dataset:**

* TriviaQA: AttnLogDet ~17%, AttnEigval ~12%, LapEigval ~9%

* NQOpen: AttnLogDet ~16%, AttnEigval ~10%, LapEigval ~7%

* HaluevalQA: AttnLogDet ~15%, AttnEigval ~10%, LapEigval ~7%

* GSM8K: AttnLogDet ~37%, AttnEigval ~47%, LapEigval ~32%

* SQuADv2: AttnLogDet ~15%, AttnEigval ~14%, LapEigval ~4%

* TruthfulQA: AttnLogDet ~38%, AttnEigval ~38%, LapEigval ~35%

### Key Observations

* The drop in AUROC varies significantly across different question types and datasets.

* AttnLogDet generally shows a higher drop in AUROC compared to AttnEigval and LapEigval, especially for TruthfulQA in SQuADv2 and NQOpen datasets.

* LapEigval often exhibits the lowest drop in AUROC, suggesting it might be more robust to certain types of questions.

* GSM8K and TruthfulQA questions tend to have a higher drop in AUROC compared to TriviaQA and NQOpen questions.
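The method comparison in these observations can be checked with a short sketch that averages the approximate readings listed for the SQuADv2 group in the Detailed Analysis. The values are the estimates from that list, in the order given there, not measured data.

```python
# Approximate readings for the SQuADv2 group, in the order listed in
# the Detailed Analysis (six x-axis entries per method).
squadv2_group = {
    "AttnLogDet": [9, 2, 12, 15, 26, 42],
    "AttnEigval": [8, 6, 11, 13, 26, 30],
    "LapEigval": [5, 2, 8, 13, 18, 19],
}

# Mean drop per method across the six entries.
mean_drop = {name: sum(vals) / len(vals) for name, vals in squadv2_group.items()}

# Method with the smallest average drop in this group.
most_stable = min(mean_drop, key=mean_drop.get)

for name, value in mean_drop.items():
    print(f"{name}: {value:.2f}")
print("lowest mean drop:", most_stable)
```

The same averaging can be repeated for the other three groups to see whether the ordering holds there as well.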

### Interpretation

The bar charts compare three methods (AttnLogDet, AttnEigval, and LapEigval) by the drop in AUROC they incur for each dataset pairing. The size of the drop depends strongly on both the method and the dataset. AttnLogDet tends to show the largest drops, for example ~42% on TruthfulQA in the SQuADv2 chart against ~19% for LapEigval. LapEigval usually shows the smallest drop, which suggests it is the most stable of the three, although it is not uniformly best: its TruthfulQA reading in the HaluevalQA chart (~45%) is the highest in that group. GSM8K and TruthfulQA tend to show larger drops than TriviaQA and NQOpen for all three methods, so these appear to be the hardest settings regardless of method.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: Drop (%) of AUROC for Different QA Datasets and Models

### Overview

The image presents a series of four bar charts, each representing a different Question Answering (QA) dataset: SQuADv2, NQOpen, HaluevalQA, and CoQA. Each chart compares three methods, AttnLogDet (all layers), AttnEigval (all layers), and LapEigval (all layers), by the Drop (%) of Area Under the Receiver Operating Characteristic curve (AUROC).

### Components/Axes

* **X-axis:** Lists QA datasets: TriviaQA, NQOpen, HaluevalQA, GSM8K, SQuAD, TruthfulQA, and PI/10.

* **Y-axis:** Represents "Drop (%) of AUROC", ranging from 0 to 50.

* **Legend:** Located at the top-center of the image, identifies the three models using color-coding:

* Green: AttnLogDet (all layers)

* Blue: AttnEigval (all layers)

* Orange: LapEigval (all layers)

* **Titles:** Each chart is labeled with the corresponding QA dataset name (SQuADv2, NQOpen, HaluevalQA, CoQA) positioned at the top-center.

### Detailed Analysis or Content Details

**SQuADv2 Chart:**

* TriviaQA: AttnLogDet ≈ 1.5%, AttnEigval ≈ 1.0%, LapEigval ≈ 1.0%

* NQOpen: AttnLogDet ≈ 2.0%, AttnEigval ≈ 1.5%, LapEigval ≈ 1.5%

* HaluevalQA: AttnLogDet ≈ 10.0%, AttnEigval ≈ 6.0%, LapEigval ≈ 5.0%

* GSM8K: AttnLogDet ≈ 15.0%, AttnEigval ≈ 10.0%, LapEigval ≈ 8.0%

* SQuAD: AttnLogDet ≈ 42.0%, AttnEigval ≈ 30.0%, LapEigval ≈ 25.0%

* TruthfulQA: AttnLogDet ≈ 35.0%, AttnEigval ≈ 25.0%, LapEigval ≈ 20.0%

* PI/10: AttnLogDet ≈ 45.0%, AttnEigval ≈ 35.0%, LapEigval ≈ 30.0%

**NQOpen Chart:**

* TriviaQA: AttnLogDet ≈ 1.0%, AttnEigval ≈ 0.5%, LapEigval ≈ 0.5%

* NQOpen: AttnLogDet ≈ 1.5%, AttnEigval ≈ 1.0%, LapEigval ≈ 1.0%

* HaluevalQA: AttnLogDet ≈ 5.0%, AttnEigval ≈ 3.0%, LapEigval ≈ 2.0%

* GSM8K: AttnLogDet ≈ 15.0%, AttnEigval ≈ 10.0%, LapEigval ≈ 8.0%

* SQuAD: AttnLogDet ≈ 30.0%, AttnEigval ≈ 20.0%, LapEigval ≈ 15.0%

* TruthfulQA: AttnLogDet ≈ 25.0%, AttnEigval ≈ 15.0%, LapEigval ≈ 10.0%

* PI/10: AttnLogDet ≈ 35.0%, AttnEigval ≈ 25.0%, LapEigval ≈ 20.0%

**HaluevalQA Chart:**

* TriviaQA: AttnLogDet ≈ 1.0%, AttnEigval ≈ 0.5%, LapEigval ≈ 0.5%

* NQOpen: AttnLogDet ≈ 2.0%, AttnEigval ≈ 1.0%, LapEigval ≈ 1.0%

* HaluevalQA: AttnLogDet ≈ 45.0%, AttnEigval ≈ 30.0%, LapEigval ≈ 25.0%

* GSM8K: AttnLogDet ≈ 20.0%, AttnEigval ≈ 15.0%, LapEigval ≈ 10.0%

* SQuAD: AttnLogDet ≈ 40.0%, AttnEigval ≈ 30.0%, LapEigval ≈ 20.0%

* TruthfulQA: AttnLogDet ≈ 30.0%, AttnEigval ≈ 20.0%, LapEigval ≈ 15.0%

* PI/10: AttnLogDet ≈ 40.0%, AttnEigval ≈ 30.0%, LapEigval ≈ 25.0%

**CoQA Chart:**

* TriviaQA: AttnLogDet ≈ 1.0%, AttnEigval ≈ 0.5%, LapEigval ≈ 0.5%

* NQOpen: AttnLogDet ≈ 2.0%, AttnEigval ≈ 1.0%, LapEigval ≈ 1.0%

* HaluevalQA: AttnLogDet ≈ 10.0%, AttnEigval ≈ 5.0%, LapEigval ≈ 5.0%

* GSM8K: AttnLogDet ≈ 15.0%, AttnEigval ≈ 10.0%, LapEigval ≈ 8.0%

* SQuAD: AttnLogDet ≈ 35.0%, AttnEigval ≈ 25.0%, LapEigval ≈ 20.0%

* TruthfulQA: AttnLogDet ≈ 30.0%, AttnEigval ≈ 20.0%, LapEigval ≈ 15.0%

* PI/10: AttnLogDet ≈ 40.0%, AttnEigval ≈ 30.0%, LapEigval ≈ 25.0%

### Key Observations

* Across all datasets, AttnLogDet generally exhibits the highest Drop (%) of AUROC, followed by AttnEigval and then LapEigval.

* The largest differences between the methods are observed on SQuAD and HaluevalQA.

* For TriviaQA and NQOpen, the Drop (%) of AUROC is consistently low (≈ 2% or less) across all methods. HaluevalQA is low to moderate (≈ 2–10%) except in the HaluevalQA chart, where it reaches ≈ 45%.

* GSM8K shows a moderate Drop (%) of AUROC (≈ 8–20%) for all methods.

* TruthfulQA shows a higher Drop (%) of AUROC than TriviaQA and NQOpen but a lower one than SQuAD, while PI/10 matches or exceeds SQuAD in every chart.

### Interpretation

The data suggests that AttnLogDet consistently shows the largest Drop (%) of AUROC across all four charts, followed by AttnEigval and then LapEigval. Because a larger drop means a larger loss of performance, this ordering indicates that LapEigval is the most robust of the three and AttnLogDet the least. The large gaps between methods on SQuAD and HaluevalQA suggest that these settings are the most sensitive to the choice of method. The consistently low drop on TriviaQA and NQOpen may indicate that these settings are relatively easy, or that performance on them is already stable. The differences across datasets highlight the importance of evaluating on a diverse set of QA tasks. The consistent ordering of the drops (AttnLogDet > AttnEigval > LapEigval) suggests a systematic difference between the methods rather than dataset-specific quirks.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: Drop (%) of AuROC Across Datasets and Methods

### Overview

The image displays a series of four grouped bar charts arranged horizontally. Each chart represents a different evaluation dataset (SQuADv2, NQOpen, HaluevalQA, CoQA) and shows the performance drop (as a percentage of Area under the ROC Curve, AuROC) for three different methods applied to various question-answering or reasoning models. The y-axis represents the "Drop (%) of AuROC," and the x-axis lists the models/datasets being evaluated.

### Components/Axes

* **Main Title/Legend (Top Center):** A shared legend is positioned at the top center of the entire figure.

* **Green Bar:** `AttnLogDet (all layers)`

* **Blue Bar:** `AttnEigval (all layers)`

* **Orange Bar:** `LapEigval (all layers)`

* **Subplot Titles (Top of each chart):** From left to right: `SQuADv2`, `NQOpen`, `HaluevalQA`, `CoQA`.

* **Y-Axis (Left side of each subplot):** Labeled `Drop (%) of AuROC`. The scale runs from 0 to 50, with major tick marks at 0, 10, 20, 30, 40, and 50.

* **X-Axis (Bottom of each subplot):** Lists the models/datasets being evaluated. The labels are consistent across subplots but the specific models included vary slightly. The common labels are: `TriviaQA`, `NQOpen`, `HaluevalQA`, `GSM8K`, `CoQA`, `SQuADv2`, `TruthfulQA`.

### Detailed Analysis

The analysis is segmented by subplot (dataset).

**1. Subplot: SQuADv2**

* **Trend:** All three methods show a generally increasing trend in performance drop as we move from left to right along the x-axis models, with the highest drops observed for `TruthfulQA`.

* **Data Points (Approximate % Drop):**

* **TriviaQA:** Green ~9, Blue ~9, Orange ~5.

* **NQOpen:** Green ~5, Blue ~8, Orange ~4.

* **HaluevalQA:** Green ~12, Blue ~10, Orange ~8.

* **GSM8K:** Green ~16, Blue ~14, Orange ~13.

* **CoQA:** Green ~28, Blue ~27, Orange ~20.

* **SQuADv2:** Green ~28, Blue ~27, Orange ~20. *(Note: This appears identical to CoQA values in this subplot)*.

* **TruthfulQA:** Green ~43, Blue ~35, Orange ~46.

**2. Subplot: NQOpen**

* **Trend:** Performance drops are more varied. `TruthfulQA` shows the highest drops for all methods, followed by `GSM8K` and `CoQA`.

* **Data Points (Approximate % Drop):**

* **TriviaQA:** Green ~8, Blue ~7, Orange ~5.

* **NQOpen:** Green ~12, Blue ~11, Orange ~9.

* **HaluevalQA:** Green ~11, Blue ~10, Orange ~8.

* **GSM8K:** Green ~29, Blue ~25, Orange ~23.

* **CoQA:** Green ~28, Blue ~31, Orange ~22.

* **SQuADv2:** Green ~7, Blue ~6, Orange ~7.

* **TruthfulQA:** Green ~41, Blue ~39, Orange ~48.

**3. Subplot: HaluevalQA**

* **Trend:** This subplot shows the most extreme variation. Drops for `TriviaQA`, `NQOpen`, and `SQuADv2` are very low (5% or less), while `GSM8K` and `TruthfulQA` show very high drops (roughly 33% to 48%).

* **Data Points (Approximate % Drop):**

* **TriviaQA:** Green ~5, Blue ~4, Orange ~1.

* **NQOpen:** Green ~4, Blue ~1, Orange ~4.

* **HaluevalQA:** Green ~0, Blue ~0, Orange ~0. *(All bars are at or near the baseline)*.

* **GSM8K:** Green ~40, Blue ~33, Orange ~48.

* **CoQA:** Green ~25, Blue ~24, Orange ~18.

* **SQuADv2:** Green ~2, Blue ~2, Orange ~1.

* **TruthfulQA:** Green ~36, Blue ~35, Orange ~46.

**4. Subplot: CoQA**

* **Trend:** `GSM8K` shows the highest drop for the `AttnEigval` (blue) method. `TruthfulQA` shows consistently high drops across all methods.

* **Data Points (Approximate % Drop):**

* **TriviaQA:** Green ~24, Blue ~16, Orange ~13.

* **NQOpen:** Green ~19, Blue ~11, Orange ~9.

* **HaluevalQA:** Green ~17, Blue ~10, Orange ~10.

* **GSM8K:** Green ~37, Blue ~49, Orange ~36.

* **CoQA:** Green ~15, Blue ~12, Orange ~7.

* **SQuADv2:** Green ~14, Blue ~12, Orange ~6.

* **TruthfulQA:** Green ~39, Blue ~42, Orange ~38.

### Key Observations

1. **Method Performance:** The `LapEigval (all layers)` method (orange) produces the highest drop on the `TruthfulQA` model in three of the four evaluation datasets (SQuADv2, NQOpen, HaluevalQA), reaching roughly 46% to 48%. In the CoQA subplot it is the lowest of the three methods on `TruthfulQA`, at about 38%.

2. **Model Sensitivity:** The `TruthfulQA` model consistently shows the largest or among the largest drops in AuROC across all methods and evaluation datasets, suggesting it is highly sensitive to the interventions being tested.

3. **Dataset-Specific Anomalies:** The `HaluevalQA` evaluation dataset shows near-zero drop for the `HaluevalQA` model itself (the diagonal), which is an expected sanity check. It also shows uniquely low drops for `TriviaQA`, `NQOpen`, and `SQuADv2` models within this subplot.

4. **Outlier Data Point:** The single highest observed drop is for the `AttnEigval` method (blue) on the `GSM8K` model within the `CoQA` evaluation dataset, reaching approximately 49%.
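The outlier claim can be verified against the approximate readings listed in the four subplots above. The sketch below copies those estimates into a dictionary and searches for the maximum; bars are keyed by legend color, and the third subplot and x-axis label are spelled `HaluevalQA` here as an assumption about the intended name.

```python
# Approximate readings from the subplot lists; tuples are (green, blue, orange).
LABELS = ["TriviaQA", "NQOpen", "HaluevalQA", "GSM8K", "CoQA", "SQuADv2", "TruthfulQA"]
COLORS = ("green", "blue", "orange")

readings = {
    "SQuADv2": [(9, 9, 5), (5, 8, 4), (12, 10, 8), (16, 14, 13),
                (28, 27, 20), (28, 27, 20), (43, 35, 46)],
    "NQOpen": [(8, 7, 5), (12, 11, 9), (11, 10, 8), (29, 25, 23),
               (28, 31, 22), (7, 6, 7), (41, 39, 48)],
    "HaluevalQA": [(5, 4, 1), (4, 1, 4), (0, 0, 0), (40, 33, 48),
                   (25, 24, 18), (2, 2, 1), (36, 35, 46)],
    "CoQA": [(24, 16, 13), (19, 11, 9), (17, 10, 10), (37, 49, 36),
             (15, 12, 7), (14, 12, 6), (39, 42, 38)],
}

# Flatten to (value, subplot, x-axis label, color) and take the largest value.
largest = max(
    (value, subplot, LABELS[i], COLORS[j])
    for subplot, rows in readings.items()
    for i, triple in enumerate(rows)
    for j, value in enumerate(triple)
)
print(largest)
```

The maximum is unique in these readings, so the tuple ordering used by `max` does not affect which bar is reported.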

### Interpretation

This chart likely comes from a research paper analyzing the robustness or internal consistency of large language models (LLMs) when subjected to different attention-based interventions (`AttnLogDet`, `AttnEigval`, `LapEigval`). The "Drop in AuROC" measures how much the model's ability to distinguish between correct and incorrect answers degrades after the intervention.

* **What the data suggests:** The interventions cause significant degradation in model performance (high AuROC drop) on tasks requiring factual knowledge or complex reasoning (e.g., `TruthfulQA`, `GSM8K`), with `LapEigval` producing several of the largest readings. The varying impact across evaluation datasets (SQuADv2, NQOpen, etc.) indicates that the effect of these interventions is not uniform and depends on the nature of the evaluation benchmark.

* **Relationship between elements:** Each subplot acts as a controlled experiment: "When we evaluate on dataset X (e.g., SQuADv2), how do different interventions affect performance across a suite of models?" The consistently large drops for `TruthfulQA` across all experiments suggest its internal representations or attention mechanisms are particularly vulnerable to the tested perturbations.

* **Underlying implication:** The high drops on models like `TruthfulQA` and `GSM8K` might indicate that these models rely on specific, fragile attention patterns for their performance. The interventions disrupt these patterns, leading to significant accuracy loss. Conversely, models with lower drops (e.g., `HaluevalQA` on its own dataset) may have more robust or redundant internal mechanisms. This analysis is crucial for understanding model interpretability and building more robust AI systems.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Drop (% of AUROC) Across QA Models and Evaluation Methods

### Overview

The image is a grouped bar chart comparing the percentage drop in AUROC (Area Under the Receiver Operating Characteristic curve) for three evaluation methods (AttnLogDet, AttnEigval, LapEigval) across four datasets (SQuADv2, NQOpen, HaluevalQA, CoQA). Each dataset sub-chart contains seven QA models (TriviaQA, NQOpen, HaluevalQA, GSM8K, CoQA, SQuADv2, TruthfulQA), with three bars per QA model representing the three evaluation methods. The y-axis ranges from 0% to 50% in 10% increments.

### Components/Axes

- **Title**: "Drop (% of AUROC)"

- **Sub-charts**: Four datasets (SQuADv2, NQOpen, HaluevalQA, CoQA)

- **X-axis**: QA models (TriviaQA, NQOpen, HaluevalQA, GSM8K, CoQA, SQuADv2, TruthfulQA)

- **Y-axis**: Drop (% of AUROC) from 0% to 50%

- **Legend**:

- Green: AttnLogDet (all layers)

- Blue: AttnEigval (all layers)

- Orange: LapEigval (all layers)

- **Bar Groups**: For each QA model, three bars (one per evaluation method) are grouped together.
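The grouping described above, three bars side by side at each x-axis entry, can be sketched by computing bar centers around each group index. This is a minimal illustration in plain Python; the function name `grouped_bar_positions` and the bar width of 0.25 are assumptions, not values read from the figure.

```python
def grouped_bar_positions(n_groups: int, n_series: int, bar_width: float = 0.25):
    """Return the x-center of every bar, one inner list per group."""
    positions = []
    for group in range(n_groups):
        # Center the series around the integer group index.
        row = [group + (s - (n_series - 1) / 2) * bar_width for s in range(n_series)]
        positions.append(row)
    return positions


# Two x-axis entries, three methods each (green, blue, orange).
centers = grouped_bar_positions(n_groups=2, n_series=3)
print(centers)
```

A plotting library would draw each method's bars at these centers with one color per series, so that every x-axis entry carries one green, one blue, and one orange bar.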

### Detailed Analysis

#### SQuADv2 Sub-chart

- **TriviaQA**: AttnLogDet (green) ~10%, AttnEigval (blue) ~8%, LapEigval (orange) ~5%

- **NQOpen**: AttnLogDet ~15%, AttnEigval ~10%, LapEigval ~5%

- **HaluevalQA**: AttnLogDet ~20%, AttnEigval ~15%, LapEigval ~10%

- **GSM8K**: AttnLogDet ~25%, AttnEigval ~20%, LapEigval ~15%

- **CoQA**: AttnLogDet ~30%, AttnEigval ~25%, LapEigval ~20%

- **SQuADv2**: AttnLogDet ~35%, AttnEigval ~30%, LapEigval ~25%

- **TruthfulQA**: AttnLogDet ~40%, AttnEigval ~35%, LapEigval ~30%

#### NQOpen Sub-chart

- **TriviaQA**: AttnLogDet ~8%, AttnEigval ~6%, LapEigval ~4%

- **NQOpen**: AttnLogDet ~12%, AttnEigval ~10%, LapEigval ~6%

- **HaluevalQA**: AttnLogDet ~18%, AttnEigval ~15%, LapEigval ~10%

- **GSM8K**: AttnLogDet ~22%, AttnEigval ~20%, LapEigval ~15%

- **CoQA**: AttnLogDet ~28%, AttnEigval ~25%, LapEigval ~20%

- **SQuADv2**: AttnLogDet ~32%, AttnEigval ~30%, LapEigval ~25%

- **TruthfulQA**: AttnLogDet ~38%, AttnEigval ~35%, LapEigval ~30%

#### HaluevalQA Sub-chart

- **TriviaQA**: AttnLogDet ~5%, AttnEigval ~4%, LapEigval ~3%

- **NQOpen**: AttnLogDet ~9%, AttnEigval ~7%, LapEigval ~5%

- **HaluevalQA**: AttnLogDet ~14%, AttnEigval ~12%, LapEigval ~8%

- **GSM8K**: AttnLogDet ~20%, AttnEigval ~18%, LapEigval ~15%

- **CoQA**: AttnLogDet ~26%, AttnEigval ~24%, LapEigval ~20%

- **SQuADv2**: AttnLogDet ~30%, AttnEigval ~28%, LapEigval ~25%

- **TruthfulQA**: AttnLogDet ~36%, AttnEigval ~34%, LapEigval ~30%

#### CoQA Sub-chart

- **TriviaQA**: AttnLogDet ~10%, AttnEigval ~8%, LapEigval ~6%

- **NQOpen**: AttnLogDet ~15%, AttnEigval ~12%, LapEigval ~9%

- **HaluevalQA**: AttnLogDet ~20%, AttnEigval ~18%, LapEigval ~15%

- **GSM8K**: AttnLogDet ~25%, AttnEigval ~23%, LapEigval ~20%

- **CoQA**: AttnLogDet ~30%, AttnEigval ~28%, LapEigval ~25%

- **SQuADv2**: AttnLogDet ~35%, AttnEigval ~33%, LapEigval ~30%

- **TruthfulQA**: AttnLogDet ~40%, AttnEigval ~38%, LapEigval ~35%

### Key Observations

1. **LapEigval (orange) consistently shows the lowest drop** across all datasets and QA models in the readings above.

2. **AttnLogDet (green) shows the highest drop and AttnEigval (blue) sits between the two**, typically 2 to 5 percentage points below AttnLogDet.

3. **Drop increases from left to right along the x-axis**:

- TriviaQA has the lowest drops (~3–10%).

- TruthfulQA has the highest drops (~30–40%).

4. **Representative comparisons**:

- In NQOpen, LapEigval’s drop for TruthfulQA (~30%) is lower than AttnLogDet (~38%) and AttnEigval (~35%).

- In CoQA, LapEigval’s drop for SQuADv2 (~30%) is lower than AttnLogDet (~35%) and AttnEigval (~33%).

### Interpretation

The data suggests that **LapEigval is the most robust** of the three methods, since it shows the smallest AUROC drop in every group, while **AttnLogDet** shows the largest drop and **AttnEigval** falls in between. The trend of increasing drops from TriviaQA to TruthfulQA implies that all three methods degrade more on the harder settings (e.g., TruthfulQA). The gaps between methods are fairly uniform across sub-charts, so these readings show no clear dataset-specific exception to the ordering. This highlights the need to evaluate such methods on each target QA task rather than assuming that results transfer.
