TECHNICAL ASSET FINGERPRINT

766ed2a053949a7b25e5596c


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Chart: Distribution of Reward Function and Accuracy on Human Reward Function Set

### Overview

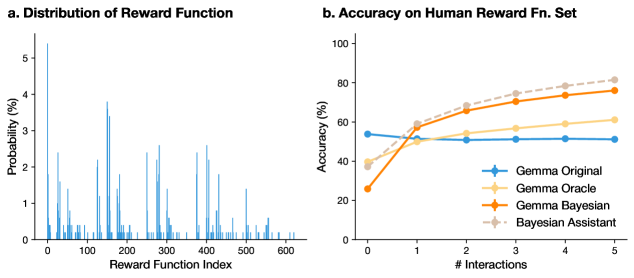

The image presents two charts. The first chart (a) is a bar graph showing the distribution of a reward function across different reward function indices. The second chart (b) is a line graph comparing the accuracy of different models (Gemma Original, Gemma Oracle, Gemma Bayesian, and Bayesian Assistant) on a human reward function set, plotted against the number of interactions.

### Components/Axes

**Chart a: Distribution of Reward Function**

* **Title:** a. Distribution of Reward Function

* **X-axis:** Reward Function Index, ranging from 0 to 600.

* **Y-axis:** Probability (%), ranging from 0% to 5%.

* **Data:** The chart displays a series of vertical bars, each representing the probability associated with a specific reward function index.

**Chart b: Accuracy on Human Reward Fn. Set**

* **Title:** b. Accuracy on Human Reward Fn. Set

* **X-axis:** # Interactions, ranging from 0 to 5.

* **Y-axis:** Accuracy (%), ranging from 0% to 100%.

* **Legend (located in the bottom-right):**

  * **Blue Line with Plus Markers:** Gemma Original

  * **Light Yellow Line:** Gemma Oracle

  * **Orange Line with Plus Markers:** Gemma Bayesian

  * **Dashed Gray Line with Diamond Markers:** Bayesian Assistant

### Detailed Analysis

**Chart a: Distribution of Reward Function**

* The distribution is highly uneven, with most reward function indices having very low probabilities.

* There are several spikes indicating reward function indices with significantly higher probabilities.

* The highest probability observed is approximately 5%.

**Chart b: Accuracy on Human Reward Fn. Set**

* **Gemma Original (Blue):** Starts at approximately 54% accuracy at 0 interactions, dips slightly to around 51% at 1 interaction, and then remains relatively constant at approximately 53% for the remaining interactions.

* **Gemma Oracle (Light Yellow):** Starts at approximately 38% accuracy at 0 interactions, increases to approximately 52% at 1 interaction, and then gradually increases to approximately 60% at 5 interactions.

* **Gemma Bayesian (Orange):** Starts at approximately 25% accuracy at 0 interactions, increases sharply to approximately 52% at 1 interaction, and then gradually increases to approximately 77% at 5 interactions.

* **Bayesian Assistant (Dashed Gray):** Starts at approximately 38% accuracy at 0 interactions, increases to approximately 52% at 1 interaction, and then gradually increases to approximately 82% at 5 interactions.

### Key Observations

* In Chart a, the reward function distribution is sparse, suggesting that only a small subset of reward functions are highly probable.

* In Chart b, Gemma Bayesian and Bayesian Assistant significantly outperform Gemma Original and Gemma Oracle as the number of interactions increases.

* Gemma Original's accuracy remains relatively stable regardless of the number of interactions.

* Bayesian Assistant shows the highest accuracy among all models, especially at higher interaction counts.

### Interpretation

The distribution of the reward function (Chart a) indicates that the reward landscape is not uniform, with certain reward functions being much more likely than others. This could reflect inherent biases or preferences in the environment or the data used to define the reward functions.

The accuracy comparison (Chart b) demonstrates the effectiveness of Bayesian methods (Gemma Bayesian and Bayesian Assistant) in learning from human interactions. These methods show a significant improvement in accuracy as the number of interactions increases, suggesting that they are better at adapting to human preferences or feedback compared to the Gemma Original and Gemma Oracle models. The Gemma Original model's stable accuracy suggests it may not be effectively learning from interactions, while the Gemma Oracle model shows some improvement but not as significant as the Bayesian approaches. The Bayesian Assistant, with its highest accuracy, likely incorporates additional mechanisms or prior knowledge that further enhance its learning capabilities.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Chart: Reward Function Distribution and Accuracy on Human Reward Function Set

### Overview

The image presents two charts side-by-side. The first chart (a) displays the distribution of a reward function, showing the probability of each reward function index. The second chart (b) illustrates the accuracy of different models (Gemma Original, Gemma Oracle, Gemma Bayesian, and Bayesian Assistant) on a human reward function set, plotted against the number of interactions.

### Components/Axes

**Chart a: Distribution of Reward Function**

* **X-axis:** Reward Function Index (ranging from approximately 0 to 600)

* **Y-axis:** Probability (%) (ranging from 0 to approximately 3.5)

* **Title:** Distribution of Reward Function

**Chart b: Accuracy on Human Reward Fn. Set**

* **X-axis:** # Interactions (ranging from 0 to 5)

* **Y-axis:** Accuracy (%) (ranging from 0 to 100)

* **Title:** Accuracy on Human Reward Fn. Set

* **Legend:**

  * Gemma Original (Blue, marked with triangles)

  * Gemma Oracle (Light Orange, marked with circles)

  * Gemma Bayesian (Orange, marked with squares)

  * Bayesian Assistant (Light Pink, marked with crosses)

### Detailed Analysis or Content Details

**Chart a: Distribution of Reward Function**

The distribution is a histogram-like plot. There is a large peak around an index of approximately 75-125, with a probability of around 3.3%. There are several smaller peaks and valleys throughout the range of reward function indices, indicating a non-uniform distribution. The probability generally decreases as the index moves away from the initial peak, with several smaller peaks appearing between approximately 200 and 550.

**Chart b: Accuracy on Human Reward Fn. Set**

* **Gemma Original (Blue Triangles):** Starts at approximately 55% accuracy at 0 interactions, dips to around 48% at 1 interaction, and remains relatively stable around 50-55% for the remaining interactions.

* **Gemma Oracle (Light Orange Circles):** Starts at approximately 25% accuracy at 0 interactions, rises sharply to around 60% at 1 interaction, and continues to increase to approximately 75% at 5 interactions.

* **Gemma Bayesian (Orange Squares):** Starts at approximately 45% accuracy at 0 interactions, rises to around 70% at 2 interactions, and continues to increase to approximately 78% at 5 interactions.

* **Bayesian Assistant (Light Pink Crosses):** Starts at approximately 50% accuracy at 0 interactions, rises to around 65% at 1 interaction, and continues to increase to approximately 80% at 5 interactions.

### Key Observations

* The reward function distribution (Chart a) is highly variable, with a prominent peak and numerous smaller fluctuations.

* In Chart b, the Gemma Oracle, Gemma Bayesian, and Bayesian Assistant models all demonstrate an increasing trend in accuracy as the number of interactions increases.

* The Gemma Original model shows a slight decrease in accuracy after the first interaction and remains relatively flat.

* The Bayesian Assistant reaches the highest accuracy by 5 interactions (approximately 80%), although at 0 interactions it starts below Gemma Original (approximately 50% vs. 55%).

* The Gemma Oracle shows the most significant improvement in accuracy with increasing interactions.
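The claim that Gemma Oracle improves the most can be checked with simple arithmetic on the start and end readings listed under Chart b. This is a rough sketch that treats those eyeballed values as exact; Gemma Original is left out because only a 50-55% range is given for its later interactions.

```python
# Approximate accuracy (%) at 0 and 5 interactions, taken from the readings above.
# Gemma Original is omitted: no single end value is given for it.
readings = {
    "Gemma Oracle": (25, 75),
    "Gemma Bayesian": (45, 78),
    "Bayesian Assistant": (50, 80),
}

def gains(table):
    """Net accuracy change in percentage points for each model."""
    return {name: end - start for name, (start, end) in table.items()}

g = gains(readings)
largest = max(g, key=g.get)
print(g)        # {'Gemma Oracle': 50, 'Gemma Bayesian': 33, 'Bayesian Assistant': 30}
print(largest)  # Gemma Oracle
```

Under these readings Gemma Oracle gains about 50 points, against roughly 30 for the two Bayesian models, even though it does not finish highest.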

### Interpretation

The distribution of the reward function (Chart a) suggests that the reward landscape is complex and not uniformly distributed. This complexity could pose challenges for reinforcement learning algorithms.

Chart b demonstrates the effectiveness of incorporating Bayesian methods and oracle feedback into the Gemma models. The Gemma Oracle, which presumably benefits from access to perfect information, shows a substantial improvement in accuracy with more interactions. The Gemma Bayesian and Bayesian Assistant models also exhibit improved performance, indicating that Bayesian inference can help the models learn more effectively from limited data. The relatively flat performance of the Gemma Original model suggests that it struggles to adapt to the human reward function set without the benefits of oracle feedback or Bayesian reasoning. The consistent high performance of the Bayesian Assistant suggests it is the most robust and effective model for this task. The initial dip in accuracy for Gemma Original could be due to overfitting to the initial data or a temporary instability during the learning process.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## [Chart Pair]: Distribution of Reward Functions and Accuracy Comparison

### Overview

The image contains two distinct charts presented side-by-side. The left chart (a) is a bar chart showing the probability distribution across a set of reward function indices. The right chart (b) is a line chart comparing the accuracy of four different models or methods over a series of interactions. The overall context appears to be an analysis of reinforcement learning or AI alignment, focusing on reward functions and model performance.

### Components/Axes

**Chart a: Distribution of Reward Function**

* **Title:** "a. Distribution of Reward Function"

* **X-axis:** Label: "Reward Function Index". Scale: Linear, marked from 0 to 600 in increments of 100.

* **Y-axis:** Label: "Probability (%)". Scale: Linear, marked from 0 to 5 in increments of 1.

* **Data Series:** A single series represented by vertical blue bars. Each bar corresponds to a specific reward function index.

**Chart b: Accuracy on Human Reward Fn. Set**

* **Title:** "b. Accuracy on Human Reward Fn. Set"

* **X-axis:** Label: "# Interactions". Scale: Discrete integers from 0 to 5.

* **Y-axis:** Label: "Accuracy (%)". Scale: Linear, marked from 0 to 100 in increments of 20.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains four entries:

  1. `Gemma Original` - Solid blue line with diamond markers.

  2. `Gemma Oracle` - Solid yellow line with square markers.

  3. `Gemma Bayesian` - Solid orange line with circle markers.

  4. `Bayesian Assistant` - Dashed brown line with diamond markers.

### Detailed Analysis

**Chart a: Distribution of Reward Function**

The distribution is highly non-uniform and sparse. The probability mass is concentrated in several distinct clusters or peaks across the index range.

* **Highest Peak:** The tallest bar is located at approximately index 150-160, reaching a probability of nearly 4%.

* **Other Major Peaks:** Significant clusters of high probability appear around indices 250-280, 350-400, and 450-500. The peaks in these clusters generally range between 2% and 3.5% probability.

* **Low-Probability Regions:** Large stretches of indices, particularly between 0-100, 200-250, and 500-600, show very low or near-zero probability, indicated by very short or absent bars.

* **Overall Shape:** The chart suggests that out of over 600 possible reward functions, only a specific subset (perhaps 50-100) are considered probable or relevant in this context.
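One way to make "only a specific subset is probable" concrete is to count how many indices are needed to cover most of the probability mass. The per-index probabilities behind chart (a) are not given here, so this sketch runs on a hypothetical toy distribution; the function name `indices_for_mass` and the 90% threshold are illustrative choices, not taken from the source.

```python
def indices_for_mass(probs, mass=0.9, tol=1e-12):
    """Smallest number of indices whose probabilities sum to at least `mass`.

    `probs` holds probabilities as fractions (not percent) that sum to 1.
    `tol` absorbs floating-point rounding in the running sum.
    """
    total = 0.0
    # Take the most probable indices first, so the count is as small as possible.
    for count, p in enumerate(sorted(probs, reverse=True), start=1):
        total += p
        if total >= mass - tol:
            return count
    return len(probs)

# Hypothetical toy distribution over 600 indices (NOT read from chart a):
# 60 "probable" reward functions share 90% of the mass, the other 540 share 10%.
toy = [0.9 / 60] * 60 + [0.1 / 540] * 540
print(indices_for_mass(toy, 0.9))  # 60
```

For comparison, a uniform distribution over the same 600 indices would need 540 of them to reach 90%, so a small count relative to that baseline is what "sparse" would mean here.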

**Chart b: Accuracy on Human Reward Fn. Set**

This chart tracks the performance of four methods as the number of interactions increases.

* **Trend Verification & Data Points (Approximate):**

  * **Gemma Original (Blue):** The line is nearly flat, showing minimal improvement. It starts at ~55% accuracy at 0 interactions and ends at ~52% at 5 interactions. The trend is essentially stagnant.

  * **Gemma Oracle (Yellow):** Shows a steady, moderate upward trend. Starts at ~40% (0 interactions), rises to ~50% (1), ~55% (2), ~58% (3), ~60% (4), and ends at ~62% (5).

  * **Gemma Bayesian (Orange):** Shows a strong, consistent upward trend. Starts the lowest at ~28% (0 interactions), then climbs sharply to ~55% (1), ~65% (2), ~70% (3), ~75% (4), and ends at ~78% (5).

  * **Bayesian Assistant (Dashed Brown):** Shows the strongest performance and trend. Starts at ~40% (0 interactions), rises to ~60% (1), ~70% (2), ~75% (3), ~80% (4), and ends at the highest point of ~82% (5). It consistently outperforms the other methods after the first interaction.

### Key Observations

1. **Performance Hierarchy:** After 1 interaction, a clear and consistent performance hierarchy is established and maintained: Bayesian Assistant > Gemma Bayesian > Gemma Oracle > Gemma Original.

2. **Impact of Bayesian Methods:** Both methods incorporating "Bayesian" in their name (Gemma Bayesian and Bayesian Assistant) show significantly greater improvement with more interactions compared to the non-Bayesian methods.

3. **The "Oracle" Baseline:** The Gemma Oracle, which likely represents an idealized or informed baseline, is outperformed by the Bayesian methods after just 1-2 interactions.

4. **Stagnation of Baseline:** The Gemma Original model shows no benefit from additional interactions, suggesting it lacks an effective mechanism for learning or adaptation from the provided feedback.

5. **Sparse Reward Distribution:** Chart (a) indicates that the "Human Reward Fn. Set" referenced in chart (b)'s title is not a uniform set but is composed of a sparse selection of specific, probable reward functions.
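The hierarchy in observation 1 can be checked step by step against the approximate readings listed under Chart b. This sketch takes those readings at face value and leaves out Gemma Original, whose intermediate values are not listed; `ranking_at` is a hypothetical helper name.

```python
# Approximate accuracy (%) at 0..5 interactions, as read in the Detailed Analysis above.
series = {
    "Bayesian Assistant": [40, 60, 70, 75, 80, 82],
    "Gemma Bayesian":     [28, 55, 65, 70, 75, 78],
    "Gemma Oracle":       [40, 50, 55, 58, 60, 62],
}

def ranking_at(step):
    """Model names sorted by accuracy at a given interaction count, highest first."""
    return sorted(series, key=lambda name: series[name][step], reverse=True)

expected = ["Bayesian Assistant", "Gemma Bayesian", "Gemma Oracle"]
for step in range(1, 6):
    print(step, ranking_at(step) == expected)  # True for every step from 1 to 5
```

At 5 interactions the excluded Gemma Original (~52%) also falls below Gemma Oracle (~62%), so it holds last place at the final step as well.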

### Interpretation

The data tells a compelling story about model adaptation and the value of Bayesian approaches in learning from human feedback (or a set of human-aligned reward functions).

* **What the data suggests:** The primary finding is that models equipped with Bayesian updating mechanisms (Gemma Bayesian, Bayesian Assistant) are far more effective at leveraging successive interactions to improve their accuracy on a target set of human reward functions. The Bayesian Assistant, in particular, demonstrates the most efficient and highest overall learning curve.

* **How elements relate:** Chart (a) provides crucial context for chart (b). The sparse distribution of reward functions implies that the learning task is not about mastering a broad, uniform space, but about identifying and aligning with a specific, narrow set of "correct" or "human-preferred" functions. The success of the Bayesian methods suggests they are particularly adept at this kind of targeted identification and alignment.

* **Notable anomalies/outliers:** The complete lack of improvement in the Gemma Original model is the most striking anomaly. It serves as a critical control, highlighting that the improvements seen in the other models are due to their specific architectural or algorithmic choices (like Bayesian inference) and not merely a function of receiving more interactions.

* **Underlying implication:** The results argue strongly for the integration of probabilistic, Bayesian reasoning into AI systems designed to learn from human feedback. Such systems appear to be more sample-efficient (learning faster from fewer interactions) and ultimately more capable of achieving high alignment accuracy. The "Assistant" variant likely incorporates additional design elements that further optimize this learning process.
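The "Bayesian updating" described above can be illustrated with a discrete posterior over candidate reward functions. This is a minimal sketch under stated assumptions, not the method behind Gemma Bayesian or Bayesian Assistant: it assumes each reward function is a linear weight vector over option features and that choices follow a softmax (Boltzmann-rational) model with a hypothetical inverse temperature `beta`. The features, candidate weights, and function names are all illustrative.

```python
import math

def choice_likelihood(weights, options, chosen, beta=1.0):
    """P(user picks options[chosen]) under a softmax choice model.

    Each option is a feature vector; its reward under a hypothesis is the
    dot product of the features with that hypothesis's weights.
    """
    rewards = [beta * sum(w * x for w, x in zip(weights, opt)) for opt in options]
    top = max(rewards)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(r - top) for r in rewards]
    return exps[chosen] / sum(exps)

def bayes_update(prior, hypotheses, options, chosen, beta=1.0):
    """Posterior over candidate reward functions after observing one choice."""
    unnorm = [p * choice_likelihood(h, options, chosen, beta)
              for p, h in zip(prior, hypotheses)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

# Hypothetical candidates over two features (e.g. price, duration); negative weight = dislike.
hypotheses = [(-1.0, 0.0), (0.0, -1.0), (-0.5, -0.5)]
posterior = [1 / 3] * 3  # uniform prior over the three candidates

# Hypothetical interactions: the user always picks option 1 (cheaper but longer),
# which is the likely choice for someone holding the first candidate.
options = [(1.0, 0.0), (0.0, 1.0)]
history = [posterior[0]]
for _ in range(3):
    posterior = bayes_update(posterior, hypotheses, options, chosen=1, beta=3.0)
    history.append(posterior[0])

print([round(p, 3) for p in posterior])
```

Each observed choice shifts probability toward the candidates that explain it best, which is the kind of targeted narrowing the interpretation attributes to the Bayesian methods. Swapping the uniform prior for a non-uniform one, such as a distribution shaped like chart (a), changes only the initial `posterior` list.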


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graph and Bar Chart: Reward Function Distribution and Accuracy Trends

### Overview

The image contains two side-by-side visualizations:

1. **Left (Bar Chart)**: "a. Distribution of Reward Function" showing probability (%) across Reward Function Index (0–600).

2. **Right (Line Graph)**: "b. Accuracy on Human Reward Fn. Set" comparing four methods (Gemma Original, Gemma Oracle, Gemma Bayesian, Bayesian Assistant) across 0–5 interactions.

---

### Components/Axes

#### Left Chart (Bar Chart):

- **Title**: "a. Distribution of Reward Function"

- **Y-Axis**: "Probability (%)" (scale: 0–5%)

- **X-Axis**: "Reward Function Index" (0–600, integer steps)

- **Legend**: Not explicitly labeled (bars are blue).

#### Right Chart (Line Graph):

- **Title**: "b. Accuracy on Human Reward Fn. Set"

- **Y-Axis**: "Accuracy (%)" (scale: 0–100%)

- **X-Axis**: "# Interactions" (0–5, integer steps)

- **Legend**:

  - **Blue (+)**: Gemma Original

  - **Yellow (+)**: Gemma Oracle

  - **Orange (+)**: Gemma Bayesian

  - **Gray (dashed)**: Bayesian Assistant

---

### Detailed Analysis

#### Left Chart (Bar Chart):

- **Distribution**:

  - Multimodal with sharp peaks at indices ~0, 100, 200, 300, 400, 500.

  - Peaks vary in height (e.g., ~5% at index 0, ~4% at index 200, ~3% at index 400).

  - Most indices have low probability (<1%), with sparse data beyond index 500.

#### Right Chart (Line Graph):

- **Trends**:

  1. **Gemma Original (Blue)**: Flat line at ~50% accuracy across all interactions.

  2. **Gemma Oracle (Yellow)**: Starts at ~40% (0 interactions), rises to ~60% by 5 interactions.

  3. **Gemma Bayesian (Orange)**: Starts at ~20% (0 interactions), steeply increases to ~80% by 5 interactions.

  4. **Bayesian Assistant (Gray, dashed)**: Starts at ~30% (0 interactions), rises to ~85% by 5 interactions.

- **Notable**:

  - Gemma Bayesian and Bayesian Assistant show the steepest improvement.

  - Bayesian Assistant’s dashed line suggests a projected or smoothed trend.
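The "steepest improvement" note can be made concrete by dividing each method's net change by the five interactions. This sketch uses the rounded start and end values listed under Trends and assumes they were read correctly; `mean_gain_per_interaction` is a hypothetical helper name.

```python
# Approximate accuracy (%) at 0 and 5 interactions, from the Trends list above.
readings = {
    "Gemma Original": (50, 50),
    "Gemma Oracle": (40, 60),
    "Gemma Bayesian": (20, 80),
    "Bayesian Assistant": (30, 85),
}

def mean_gain_per_interaction(start, end, steps=5):
    """Average accuracy change per interaction between the two endpoints."""
    return (end - start) / steps

slopes = {name: mean_gain_per_interaction(s, e) for name, (s, e) in readings.items()}
for name, slope in slopes.items():
    print(f"{name}: {slope:+.1f} points per interaction")
```

With these readings Gemma Bayesian (+12.0) and Bayesian Assistant (+11.0) have the largest average gains, Gemma Oracle gains +4.0, and Gemma Original gains nothing, which matches its flat line.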

---

### Key Observations

1. **Left Chart**:

   - Reward functions are unevenly distributed, with a few dominant indices.

   - No clear pattern in peak positions or magnitudes.

2. **Right Chart**:

   - All methods except Gemma Original improve with more interactions, and Gemma Bayesian and Bayesian Assistant outperform the others.

   - Gemma Original’s stagnation suggests poor adaptability.

---

### Interpretation

- **Reward Function Distribution**: The left chart implies that certain reward functions are more prevalent or effective, but the sparse data limits conclusions.

- **Accuracy Trends**:

  - **Gemma Bayesian** and **Bayesian Assistant** demonstrate superior performance, likely due to adaptive learning or probabilistic modeling.

  - **Gemma Original**’s flat line indicates it fails to leverage interactions, possibly due to rigid parameterization.

  - The **Bayesian Assistant**’s dashed line may represent a confidence interval or ensemble average, suggesting robustness.

- **Implications**: Bayesian methods (especially Bayesian Assistant) are more effective for dynamic reward function optimization, aligning with principles of probabilistic reasoning and iterative improvement.

---

**Note**: Exact numerical values for bar heights and line points are approximated due to lack of gridlines or numerical annotations. Trends are inferred from visual slopes and relative positioning.
