TECHNICAL ASSET FINGERPRINT

76708c04aba82808f655eb35

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

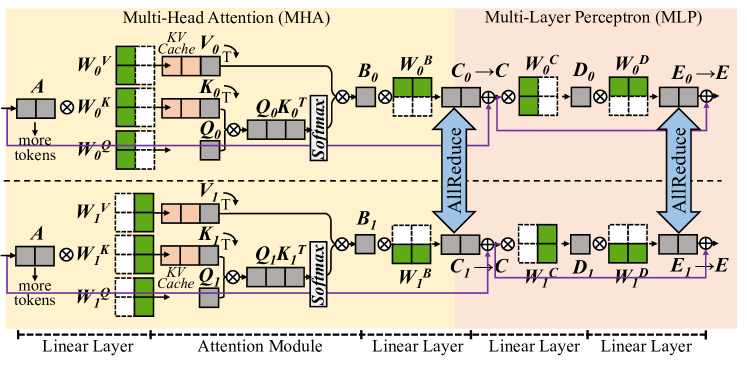

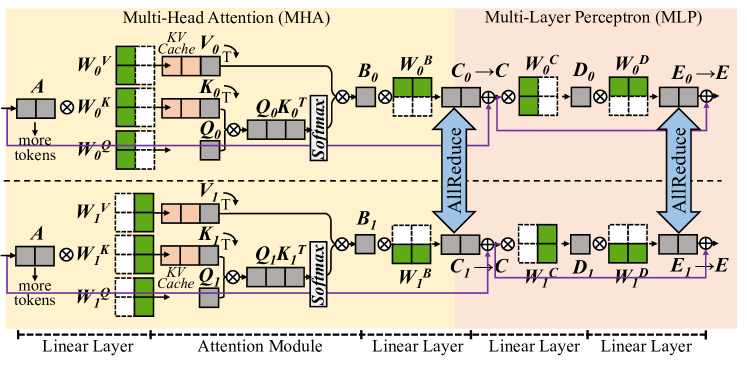

## Diagram: Multi-Head Attention (MHA) and Multi-Layer Perceptron (MLP)

### Overview

The image is a diagram illustrating the architecture and flow of data through a Multi-Head Attention (MHA) module and a Multi-Layer Perceptron (MLP) module. The diagram shows the connections and operations between different layers and components within these modules.

### Components/Axes

* **Titles:**

* Multi-Head Attention (MHA) - Located at the top-left.

* Multi-Layer Perceptron (MLP) - Located at the top-right.

* **Layers:**

* Linear Layer (MHA): Located at the bottom-left, spanning the first section.

* Attention Module (MHA): Located in the middle, spanning the second section.

* Linear Layer (MLP): Located at the bottom-right, spanning the third and fourth sections.

* **Components (MHA):**

* A (Input): Located at the left of the MHA module.

* W0K, W1K (Weight matrices for Key): Located after the input A.

* W0V, W1V (Weight matrices for Value): Located at the top and bottom, respectively.

* W0Q, W1Q (Weight matrices for Query): Located below W0V and W1V, respectively.

* KV Cache: Located next to W0V and W1V.

* K0T, K1T (Transposed Key matrices): Located to the right of the KV Cache.

* Q0, Q1 (Query matrices): Located to the right of W0Q and W1Q, respectively.

* Q0K0T, Q1K1T (Query-Key product): Located to the right of K0T and K1T.

* Softmax: Located to the right of Q0K0T and Q1K1T.

* B0, B1 (Output of Attention Module): Located to the right of Softmax.

* W0B, W1B (Weight matrices): Located to the right of B0 and B1.

* **Components (MLP):**

* C0, C1 (Input to MLP): Located to the right of W0B and W1B.

* W0C, W1C (Weight matrices): Located to the right of C0 and C1.

* D0, D1 (Intermediate output): Located to the right of W0C and W1C.

* W0D, W1D (Weight matrices): Located to the right of D0 and D1.

* E0, E1 (Output before final layer): Located to the right of W0D and W1D.

* E (Final output): Located to the right of E0 and E1.

* **Arrows:** Indicate the direction of data flow.

* **AllReduce:** Blue arrows indicating a reduction operation.

* **Colors:**

* Green: Represents weight matrices (W0V, W1V, W0Q, W1Q, W0B, W1B, W0C, W1C, W0D, W1D).

* Peach: Represents KV Cache and transposed Key matrices (K0T, K1T).

* Gray: Represents other intermediate matrices and operations.

* Purple: Represents the flow of "more tokens".

### Detailed Analysis

* **MHA Module (Top):**

* Input A is multiplied by W0K.

* W0V, W0Q are weight matrices.

* KV Cache stores Key and Value matrices.

* Q0K0T is the product of Query and Transposed Key matrices.

* Softmax is applied to Q0K0T.

* The output of the attention module is B0.

* B0 is multiplied by W0B.

* **MHA Module (Bottom):**

* Input A is multiplied by W1K.

* W1V, W1Q are weight matrices.

* KV Cache stores Key and Value matrices.

* Q1K1T is the product of Query and Transposed Key matrices.

* Softmax is applied to Q1K1T.

* The output of the attention module is B1.

* B1 is multiplied by W1B.

* **MLP Module (Top):**

* C0 is the input to the MLP.

* C0 is multiplied by W0C.

* D0 is the intermediate output.

* D0 is multiplied by W0D.

* E0 is the output before the final layer.

* E0 is added to the output of the AllReduce operation to produce E.

* **MLP Module (Bottom):**

* C1 is the input to the MLP.

* C1 is multiplied by W1C.

* D1 is the intermediate output.

* D1 is multiplied by W1D.

* E1 is the output before the final layer.

* E1 is added to the output of the AllReduce operation to produce E.

* **AllReduce:**

* The AllReduce operation combines the outputs of the two parallel paths in both the MHA and MLP modules.

* The output of the AllReduce operation is added to E0 and E1 to produce the final output E.

* **"more tokens"**:

* A purple arrow indicates the flow of "more tokens" from the input A to the Query weight matrices (W0Q, W1Q).
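
The per-path attention steps listed above (project A, form the Query-Key product, apply Softmax, produce B0/B1, multiply by W0B/W1B, then combine) can be sketched in plain Python. This is a minimal illustration, not the diagram's actual implementation: the toy weights from the hypothetical `make` helper, the tensor sizes, and the `1/sqrt(d)` scaling are all assumptions, and the final combination is shown as a simple elementwise sum of the two paths.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax_rows(m):
    """Apply a numerically stable softmax to each row."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

def attention_head(a, w_q, w_k, w_v):
    """Return B_i = softmax(Q_i K_i^T / sqrt(d)) V_i for one path."""
    q, k, v = matmul(a, w_q), matmul(a, w_k), matmul(a, w_v)
    scale = math.sqrt(len(q[0]))  # assumed scaling, not shown in the diagram
    k_t = [list(col) for col in zip(*k)]
    scores = [[s / scale for s in row] for row in matmul(q, k_t)]
    return matmul(softmax_rows(scores), v)

def make(rows, cols, seed):
    """Deterministic toy values (hypothetical, not taken from the diagram)."""
    return [[((seed + 3 * i + 5 * j) % 7 - 3) / 4 for j in range(cols)]
            for i in range(rows)]

a = make(3, 4, 1)  # input A: 3 tokens, hidden size 4
heads = []
for i in range(2):
    b_i = attention_head(a, make(4, 2, 10 + i), make(4, 2, 20 + i), make(4, 2, 30 + i))
    heads.append((b_i, make(2, 4, 40 + i)))  # pair (B_i, W_i^B)

# Per-path projection B_i W_i^B, followed by an elementwise sum across paths.
partials = [matmul(b_i, w_b) for b_i, w_b in heads]
c = [[x + y for x, y in zip(r0, r1)] for r0, r1 in zip(*partials)]

# The same result from concatenating B_0, B_1 and stacking W_0^B, W_1^B.
b_cat = [r0 + r1 for r0, r1 in zip(heads[0][0], heads[1][0])]
w_b_full = heads[0][1] + heads[1][1]
c_ref = matmul(b_cat, w_b_full)
```

The comparison between `c` and `c_ref` shows why the two paths can run independently: summing the per-path products gives the same tensor as one large projection over the concatenated attention outputs.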

### Key Observations

* The diagram illustrates a parallel processing architecture with two identical paths in both the MHA and MLP modules.

* The AllReduce operation is used to combine the outputs of the parallel paths.

* The MHA module consists of a linear layer and an attention module.

* The MLP module consists of multiple linear layers.

### Interpretation

The diagram provides a high-level overview of the architecture and data flow in a Multi-Head Attention (MHA) module and a Multi-Layer Perceptron (MLP) module. The MHA module is used to capture relationships between different parts of the input sequence, while the MLP module is used to perform non-linear transformations on the data. The parallel processing architecture and the AllReduce operation are used to improve the efficiency and scalability of the model. The "more tokens" flow suggests that the query matrices are influenced by additional input tokens, potentially allowing the model to incorporate more contextual information.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Transformer Block Architecture

### Overview

The image depicts a diagram of a Transformer block architecture, specifically illustrating the Multi-Head Attention (MHA) and Multi-Layer Perceptron (MLP) components within two sequential layers. The diagram shows the flow of data through these layers, including linear transformations, attention mechanisms, and residual connections. The diagram is segmented into four main sections: Linear Layer, Attention Module, Linear Layer, and Linear Layer.

### Components/Axes

The diagram features several key components:

* **Input:** Represented by 'A' and 'more tokens' flowing into the first Linear Layer.

* **Linear Layers:** Represented by green boxes with labels like W<sub>0</sub><sup>V</sup>, W<sub>0</sub><sup>K</sup>, W<sub>0</sub><sup>Q</sup>, W<sub>1</sub><sup>V</sup>, W<sub>1</sub><sup>K</sup>, W<sub>1</sub><sup>Q</sup>, W<sub>B</sub><sup>C</sup>, W<sub>C</sub><sup>D</sup>, W<sub>D</sub><sup>P</sup>, W<sub>P</sub><sup>E</sup>.

* **Attention Module:** Contains Key (K), Value (V), and Query (Q) matrices, along with a Softmax function. A 'KV Cache' is also present.

* **MLP:** Consists of multiple Linear Layers and AllReduce operations.

* **Residual Connections:** Represented by pink lines with '+' symbols, indicating addition.

* **Output:** Represented by 'E'.

* **AllReduce:** Blue arrows indicating the AllReduce operation.

* **Labels:** W<sub>0</sub><sup>V</sup>, W<sub>0</sub><sup>K</sup>, W<sub>0</sub><sup>Q</sup>, W<sub>1</sub><sup>V</sup>, W<sub>1</sub><sup>K</sup>, W<sub>1</sub><sup>Q</sup>, W<sub>B</sub><sup>C</sup>, W<sub>C</sub><sup>D</sup>, W<sub>D</sub><sup>P</sup>, W<sub>P</sub><sup>E</sup>, K<sub>0</sub>, Q<sub>0</sub>, V<sub>0</sub>, K<sub>1</sub>, Q<sub>1</sub>, V<sub>1</sub>, B<sub>0</sub>, B<sub>1</sub>, C, D, E, A, E<sub>0</sub>.

### Detailed Analysis or Content Details

The diagram illustrates the data flow as follows:

1. **First Layer:**

* Input 'A' and 'more tokens' are fed into the first Linear Layer, producing W<sub>0</sub><sup>V</sup>, W<sub>0</sub><sup>K</sup>, and W<sub>0</sub><sup>Q</sup>.

* These outputs are used to calculate attention weights via Q<sub>0</sub>K<sub>0</sub><sup>T</sup> and a Softmax function.

* The attention weights are applied to V<sub>0</sub>, resulting in output 'B<sub>0</sub>'.

* 'B<sub>0</sub>' is then passed through a series of Linear Layers (W<sub>B</sub><sup>C</sup>, W<sub>C</sub><sup>D</sup>, W<sub>D</sub><sup>P</sup>, W<sub>P</sub><sup>E</sup>) with AllReduce operations in between, ultimately producing output 'E<sub>0</sub>'.

* A residual connection adds 'A' to 'E<sub>0</sub>', resulting in 'E'.

2. **Second Layer:**

* The output 'E' from the first layer is fed into the second Linear Layer, producing W<sub>1</sub><sup>V</sup>, W<sub>1</sub><sup>K</sup>, and W<sub>1</sub><sup>Q</sup>.

* Similar to the first layer, attention weights are calculated using Q<sub>1</sub>K<sub>1</sub><sup>T</sup> and a Softmax function.

* The attention weights are applied to V<sub>1</sub>, resulting in output 'B<sub>1</sub>'.

* 'B<sub>1</sub>' is then passed through a series of Linear Layers (W<sub>B</sub><sup>C</sup>, W<sub>C</sub><sup>D</sup>, W<sub>D</sub><sup>P</sup>, W<sub>P</sub><sup>E</sup>) with AllReduce operations in between, ultimately producing output 'E'.

* A residual connection adds 'E' to 'E', resulting in 'E'.

The 'KV Cache' is shown connected to both V<sub>0</sub> and V<sub>1</sub>, suggesting it stores key-value pairs for efficient attention calculation. The AllReduce operations are indicated by blue arrows and are applied after each Linear Layer within the MLP.
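
To make the role of the KV Cache concrete, here is a small sketch of single-head decoding in which each new token's key and value are appended to a cache and reused on later steps. The `KVCache` class, the weight values, and the `1/sqrt(d)` scaling are illustrative assumptions; the diagram itself does not specify how the cache is implemented.

```python
import math

def vecmat(x, w):
    """Multiply a row vector by a matrix stored as a list of rows."""
    return [sum(xi * wij for xi, wij in zip(x, col)) for col in zip(*w)]

def attend(q, keys, values):
    """Softmax-weighted sum of the values for a single query vector."""
    scale = math.sqrt(len(q))  # assumed scaling
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [sum(e / total * v[j] for e, v in zip(exps, values))
            for j in range(len(values[0]))]

class KVCache:
    """Hypothetical cache holding one key and one value vector per seen token."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, x, w_q, w_k, w_v):
        # Only the new token is projected; earlier keys/values are reused.
        self.keys.append(vecmat(x, w_k))
        self.values.append(vecmat(x, w_v))
        return attend(vecmat(x, w_q), self.keys, self.values)

# Toy weights and tokens (hidden size 3, head size 2); values are made up.
w_q = [[0.5, -0.25], [0.0, 0.75], [-0.5, 0.25]]
w_k = [[0.25, 0.5], [-0.75, 0.0], [0.5, -0.5]]
w_v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
tokens = [[1.0, 0.0, 2.0], [0.5, -1.0, 0.0], [0.0, 1.5, 1.0]]

cache = KVCache()
outputs = [cache.step(x, w_q, w_k, w_v) for x in tokens]

# Reference: recompute every key and value from scratch for the last token.
all_keys = [vecmat(x, w_k) for x in tokens]
all_values = [vecmat(x, w_v) for x in tokens]
recomputed = attend(vecmat(tokens[-1], w_q), all_keys, all_values)
```

The cached output for the last token matches the full recomputation, while each step projects only one token instead of the whole sequence.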

### Key Observations

* The diagram highlights the repeated structure of the Transformer block, with the same operations being applied in multiple layers.

* The use of residual connections is crucial for enabling the training of deep networks.

* The AllReduce operations suggest a distributed training setup.

* The 'KV Cache' is a key optimization for handling long sequences.

### Interpretation

This diagram illustrates the core architecture of a Transformer block, a fundamental building block of modern natural language processing models. The Multi-Head Attention mechanism allows the model to attend to different parts of the input sequence, while the MLP provides non-linear transformations. The residual connections and AllReduce operations are essential for training and scaling these models. The diagram demonstrates how information flows through the block, highlighting the key components and their interactions. The presence of the KV Cache suggests an optimization for handling long sequences, which is crucial for tasks like machine translation and text generation. The diagram is a high-level representation and does not include details about the specific dimensions or parameters of the linear layers. It focuses on the overall structure and data flow within the Transformer block.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: Transformer Layer Architecture with Parallel Attention and MLP Blocks

### Overview

This image is a technical schematic diagram illustrating the internal architecture of a transformer-based neural network layer. It specifically depicts a parallelized design where the Multi-Head Attention (MHA) and Multi-Layer Perceptron (MLP) computations are processed concurrently, likely across different devices or cores, as indicated by the "AllReduce" communication operations. The diagram uses a flowchart style with boxes representing data tensors or matrices, circles with crosses representing matrix multiplication operations, and arrows indicating data flow.

### Components/Axes

The diagram is divided into two primary horizontal sections, separated by a dashed line, representing two parallel processing streams (e.g., for two different devices or model shards).

**1. Top Section (Stream 0):**

* **Header Label:** "Multi-Head Attention (MHA)" on the left, "Multi-Layer Perceptron (MLP)" on the right.

* **Input:** A gray box labeled **`A`** (input activations). An arrow points from it with the text "more tokens".

* **MHA Block:**

* Three parallel branches for Query (Q), Key (K), and Value (V) projections.

* **Weight Matrices (Green):** `W_0^K`, `W_0^V`, `W_0^Q`.

* **Projected Tensors (Gray):** `K_0`, `V_0`, `Q_0`.

* **KV Cache:** A pink box labeled "KV Cache" is associated with `K_0` and `V_0`.

* **Attention Operation:** `Q_0` and `K_0` are multiplied (`Q_0 K_0^T`), followed by a **`Softmax`** operation (vertical gray box). The result is multiplied with `V_0`.

* **Output Tensor (Gray):** `B_0`.

* **MLP Block:**

* **First Linear Layer:** `B_0` is multiplied by a green weight matrix `W_0^B`, resulting in tensor `C_0`.

* **AllReduce Operation:** A large, double-headed blue arrow labeled **`AllReduce`** connects `C_0` in the top stream to `C_1` in the bottom stream, indicating a synchronization/communication step.

* **Second Linear Layer:** The synchronized `C_0` is multiplied by green weight matrix `W_0^C`, resulting in tensor `D_0`.

* **Third Linear Layer:** `D_0` is multiplied by green weight matrix `W_0^D`, resulting in tensor `E_0`.

* **Output:** The final tensor is labeled **`E`**.

**2. Bottom Section (Stream 1):**

* This section is structurally identical to the top section, with all subscript indices changed from `0` to `1`.

* **Weight Matrices:** `W_1^K`, `W_1^V`, `W_1^Q`, `W_1^B`, `W_1^C`, `W_1^D`.

* **Tensors:** `A`, `K_1`, `V_1`, `Q_1`, `B_1`, `C_1`, `D_1`, `E_1`, `E`.

* **Operations:** Identical matrix multiplications and `Softmax`.

* **AllReduce Operation:** The same blue `AllReduce` arrow connects `C_1` to `C_0`.

**3. Footer Labels (Bottom of Diagram):**

A series of labels aligned with the processing stages from left to right:

* `Linear Layer` (under the initial projection from `A`)

* `Attention Module` (under the QKV operations)

* `Linear Layer` (under the `W_0^B`/`W_1^B` multiplication)

* `Linear Layer` (under the `W_0^C`/`W_1^C` multiplication)

* `Linear Layer` (under the `W_0^D`/`W_1^D` multiplication)

### Detailed Analysis

* **Data Flow & Parallelism:** The diagram explicitly shows a model parallelism strategy. The input `A` is duplicated to both streams. The MHA and initial MLP linear layer (`W^B`) are computed independently on each stream. The critical synchronization point is the `AllReduce` operation on the intermediate tensor `C` (output of the first MLP linear layer). This operation likely sums or averages the `C_0` and `C_1` tensors across streams before proceeding to the subsequent MLP layers (`W^C`, `W^D`). The final output `E` is also shown as a single entity, suggesting it is gathered or replicated after the final computation.

* **KV Cache:** The presence of the "KV Cache" label next to `K_0` and `V_0` (and `K_1`, `V_1`) indicates this architecture is optimized for autoregressive inference, where previously computed Key and Value vectors are stored to avoid recomputation.

* **Matrix Dimensions (Inferred):** The green weight matrices (`W`) are depicted as rectangular blocks, suggesting they are 2D matrices. The gray tensor boxes (`A`, `B`, `C`, etc.) are also rectangular, implying they are 2D tensors (e.g., [sequence_length, hidden_dimension]). The `Q K^T` operation results in a square-like box, consistent with an attention score matrix of shape [seq_len, seq_len].

* **Color Coding:**

* **Green:** Trainable weight matrices/parameters.

* **Gray:** Data activations/tensors and core operations (Softmax).

* **Blue:** Communication operation (AllReduce).

* **Pink:** Cached data (KV Cache).
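
As a rough illustration of the synchronization step described above, the following sketch simulates a sum-based AllReduce over two per-stream tensors. The function name `all_reduce_sum` and the sample values are hypothetical, and the choice of summation (rather than averaging) is an assumption; real systems would use a collective-communication library rather than a local loop.

```python
def all_reduce_sum(per_device):
    """Simulate AllReduce: every device ends up with the elementwise sum."""
    total = [[sum(vals) for vals in zip(*rows)] for rows in zip(*per_device)]
    # Each device receives its own copy of the reduced tensor.
    return [[row[:] for row in total] for _ in per_device]

# Partial results held by stream 0 and stream 1 (made-up values).
c0 = [[1, 2], [3, 4]]
c1 = [[10, 20], [30, 40]]

r0, r1 = all_reduce_sum([c0, c1])
```

After the call, both streams hold the same tensor, which is what allows the later layers to proceed from identical inputs.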

### Key Observations

1. **Symmetrical Parallel Design:** The two streams are perfect mirrors, indicating a balanced split of the model's hidden dimension or attention heads across two processing units.

2. **Synchronization Point:** The `AllReduce` is placed after the first linear transformation in the MLP block. This is a specific design choice for pipeline or tensor parallelism, ensuring the streams have the same data before the final nonlinear transformations.

3. **No Residual Connections Shown:** The diagram focuses on the core computational blocks (Attention and MLP) and their parallelization. Standard transformer residual connections (add & norm) are not depicted in this specific schematic.

4. **Linear Layer Proliferation:** The MLP is explicitly broken down into three sequential linear layers (`W^B`, `W^C`, `W^D`), which is a more granular view than the typical "two linear layers with an activation in between" description. This may represent a specific implementation or a more detailed breakdown of a single feed-forward block.

### Interpretation

This diagram provides a detailed, low-level view of a **distributed transformer inference engine**. It answers the question: "How is a single transformer layer split and executed across multiple devices to reduce memory footprint and/or increase speed?"

The key insight is the **interleaving of computation and communication**. The devices work independently on their portions of the attention and the first part of the MLP. They must then synchronize (`AllReduce`) to combine their partial results before completing the MLP computation. This pattern is characteristic of **tensor parallelism** (specifically, splitting the MLP layer's hidden dimension).
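
One common way to realize tensor parallelism over an MLP's hidden dimension is to split the first weight matrix by columns and the second by rows, so that each device applies the activation locally and only the final partial outputs need to be summed. The sketch below assumes that scheme with a ReLU activation and hypothetical names (`w_up`, `w_down`); it is not confirmed to match the exact layer split or AllReduce placement in the diagram.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def relu(m):
    """Elementwise ReLU (assumed activation)."""
    return [[max(0, v) for v in row] for row in m]

def add(a, b):
    """Elementwise sum, standing in for a sum-based AllReduce."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

# Toy integer data so the comparison below is exact (values are made up).
x = [[1, -2, 3, 0], [0, 1, -1, 2]]                                   # 2 x 4
w_up = [[(i * 3 + j * 2) % 5 - 2 for j in range(6)] for i in range(4)]   # 4 x 6
w_down = [[(i + j * 3) % 4 - 1 for j in range(4)] for i in range(6)]     # 6 x 4

# Unsharded reference computation.
reference = matmul(relu(matmul(x, w_up)), w_down)

# Device 0 gets hidden units 0..2, device 1 gets hidden units 3..5.
up0, up1 = [r[:3] for r in w_up], [r[3:] for r in w_up]   # column split
down0, down1 = w_down[:3], w_down[3:]                     # row split

# Each device works independently, including the elementwise activation.
partial0 = matmul(relu(matmul(x, up0)), down0)
partial1 = matmul(relu(matmul(x, up1)), down1)

# A single reduction combines the partial outputs.
sharded = add(partial0, partial1)
```

Because the activation is elementwise, it commutes with the column split, so the sharded result equals the unsharded one with just one communication step at the end.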

The inclusion of the "KV Cache" label strongly suggests this architecture is designed for **efficient autoregressive generation** (e.g., for large language models), where minimizing latency and memory bandwidth is critical. The parallelization helps manage the large memory requirement of both the model weights and the growing KV cache for long sequences.

**Notable Anomaly/Design Choice:** The placement of the `AllReduce` *within* the MLP block, rather than after the entire Attention+MLP layer, is significant. It implies that the MLP's first linear layer (`W^B`) is sharded, and its output must be aggregated before the subsequent non-linearity and final linear layers. This is a more communication-intensive but potentially more memory-efficient strategy than other parallelism schemes.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Multi-Head Attention (MHA) and Multi-Layer Perceptron (MLP) Architecture

### Overview

The diagram illustrates a neural network architecture combining **Multi-Head Attention (MHA)** and **Multi-Layer Perceptron (MLP)** components. It shows data flow through linear layers, attention mechanisms, and perceptron layers, with explicit attention to distributed training operations like **AllReduce**.

### Components/Axes

- **Key Elements**:

- **Linear Layers**: Input/output transformations (e.g., `A → W_0^K`, `E_0 → E`).

- **Attention Module**:

- **Queries (Q)**: `Q_0`, `Q_1` (computed via `W_0^Q`, `W_1^Q`).

- **Keys (K)**: `K_0`, `K_1` (computed via `W_0^K`, `W_1^K`).

- **Values (V)**: `V_0`, `V_1` (computed via `W_0^V`, `W_1^V`).

- **Cache**: `K^V` (stores key-value pairs for efficiency).

- **Softmax**: Applied to attention scores.

- **MLP Layers**:

- **Bias Terms**: `B_0`, `B_1` (added to linear layer outputs).

- **Weight Matrices**: `W_0^B`, `W_1^B` (for bias adjustments).

- **Dense Layers**: `C_0`, `C_1`, `D_0`, `D_1`, `E_0`, `E_1` (intermediate transformations).

- **AllReduce**: Distributed communication operations between layers (e.g., `C_0 → C_1`).

- **Color Coding**:

- **Green**: Cache (`K^V`).

- **Pink**: Key matrices (`K_0`, `K_1`).

- **Gray**: Query matrices (`Q_0`, `Q_1`).

- **Blue**: Bias terms (`B_0`, `B_1`).

- **White/Black**: Linear layer weights and outputs.

### Detailed Analysis

1. **Input Flow**:

- Input tokens `A` are processed through linear layers with weights `W_0^K`, `W_0^V`, `W_0^Q` to generate queries, keys, and values.

- Additional tokens (`more tokens`) are appended to the input.

2. **Attention Mechanism**:

- Queries (`Q_0`, `Q_1`) and keys (`K_0`, `K_1`) are computed via linear transformations.

- Attention scores are derived from `Q` and `K`, cached (`K^V`), and passed through softmax.

- Values (`V_0`, `V_1`) are combined with attention scores to produce context-aware outputs.

3. **MLP Processing**:

- Outputs from attention are fed into MLP layers with weights `W_0^B`, `W_1^B` and biases `B_0`, `B_1`.

- Intermediate layers (`C_0`, `C_1`, `D_0`, `D_1`, `E_0`, `E_1`) apply dense transformations.

- **AllReduce** operations synchronize gradients across distributed devices (e.g., `C_0 → C_1`).

4. **Layer Structure**:

- **Top Path**: Represents the first attention and MLP layer (`0`).

- **Bottom Path**: Represents the second attention and MLP layer (`1`).

- Arrows indicate data flow and gradient synchronization.

### Key Observations

- **Distributed Training**: AllReduce operations suggest the model is designed for multi-device training.

- **Hierarchical Processing**: Attention layers capture global context, while MLP layers refine features locally.

- **Efficiency**: Caching (`K^V`) reduces redundant computations in attention mechanisms.

### Interpretation

This architecture resembles a **transformer-based model** optimized for distributed training. The combination of attention and MLP layers enables the network to:

- Capture long-range dependencies via attention.

- Process sequential data through perceptron layers.

- Scale efficiently across devices using AllReduce.

The diagram emphasizes modularity, with clear separation between attention and MLP components. The use of cached keys/values and distributed operations highlights a focus on computational efficiency and scalability, typical in large language models or vision transformers.
