EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

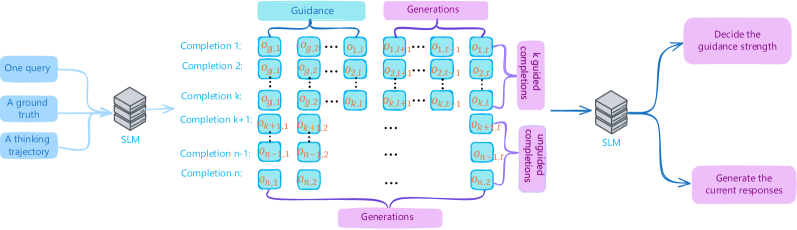

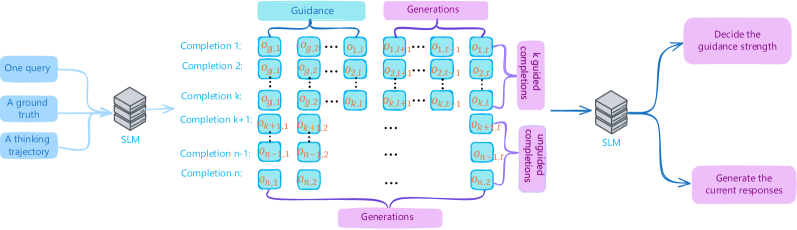

## Diagram: AI Response Generation with Guidance Mechanism

### Overview

The image is a flowchart of a response-generation pipeline built around a Small Language Model (SLM) with a guidance mechanism. Reading left to right, three inputs feed an SLM block, which produces `n` guided completions, each paired with a generation. The generations are then compared, and a second SLM block decides the guidance strength and generates the current responses.

### Components

The diagram is organized into several distinct regions and components:

**1. Input Region (Far Left):**

* Three blue, rounded rectangular boxes stacked vertically.
* **Labels (from top to bottom):**
    * `One query`
    * `A ground truth`
    * `A thinking trajectory`
* These three inputs are connected by lines converging into the first processing block.

**2. First Processing Block (Center-Left):**

* A gray, 3D-styled box labeled `SLM` (Small Language Model).

* It receives the three inputs from the left.

**3. Generation & Guidance Region (Center):**

This is the core of the diagram, split into two parallel vertical sections.

* **Left Section - "Guidance":** A light blue header box labeled `Guidance`. Below it is a column of light blue, rounded rectangular boxes representing different "Completions."
    * **Labels (from top to bottom):**
        * `Completion 1:`
        * `Completion 2:`
        * `Completion k:`
        * `Completion k+1:`
        * `Completion n-1:`
        * `Completion n:`
    * Each "Completion" label is followed by a sequence of mathematical symbols in small boxes (e.g., `η₁₁`, `η₁₂`, ..., `η₁ₘ` for Completion 1). The sequences vary in length and in their `η` subscripts. The symbols for Completion `k+1` are highlighted in orange.
* **Right Section - "Generations":** A light purple header box labeled `Generations`. Below it is a corresponding column of light purple, rounded rectangular boxes.
    * These boxes contain sequences of symbols (e.g., `g₁₁`, `g₁₂`, ..., `g₁ₘ` for the first row) that align horizontally with the "Guidance" completions.
    * The sequences in the row corresponding to `Completion k+1` are also highlighted in orange.
* **Connections:** Horizontal lines connect each "Guidance" completion box to its corresponding "Generations" box. A large purple bracket at the bottom labeled `Generations` encompasses all the purple generation boxes.

**4. Comparison & Second Processing Block (Center-Right):**

* Two vertical, purple rectangular boxes labeled `Compare generations` are positioned to the right of the "Generations" column. They appear to aggregate or process the outputs from the generation stage.

* Lines from these comparison boxes converge into a second gray, 3D-styled box labeled `SLM`.

**5. Output Region (Far Right):**

* Two pink, rounded rectangular boxes stacked vertically, connected by lines from the second `SLM` block.
* **Labels (from top to bottom):**
    * `Decide the guidance strength`
    * `Generate the current responses`

### Detailed Analysis

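The diagram depicts a left-to-right pipeline with five stages. No feedback arrow is drawn, but the "guidance strength" output suggests the pipeline may be applied iteratively: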

1. **Input Stage:** A single query, its associated ground truth, and a "thinking trajectory" (likely a chain-of-thought or reasoning path) are fed into an initial SLM.

2. **Parallel Generation Stage:** The SLM produces `n` different "Completions" (labeled 1 through n). Each completion is a sequence represented by `η` variables with double subscripts; the first index appears to identify the completion and the second the position within it, so `η₁₁` would be the first token or step of the first completion. Each completion is paired with a "Generation," a sequence represented by `g` variables indexed the same way. The highlighting of row `k+1` suggests it serves as a focal or example row.

3. **Guidance Application:** The "Guidance" column implies that some form of steering or conditioning is applied to the generation process, influencing the `η` sequences.

4. **Comparison and Decision:** The generated sequences are compared (via the "Compare generations" blocks). This comparison output is fed into a second SLM.

5. **Final Output:** The second SLM performs two tasks: it decides on the appropriate "guidance strength" for future iterations and produces the final "current responses."
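The first four stages above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the method behind the diagram: `toy_slm`, `Row`, `generate_rows`, `compare_generations`, and the `"<?>"` placeholder token are all hypothetical names, and the "model" simply copies reference tokens with a probability equal to the guidance strength. It only shows how guided completions and unguided generations might be paired row by row and then compared.

```python
import random
from dataclasses import dataclass


@dataclass
class Row:
    """One row of the diagram: a guided completion and its paired generation."""
    guided: list[str]      # plays the role of eta_i1 ... eta_im
    generated: list[str]   # plays the role of g_i1 ... g_im


def toy_slm(reference: list[str], strength: float, rng: random.Random) -> list[str]:
    """Hypothetical SLM stand-in: emit each reference token with probability
    `strength`, otherwise emit the placeholder token "<?>"."""
    return [tok if rng.random() < strength else "<?>" for tok in reference]


def generate_rows(query: str, ground_truth: str, trajectory: str,
                  n: int, strength: float, seed: int = 0) -> list[Row]:
    """Produce n rows from the three inputs. The toy model ignores `query`;
    a real SLM would condition on it."""
    rng = random.Random(seed)
    reference = trajectory.split() + [ground_truth]
    rows = []
    for _ in range(n):
        guided = toy_slm(reference, strength, rng)
        generated = toy_slm(reference, 0.0, rng)  # no guidance at all
        rows.append(Row(guided, generated))
    return rows


def compare_generations(rows: list[Row], ground_truth: str) -> tuple[float, float]:
    """Return (guided accuracy, unguided accuracy), counting a sequence as
    correct when its last token equals the ground truth."""
    guided_hits = sum(r.guided[-1] == ground_truth for r in rows)
    free_hits = sum(r.generated[-1] == ground_truth for r in rows)
    return guided_hits / len(rows), free_hits / len(rows)


rows = generate_rows("What is 2 + 3?", "5", "add 2 and 3", n=4, strength=1.0)
guided_acc, free_acc = compare_generations(rows, "5")
print(guided_acc, free_acc)
```

With full guidance the toy model reproduces the reference exactly, while the unguided pass never does. A gap like this between the two columns is the kind of signal a controller could use when deciding the guidance strength.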

### Key Observations

* **Dual SLM Architecture:** The process uses two instances (or stages) of an SLM—one for initial generation and one for meta-decision making.

* **Parallel Processing:** The system generates multiple completions (`n` of them) in parallel, suggesting a strategy like beam search, best-of-n sampling, or ensemble generation.

* **Explicit Guidance Mechanism:** The diagram explicitly separates "Guidance" from "Generations," indicating that guidance is a distinct, controllable input or modifier to the generation process.

* **Iterative Potential:** The output "Decide the guidance strength" suggests the system can adaptively tune its own guidance parameter for subsequent steps, creating a feedback loop.

* **Mathematical Notation:** The use of `η` (eta) and `g` variables with double subscripts provides a formal, mathematical representation of the token sequences, common in machine learning literature.
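One plausible reading of the double-subscript notation is written out below. The diagram does not define the symbols, so this is an assumption: the first index is taken to select the row, the second the position within the sequence, and the per-row length $m_i$ is introduced here only because the sequences are drawn with different lengths.

```latex
\begin{align*}
\text{Guidance row } i:    \quad & \eta_i = (\eta_{i1}, \eta_{i2}, \dots, \eta_{i m_i}), \\
\text{Generation row } i:  \quad & g_i = (g_{i1}, g_{i2}, \dots, g_{i m_i}),
\qquad i = 1, \dots, n .
\end{align*}
```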

### Interpretation

This diagram illustrates a sophisticated framework for improving the quality and controllability of text generation from a Small Language Model. The core innovation appears to be an **adaptive guidance loop**.

* **What it demonstrates:** Instead of generating a single response, the system creates a set of `n` candidate completions. A separate step evaluates these candidates ("Compare generations"), and a second model pass determines how strongly to guide the model ("guidance strength"), presumably for the next iteration. This resembles a model critiquing its own outputs and adjusting how strongly it is steered, rather than a fixed, one-shot decoding setup.

* **Relationship between elements:** The inputs (query, ground truth, thinking trajectory) provide the foundation. The first SLM explores the solution space by generating multiple possibilities. The guidance mechanism steers this exploration. The comparison and second SLM act as a controller or manager, optimizing the guidance strategy based on the observed outputs.

* **Notable implications:** This approach could lead to more reliable and accurate responses than single-pass generation, although the diagram itself reports no results. The "thinking trajectory" input is particularly interesting, as it suggests the model is conditioned not just on the desired answer (ground truth) but also on a reasoning process, which may improve its explanations. The adaptive guidance strength resembles a form of **meta-learning**, but the diagram does not show whether or how any model weights are updated.
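To make the idea of an adaptive guidance loop concrete, here is a minimal Python sketch of one possible controller. Everything in it is an assumption made for illustration: the diagram does not say how the guidance strength is chosen, and `update_strength`, `run_loop`, the `target` accuracy, and the fixed `step` size are hypothetical. The rule simply raises the strength when unguided generations are mostly wrong and lowers it when they are mostly right.

```python
def update_strength(strength: float, unguided_acc: float,
                    target: float = 0.5, step: float = 0.1) -> float:
    """Raise guidance when unguided accuracy is below `target`, lower it
    otherwise, and clamp the result to the range [0, 1]."""
    if unguided_acc < target:
        strength += step
    else:
        strength -= step
    # Round to avoid accumulating floating-point noise across iterations.
    return round(min(1.0, max(0.0, strength)), 2)


def run_loop(accuracies: list[float], strength: float = 0.5) -> list[float]:
    """Apply the update once per observed accuracy and return every strength
    value visited, starting with the initial one."""
    history = [strength]
    for acc in accuracies:
        strength = update_strength(strength, acc)
        history.append(strength)
    return history


# Two rounds where unguided generations fail, then one where they succeed.
history = run_loop([0.0, 0.0, 1.0], strength=0.5)
print(history)
```

A fixed step is the simplest choice. A real system might instead let the second SLM pass predict the strength directly, which is closer to what the "Decide the guidance strength" box suggests.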
