## Diagram: Multi-Stage Language Model Response Generation Process

### Overview

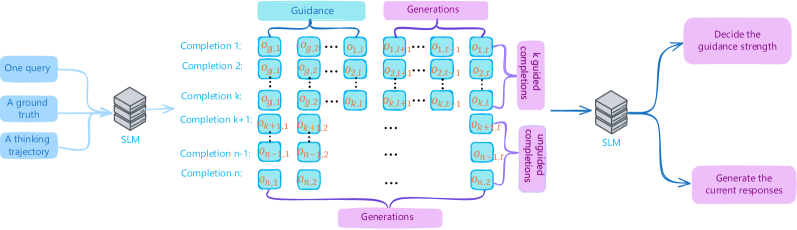

The diagram shows a multi-stage process for generating responses with a language model, labeled SLM in the figure. Three inputs (a query, a ground truth, and a thinking trajectory) pass through two sequential SLM stages, which combine guided and unguided completions and an adjustable guidance strength to produce the final responses.

### Components/Axes

1. **Inputs**:

- **One query**: Text input for the model.

- **A ground truth**: Reference for correctness.

- **A thinking trajectory**: Internal reasoning path.

2. **First SLM**: Processes inputs to generate initial completions.

3. **Guided Completions**:

- Labeled as `Completion 1` to `Completion n`, with sub-labels `g.1`, `g.2`, ..., `g.k` (guided).

4. **Unguided Completions**:

- Labeled as `Completion 1` to `Completion n`, with sub-labels `o.1`, `o.2`, ..., `o.n` (unguided).

5. **Second SLM**: Takes both the guided and the unguided completions as input and performs two steps:

- **Decide guidance strength**: Sets how strongly the guidance influences generation.

- **Generate current responses**: Produces the final output.
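The components above can be sketched as a small data flow. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: the names `Inputs`, `Completion`, `build_prompt`, `first_stage`, and `echo_model`, as well as the prompt layout, are hypothetical and introduced only for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Inputs:
    query: str
    ground_truth: str
    thinking_trajectory: str


@dataclass
class Completion:
    label: str    # "g.i" for guided, "o.i" for unguided, as in the diagram
    guided: bool
    text: str


def build_prompt(inputs: Inputs, guided: bool) -> str:
    """Assumed layout: guided prompts append the reference material to the query."""
    if not guided:
        return inputs.query
    return (
        f"{inputs.query}\n"
        f"Reference answer: {inputs.ground_truth}\n"
        f"Reasoning hint: {inputs.thinking_trajectory}"
    )


def first_stage(model: Callable[[str], str], inputs: Inputs, k: int, n: int) -> List[Completion]:
    """Produce k guided completions followed by n unguided ones."""
    guided = [
        Completion(f"g.{i}", True, model(build_prompt(inputs, True)))
        for i in range(1, k + 1)
    ]
    unguided = [
        Completion(f"o.{i}", False, model(build_prompt(inputs, False)))
        for i in range(1, n + 1)
    ]
    return guided + unguided


def echo_model(prompt: str) -> str:
    """Stub standing in for the first SLM: returns the last line of the prompt."""
    return prompt.splitlines()[-1]


inputs = Inputs("What is 2 + 2?", "4", "Add the two numbers.")
completions = first_stage(echo_model, inputs, k=2, n=3)
print([c.label for c in completions])
```

Passing the model as a plain callable keeps the sketch independent of any particular inference library; a real SLM call would replace `echo_model`.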

### Detailed Analysis

- **Guided vs. Unguided Completions**:

- Guided completions (`g.x`) are generated with explicit guidance (e.g., ground truth).

- Unguided completions (`o.x`) are generated without such constraints.

- **Iterative Refinement**:

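- Several completions are generated per query (indexed `g.1` to `g.k` and `o.1` to `o.n`), which suggests that candidates are compared and improved across generations.

- Guidance strength is not fixed in advance; it is adjusted based on prior completions.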

- **Color Coding**:

- **Blue**: Guided completions (`g.x`).

- **Purple**: Unguided completions (`o.x`).
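The diagram does not say how guidance strength is adjusted. One plausible reading, offered purely as an assumption, is that guidance is strengthened when unguided completions rarely match the ground truth and relaxed when they often do. The sketch below illustrates that hypothetical rule; `match_rate`, `decide_guidance_strength`, the exact-match check, the `target` rate, and the `step` size are all illustrative choices rather than anything shown in the figure.

```python
from typing import List


def match_rate(completions: List[str], ground_truth: str) -> float:
    """Fraction of completions that exactly match the ground truth after stripping."""
    if not completions:
        return 0.0
    hits = sum(1 for c in completions if c.strip() == ground_truth.strip())
    return hits / len(completions)


def decide_guidance_strength(
    current: float,
    unguided: List[str],
    ground_truth: str,
    target: float = 0.5,
    step: float = 0.25,
) -> float:
    """Hypothetical rule: raise guidance when unguided accuracy is below target,
    lower it when above, and clamp the result to [0, 1]."""
    rate = match_rate(unguided, ground_truth)
    if rate < target:
        current += step
    elif rate > target:
        current -= step
    return min(1.0, max(0.0, current))


# Only one of three unguided completions is correct, so guidance increases.
print(decide_guidance_strength(0.5, ["3", "5", "4"], "4"))
```

A learned controller, as the second SLM in the diagram may be, could replace this fixed-step rule; the sketch only makes the direction of the adjustment concrete.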

### Key Observations

1. **Dual-Path Processing**:

- The first SLM's outputs form two streams, guided and unguided, which are evaluated in parallel.

2. **Feedback Loop**:

- The second SLM uses both completion types to set guidance strength, which suggests that the amount of guidance adapts to the model's performance.

3. **Hierarchical Structure**:

- Inputs → First SLM → Generations → Second SLM → Final responses.
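The last hop in the flow above, from the second SLM to the final responses, depends on how guidance strength is applied, which the diagram leaves open. One possible interpretation, stated here as an assumption, is that strength controls how much of the thinking trajectory is revealed as a hint. The names `partial_hint` and `build_second_stage_prompt`, and the prompt format, are hypothetical.

```python
import math
from typing import List


def partial_hint(trajectory_steps: List[str], strength: float) -> List[str]:
    """Return the leading share of trajectory steps selected by strength in [0, 1]."""
    strength = min(1.0, max(0.0, strength))
    count = math.ceil(strength * len(trajectory_steps))
    return trajectory_steps[:count]


def build_second_stage_prompt(query: str, trajectory_steps: List[str], strength: float) -> str:
    """Assumed prompt format: the query, followed by the revealed hint steps, if any."""
    hint = partial_hint(trajectory_steps, strength)
    if not hint:
        return query
    return query + "\nHint:\n" + "\n".join(f"- {step}" for step in hint)


steps = ["Identify the operands", "Add them", "State the result", "Check the sum"]
print(build_second_stage_prompt("What is 2 + 2?", steps, 0.5))
```

With a strength of 0 the prompt reduces to the unguided query, and with a strength of 1 the full trajectory is exposed, so the same function covers both ends of the guided and unguided spectrum.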

### Interpretation

This diagram appears to represent a **reinforcement learning framework** for language models, in which guidance (e.g., ground truth) is used to improve response quality over successive generations. Separating guided from unguided completions may let the model balance creativity (unguided) with accuracy (guided). The second SLM acts as a meta-controller that sets the trade-off between these two paths. Generating multiple completions per query (`g.1` to `g.k`, `o.1` to `o.n`) points to an emphasis on error correction, which matters for complex tasks that require reasoning. The diagram contains no numerical data, so it should be read as a conceptual description of the architecture and not as a report of empirical results.