TECHNICAL ASSET FINGERPRINT

76a7fae7cd69720041d6cfcd

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

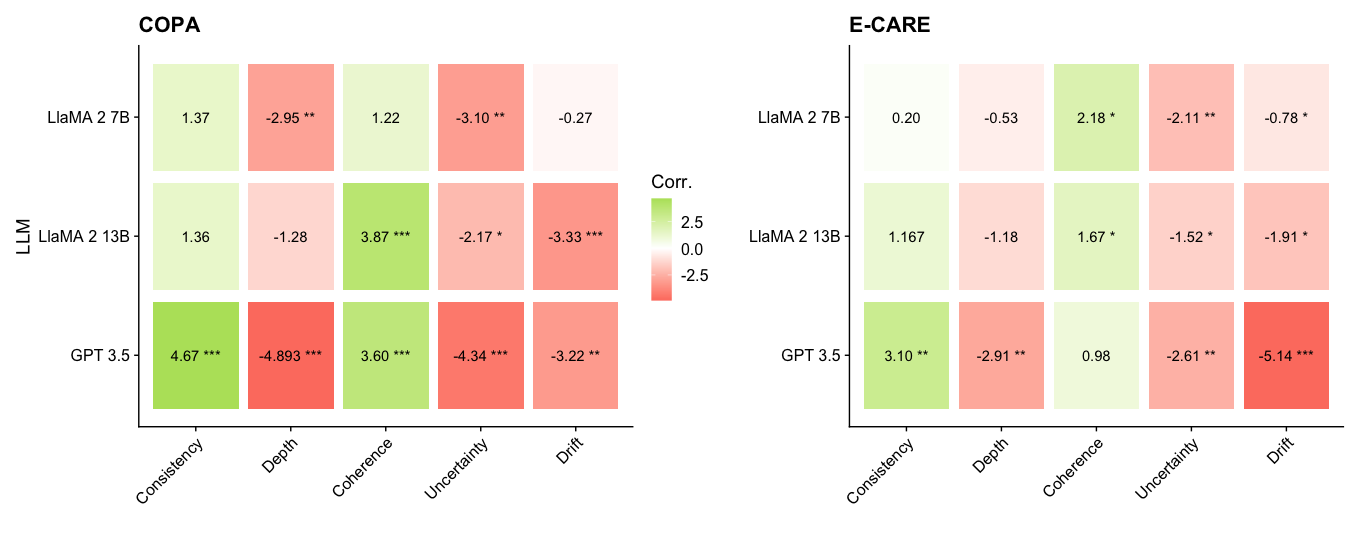

## Heatmap: LLM Performance on COPA and E-CARE

### Overview

The image presents two heatmaps comparing the performance of three Large Language Models (LLMs) - LLaMA 2 7B, LLaMA 2 13B, and GPT 3.5 - on two tasks: COPA and E-CARE. The heatmaps display correlation values between the LLMs and different aspects of the tasks: Consistency, Depth, Coherence, Uncertainty, and Drift. The color intensity represents the strength and direction (positive or negative) of the correlation, with green indicating positive correlation and red indicating negative correlation. Significance levels are indicated by asterisks (*, **, ***).

### Components/Axes

* **Titles:** "COPA" (left heatmap), "E-CARE" (right heatmap)

* **Y-axis Label:** "LLM"

* **Y-axis Categories:** LLaMA 2 7B, LLaMA 2 13B, GPT 3.5

* **X-axis Categories:** Consistency, Depth, Coherence, Uncertainty, Drift

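* **Color Scale (Corr.):**
    * Green: positive correlation; the color bar is labeled up to 2.5
    * White: 0 correlation
    * Red: negative correlation; the color bar is labeled down to -2.5
    * Several cell values fall outside the labeled ±2.5 range (for example 4.67 and -5.14)

* **Significance Levels:**
    * \* : p < 0.05
    * \*\* : p < 0.01
    * \*\*\* : p < 0.001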
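The asterisk thresholds listed above can be written as a small lookup. This is a minimal sketch: the function name `significance_stars` is hypothetical, and the image does not say how its p-values were computed.

```python
def significance_stars(p_value: float) -> str:
    """Return the asterisk marker for a p-value, using the thresholds in the legend."""
    if p_value < 0.001:
        return "***"
    if p_value < 0.01:
        return "**"
    if p_value < 0.05:
        return "*"
    return ""  # not significant at the 0.05 level: the cell carries no marker


# Illustrative p-values only; they are not taken from the figure.
for p in (0.0004, 0.004, 0.03, 0.2):
    print(p, repr(significance_stars(p)))
```

The checks run from the strictest threshold down, so each p-value gets the strongest marker it qualifies for.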

### Detailed Analysis

#### COPA Heatmap

| LLM         | Consistency | Depth        | Coherence  | Uncertainty | Drift       |
|-------------|-------------|--------------|------------|-------------|-------------|
| LLaMA 2 7B  | 1.37        | -2.95\*\*    | 1.22       | -3.10\*\*   | -0.27       |
| LLaMA 2 13B | 1.36        | -1.28        | 3.87\*\*\* | -2.17\*     | -3.33\*\*\* |
| GPT 3.5     | 4.67\*\*\*  | -4.893\*\*\* | 3.60\*\*\* | -4.34\*\*\* | -3.22\*\*   |

* **LLaMA 2 7B:**

* Consistency: 1.37 (light green)

* Depth: -2.95\*\* (red)

* Coherence: 1.22 (light green)

* Uncertainty: -3.10\*\* (red)

* Drift: -0.27 (light red)

* **LLaMA 2 13B:**

* Consistency: 1.36 (light green)

* Depth: -1.28 (light red)

* Coherence: 3.87\*\*\* (green)

* Uncertainty: -2.17\* (light red)

* Drift: -3.33\*\*\* (red)

* **GPT 3.5:**

* Consistency: 4.67\*\*\* (dark green)

* Depth: -4.893\*\*\* (dark red)

* Coherence: 3.60\*\*\* (green)

* Uncertainty: -4.34\*\*\* (dark red)

* Drift: -3.22\*\* (red)

#### E-CARE Heatmap

| LLM         | Consistency | Depth     | Coherence | Uncertainty | Drift       |
|-------------|-------------|-----------|-----------|-------------|-------------|
| LLaMA 2 7B  | 0.20        | -0.53     | 2.18\*    | -2.11\*\*   | -0.78\*     |
| LLaMA 2 13B | 1.167       | -1.18     | 1.67\*    | -1.52\*     | -1.91\*     |
| GPT 3.5     | 3.10\*\*    | -2.91\*\* | 0.98      | -2.61\*\*   | -5.14\*\*\* |

* **LLaMA 2 7B:**

* Consistency: 0.20 (light green)

* Depth: -0.53 (light red)

* Coherence: 2.18\* (light green)

* Uncertainty: -2.11\*\* (light red)

* Drift: -0.78\* (light red)

* **LLaMA 2 13B:**

* Consistency: 1.167 (light green)

* Depth: -1.18 (light red)

* Coherence: 1.67\* (light green)

* Uncertainty: -1.52\* (light red)

* Drift: -1.91\* (light red)

* **GPT 3.5:**

* Consistency: 3.10\*\* (green)

* Depth: -2.91\*\* (red)

* Coherence: 0.98 (light green)

* Uncertainty: -2.61\*\* (red)

* Drift: -5.14\*\*\* (dark red)

### Key Observations

* **COPA:** GPT 3.5 shows the strongest positive correlation with Consistency (4.67) and the strongest negative correlations with Depth (-4.893) and Uncertainty (-4.34). LLaMA 2 13B has the strongest Coherence (3.87) and Drift (-3.33) values, each narrowly ahead of GPT 3.5 (3.60 and -3.22).

* **E-CARE:** GPT 3.5 shows the strongest positive correlation with Consistency, but also the strongest negative correlation with Drift. All models show negative correlations with Depth, Uncertainty, and Drift.

* **Significance:** GPT 3.5 generally has more statistically significant correlations (higher number of asterisks) compared to the LLaMA models.

* **Consistency:** All models show a positive correlation with Consistency in both tasks.

* **Depth, Uncertainty, Drift:** All models show a negative correlation with Depth, Uncertainty, and Drift in both tasks.

* **Coherence:** All models show a positive correlation with Coherence in both tasks. The weakest is GPT 3.5 in E-CARE (0.98), which carries no significance marker.
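The sign pattern described in these observations can be checked mechanically against the transcribed cell values. The sketch below is only illustrative: the names `METRICS`, `VALUES`, and `column_signs` are hypothetical, and the numbers are copied from the tables above with the significance markers dropped.

```python
METRICS = ["Consistency", "Depth", "Coherence", "Uncertainty", "Drift"]

# Cell values transcribed from the COPA and E-CARE tables (markers removed).
VALUES = {
    "COPA": {
        "LLaMA 2 7B": [1.37, -2.95, 1.22, -3.10, -0.27],
        "LLaMA 2 13B": [1.36, -1.28, 3.87, -2.17, -3.33],
        "GPT 3.5": [4.67, -4.893, 3.60, -4.34, -3.22],
    },
    "E-CARE": {
        "LLaMA 2 7B": [0.20, -0.53, 2.18, -2.11, -0.78],
        "LLaMA 2 13B": [1.167, -1.18, 1.67, -1.52, -1.91],
        "GPT 3.5": [3.10, -2.91, 0.98, -2.61, -5.14],
    },
}


def column_signs(values):
    """Map each metric to '+', '-', or 'mixed' across every model and task."""
    signs = {}
    for i, metric in enumerate(METRICS):
        cells = [row[i] for task in values.values() for row in task.values()]
        if all(v > 0 for v in cells):
            signs[metric] = "+"
        elif all(v < 0 for v in cells):
            signs[metric] = "-"
        else:
            signs[metric] = "mixed"
    return signs


signs = column_signs(VALUES)
for metric, sign in signs.items():
    print(f"{metric}: {sign}")
```

No column comes out as `mixed`: Consistency and Coherence are positive in all six rows, and Depth, Uncertainty, and Drift are negative in all six.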

### Interpretation

The heatmaps suggest that GPT 3.5 has the strongest positive association with Consistency in both tasks and, in COPA, a Coherence association nearly as strong as that of LLaMA 2 13B, but it also exhibits the strongest negative correlations with Depth and Uncertainty in both tasks. This could indicate that the outcome being correlated is more tightly coupled to these properties for GPT 3.5 than for the LLaMA models, which show more moderate values overall. The asterisks indicate how reliable each cell is: GPT 3.5 has 9 significant cells out of 10, against 5 for LLaMA 2 7B and 6 for LLaMA 2 13B. The negative correlations with Depth, Uncertainty, and Drift across all models suggest that these aspects of the tasks are challenging for all three LLMs.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

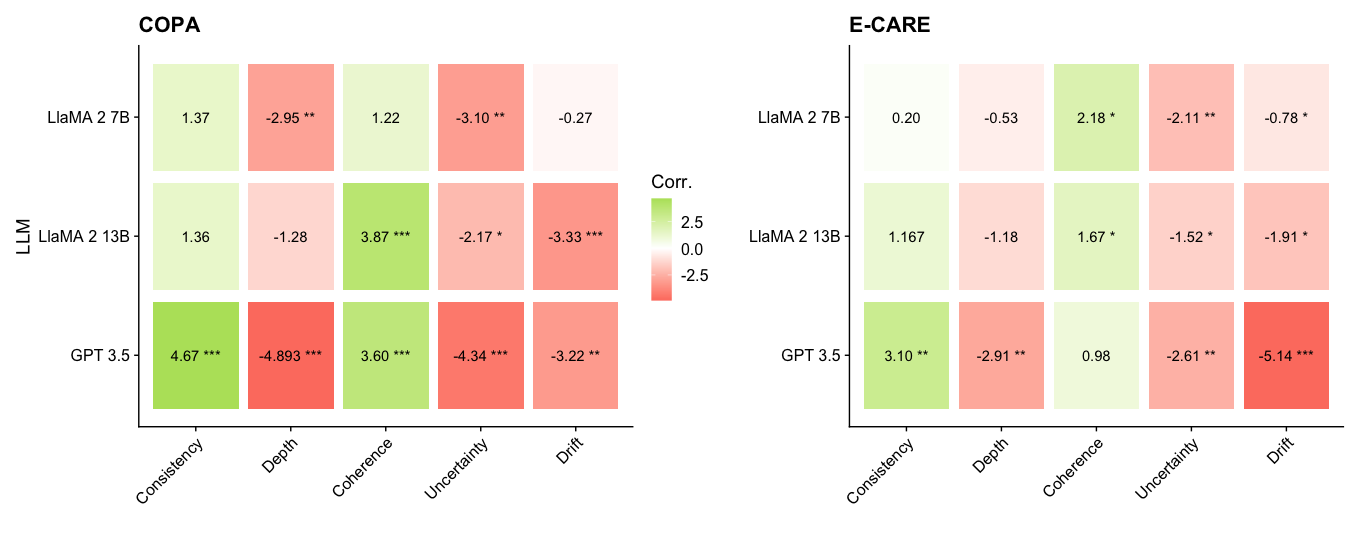

## Heatmap: Correlation Analysis of LLM Performance

### Overview

The image presents two heatmaps displaying correlation coefficients between different Large Language Models (LLMs) – LLaMA 2 7B, LLaMA 2 13B, and GPT 3.5 – and various evaluation metrics. The two heatmaps correspond to two different evaluation datasets: COPA and E-CARE. The color intensity represents the strength and direction of the correlation, with green indicating positive correlation and red indicating negative correlation. Significance levels are indicated by asterisks.

### Components/Axes

* **Y-axis:** LLM names (LLaMA 2 7B, LLaMA 2 13B, GPT 3.5).

* **X-axis:** Evaluation metrics (Consistency, Depth, Coherence, Uncertainty, Drift).

* **Color Scale:** Ranges from -2.5 (dark red) to +2.5 (dark green), with 0 represented by white.

* **Significance Markers:**

* `*`: p < 0.05

* `**`: p < 0.01

* `***`: p < 0.001

* **Titles:**

* Left heatmap: "COPA"

* Right heatmap: "E-CARE"

* **Central Label:** "Corr." (indicating correlation)

### Detailed Analysis or Content Details

**COPA Heatmap (Left)**

* **LLaMA 2 7B:**

* Consistency: 1.37

* Depth: -2.95**

* Coherence: 1.22

* Uncertainty: -3.10**

* Drift: -0.27

* **LLaMA 2 13B:**

* Consistency: 1.36

* Depth: -1.28

* Coherence: 3.87***

* Uncertainty: -2.17*

* Drift: -3.33***

* **GPT 3.5:**

* Consistency: 4.67***

* Depth: -4.893***

* Coherence: 3.60***

* Uncertainty: -4.34***

* Drift: -3.22**

**E-CARE Heatmap (Right)**

* **LLaMA 2 7B:**

* Consistency: 0.20

* Depth: -0.53

* Coherence: 2.18*

* Uncertainty: -2.11**

* Drift: -0.78*

* **LLaMA 2 13B:**

* Consistency: 1.167

* Depth: -1.18

* Coherence: 1.67*

* Uncertainty: -1.52*

* Drift: -1.91*

* **GPT 3.5:**

* Consistency: 3.10**

* Depth: -2.91**

* Coherence: 0.98

* Uncertainty: -2.61**

* Drift: -5.14***

### Key Observations

* **GPT 3.5 shows strong correlations (positive or negative) with most metrics in both datasets.** It has the highest absolute value in 7 of the 10 metric-dataset columns; the main exception is Coherence in E-CARE (0.98).

* **Depth is negatively correlated for all models in both datasets.** Higher Depth goes with a lower value of the correlated outcome; the heatmap does not relate Depth to the other metrics.

* **Coherence shows positive correlation with all three models in both datasets.**

* **Uncertainty shows negative correlation with all models in both datasets.**

* **Drift shows negative correlation with all models in both datasets.**

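* **LLaMA 2 7B generally has smaller magnitudes than GPT 3.5.** Against LLaMA 2 13B the picture is mixed: for example, the 7B model has larger magnitudes for Depth and Uncertainty in COPA and for Coherence and Uncertainty in E-CARE.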

* **The significance levels (asterisks) indicate that many of the correlations are statistically significant.**

### Interpretation

The heatmaps show, for each LLM, how strongly each of the five metrics is associated with the outcome measured on the COPA and E-CARE datasets. The cells are labeled "Corr.", but several values lie outside [-1, 1], so they are probably a scaled or test statistic rather than raw correlation coefficients; the image does not say which.

The consistent negative values for "Depth" suggest a potential trade-off: greater depth in responses is associated with a lower outcome for every model. The strong correlations observed for GPT 3.5 indicate that its outcome is more predictably related to these metrics than that of the LLaMA models.

The differences between the COPA and E-CARE heatmaps suggest that the correlation patterns are dataset-dependent. The E-CARE dataset appears to show weaker overall correlations compared to COPA, potentially indicating that the E-CARE metrics are less sensitive to the specific characteristics of these LLMs.
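The claim that E-CARE shows weaker overall values than COPA can be checked with a mean of absolute cell values. This is a rough sketch using only the Python standard library: the names `COPA` and `ECARE` are hypothetical, the numbers are transcribed from the lists above, and the mean absolute value is just one of several possible summaries.

```python
from statistics import mean

# Cell values in row order (LLaMA 2 7B, LLaMA 2 13B, GPT 3.5), markers removed.
COPA = [
    1.37, -2.95, 1.22, -3.10, -0.27,
    1.36, -1.28, 3.87, -2.17, -3.33,
    4.67, -4.893, 3.60, -4.34, -3.22,
]
ECARE = [
    0.20, -0.53, 2.18, -2.11, -0.78,
    1.167, -1.18, 1.67, -1.52, -1.91,
    3.10, -2.91, 0.98, -2.61, -5.14,
]

# Average magnitude per dataset, ignoring the sign of each cell.
mean_abs_copa = mean(abs(v) for v in COPA)
mean_abs_ecare = mean(abs(v) for v in ECARE)

print(f"COPA mean |value|:   {mean_abs_copa:.2f}")
print(f"E-CARE mean |value|: {mean_abs_ecare:.2f}")
```

On these transcribed values the COPA average comes out near 2.78 and the E-CARE average near 1.87, which agrees with the qualitative reading.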

The varying significance levels show which correlations are statistically robust rather than plausibly due to chance. LLaMA 2 7B has weaker correlations than GPT 3.5 in nearly every cell, suggesting it may be less sensitive to the nuances captured by these metrics than the largest model; its comparison with LLaMA 2 13B is less clear-cut.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Comparison: COPA vs. E-CARE Benchmark Correlations

### Overview

The image displays two side-by-side heatmaps comparing the correlation of various Large Language Model (LLM) performance metrics across two different evaluation benchmarks: **COPA** (left) and **E-CARE** (right). The heatmaps visualize the strength and direction of correlation (positive or negative) between specific LLM characteristics (Consistency, Depth, Coherence, Uncertainty, Drift) and model performance on these benchmarks. The color intensity represents the correlation value, with a shared legend indicating the scale.

### Components/Axes

* **Chart Type:** Two separate correlation heatmaps.

* **Y-Axis (Both Charts):** Labeled "LLM". Lists three models:

* LLaMA 2 7B

* LLaMA 2 13B

* GPT 3.5

* **X-Axis (Both Charts):** Lists five performance metrics:

* Consistency

* Depth

* Coherence

* Uncertainty

* Drift

* **Legend:** Positioned centrally between the two heatmaps. Titled "Corr." (Correlation). It is a vertical color bar with the following scale:

* **Top (Green):** 2.5

* **Middle (Light Yellow/White):** 0.0

* **Bottom (Red):** -2.5

* This indicates that green shades represent positive correlations, red shades represent negative correlations, and the intensity corresponds to the magnitude.

* **Data Labels:** Each cell in the heatmaps contains a numerical correlation value. Many values are followed by asterisks indicating statistical significance (e.g., `*`, `**`, `***`).

### Detailed Analysis

#### **COPA Heatmap (Left)**

* **LLaMA 2 7B:**

* Consistency: 1.37 (light green, positive)

* Depth: -2.95 ** (medium red, strong negative)

* Coherence: 1.22 (light green, positive)

* Uncertainty: -3.10 ** (medium red, strong negative)

* Drift: -0.27 (very light pink, weak negative)

* **LLaMA 2 13B:**

* Consistency: 1.36 (light green, positive)

* Depth: -1.28 (light red, negative)

* Coherence: 3.87 *** (dark green, very strong positive)

* Uncertainty: -2.17 * (medium red, negative)

* Drift: -3.33 *** (dark red, very strong negative)

* **GPT 3.5:**

* Consistency: 4.67 *** (dark green, very strong positive)

* Depth: -4.893 *** (dark red, very strong negative)

* Coherence: 3.60 *** (dark green, very strong positive)

* Uncertainty: -4.34 *** (dark red, very strong negative)

* Drift: -3.22 ** (dark red, strong negative)

#### **E-CARE Heatmap (Right)**

* **LLaMA 2 7B:**

* Consistency: 0.20 (very light green, very weak positive)

* Depth: -0.53 (very light pink, weak negative)

* Coherence: 2.18 * (light green, positive)

* Uncertainty: -2.11 ** (medium red, negative)

* Drift: -0.78 * (light pink, weak negative)

* **LLaMA 2 13B:**

* Consistency: 1.167 (light green, positive)

* Depth: -1.18 (light red, negative)

* Coherence: 1.67 * (light green, positive)

* Uncertainty: -1.52 * (light red, negative)

* Drift: -1.91 * (medium red, negative)

* **GPT 3.5:**

* Consistency: 3.10 ** (green, strong positive)

* Depth: -2.91 ** (red, strong negative)

* Coherence: 0.98 (very light green, weak positive)

* Uncertainty: -2.61 ** (red, strong negative)

* Drift: -5.14 *** (dark red, very strong negative)

### Key Observations

1. **Consistent Negative Correlation with Depth and Uncertainty:** Across both benchmarks and all three models, the "Depth" and "Uncertainty" metrics show a consistent pattern of negative correlation (red cells). This suggests that higher scores on these metrics are associated with lower performance on the COPA and E-CARE tasks.

2. **Consistency and Coherence Show Positive Correlation:** Every "Consistency" and "Coherence" cell is positive (green). The Consistency values are largest for GPT 3.5 in both benchmarks, while the Coherence values peak for LLaMA 2 13B in COPA and LLaMA 2 7B in E-CARE. This indicates these traits are beneficial for these benchmarks.

3. **Model Scaling Effect:** GPT 3.5 exhibits the most extreme correlation values (both positive and negative) in the COPA benchmark, suggesting its performance is more strongly tied to these measured characteristics compared to the LLaMA 2 models.

4. **Benchmark Differences:** The correlation patterns are broadly similar but not identical between COPA and E-CARE. For instance, the "Coherence" correlation for GPT 3.5 is very strong in COPA (3.60***) but weak in E-CARE (0.98). The "Drift" metric shows a particularly strong negative correlation for GPT 3.5 in E-CARE (-5.14***).

5. **Statistical Significance:** Most of the stronger correlations (magnitude > ~1.5) are marked with asterisks, indicating they are statistically significant. The weakest correlations (e.g., LLaMA 2 7B on COPA Drift: -0.27) lack significance markers.
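To see which cell differs most between the two benchmarks, one option is to subtract the E-CARE value from the COPA value cell by cell. The sketch below assumes the transcribed values above; `benchmark_gaps` and the other names are hypothetical helpers, not part of the source.

```python
METRICS = ["Consistency", "Depth", "Coherence", "Uncertainty", "Drift"]

# Values transcribed from the two heatmaps (significance markers removed).
COPA = {
    "LLaMA 2 7B": [1.37, -2.95, 1.22, -3.10, -0.27],
    "LLaMA 2 13B": [1.36, -1.28, 3.87, -2.17, -3.33],
    "GPT 3.5": [4.67, -4.893, 3.60, -4.34, -3.22],
}
ECARE = {
    "LLaMA 2 7B": [0.20, -0.53, 2.18, -2.11, -0.78],
    "LLaMA 2 13B": [1.167, -1.18, 1.67, -1.52, -1.91],
    "GPT 3.5": [3.10, -2.91, 0.98, -2.61, -5.14],
}


def benchmark_gaps(copa, ecare):
    """Return (model, metric, COPA value minus E-CARE value) for every cell."""
    return [
        (model, metric, round(copa[model][i] - ecare[model][i], 3))
        for model in copa
        for i, metric in enumerate(METRICS)
    ]


gaps = benchmark_gaps(COPA, ECARE)
largest = max(gaps, key=lambda g: abs(g[2]))
print(largest)
```

The largest gap is the GPT 3.5 Coherence cell (3.60 in COPA against 0.98 in E-CARE), the same cell singled out in observation 4.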

### Interpretation

This visualization provides a diagnostic look at what internal model characteristics (as measured by Consistency, Depth, Coherence, Uncertainty, Drift) align with success on specific reasoning benchmarks (COPA and E-CARE).

* **What the data suggests:** The strong negative correlations for "Depth" and "Uncertainty" are the most striking finding. This could imply that for these particular tasks, models that exhibit more "depth" (perhaps in terms of reasoning steps or complexity) or higher calibrated "uncertainty" perform worse. Conversely, models that are more "consistent" and "coherent" in their outputs tend to perform better. This might indicate that COPA and E-CARE reward reliable, straightforward reasoning over more complex or hesitant deliberation.

* **Relationship between elements:** The heatmaps directly link abstract model properties (columns) to concrete benchmark performance (implied by the correlation value). The side-by-side comparison allows us to see if these relationships are benchmark-specific or general. The shared color scale enables direct visual comparison of correlation strength across both charts.

* **Notable anomalies:** The drastic difference in the "Coherence" correlation for GPT 3.5 between the two benchmarks is a key anomaly. It suggests that while coherent output is highly predictive of success on COPA, it is much less so for E-CARE. This could point to a fundamental difference in what the two benchmarks measure. Furthermore, the extremely strong negative correlation for "Drift" in GPT 3.5 on E-CARE (-5.14***) is an outlier in magnitude, highlighting "Drift" as a particularly detrimental factor for that model on that specific task.

**In summary, the image presents evidence that for the COPA and E-CARE benchmarks, model performance is positively associated with consistency and coherence, and negatively associated with depth, uncertainty, and drift. The strength of these associations varies by model and benchmark, with GPT 3.5 showing the most pronounced relationships.**

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: Model Performance Comparison (COPA vs E-CARE)

### Overview

The image presents two side-by-side heatmaps comparing correlation values between different AI models (LLaMA 2 7B, LLaMA 2 13B, GPT 3.5) across five evaluation metrics (Consistency, Depth, Coherence, Uncertainty, Drift) for two benchmarks: COPA and E-CARE. Color gradients and statistical significance markers provide additional context.

### Components/Axes

**X-Axes (Metrics):**

- Consistency

- Depth

- Coherence

- Uncertainty

- Drift

**Y-Axes (Models):**

- COPA section:

- LLaMA 2 7B

- LLaMA 2 13B

- GPT 3.5

- E-CARE section:

- LLaMA 2 7B

- LLaMA 2 13B

- GPT 3.5

**Legend:**

- Color gradient: Green (positive correlation) to Red (negative correlation)

- Scale: -2.5 (red) to +2.5 (green)

- Significance markers:

- *** (p < 0.001)

- ** (p < 0.01)

- * (p < 0.05)

**Spatial Layout:**

- Legend positioned centrally, between the two heatmaps

- COPA heatmap occupies left half

- E-CARE heatmap occupies right half

- All values displayed in cell centers with 2-3 decimal precision

### Detailed Analysis

**COPA Benchmark:**

| Model       | Consistency | Depth     | Coherence | Uncertainty | Drift    |
|-------------|-------------|-----------|-----------|-------------|----------|
| LLaMA 2 7B  | 1.37        | -2.95**   | 1.22      | -3.10**     | -0.27    |
| LLaMA 2 13B | 1.36        | -1.28     | 3.87***   | -2.17*      | -3.33*** |
| GPT 3.5     | 4.67***     | -4.893*** | 3.60***   | -4.34***    | -3.22**  |

**E-CARE Benchmark:**

| Model       | Consistency | Depth   | Coherence | Uncertainty | Drift    |
|-------------|-------------|---------|-----------|-------------|----------|
| LLaMA 2 7B  | 0.20        | -0.53   | 2.18*     | -2.11**     | -0.78*   |
| LLaMA 2 13B | 1.167       | -1.18   | 1.67*     | -1.52*      | -1.91*   |
| GPT 3.5     | 3.10**      | -2.91** | 0.98      | -2.61**     | -5.14*** |

### Key Observations

1. **GPT 3.5 Dominance in COPA Consistency**: Shows strongest positive correlation (4.67) with high significance (***)

2. **LLaMA 2 13B Coherence Peak**: Highest coherence correlation (3.87) in COPA with strongest significance (***)

3. **Drift Vulnerability**: GPT 3.5 exhibits most negative drift correlation (-5.14) in E-CARE

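4. **Model Size Impact**: LLaMA 2 13B has a more negative Drift correlation than LLaMA 2 7B in both benchmarks (-3.33 vs. -0.27 in COPA, -1.91 vs. -0.78 in E-CARE). Its Coherence correlation is higher in COPA (3.87 vs. 1.22) but lower in E-CARE (1.67 vs. 2.18)

5. **Statistical Significance**: 20 of the 30 values (about 67%) carry a significance marker (p < 0.05 or stronger)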
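A tally of the marked cells in the two tables above can be sketched as follows. The cell labels are copied as strings so the asterisks survive; `CELLS` and `count_significant` are hypothetical names used only for this illustration.

```python
# Cell labels in row order (LLaMA 2 7B, LLaMA 2 13B, GPT 3.5), markers kept.
CELLS = {
    "COPA": [
        "1.37", "-2.95**", "1.22", "-3.10**", "-0.27",
        "1.36", "-1.28", "3.87***", "-2.17*", "-3.33***",
        "4.67***", "-4.893***", "3.60***", "-4.34***", "-3.22**",
    ],
    "E-CARE": [
        "0.20", "-0.53", "2.18*", "-2.11**", "-0.78*",
        "1.167", "-1.18", "1.67*", "-1.52*", "-1.91*",
        "3.10**", "-2.91**", "0.98", "-2.61**", "-5.14***",
    ],
}


def count_significant(cells):
    """Count cells whose label ends in at least one asterisk (p < 0.05)."""
    return sum(1 for cell in cells if cell.endswith("*"))


per_benchmark = {name: count_significant(cells) for name, cells in CELLS.items()}
significant = sum(per_benchmark.values())
total = sum(len(cells) for cells in CELLS.values())
share = significant / total

print(per_benchmark)
print(f"{significant}/{total} cells marked ({share:.1%})")
```

Each benchmark contributes 15 cells, so the denominator is 30, and 10 cells per benchmark carry at least one asterisk.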

### Interpretation

The data reveals differences in model behavior between the two benchmarks:

- **COPA** has the strongest Consistency and Coherence correlations, led by GPT 3.5 and LLaMA 2 13B

- **E-CARE** has the single most negative Drift value, for GPT 3.5 (-5.14)

- Within LLaMA 2, the larger model has more negative Drift correlations in both benchmarks, while its Coherence correlation is higher only in COPA

- Statistical significance markers support these patterns, with 20/30 values showing p < 0.05

- Color gradients visually reinforce the correlation strength, with red (negative) cells filling the Depth, Uncertainty, and Drift columns

The findings suggest benchmark-specific patterns: in COPA, Consistency and Coherence are the strongest positive correlates, while in E-CARE Drift is the strongest negative one. The statistical significance markers provide confidence in these observed patterns, particularly for GPT 3.5's extreme drift correlation in E-CARE.
