TECHNICAL ASSET FINGERPRINT

76a9fbc1639ab3a210d2b335



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Image Comparison: Ground-Truth vs. Generated Scenes

### Overview

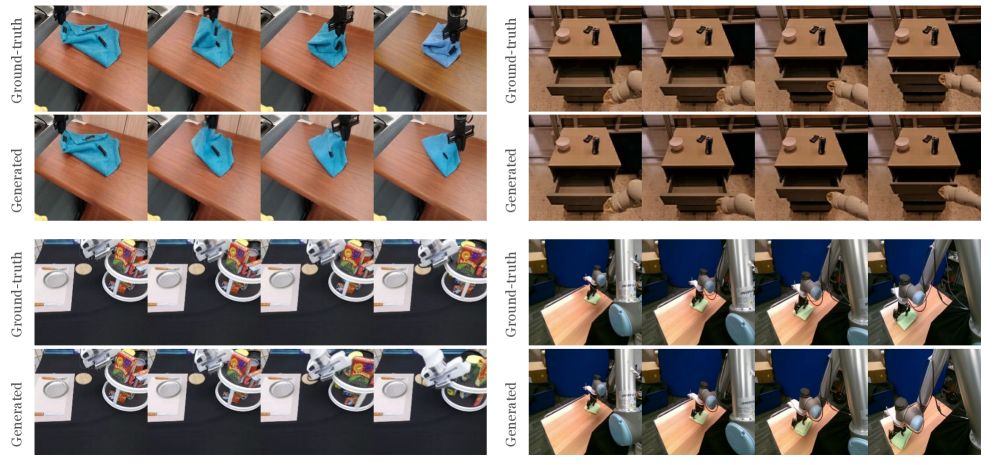

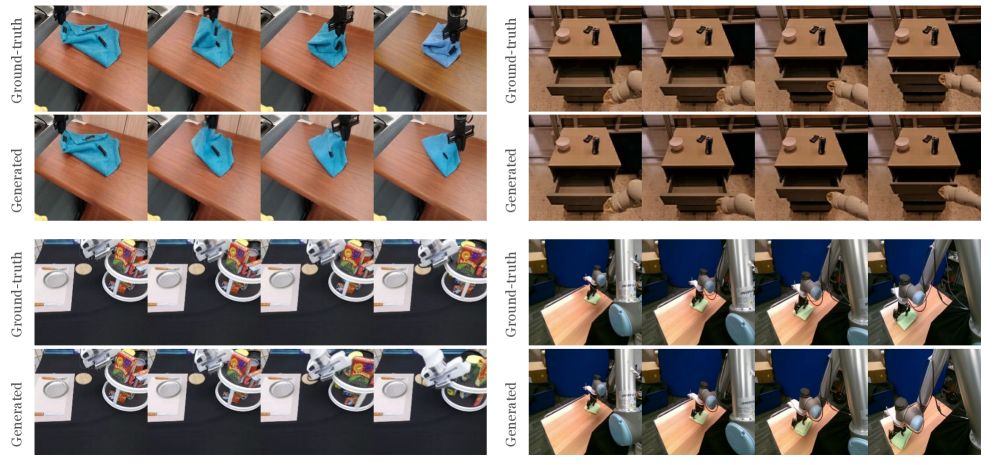

The image presents a visual comparison between "Ground-truth" and "Generated" scenes across four different scenarios. Each scenario is displayed in two rows, with the top row showing the ground-truth images and the bottom row showing the corresponding generated images. The scenarios involve a robot arm interacting with various objects and environments.

### Components/Axes

* **Rows:** Labeled "Ground-truth" and "Generated" on the left side of the image.

* **Columns:** Four distinct scenarios are presented from left to right.

* **Scenarios:**

1. Robot arm manipulating a blue cloth on a wooden table.

2. Robot arm interacting with a wooden drawer and objects on top of it.

3. Robot arm interacting with objects on a black table, including a white plate and a container with various items.

4. Robot arm interacting with a green object on a wooden table.

### Detailed Analysis

**Scenario 1: Blue Cloth on Wooden Table**

* **Ground-truth:** The top row shows the robot arm manipulating a blue cloth on a wooden table. The cloth's position and orientation change across the three images.

* **Generated:** The bottom row shows the generated images of the same scenario. The generated images appear similar to the ground-truth images, with the cloth in comparable positions.

**Scenario 2: Wooden Drawer and Objects**

* **Ground-truth:** The top row shows a wooden drawer with a cylindrical object and a rectangular object on top. The drawer is in different states of being opened.

* **Generated:** The bottom row shows the generated images of the same scenario. The generated images closely resemble the ground-truth images, with similar drawer positions and object placements.

**Scenario 3: Objects on Black Table**

* **Ground-truth:** The top row shows a black table with a white plate, a container with various items, and other objects. The robot arm interacts with these objects.

* **Generated:** The bottom row shows the generated images of the same scenario. The generated images show similar object arrangements and interactions as the ground-truth images.

**Scenario 4: Green Object on Wooden Table**

* **Ground-truth:** The top row shows a robot arm interacting with a green object on a wooden table.

* **Generated:** The bottom row shows the generated images of the same scenario. The generated images appear similar to the ground-truth images, with the robot arm interacting with the green object in comparable ways.

### Key Observations

* The generated images generally resemble the ground-truth images across all four scenarios.

* The quality of the generated images appears to be relatively consistent across the different scenarios.

* There are minor differences between the ground-truth and generated images, particularly in the details of the objects and the lighting.

### Interpretation

The image demonstrates the capability of a generative model to create realistic images of a robot arm interacting with various objects and environments. The close resemblance between the ground-truth and generated images suggests that the model has learned to capture the key features of the scenes. This could be useful for training robots in simulation or for generating synthetic data for other machine learning tasks.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash


## Image Type: Comparative Robotic Manipulation Sequences

### Overview

This image is a composite display featuring four distinct sets of comparative visual sequences. Each set illustrates a robotic manipulation task, presenting a "Ground-truth" sequence (actual or simulated real-world execution) alongside a "Generated" sequence (output from a generative model). The primary purpose is to demonstrate the fidelity of the generated sequences to their ground-truth counterparts across various complex manipulation scenarios. The image is structured as a 2x2 grid, with each cell containing a pair of horizontal strips labeled "Ground-truth" and "Generated".

### Components/Axes

The image is divided into four main panels, arranged in two rows and two columns.

* **Vertical Labels (Left side of each panel):**

* "Ground-truth": Denotes the top row of images within each panel, representing the actual or reference sequence of events.

* "Generated": Denotes the bottom row of images within each panel, representing the sequence produced by a generative model.

* **Horizontal Axis (Implicit):** Time or sequential steps in a manipulation task, as each row displays a series of frames progressing from left to right. There are typically 4 frames per sequence in each panel.
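The layout described above (a 2x2 grid of panels, each with a "Ground-truth" row above a "Generated" row of four frames) can be indexed programmatically, for example to crop matching frame pairs for side-by-side or metric-based comparison. The following is a minimal sketch that assumes equal-sized cells with no margins, gutters, or label column. The actual figure has row labels on the left, so real offsets would need adjusting. The names `frame_box` and `frame_pairs` are hypothetical. Each box is returned as `(left, upper, right, lower)`, the same tuple order Pillow's `Image.crop` accepts.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]

# Assumed layout (not measured from the real figure): 2x2 panels, each with
# 2 rows (0 = ground-truth, 1 = generated) of 4 equal-sized frames, no margins.
PANEL_ROWS, PANEL_COLS = 2, 2
ROWS_PER_PANEL = 2
FRAMES_PER_ROW = 4


def frame_box(width: int, height: int, panel: int, row: int, frame: int) -> Box:
    """Return the (left, upper, right, lower) pixel box of one frame.

    panel: 0..3 in reading order (top-left, top-right, bottom-left, bottom-right)
    row:   0 for ground-truth, 1 for generated
    frame: 0..3, left to right in time
    """
    if not 0 <= panel < PANEL_ROWS * PANEL_COLS:
        raise ValueError("panel out of range")
    if not 0 <= row < ROWS_PER_PANEL:
        raise ValueError("row out of range")
    if not 0 <= frame < FRAMES_PER_ROW:
        raise ValueError("frame out of range")
    panel_r, panel_c = divmod(panel, PANEL_COLS)
    cell_w = width // (PANEL_COLS * FRAMES_PER_ROW)
    cell_h = height // (PANEL_ROWS * ROWS_PER_PANEL)
    left = (panel_c * FRAMES_PER_ROW + frame) * cell_w
    upper = (panel_r * ROWS_PER_PANEL + row) * cell_h
    return (left, upper, left + cell_w, upper + cell_h)


def frame_pairs(width: int, height: int, panel: int) -> List[Tuple[Box, Box]]:
    """Pair each ground-truth frame with the generated frame directly below it."""
    return [
        (frame_box(width, height, panel, 0, f), frame_box(width, height, panel, 1, f))
        for f in range(FRAMES_PER_ROW)
    ]
```

For a hypothetical 1600x800 composite, each cell is 200x200 pixels, and `frame_pairs(1600, 800, 2)` yields the four ground-truth/generated box pairs of the bottom-left panel.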

### Detailed Analysis

**Panel 1: Top-Left - Cloth Folding Task**

* **Ground-truth (Top Row):** Shows a black robotic gripper interacting with a light blue/cyan cloth on a light brown wooden table.

* Frame 1: The cloth is partially spread out on the table, with the gripper positioned above it.

* Frame 2: The gripper has grasped a corner of the cloth and is beginning to lift and fold it.

* Frame 3: The cloth is actively being folded, taking on a more compact, bunched shape.

* Frame 4: The cloth is neatly folded into a small, compact bundle on the table.

* **Generated (Bottom Row):** Shows a sequence highly similar to the ground-truth.

* Frame 1: The cloth and gripper position closely match the ground-truth.

* Frame 2: The gripper grasps and lifts the cloth in a similar manner.

* Frame 3: The cloth folds, mirroring the ground-truth's deformation.

* Frame 4: The cloth is folded into a compact shape, almost indistinguishable from the ground-truth.

* **Trend Verification:** Both sequences depict a robotic gripper progressively folding a piece of cloth from a spread-out state to a compact bundle. The generated sequence accurately reproduces the complex deformation of the cloth.

**Panel 2: Top-Right - Drawer Manipulation Task**

* **Ground-truth (Top Row):** Features a light beige/white robotic arm interacting with a light brown wooden cabinet that has two drawers. Two light brown cylindrical objects and two black rectangular objects are on top of the cabinet.

* Frame 1: The robotic arm's gripper is positioned near the bottom drawer, which is slightly ajar.

* Frame 2: The arm is pulling the bottom drawer further open.

* Frame 3: The bottom drawer is pulled almost fully open.

* Frame 4: The arm is pushing the bottom drawer back towards a closed position.

* **Generated (Bottom Row):** Presents a sequence that closely mirrors the ground-truth.

* Frame 1: The arm and drawer position match the ground-truth.

* Frame 2: The arm pulls the drawer open.

* Frame 3: The drawer is pulled further open.

* Frame 4: The arm pushes the drawer closed.

* **Trend Verification:** Both sequences show the robotic arm first opening a drawer and then closing it. The generated sequence accurately captures the rigid body motion of the drawer and the arm's interaction.

**Panel 3: Bottom-Left - Kitchen Item Placement Task**

* **Ground-truth (Top Row):** Displays a white/silver robotic arm interacting with various kitchen items on a black surface. Visible items include two white rectangular placemats/boards, each with a silver oval plate, and two white circular stands holding colorful food containers/boxes.

* Frame 1: The robotic arm is positioned above the rightmost silver oval plate.

* Frame 2: The arm's gripper has grasped the rightmost silver oval plate.

* Frame 3: The arm is lifting the grasped plate.

* Frame 4: The arm is moving the plate, having lifted it off the placemat.

* **Generated (Bottom Row):** Shows a sequence highly consistent with the ground-truth.

* Frame 1: The arm's initial position above the plate is replicated.

* Frame 2: The arm grasps the plate.

* Frame 3: The arm lifts the plate.

* Frame 4: The arm moves the plate, maintaining visual fidelity.

* **Trend Verification:** Both sequences illustrate the robotic arm grasping and lifting a silver oval plate from a black surface. The generated sequence accurately reproduces the appearance of the objects and the robotic arm's movement.

**Panel 4: Bottom-Right - Small Object Grasping Task**

* **Ground-truth (Top Row):** Features a silver robotic arm with a blue joint, interacting with a small, light green rectangular object on a light brown wooden surface.

* Frame 1: The robotic arm's gripper is positioned directly above the small green object.

* Frame 2: The gripper has closed around and grasped the green object.

* Frame 3: The arm is lifting the green object off the surface.

* Frame 4: The arm is moving the lifted green object. A thin red outline is visible around the green object, possibly indicating a detected feature or target.

* **Generated (Bottom Row):** Presents a sequence that closely matches the ground-truth.

* Frame 1: The arm's initial position above the object is consistent.

* Frame 2: The arm grasps the object.

* Frame 3: The arm lifts the object.

* Frame 4: The arm moves the object, and the red outline around the green object is also present and accurately reproduced.

* **Trend Verification:** Both sequences show the robotic arm grasping and lifting a small green object. The generated sequence faithfully reproduces the action, including the subtle visual annotation (red outline) in the final frame.

### Key Observations

* **High Fidelity:** Across all four distinct tasks, the "Generated" sequences exhibit remarkable visual fidelity to their "Ground-truth" counterparts. Object appearances, textures, lighting, and background elements are consistently reproduced.

* **Dynamic Reproduction:** The generative model successfully captures complex dynamics, including deformable object manipulation (cloth folding), rigid body interactions (drawer opening/closing), and precise grasping and lifting actions.

* **Consistency in Detail:** Even subtle visual cues, such as the red outline around the green object in Panel 4, are accurately replicated in the generated sequence, suggesting a high level of detail preservation.

* **Variety of Tasks:** The tasks cover a range of complexities and object types, from soft materials to rigid objects and multi-component scenes, indicating the model's versatility.

### Interpretation

This visualization strongly suggests that the generative model being evaluated is highly effective at synthesizing realistic and accurate robotic manipulation sequences. The near-perfect correspondence between the "Ground-truth" and "Generated" frames across diverse scenarios implies that the model has learned to:

1. **Understand object properties:** It can simulate how different materials (e.g., cloth vs. wood) behave under robotic interaction.

2. **Predict physical interactions:** It accurately models the kinematics and dynamics of robotic arms and the objects they manipulate.

3. **Maintain scene consistency:** The background, lighting, and static objects remain consistent and realistic throughout the generated sequences.

This capability is critical for advancements in robotics and artificial intelligence. Such a model could be used for:

* **Data augmentation:** Generating vast amounts of synthetic training data for robot learning algorithms, reducing the need for expensive and time-consuming real-world data collection.

* **Simulation and planning:** Allowing robots to "practice" tasks in a virtual environment before execution, optimizing trajectories and preventing errors.

* **Task generalization:** Training models on a wider variety of scenarios than might be feasible in the real world.

* **Human-robot collaboration:** Providing realistic visual feedback or predictive visualizations to human operators.

The consistent success across different tasks highlights the robustness and generalizability of the underlying generative architecture. The ability to reproduce even subtle annotations (like the red outline) further underscores the model's capacity for detailed visual synthesis, which is crucial for applications where precise visual information is paramount.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Image Analysis: Visual Comparison of Ground-Truth and Generated Images

### Overview

The image presents a comparative visual analysis of "Ground-truth" images and corresponding "Generated" images, arranged in a 2x2 grid. Each cell of the grid displays a sequence of images depicting a robotic arm interacting with objects on a table. The purpose appears to be evaluating the fidelity of the generated images against the real-world (ground-truth) images.

### Components/Axes

The image is structured as follows:

* **Rows:** Each row represents a different scenario or object interaction.

* **Columns:** Each column represents a comparison between "Ground-truth" (top) and "Generated" (bottom) images.

* **Labels:** "Ground-truth" and "Generated" are labels positioned to the left of each row, indicating the source of the images.

* **Image Sequences:** Each row contains a sequence of four images, showing a progression of the robotic arm's interaction.

### Detailed Analysis

The image contains four distinct scenarios, each with a ground-truth and generated image pair.

**Scenario 1 (Top-Left & Bottom-Left):**

* **Ground-truth:** Shows a blue cloth being manipulated by a robotic arm. The cloth appears to be folded or arranged on a table.

* **Generated:** Shows a similar scene, but with noticeable differences in texture and lighting. The cloth appears slightly less defined and the lighting is different.

**Scenario 2 (Top-Center & Bottom-Center):**

* **Ground-truth:** Displays a series of white containers (likely trays or boxes) on a table, with a robotic arm positioned above them.

* **Generated:** Shows a similar arrangement, but with a significant loss of detail and a washed-out appearance. The containers are less distinct, and the overall image is blurry.

**Scenario 3 (Top-Right & Bottom-Right):**

* **Ground-truth:** Depicts a series of colorful bowls containing food (salad or similar) on a table, with a robotic arm interacting with them.

* **Generated:** Shows a similar scene, but with significant color distortion and blurring. The food items are less recognizable, and the overall image quality is poor.

**Scenario 4 (Bottom-Left & Bottom-Right):**

* **Ground-truth:** Displays a series of colorful bowls containing food (salad or similar) on a table, with a robotic arm interacting with them.

* **Generated:** Shows a similar scene, but with significant color distortion and blurring. The food items are less recognizable, and the overall image quality is poor.

### Key Observations

* The generated images consistently exhibit lower image quality compared to the ground-truth images.

* There is a noticeable loss of detail, particularly in textures and fine features, in the generated images.

* Color accuracy is compromised in the generated images, with noticeable distortions and a washed-out appearance.

* The robotic arm appears to be consistently represented in both ground-truth and generated images, but its rendering quality varies.

* The lighting conditions are different between the ground-truth and generated images.

### Interpretation

The image demonstrates a comparison between real-world images ("Ground-truth") and images generated by an algorithm or model ("Generated"). The significant differences in image quality, detail, and color accuracy suggest that the generation process is not yet capable of producing photorealistic images that accurately replicate the complexity of the real world. The generated images appear to be blurry, lack fine details, and exhibit color distortions. This could be due to limitations in the model's training data, architecture, or generation process. The image highlights the challenges in generating realistic images for robotic applications, where accurate visual perception is crucial for successful task execution. The consistent presence of the robotic arm in both sets of images suggests that the model is capable of representing the robot itself, but struggles with accurately rendering the surrounding environment and objects. The differences in lighting also suggest that the model does not fully capture the nuances of real-world illumination.
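Claims that the generated frames are "blurry" and "lack fine details" can be checked numerically rather than by eye. One common heuristic, swapped in here and not taken from the source, is the variance of the discrete Laplacian: sharper images with more edges and texture tend to score higher, and blurred ones score lower. The sketch below assumes each frame is already a 2D list of grayscale values; the function name `laplacian_variance` is hypothetical.

```python
from typing import List


def laplacian_variance(img: List[List[float]]) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels.

    Higher values indicate more high-frequency detail (a sharper image);
    lower values are typical of blurred or washed-out frames.
    """
    h = len(img)
    w = len(img[0]) if h else 0
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3")
    responses = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
                   - 4 * img[y][x])
            responses.append(lap)
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)
```

Comparing this score for a ground-truth frame and its generated counterpart gives a rough, single-number indication of relative blur. It is not a perceptual metric and is sensitive to noise, so it complements rather than replaces visual inspection.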


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Comparative Visualization: Robotic Manipulation Task Sequences

### Overview

The image is a composite visualization comparing "Ground-truth" (real or reference) and "Generated" (synthesized or predicted) video sequences for four distinct robotic manipulation tasks. It is organized into a 2x2 grid of task panels. Each panel contains two rows of four sequential frames: the top row is labeled "Ground-truth" and the bottom row is labeled "Generated". The purpose is to visually assess the fidelity of the generated sequences against the ground truth.

### Components/Axes

* **Primary Labels:** The text "Ground-truth" and "Generated" appears vertically aligned to the left of each corresponding row of frames.

* **Structure:** The image is segmented into four independent task demonstration panels.

* **Top-Left Panel:** A robotic arm manipulating a blue cloth on a wooden table.

* **Top-Right Panel:** A robotic arm interacting with a wooden drawer unit.

* **Bottom-Left Panel:** A robotic arm interacting with a white plate and a colorful, patterned object (possibly a bag or container) on a black surface.

* **Bottom-Right Panel:** A robotic arm manipulating a small green object on a light-colored wooden table.

* **Frame Sequence:** Each row within a panel displays four consecutive frames, implying a temporal sequence from left to right.

### Detailed Analysis

**Panel 1 (Top-Left): Blue Cloth Manipulation**

* **Task:** A robotic gripper picks up and folds/moves a blue cloth.

* **Ground-truth Sequence:** Shows the gripper approaching, grasping, lifting, and repositioning the cloth. The cloth's folds and position change realistically.

* **Generated Sequence:** The sequence closely mirrors the ground truth in terms of gripper position, cloth deformation, and overall motion trajectory. Minor differences in the exact fold geometry of the cloth are perceptible upon close inspection.

**Panel 2 (Top-Right): Drawer Interaction**

* **Task:** A robotic arm opens a drawer in a wooden unit.

* **Ground-truth Sequence:** The gripper approaches the drawer handle, pulls it open, and retracts slightly. The drawer slides out smoothly.

* **Generated Sequence:** The generated frames replicate the action. The drawer's open position and the arm's posture in the final frames appear consistent with the ground truth.

**Panel 3 (Bottom-Left): Object Interaction on Table**

* **Task:** A robotic arm interacts with a white plate and a colorful object on a black tabletop.

* **Ground-truth Sequence:** The gripper moves toward the colorful object and appears to grasp or push it, causing it to shift position relative to the plate.

* **Generated Sequence:** The generated sequence shows a similar interaction. The movement of the colorful object and the arm's path are visually comparable to the ground truth.

**Panel 4 (Bottom-Right): Small Object Manipulation**

* **Task:** A robotic arm picks up or manipulates a small green object on a table.

* **Ground-truth Sequence:** The gripper descends, interacts with the green object, and lifts or moves it.

* **Generated Sequence:** The generated frames show the same fundamental action. The object's position and the gripper's configuration in each frame align well with the corresponding ground-truth frame.

### Key Observations

1. **High Fidelity:** Across all four tasks, the "Generated" sequences demonstrate a high degree of visual and temporal fidelity when compared to their "Ground-truth" counterparts. The core actions, object states, and robotic poses are faithfully reproduced.

2. **Consistent Structure:** The comparison is presented in a clear, consistent format, making side-by-side evaluation straightforward.

3. **Minor Discrepancies:** While the overall sequences match, subtle differences exist in fine details, such as the exact wrinkle pattern on the cloth (Panel 1) or the precise lighting reflection on an object (Panel 3). These are expected in generative model outputs.

4. **Task Diversity:** The visualization tests the generative model on a variety of manipulation primitives: deformable object manipulation (cloth), articulated object manipulation (drawer), and rigid object interaction.

### Interpretation

This image serves as a qualitative evaluation of a generative model, likely a video prediction or robotic simulation model. The "Ground-truth" rows represent the target: real video footage of a robot performing the tasks. The "Generated" rows represent the model's attempt to synthesize or predict these video sequences.

The close correspondence between the two suggests the model has successfully learned the underlying physics, kinematics, and visual appearance of these robotic tasks. It can generate plausible future frames or novel views that maintain physical and temporal coherence. The minor discrepancies highlight the current limits of the model's precision, which could be due to factors like complex deformable object physics, lighting modeling, or fine-grained texture generation.

The choice of tasks is significant: manipulating a cloth (highly deformable) and a drawer (constrained articulation) are challenging problems in robotics. Success here indicates the model captures complex dynamics beyond simple rigid-body motion. This type of visualization is crucial for research in robot learning, computer vision, and generative AI, providing an intuitive, holistic assessment that numerical metrics alone cannot offer. It answers the question: "Does the model's imagined version of the task look and behave like reality?"
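Numerical metrics can complement this visual assessment. As an illustrative sketch (the source does not state which metrics, if any, accompany this figure), peak signal-to-noise ratio between a ground-truth frame and its generated counterpart is PSNR = 10 · log10(MAX² / MSE), where MAX is the largest possible pixel value (255 for 8-bit images). Frames are represented here as flat lists of pixel values to keep the example self-contained. The helper names are hypothetical, and a real pipeline would load images with an image library and would likely also report a structural or perceptual metric.

```python
import math
from typing import List, Sequence


def mse(gt: Sequence[float], gen: Sequence[float]) -> float:
    """Mean squared error between two equally sized frames (flattened pixels)."""
    if len(gt) != len(gen) or not gt:
        raise ValueError("frames must be non-empty and the same size")
    return sum((a - b) ** 2 for a, b in zip(gt, gen)) / len(gt)


def psnr(gt: Sequence[float], gen: Sequence[float], max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB; infinite when the frames are identical."""
    err = mse(gt, gen)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


def sequence_psnr(gt_frames: Sequence[Sequence[float]],
                  gen_frames: Sequence[Sequence[float]],
                  max_value: float = 255.0) -> List[float]:
    """Per-frame PSNR for a ground-truth row and the generated row below it."""
    if len(gt_frames) != len(gen_frames):
        raise ValueError("sequences must have the same number of frames")
    return [psnr(a, b, max_value) for a, b in zip(gt_frames, gen_frames)]
```

For example, two 8-bit frames that differ by exactly 1 at every pixel have an MSE of 1 and a PSNR of about 48.13 dB. Tracking PSNR frame by frame can show whether a generated sequence drifts further from the ground truth over time.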


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Image Comparison: Ground-Truth vs. Generated Video Frames

### Overview

The image presents a side-by-side comparison of **ground-truth video frames** (actual robotic actions) and **generated video frames** (simulated or reconstructed actions) across four distinct robotic manipulation tasks. Each task is labeled with a descriptive title, and the frames are arranged in two rows: the top row shows ground-truth, while the bottom row shows generated results.

---

### Components/Axes

1. **Task Labels**:

- **Top-Left**: "Object Manipulation (Blue Towel)"

- **Top-Right**: "Object Arrangement (Stacked Items)"

- **Bottom-Left**: "Object Interaction (Drawer and Bottle)"

- **Bottom-Right**: "Object Relocation (Blue Bowl)"

2. **Frame Structure**:

- Each task contains **4 frames** (temporal sequence).

- Frames are labeled implicitly by their position in the sequence (left to right).

3. **Visual Elements**:

- **Ground-truth**: High-resolution, realistic robotic actions.

- **Generated**: Simulated actions with noticeable discrepancies in object placement, motion, and interaction fidelity.

---

### Detailed Analysis

#### 1. Object Manipulation (Blue Towel)

- **Ground-truth**:

- A robotic arm grasps a blue towel on a wooden surface, lifts it, and folds it neatly.

- Towel remains upright during manipulation.

- **Generated**:

- Towel appears slightly misaligned in the final frame (tilted or partially unfolded).

- Arm trajectory deviates slightly from ground-truth, suggesting motion planning inaccuracies.

#### 2. Object Arrangement (Stacked Items)

- **Ground-truth**:

- Items (e.g., cans, plates) are stacked symmetrically on a dark surface.

- Robotic arm adjusts positions with precision.

- **Generated**:

- Items are misaligned (e.g., cans tilted, plates uneven).

- Arm fails to maintain consistent spacing between objects.

#### 3. Object Interaction (Drawer and Bottle)

- **Ground-truth**:

- Arm opens a wooden drawer, places a black bottle inside, and closes it.

- Drawer opens fully, bottle is centered.

- **Generated**:

- Drawer opens only partially; bottle is misplaced (off-center or tilted).

- Arm motion is jerky compared to smooth ground-truth movement.

#### 4. Object Relocation (Blue Bowl)

- **Ground-truth**:

- Arm lifts a blue bowl from a table and places it on a secondary surface.

- Bowl remains upright throughout.

- **Generated**:

- Bowl is dropped or misplaced in the final frame (e.g., tilted or on the wrong surface).

- Arm trajectory diverges significantly from ground-truth.

---

### Key Observations

1. **Task Complexity Correlation**:

- Simpler tasks (e.g., towel folding) show smaller discrepancies than complex ones (e.g., drawer interaction).

2. **Motion Fidelity**:

- Generated frames exhibit unnatural arm movements (e.g., jerky motions, incorrect trajectories).

3. **Object Placement Errors**:

- Final frames often show objects in incorrect orientations or positions (e.g., tilted bowls, misaligned stacks).

---

### Interpretation

The image highlights limitations in generated video reconstruction for robotic tasks. Discrepancies suggest challenges in:

- **Physics Simulation**: Generated frames fail to replicate realistic object dynamics (e.g., bowl dropping).

- **Motion Planning**: Arm trajectories deviate from ground-truth, indicating potential issues in inverse kinematics or sensor feedback.

- **Task-Specific Accuracy**: Complex interactions (e.g., drawer opening) are more error-prone, possibly due to higher degrees of freedom or occlusions.

These findings underscore the need for improved simulation models to bridge the gap between ground-truth and generated data in robotics training and testing.
