## Comparative Visualization: Robotic Manipulation Task Sequences

### Overview

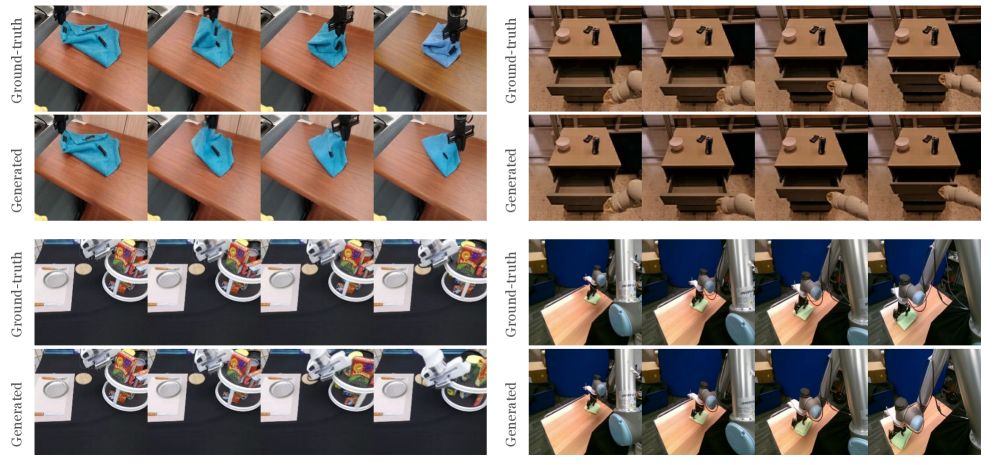

The image is a composite visualization comparing "Ground-truth" (real or reference) and "Generated" (synthesized or predicted) video sequences for four distinct robotic manipulation tasks. It is organized into a 2x2 grid of task panels. Each panel contains two rows of four sequential frames: the top row is labeled "Ground-truth" and the bottom row is labeled "Generated". The purpose is to visually assess the fidelity of the generated sequences against the ground truth.

### Components/Axes

* **Primary Labels:** The text "Ground-truth" and "Generated" appears vertically aligned to the left of each corresponding row of frames.

* **Structure:** The image is segmented into four independent task demonstration panels.

* **Top-Left Panel:** A robotic arm manipulating a blue cloth on a wooden table.

* **Top-Right Panel:** A robotic arm interacting with a wooden drawer unit.

* **Bottom-Left Panel:** A robotic arm interacting with a white plate and a colorful, patterned object (possibly a bag or container) on a black surface.

* **Bottom-Right Panel:** A robotic arm manipulating a small green object on a light-colored wooden table.

* **Frame Sequence:** Each row within a panel displays four consecutive frames, implying a temporal sequence from left to right.

### Detailed Analysis

**Panel 1 (Top-Left): Blue Cloth Manipulation**

* **Task:** A robotic gripper picks up and folds/moves a blue cloth.

* **Ground-truth Sequence:** Shows the gripper approaching, grasping, lifting, and repositioning the cloth. The cloth's folds and position change realistically.

* **Generated Sequence:** The sequence closely mirrors the ground truth in terms of gripper position, cloth deformation, and overall motion trajectory. Minor differences in the exact fold geometry of the cloth are perceptible upon close inspection.

**Panel 2 (Top-Right): Drawer Interaction**

* **Task:** A robotic arm opens a drawer in a wooden unit.

* **Ground-truth Sequence:** The gripper approaches the drawer handle, pulls it open, and retracts slightly. The drawer slides out smoothly.

* **Generated Sequence:** The generated frames replicate the action. The drawer's open position and the arm's posture in the final frames appear consistent with the ground truth.

**Panel 3 (Bottom-Left): Object Interaction on Table**

* **Task:** A robotic arm interacts with a white plate and a colorful object on a black tabletop.

* **Ground-truth Sequence:** The gripper moves towards the colorful object, appears to grasp or push it, causing it to shift position relative to the plate.

* **Generated Sequence:** The generated sequence shows a similar interaction. The movement of the colorful object and the arm's path are visually comparable to the ground truth.

**Panel 4 (Bottom-Right): Small Object Manipulation**

* **Task:** A robotic arm picks up or manipulates a small green object on a table.

* **Ground-truth Sequence:** The gripper descends, interacts with the green object, and lifts or moves it.

* **Generated Sequence:** The generated frames show the same fundamental action. The object's position and the gripper's configuration in each frame align well with the corresponding ground-truth frame.

### Key Observations

1. **High Fidelity:** Across all four tasks, the "Generated" sequences demonstrate a high degree of visual and temporal fidelity when compared to their "Ground-truth" counterparts. The core actions, object states, and robotic poses are faithfully reproduced.

2. **Consistent Structure:** The comparison is presented in a clear, consistent format, making side-by-side evaluation straightforward.

3. **Minor Discrepancies:** While the overall sequences match, subtle differences exist in fine details, such as the exact wrinkle pattern on the cloth (Panel 1) or the precise lighting reflection on an object (Panel 3). These are expected in generative model outputs.

4. **Task Diversity:** The visualization tests the generative model on a variety of manipulation primitives: deformable object manipulation (cloth), articulated object manipulation (drawer), and rigid object interaction.

### Interpretation

This image serves as a qualitative evaluation metric for a generative model, likely a video prediction or robotic simulation model. The "Ground-truth" represents the target reality—real video footage of a robot performing tasks. The "Generated" represents the model's attempt to synthesize or predict these video sequences.

The close correspondence between the two suggests the model has successfully learned the underlying physics, kinematics, and visual appearance of these robotic tasks. It can generate plausible future frames or novel views that maintain physical and temporal coherence. The minor discrepancies highlight the current limits of the model's precision, which could be due to factors like complex deformable object physics, lighting modeling, or fine-grained texture generation.

The choice of tasks is significant: manipulating a cloth (highly deformable) and a drawer (constrained articulation) are challenging problems in robotics. Success here indicates the model captures complex dynamics beyond simple rigid-body motion. This type of visualization is crucial for research in robot learning, computer vision, and generative AI, providing an intuitive, holistic assessment that numerical metrics alone cannot offer. It answers the question: "Does the model's imagined version of the task look and behave like reality?"