## Diagram: Audio-Visual Processing Framework

### Overview

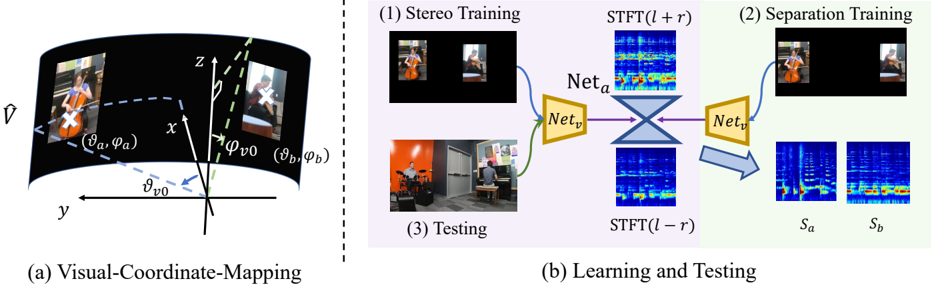

The image depicts a two-part technical framework for audio-visual processing. Part (a) illustrates a **Visual-Coordinate-Mapping** system using 3D coordinate transformations, while part (b) outlines a **Learning and Testing** pipeline involving stereo training, separation training, and testing phases with neural networks and STFT (Short-Time Fourier Transform) operations.

---

### Components/Axes

#### Part (a): Visual-Coordinate-Mapping

- **Axes**:

  - **x, y, z**: 3D spatial axes.

  - **θ (theta)** and **φ (phi)**: Angular coordinates for elevation and azimuth, respectively.

- **Labels**:

  - **(θ_a, φ_a)**: Coordinates for the first visual element (e.g., a person playing a cello).

  - **(θ_b, φ_b)**: Coordinates for the second visual element (e.g., a person at a drum set).

  - **(θ_v0, φ_v0)**: Reference coordinates for the origin or baseline.

- **Visual Elements**:

  - Two images of individuals (one with a cello, one in a room with a drum set).

  - Dashed lines connecting angular coordinates to 3D space.
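
The relationship between the angular labels and the x, y, z axes can be sketched as a spherical-to-Cartesian conversion. This is a minimal illustration, not the diagram's confirmed convention: it assumes θ is elevation measured from the x-y plane and φ is azimuth measured from the x axis. The function names and the example angles are hypothetical.

```python
import math

def angles_to_unit_vector(theta, phi):
    """Convert elevation theta and azimuth phi (radians) to a unit vector.

    Assumed convention: theta is measured up from the x-y plane,
    phi is measured in the x-y plane starting at the x axis.
    """
    x = math.cos(theta) * math.cos(phi)
    y = math.cos(theta) * math.sin(phi)
    z = math.sin(theta)
    return (x, y, z)

def angular_distance(a, b):
    """Angle in radians between two (theta, phi) directions."""
    va = angles_to_unit_vector(*a)
    vb = angles_to_unit_vector(*b)
    dot = sum(p * q for p, q in zip(va, vb))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot)))

# Hypothetical directions for the two visual elements (not read from the figure).
source_a = (math.radians(10.0), math.radians(-30.0))
source_b = (math.radians(0.0), math.radians(45.0))

print(angles_to_unit_vector(*source_a))
print(math.degrees(angular_distance(source_a, source_b)))
```

Under this assumed convention, each (θ, φ) pair identifies a direction from the origin rather than a full 3D position; a distance along that direction would be needed to fix a point in space.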

#### Part (b): Learning and Testing

- **Stages**:

  1. **Stereo Training**: Inputs two stereo images (e.g., cello player and drummer).

  2. **Separation Training**: Processes outputs through networks (`Net_a`, `Net_v`) and STFT operations.

  3. **Testing**: Final output separation into `S_a` and `S_b`.

- **Networks**:

  - `Net_a`: Processes the first input (e.g., cello player).

  - `Net_v`: Processes the second input (e.g., drummer).

- **STFT Operations**:

  - **STFT(l + r)**: STFT of the sum of the left (`l`) and right (`r`) audio channels.

  - **STFT(l - r)**: STFT of the left channel minus the right channel.

- **Outputs**:

  - `S_a` and `S_b`: Separated audio components (e.g., cello and drum sounds).
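
The two STFT inputs can be illustrated with a short NumPy sketch. It is only an illustration of computing `STFT(l + r)` and `STFT(l - r)`; the frame length, hop size, Hann window, and synthetic two-tone stereo signal are all assumptions, and the `stft` helper is a hypothetical minimal implementation rather than the one used by the framework.

```python
import numpy as np

def stft(signal, frame_len=256, hop=128):
    """Minimal STFT: Hann-windowed frames followed by a real FFT.

    Returns a complex array of shape (num_frames, frame_len // 2 + 1).
    Assumes len(signal) >= frame_len; trailing samples that do not
    fill a whole frame are dropped.
    """
    window = np.hanning(frame_len)
    num_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(num_frames)
    ])
    return np.fft.rfft(frames, axis=1)

# Synthetic stereo mixture (illustrative only): two tones panned differently.
sr = 8000
t = np.arange(sr) / sr
tone_a = np.sin(2 * np.pi * 220 * t)
tone_b = np.sin(2 * np.pi * 660 * t)
left = 0.8 * tone_a + 0.2 * tone_b
right = 0.2 * tone_a + 0.8 * tone_b

spec_sum = stft(left + right)    # STFT(l + r)
spec_diff = stft(left - right)   # STFT(l - r)

# The STFT is linear, so the left channel's spectrogram can be recovered
# from the sum and difference spectrograms.
spec_left = 0.5 * (spec_sum + spec_diff)
print(spec_sum.shape, spec_diff.shape)
```

Because the sum and difference are an invertible recombination of the two channels, no stereo information is lost by feeding them to a network in place of `l` and `r`.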

---

### Detailed Analysis

#### Part (a): Visual-Coordinate-Mapping

- **Angular Coordinates**:

  - **(θ_a, φ_a)** and **(θ_b, φ_b)** map to distinct 3D positions, suggesting stereo vision or multi-view geometry.

  - **(θ_v0, φ_v0)** likely represents a reference point (e.g., camera origin).

- **Dashed Lines**: Indicate geometric relationships between angular coordinates and 3D positions.

#### Part (b): Learning and Testing

- **Stereo Training**:

  - Inputs two stereo images, processed by `Net_a` and `Net_v`.

- **Separation Training**:

  - The outputs of `Net_a` and `Net_v` are combined through an X-shaped (cross) connection.

  - The STFT inputs (`l + r` and `l - r`) suggest that sum and difference spectrograms serve as audio features for separation.

- **Testing Phase**:

  - Final outputs `S_a` and `S_b` represent isolated audio sources (e.g., cello and drum sounds).

---

### Key Observations

1. **Coordinate Mapping**: The angular coordinates (θ, φ) in part (a) imply a system for aligning visual and spatial data.

2. **Network Architecture**: The X-shaped connection between `Net_a` and `Net_v` suggests a fusion of features for separation.

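3. **STFT Operations**: The use of `l + r` and `l - r` indicates that the pipeline works with the sum and difference of the stereo channels, which emphasize content shared by both channels and inter-channel differences, respectively.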

4. **Output Separation**: `S_a` and `S_b` likely correspond to distinct audio sources derived from the input images.

---

### Interpretation

This framework appears to address **audio-visual source separation** using stereo imagery and neural networks. The 3D coordinate mapping in part (a) may enable spatial alignment of visual and audio data, while part (b) outlines a pipeline where:

- **Stereo Training** teaches the network to associate visual inputs with audio features.

- **Separation Training** refines the model to isolate individual audio sources (e.g., instruments).

- **Testing** validates the separation by producing `S_a` and `S_b`.

The STFT inputs (`l + r` and `l - r`) give time-frequency representations of the channel sum and difference, which likely supply the inter-channel cues used for separation. The angular coordinates in part (a) suggest a geometric foundation for aligning visual and auditory data in 3D space.

---

**Note**: No explicit numerical values or data tables are present. The diagram focuses on conceptual relationships and architectural design.