## Diagram: Iterative Self-Instruction and Preference-Based Training Pipeline

### Overview

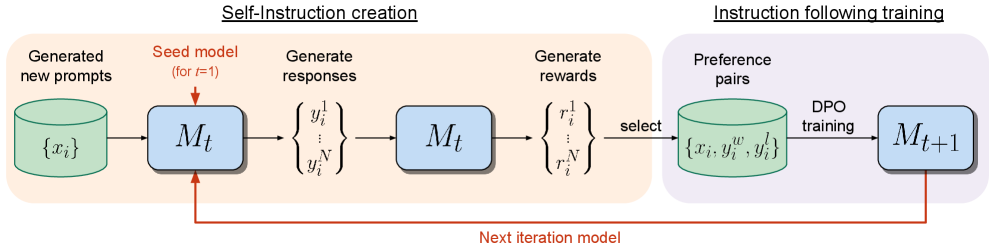

The image is a technical flowchart illustrating a two-stage, iterative machine learning training process. The pipeline consists of a "Self-Instruction creation" phase that generates training data, followed by an "Instruction following training" phase that refines the model. The process is cyclical, with the output model from one iteration becoming the input for the next.

### Components/Axes

The diagram is divided into two main colored regions:

1. **Left Region (Light Orange Background):** Titled **"Self-Instruction creation"**.

2. **Right Region (Light Purple Background):** Titled **"Instruction following training"**.

**Key Components & Labels:**

* **Data Stores (Cylinders):**
    * Leftmost cylinder: Label **"Generated new prompts"**. Contains the mathematical set notation **`{x_i}`**.
    * Right cylinder: Label **"Preference pairs"**. Contains the set notation **`{x_i, y_i^w, y_i^l}`**.

* **Model Blocks (Blue Rectangles):**
    * First model block (left): Labeled **`M_t`**. An annotation above it reads **"Seed model (for t=1)"** in red text.
    * Second model block (center): Also labeled **`M_t`**.
    * Final model block (right): Labeled **`M_{t+1}`**.

* **Process Labels (Text above arrows/flows):**
    * **"Generate responses"**: Positioned above the output of the first `M_t` block.
    * **"Generate rewards"**: Positioned above the output of the second `M_t` block.
    * **"select"**: Positioned on the arrow leading to the "Preference pairs" cylinder.
    * **"DPO training"**: Positioned above the arrow leading to the `M_{t+1}` block.

* **Mathematical Notation:**
    * Responses: A vertical set **`{y_i^1, ..., y_i^N}`**.
    * Rewards: A vertical set **`{r_i^1, ..., r_i^N}`**.

* **Flow Arrows:** Black arrows indicate the primary data flow. A prominent **red arrow** at the bottom creates a feedback loop, labeled **"Next iteration model"**, pointing from the `M_{t+1}` block back to the initial `M_t` block.

### Detailed Analysis

The process flows as follows:

1. **Self-Instruction Creation Phase:**
    * A set of generated prompts `{x_i}` is fed into the current model `M_t`.
    * `M_t` generates a set of N responses `{y_i^1, ..., y_i^N}` for each prompt.
    * These responses are fed back into the same model `M_t` (or a copy) to generate a corresponding set of rewards `{r_i^1, ..., r_i^N}`.
    * Based on these rewards, a selection process ("select") creates a dataset of "Preference pairs" `{x_i, y_i^w, y_i^l}`. Here, `y_i^w` likely denotes a "winning" or preferred response, and `y_i^l` a "losing" or less preferred response for prompt `x_i`.

2. **Instruction Following Training Phase:**
    * The curated preference pairs are used to perform **"DPO training"** (Direct Preference Optimization).
    * This training updates the model, resulting in a new, improved version: `M_{t+1}`.

3. **Iterative Loop:**
    * The red "Next iteration model" arrow indicates that `M_{t+1}` becomes the `M_t` for the next cycle, enabling continuous self-improvement.
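The data-creation steps above can be sketched in a few lines of Python. This is a minimal illustration, not the method behind the diagram: the diagram only says "select", so the rule used here (take the highest-reward response as `y_i^w` and the lowest-reward response as `y_i^l`, skipping prompts where all rewards tie) is an assumption. The names `PreferencePair`, `select_pair`, `build_preference_pairs`, and the `generate` and `score` callables standing in for the two uses of `M_t` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class PreferencePair:
    prompt: str    # x_i
    chosen: str    # y_i^w (assumed: highest-reward response)
    rejected: str  # y_i^l (assumed: lowest-reward response)


def select_pair(prompt: str, responses: List[str],
                rewards: List[float]) -> Optional[PreferencePair]:
    """Assumed 'select' rule: best vs. worst response, skipping ties."""
    best = max(range(len(responses)), key=lambda n: rewards[n])
    worst = min(range(len(responses)), key=lambda n: rewards[n])
    if rewards[best] == rewards[worst]:
        return None  # no usable preference signal for this prompt
    return PreferencePair(prompt, responses[best], responses[worst])


def build_preference_pairs(prompts: List[str],
                           generate: Callable[[str], str],
                           score: Callable[[str, str], float],
                           n: int = 4) -> List[PreferencePair]:
    """One pass of the left-hand region: prompts -> responses -> rewards -> pairs.

    `generate` and `score` are placeholders for M_t acting as
    response generator and as reward generator, respectively.
    """
    pairs = []
    for x in prompts:
        responses = [generate(x) for _ in range(n)]   # {y_i^1, ..., y_i^N}
        rewards = [score(x, y) for y in responses]    # {r_i^1, ..., r_i^N}
        pair = select_pair(x, responses, rewards)
        if pair is not None:
            pairs.append(pair)
    return pairs
```

The resulting list plays the role of the "Preference pairs" cylinder; a DPO trainer would consume it to produce `M_{t+1}`, which then supplies `generate` and `score` for the next pass.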

### Key Observations

* **Self-Data Generation:** The model `M_t` is used twice in the first phase: once to generate responses and once to generate rewards for those responses. This indicates a self-evaluating mechanism in which the model acts as its own judge.

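* **DPO as the Training Mechanism:** The pipeline explicitly uses Direct Preference Optimization (DPO), a method that fine-tunes a model directly on preference comparisons without training a separate reward model. DPO is usually applied to human preference data; in this pipeline the comparisons come from the model's own rewards instead.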

* **Closed-Loop System:** The entire process is designed to be autonomous and iterative. The model bootstraps its own training data and then improves upon it in successive generations (`t`, `t+1`, etc.).

* **Color Coding:** Green is used for data stores, blue for model instances, and red for critical annotations (seed model note, feedback loop).
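For the DPO step noted in the observations above, the standard per-pair DPO objective can be written as a short function. The sketch below assumes the sequence log-probabilities of `y_i^w` and `y_i^l` under the policy being trained and under a frozen reference model have already been computed; the diagram does not say which model serves as the reference (using `M_t` is one natural choice, stated here as an assumption), and `beta=0.1` is only an illustrative default. The function name `dpo_loss` and its parameters are hypothetical.

```python
import math


def dpo_loss(logp_w: float, logp_l: float,
             ref_logp_w: float, ref_logp_l: float,
             beta: float = 0.1) -> float:
    """Per-pair DPO loss: -log sigmoid(beta * (policy margin - reference margin)).

    logp_w / logp_l:         log-prob of chosen / rejected response under the policy
    ref_logp_w / ref_logp_l: the same quantities under the frozen reference model
    """
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch to avoid overflow in exp.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

The loss falls below `log 2` when the policy favors the chosen response more strongly than the reference does, and rises above it in the opposite case, which is what pushes `M_{t+1}` toward the responses the reward step preferred.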

### Interpretation

This diagram depicts a sophisticated framework for **autonomous AI self-improvement**. It outlines a method where a language model can iteratively enhance its own instruction-following capabilities with minimal human intervention.

* **Core Mechanism:** The system generates its own training examples (prompts and responses), evaluates the quality of those responses to create preference data, and then uses that data to fine-tune itself via DPO. This creates a virtuous cycle where better models generate better training data, leading to even better future models.

* **Significance:** This approach addresses a key challenge in AI scaling: the bottleneck of high-quality, human-labeled data. By generating and curating its own preference data, the model can in principle keep improving across iterations, limited by the quality of its own judgments and by available compute.

* **Underlying Assumption:** The process assumes that the model's own reward generation (`M_t` producing the rewards `{r_i^1, ..., r_i^N}`) is a reliable proxy for quality or human preference, which is a critical and non-trivial assumption for the system's success.

* **Peircean Reading:** The diagram is an **icon** of a learning process, visually representing the cyclical and iterative nature of growth. It is also an **index**, pointing to the specific technical components (DPO, preference pairs) that make this particular self-improvement loop possible. The red feedback loop is the most salient indexical sign, emphasizing recursion as the core principle.