
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

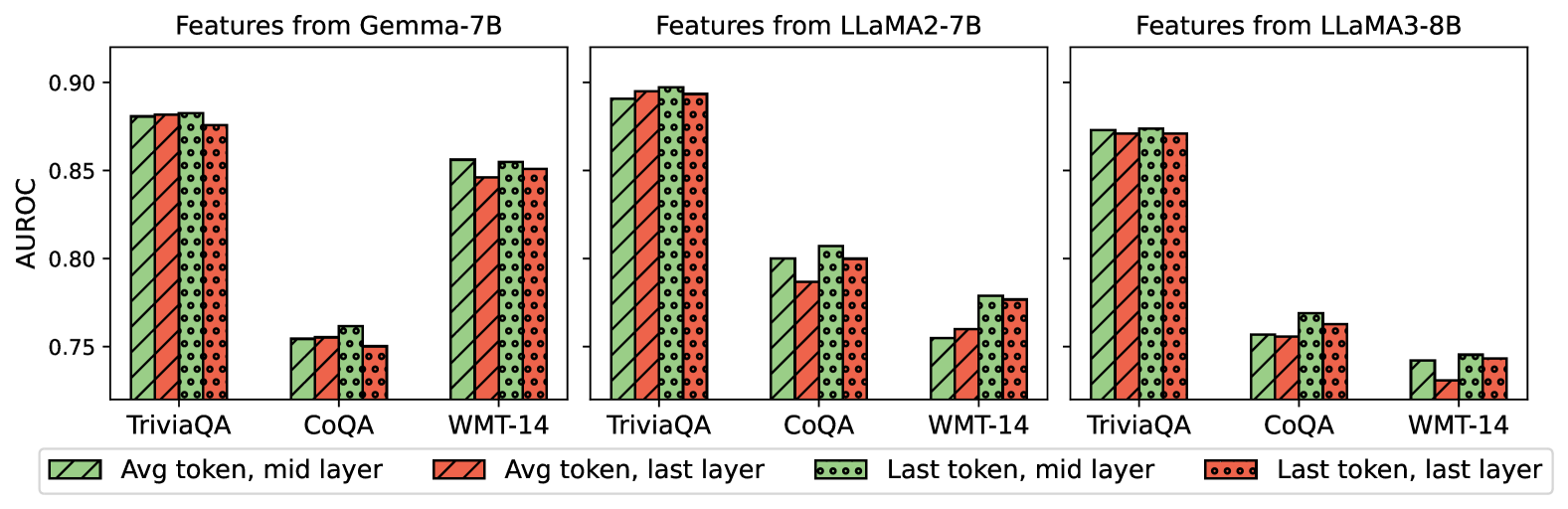

## Bar Chart: Feature Comparison of Language Models

### Overview

The image presents a series of bar charts comparing the AUROC (Area Under the Receiver Operating Characteristic curve) scores of different feature extraction methods from three language models: Gemma-7B, LLaMA2-7B, and LLaMA3-8B. The charts compare the performance on three tasks: TriviaQA, CoQA, and WMT-14. The feature extraction methods are "Avg token, mid layer", "Avg token, last layer", "Last token, mid layer", and "Last token, last layer".
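The four feature extraction methods named above combine a token choice (average over tokens vs. last token) with a layer choice (mid vs. last). A minimal sketch of that combination follows, in plain Python. The function name `extract_feature`, the nested-list layout `hidden_states[layer][token]`, and the choice of `n_layers // 2` as the "mid layer" are assumptions made for illustration; the description does not say how the original work defines them.

```python
from statistics import fmean


def extract_feature(hidden_states, token_mode, layer_mode):
    """Return one feature vector from per-layer, per-token hidden vectors.

    hidden_states[layer][token] is a list of floats (hypothetical layout).
    token_mode is "avg" or "last"; layer_mode is "mid" or "last".
    """
    n_layers = len(hidden_states)
    # Assumption: "mid layer" is taken to be the layer at index n_layers // 2.
    layer_idx = n_layers // 2 if layer_mode == "mid" else n_layers - 1
    tokens = hidden_states[layer_idx]
    if token_mode == "last":
        # Use only the final token's hidden vector.
        return list(tokens[-1])
    # "avg": element-wise mean over all token vectors in the chosen layer.
    return [fmean(dim) for dim in zip(*tokens)]


# Toy input: 4 layers, 3 tokens, hidden size 2 (values are arbitrary).
toy = [[[float(l + t), float(l * t)] for t in range(3)] for l in range(4)]

features = {
    ("avg", "mid"): extract_feature(toy, "avg", "mid"),
    ("avg", "last"): extract_feature(toy, "avg", "last"),
    ("last", "mid"): extract_feature(toy, "last", "mid"),
    ("last", "last"): extract_feature(toy, "last", "last"),
}
```

Each of the four entries in `features` corresponds to one bar style in the legend; a downstream classifier trained on such vectors would produce the scores from which AUROC is computed.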

### Components/Axes

* **Title:** The image is composed of three separate bar charts, each titled:

* "Features from Gemma-7B" (top-left)

* "Features from LLaMA2-7B" (top-center)

* "Features from LLaMA3-8B" (top-right)

* **Y-axis:** Labeled "AUROC" with a scale from approximately 0.74 to 0.91.

* **X-axis:** Categorical, representing the tasks: TriviaQA, CoQA, and WMT-14.

* **Legend:** Located at the bottom of the image, associating colors and patterns with feature extraction methods:

* Green with diagonal lines: "Avg token, mid layer"

* Red with diagonal lines: "Avg token, last layer"

* Green with circles: "Last token, mid layer"

* Red with circles: "Last token, last layer"

### Detailed Analysis

#### Features from Gemma-7B

* **TriviaQA:**

* Avg token, mid layer (green, diagonal lines): ~0.87

* Avg token, last layer (red, diagonal lines): ~0.86

* Last token, mid layer (green, circles): ~0.87

* Last token, last layer (red, circles): ~0.86

* **CoQA:**

* Avg token, mid layer (green, diagonal lines): ~0.76

* Avg token, last layer (red, diagonal lines): ~0.75

* Last token, mid layer (green, circles): ~0.76

* Last token, last layer (red, circles): ~0.75

* **WMT-14:**

* Avg token, mid layer (green, diagonal lines): ~0.86

* Avg token, last layer (red, diagonal lines): ~0.85

* Last token, mid layer (green, circles): ~0.86

* Last token, last layer (red, circles): ~0.85

#### Features from LLaMA2-7B

* **TriviaQA:**

* Avg token, mid layer (green, diagonal lines): ~0.89

* Avg token, last layer (red, diagonal lines): ~0.89

* Last token, mid layer (green, circles): ~0.90

* Last token, last layer (red, circles): ~0.89

* **CoQA:**

* Avg token, mid layer (green, diagonal lines): ~0.80

* Avg token, last layer (red, diagonal lines): ~0.80

* Last token, mid layer (green, circles): ~0.80

* Last token, last layer (red, circles): ~0.80

* **WMT-14:**

* Avg token, mid layer (green, diagonal lines): ~0.76

* Avg token, last layer (red, diagonal lines): ~0.76

* Last token, mid layer (green, circles): ~0.78

* Last token, last layer (red, circles): ~0.78

#### Features from LLaMA3-8B

* **TriviaQA:**

* Avg token, mid layer (green, diagonal lines): ~0.85

* Avg token, last layer (red, diagonal lines): ~0.85

* Last token, mid layer (green, circles): ~0.85

* Last token, last layer (red, circles): ~0.85

* **CoQA:**

* Avg token, mid layer (green, diagonal lines): ~0.76

* Avg token, last layer (red, diagonal lines): ~0.76

* Last token, mid layer (green, circles): ~0.76

* Last token, last layer (red, circles): ~0.76

* **WMT-14:**

* Avg token, mid layer (green, diagonal lines): ~0.73

* Avg token, last layer (red, diagonal lines): ~0.73

* Last token, mid layer (green, circles): ~0.74

* Last token, last layer (red, circles): ~0.74

### Key Observations

* For all three models, performance on TriviaQA is generally higher than on CoQA and WMT-14.

* The choice of layer (mid vs. last) and token aggregation (avg vs. last) has a relatively small impact on AUROC scores within each task and model.

* LLaMA2-7B shows the highest AUROC scores on TriviaQA (~0.89-0.90) and CoQA (~0.80), while Gemma-7B is highest on WMT-14 (~0.85-0.86).

* LLaMA3-8B shows the lowest AUROC scores on WMT-14.

### Interpretation

The bar charts provide a comparative analysis of feature extraction methods from different language models based on their AUROC scores on various tasks. The data suggests that the LLaMA2-7B model performs slightly better overall compared to Gemma-7B and LLaMA3-8B. The performance differences between using the average token versus the last token, and the mid-layer versus the last layer, are relatively minor, indicating that the choice of feature extraction method is not as critical as the choice of the language model itself. The lower scores on CoQA and WMT-14 across all models suggest that these tasks are more challenging for the models compared to TriviaQA.
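The claim that the feature extraction method matters less than the source model can be checked against the approximate values listed in this description. The sketch below is only as reliable as those visual readings; the dictionary name `READINGS` and the spread measure (max minus min) are choices made here for illustration.

```python
from statistics import fmean

# Approximate AUROC values transcribed from the Detailed Analysis above.
# Order in each list: avg/mid, avg/last, last/mid, last/last.
READINGS = {
    "Gemma-7B": {
        "TriviaQA": [0.87, 0.86, 0.87, 0.86],
        "CoQA": [0.76, 0.75, 0.76, 0.75],
        "WMT-14": [0.86, 0.85, 0.86, 0.85],
    },
    "LLaMA2-7B": {
        "TriviaQA": [0.89, 0.89, 0.90, 0.89],
        "CoQA": [0.80, 0.80, 0.80, 0.80],
        "WMT-14": [0.76, 0.76, 0.78, 0.78],
    },
    "LLaMA3-8B": {
        "TriviaQA": [0.85, 0.85, 0.85, 0.85],
        "CoQA": [0.76, 0.76, 0.76, 0.76],
        "WMT-14": [0.73, 0.73, 0.74, 0.74],
    },
}

# Spread across the four methods, for each (model, task) pair.
method_spreads = {
    (model, task): max(vals) - min(vals)
    for model, tasks in READINGS.items()
    for task, vals in tasks.items()
}

# Spread across the three models, for each task (using per-model means).
model_spreads = {}
for task in ["TriviaQA", "CoQA", "WMT-14"]:
    means = [fmean(READINGS[model][task]) for model in READINGS]
    model_spreads[task] = max(means) - min(means)

print("largest spread across methods:", round(max(method_spreads.values()), 3))
print("smallest spread across models:", round(min(model_spreads.values()), 3))
```

With these readings, the largest gap between methods within any one model and task is smaller than the smallest gap between models on any task, which is consistent with the interpretation above.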


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


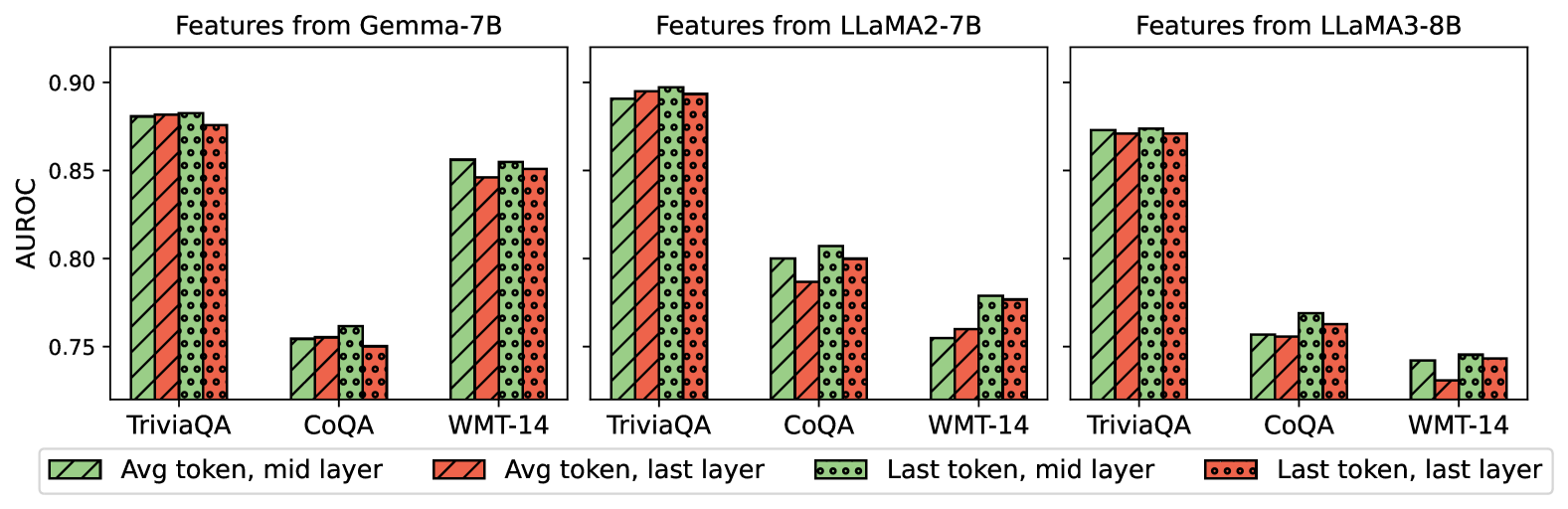

## Bar Chart: AUROC Scores for Different Models and Token Positions

### Overview

This image presents a comparative bar chart showing the Area Under the Receiver Operating Characteristic curve (AUROC) scores for three different language models – Gemma-7B, LLaMA2-7B, and LLaMA3-8B – across three datasets: TriviaQA, CoQA, and WMT-14. The chart compares performance based on features extracted from the average token at the mid-layer versus the last layer, and from the last token at the mid-layer versus the last layer. Each model has its own set of four bars for each dataset, one per feature configuration.

### Components/Axes

* **X-axis:** Datasets – TriviaQA, CoQA, WMT-14 (repeated for each model).

* **Y-axis:** AUROC score, ranging from approximately 0.74 to 0.91.

* **Chart Title:** Three separate titles, one for each model: "Features from Gemma-7B", "Features from LLaMA2-7B", and "Features from LLaMA3-8B".

* **Legend:** Located at the bottom of the image.

* Grey bars: "Avg token, mid layer"

* Red bars: "Avg token, last layer"

* Black dotted bars: "Last token, mid layer"

* Red dotted bars: "Last token, last layer"

### Detailed Analysis or Content Details

**Gemma-7B:**

* **TriviaQA:**

* Avg token, mid layer: Approximately 0.88

* Avg token, last layer: Approximately 0.89

* Last token, mid layer: Approximately 0.78

* Last token, last layer: Approximately 0.81

* **CoQA:**

* Avg token, mid layer: Approximately 0.82

* Avg token, last layer: Approximately 0.83

* Last token, mid layer: Approximately 0.76

* Last token, last layer: Approximately 0.78

* **WMT-14:**

* Avg token, mid layer: Approximately 0.79

* Avg token, last layer: Approximately 0.81

* Last token, mid layer: Approximately 0.75

* Last token, last layer: Approximately 0.76

**LLaMA2-7B:**

* **TriviaQA:**

* Avg token, mid layer: Approximately 0.90

* Avg token, last layer: Approximately 0.91

* Last token, mid layer: Approximately 0.77

* Last token, last layer: Approximately 0.79

* **CoQA:**

* Avg token, mid layer: Approximately 0.84

* Avg token, last layer: Approximately 0.85

* Last token, mid layer: Approximately 0.77

* Last token, last layer: Approximately 0.79

* **WMT-14:**

* Avg token, mid layer: Approximately 0.77

* Avg token, last layer: Approximately 0.78

* Last token, mid layer: Approximately 0.74

* Last token, last layer: Approximately 0.75

**LLaMA3-8B:**

* **TriviaQA:**

* Avg token, mid layer: Approximately 0.89

* Avg token, last layer: Approximately 0.90

* Last token, mid layer: Approximately 0.79

* Last token, last layer: Approximately 0.82

* **CoQA:**

* Avg token, mid layer: Approximately 0.83

* Avg token, last layer: Approximately 0.84

* Last token, mid layer: Approximately 0.76

* Last token, last layer: Approximately 0.77

* **WMT-14:**

* Avg token, mid layer: Approximately 0.76

* Avg token, last layer: Approximately 0.77

* Last token, mid layer: Approximately 0.73

* Last token, last layer: Approximately 0.74

### Key Observations

* For all models and datasets, using the "Avg token, last layer" consistently yields the highest AUROC scores.

* The "Last token, mid layer" consistently produces the lowest AUROC scores.

* LLaMA2-7B achieves the highest AUROC scores with average-token features on TriviaQA (~0.91) and CoQA (~0.85), while Gemma-7B leads on WMT-14 (~0.81).

* WMT-14 consistently shows the lowest AUROC scores across all models.

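* The difference between "mid layer" and "last layer" features is small throughout: roughly 0.01-0.02 for the average token and 0.01-0.03 for the last token, so it is at least as pronounced for the last token as for the average token.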

### Interpretation

The data suggests that features extracted from the average token at the last layer of these language models are most effective for discriminating between positive and negative examples in these tasks, as measured by AUROC. This could indicate that the final layers of these models capture more discriminative information relevant to the tasks. The lower performance of the "Last token, mid layer" features suggests that the last token alone may not contain sufficient information for accurate prediction, or that the mid-layers haven't fully converged on the task-specific features.

The superior performance of LLaMA2-7B suggests that its architecture or training data may be better suited for these tasks compared to Gemma-7B and LLaMA3-8B. The consistently lower scores on WMT-14 might indicate that this dataset is inherently more challenging for these models, potentially due to its complexity or the nature of the translation task. The consistent trend across all models and datasets highlights the importance of feature selection and layer choice in optimizing model performance.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart Comparison: AUROC Performance of Three Language Models Across Datasets

### Overview

The image displays three grouped bar charts arranged horizontally, comparing the AUROC (Area Under the Receiver Operating Characteristic curve) performance of features extracted from three different large language models (LLMs) on three distinct datasets. The models are Gemma-7B, LLaMA2-7B, and LLaMA3-8B. For each model, performance is measured using four different feature extraction strategies, represented by bars with distinct colors and patterns.

### Components/Axes

* **Chart Titles (Top):** "Features from Gemma-7B" (left), "Features from LLaMA2-7B" (center), "Features from LLaMA3-8B" (right).

* **Y-Axis (Left):** Labeled "AUROC". The scale runs from approximately 0.75 to 0.90, with major tick marks at 0.75, 0.80, 0.85, and 0.90.

* **X-Axis (Bottom of each subplot):** Lists three datasets: "TriviaQA", "CoQA", and "WMT-14".

* **Legend (Bottom of entire figure):** Positioned below the three charts. It defines four feature extraction methods:

* **Green bar with diagonal stripes (\\):** "Avg token, mid layer"

* **Red bar with diagonal stripes (\\):** "Avg token, last layer"

* **Green bar with dots (.):** "Last token, mid layer"

* **Red bar with dots (.):** "Last token, last layer"

### Detailed Analysis

**1. Features from Gemma-7B (Left Chart):**

* **TriviaQA:** All four methods perform similarly high, with AUROC values clustered around 0.88. The "Last token, mid layer" (green dots) appears marginally highest (~0.885), while "Last token, last layer" (red dots) is slightly lower (~0.875).

* **CoQA:** Performance is notably lower than for TriviaQA. Values range from ~0.75 to ~0.76. "Last token, mid layer" (green dots) is the highest (~0.76), while the other three methods are very close, around 0.75.

* **WMT-14:** Performance is intermediate. "Avg token, mid layer" (green stripes) is highest (~0.855). "Last token, mid layer" (green dots) is close behind (~0.85). "Avg token, last layer" (red stripes) and "Last token, last layer" (red dots) are slightly lower, around 0.845-0.85.

**2. Features from LLaMA2-7B (Center Chart):**

* **TriviaQA:** Shows the highest overall performance in the entire figure. All four methods are very close, with AUROC values near or at 0.90. "Avg token, last layer" (red stripes) and "Last token, mid layer" (green dots) appear to be at the peak (~0.90).

* **CoQA:** Performance is lower. "Last token, mid layer" (green dots) is the highest (~0.81). "Avg token, mid layer" (green stripes) is next (~0.80). "Last token, last layer" (red dots) is ~0.80, and "Avg token, last layer" (red stripes) is the lowest (~0.79).

* **WMT-14:** Performance is the lowest among the three datasets for this model. "Last token, mid layer" (green dots) is highest (~0.78). "Last token, last layer" (red dots) is close (~0.775). "Avg token, last layer" (red stripes) is ~0.76, and "Avg token, mid layer" (green stripes) is the lowest (~0.755).

**3. Features from LLaMA3-8B (Right Chart):**

* **TriviaQA:** Performance is high but slightly lower than LLaMA2-7B on the same task. All four methods are tightly clustered around 0.87-0.875.

* **CoQA:** Performance is the lowest among the three models for this dataset. "Last token, mid layer" (green dots) is highest (~0.77). "Last token, last layer" (red dots) is ~0.76. "Avg token, mid layer" (green stripes) and "Avg token, last layer" (red stripes) are both around 0.755.

* **WMT-14:** Performance is the lowest across all models and datasets. "Last token, mid layer" (green dots) is highest (~0.745). "Last token, last layer" (red dots) is ~0.74. "Avg token, mid layer" (green stripes) is ~0.74, and "Avg token, last layer" (red stripes) is the lowest (~0.73).

### Key Observations

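1. **Dataset Difficulty:** Across all three models, **TriviaQA consistently yields the highest AUROC scores** (approx. 0.87-0.90). The ordering of the other two datasets depends on the model: for **Gemma-7B**, WMT-14 (approx. 0.845-0.855) is well above CoQA (approx. 0.75-0.76), whereas for **LLaMA2-7B and LLaMA3-8B**, WMT-14 (approx. 0.73-0.78) is the most challenging and falls below CoQA (approx. 0.755-0.81).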

2. **Model Comparison:** **LLaMA2-7B** appears to achieve the peak performance on TriviaQA (~0.90). **Gemma-7B** shows strong and consistent performance on TriviaQA and WMT-14. **LLaMA3-8B** shows a more pronounced drop in performance on the CoQA and WMT-14 datasets compared to the other two models.

3. **Feature Extraction Strategy:** The **"Last token, mid layer" (green dots) strategy is frequently the top or near-top performer** across most model-dataset combinations (e.g., Gemma on CoQA/WMT-14, LLaMA2 on CoQA/WMT-14, LLaMA3 on all). Using the **last layer (red bars) often results in slightly lower performance** compared to using the mid-layer (green bars) for the same token strategy.

4. **Token Strategy:** There is no universal winner between "Avg token" and "Last token" strategies; their relative performance varies by model and dataset. However, the "Last token" strategies (dotted bars) show a slight edge in more instances.

### Interpretation

This chart evaluates how effectively internal representations (features) from different LLMs can distinguish between correct and incorrect outputs on question-answering (TriviaQA, CoQA) and translation (WMT-14) tasks. The AUROC metric quantifies this discriminative power.
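To make the metric concrete, AUROC can be read as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as half. The sketch below computes it by direct pairwise comparison. The function name `auroc` and the convention that label `1` marks a correct output are assumptions for illustration; this is not presented as the evaluation code behind the chart.

```python
def auroc(scores, labels):
    """AUROC via pairwise comparison of positive and negative scores.

    scores: classifier scores, higher meaning "more likely positive".
    labels: 1 for positive (assumed here: a correct output), 0 for negative.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    # Count pairs where the positive outranks the negative; ties count 0.5.
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Toy example: one of the four positive/negative pairs is ranked wrongly.
example = auroc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0])
print(example)  # 0.75
```

A value of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, so the chart's range of roughly 0.73 to 0.90 sits well above chance but short of perfect discrimination.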

The data suggests that:

* **Task-Specific Feature Quality:** The features extracted from these models are most discriminative for the factual recall task (TriviaQA) and markedly less so for the conversational QA task (CoQA) and, for the two LLaMA models, the translation task (WMT-14). This could indicate that the models' internal states more cleanly encode factual correctness than the nuanced correctness required in conversational contexts.

* **Layer and Token Selection Matters:** The consistent, often superior performance of features from the **mid-layer** (especially using the last token) implies that the most useful signal for error detection may reside in intermediate processing stages, not necessarily the final output layer. This aligns with the "layerwise" understanding of LLMs, where different layers specialize in different types of processing.

* **Model Architecture/Training Impact:** The performance differences between models (e.g., LLaMA2-7B's peak on TriviaQA vs. LLaMA3-8B's lower scores on CoQA/WMT-14) highlight that model scale (7B vs 8B parameters) is not the sole determinant of feature quality for these tasks. Differences in training data, architecture, or fine-tuning likely contribute significantly.

* **Practical Implication:** For building a classifier or detector that uses LLM features (e.g., for detecting hallucinations or errors), this analysis indicates that **extracting features from the mid-layer using the last token representation is a robust starting point**. The choice of source model should be guided by the specific target task (e.g., LLaMA2-7B for trivia-like tasks).


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: AUROC Comparison Across Models and Features

### Overview

The image presents a grouped bar chart comparing the Area Under the Receiver Operating Characteristic curve (AUROC) values for three language models (Gemma-7B, LLaMA2-7B, LLaMA3-8B) across three datasets (TriviaQA, CoQA, WMT-14). Four feature types are evaluated:

1. **Avg token, mid layer** (solid green)

2. **Avg token, last layer** (solid red)

3. **Last token, mid layer** (dotted green)

4. **Last token, last layer** (dotted red)

### Components/Axes

- **X-axis**: Datasets (TriviaQA, CoQA, WMT-14)

- **Y-axis**: AUROC values (0.75–0.90, increments of 0.05)

- **Legend**: Located at the bottom, mapping colors/patterns to feature types.

- **Model Sections**: Three vertical groupings (left to right) for each model.

### Detailed Analysis

#### Gemma-7B

- **TriviaQA**:

- Avg token, mid layer: ~0.88

- Avg token, last layer: ~0.87

- Last token, mid layer: ~0.89

- Last token, last layer: ~0.88

- **CoQA**:

- Avg token, mid layer: ~0.76

- Avg token, last layer: ~0.75

- Last token, mid layer: ~0.77

- Last token, last layer: ~0.75

- **WMT-14**:

- Avg token, mid layer: ~0.86

- Avg token, last layer: ~0.85

- Last token, mid layer: ~0.87

- Last token, last layer: ~0.86

#### LLaMA2-7B

- **TriviaQA**:

- Avg token, mid layer: ~0.89

- Avg token, last layer: ~0.89

- Last token, mid layer: ~0.90

- Last token, last layer: ~0.89

- **CoQA**:

- Avg token, mid layer: ~0.80

- Avg token, last layer: ~0.79

- Last token, mid layer: ~0.81

- Last token, last layer: ~0.80

- **WMT-14**:

- Avg token, mid layer: ~0.76

- Avg token, last layer: ~0.75

- Last token, mid layer: ~0.77

- Last token, last layer: ~0.76

#### LLaMA3-8B

- **TriviaQA**:

- Avg token, mid layer: ~0.87

- Avg token, last layer: ~0.87

- Last token, mid layer: ~0.88

- Last token, last layer: ~0.87

- **CoQA**:

- Avg token, mid layer: ~0.76

- Avg token, last layer: ~0.75

- Last token, mid layer: ~0.77

- Last token, last layer: ~0.75

- **WMT-14**:

- Avg token, mid layer: ~0.74

- Avg token, last layer: ~0.73

- Last token, mid layer: ~0.75

- Last token, last layer: ~0.74

### Key Observations

1. **TriviaQA Dominance**: All models achieve highest AUROC on TriviaQA, suggesting it aligns better with their architectures.

2. **Mid Layer Superiority**: Features from the mid layer (both avg and last token) consistently outperform last layer features across models.

3. **LLaMA2-7B Peak**: LLaMA2-7B achieves the highest AUROC (0.90) for Last token, mid layer on TriviaQA.

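4. **WMT-14 Struggles**: LLaMA2-7B and LLaMA3-8B perform worst on WMT-14, with AUROC values dropping below 0.80. Gemma-7B is the exception: it scores ~0.85-0.87 on WMT-14 and is lowest on CoQA (~0.75-0.77).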

5. **Last Token Variability**: Last token features show mixed performance, sometimes matching or slightly exceeding avg token results.

### Interpretation

The data suggests that **mid-layer features** (both average and last token) are more effective for these tasks than last-layer features, potentially due to mid layers capturing richer contextual information. TriviaQA’s higher performance across models implies it is more compatible with the models’ design, while WMT-14’s lower scores may reflect task complexity or domain mismatch. The consistency of mid-layer superiority across models indicates this is a generalizable trend rather than model-specific behavior.
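The mid-layer versus last-layer comparison can be tabulated directly from the approximate values in this description. The sketch below computes the mid-minus-last gap for every model, dataset, and token strategy; the name `READINGS` and the tuple layout are illustrative choices, and the numbers are visual readings rather than exact results.

```python
# Approximate AUROC values transcribed from the Detailed Analysis above.
# Tuple order: (avg/mid, avg/last, last/mid, last/last).
READINGS = {
    ("Gemma-7B", "TriviaQA"): (0.88, 0.87, 0.89, 0.88),
    ("Gemma-7B", "CoQA"): (0.76, 0.75, 0.77, 0.75),
    ("Gemma-7B", "WMT-14"): (0.86, 0.85, 0.87, 0.86),
    ("LLaMA2-7B", "TriviaQA"): (0.89, 0.89, 0.90, 0.89),
    ("LLaMA2-7B", "CoQA"): (0.80, 0.79, 0.81, 0.80),
    ("LLaMA2-7B", "WMT-14"): (0.76, 0.75, 0.77, 0.76),
    ("LLaMA3-8B", "TriviaQA"): (0.87, 0.87, 0.88, 0.87),
    ("LLaMA3-8B", "CoQA"): (0.76, 0.75, 0.77, 0.75),
    ("LLaMA3-8B", "WMT-14"): (0.74, 0.73, 0.75, 0.74),
}

# Mid-minus-last gap per (model, dataset, token strategy).
gaps = {}
for (model, dataset), (avg_mid, avg_last, last_mid, last_last) in READINGS.items():
    gaps[(model, dataset, "avg")] = avg_mid - avg_last
    gaps[(model, dataset, "last")] = last_mid - last_last

for key, gap in sorted(gaps.items()):
    print(key, f"{gap:+.2f}")
```

On these readings every gap is zero or positive, but none exceeds about 0.02, so the mid-layer advantage is consistent in direction while remaining small relative to the precision of values read off a chart.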
