## Diagram: Hierarchical Processing System with Multi-Layered Nodes

### Overview

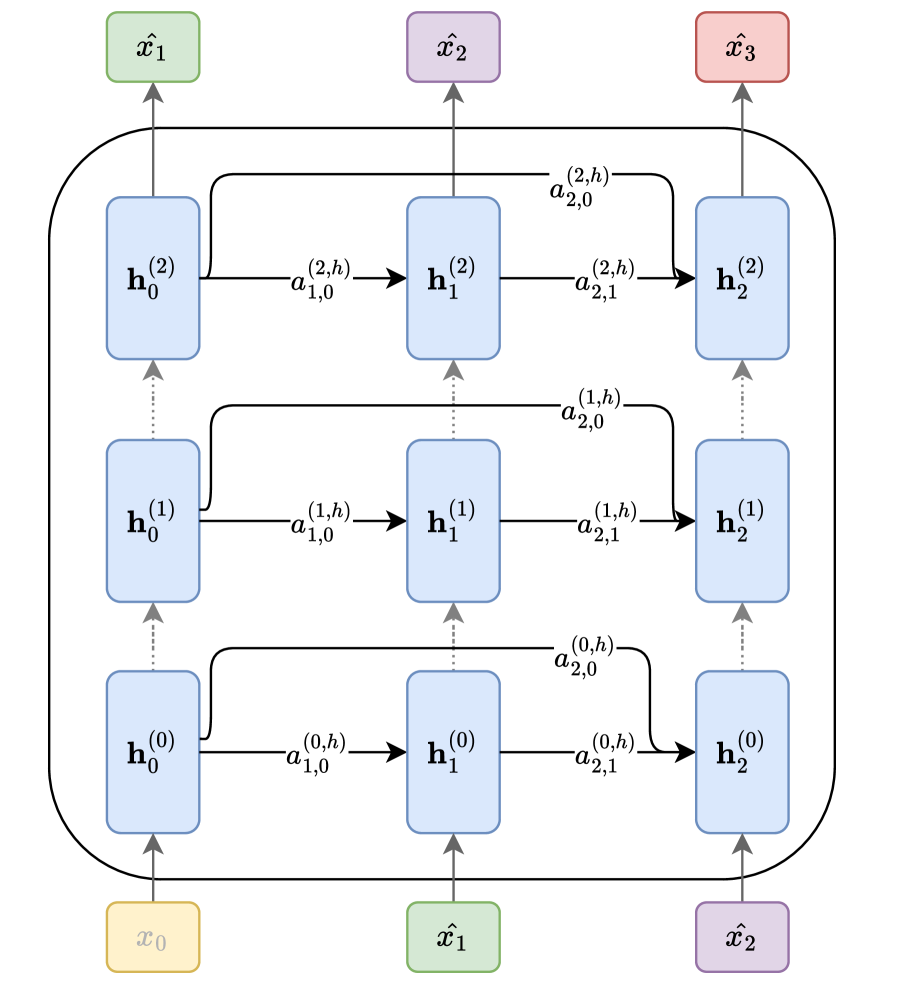

The diagram illustrates a hierarchical processing system with three hidden nodes (`h^(0)`, `h^(1)`, `h^(2)`), treated below as layers, and four input nodes (`x^0`, `x^1`, `x^2`, `x^3`). Directed arrows connect the nodes and carry parameter labels such as `a^(i,j)` and `a^(k,h)`. The system appears to model a sequence of transformations: the inputs feed into the lowest hidden layer, and information propagates from there to the higher layers.

---

### Components

- **Nodes**:
  - **Input Nodes**:
    - `x^0` (yellow, bottom-left)
    - `x^1` (green, bottom-center)
    - `x^2` (purple, bottom-right)
    - `x^3` (red, top-right)
  - **Hidden Layers**:
    - `h^(0)` (blue, middle-left)
    - `h^(1)` (blue, middle-center)
    - `h^(2)` (blue, middle-right)

- **Arrows**:
  - Labeled with parameters such as `a^(0,h)`, `a^(1,h)`, `a^(2,h)`, `a^(1,0)`, `a^(2,0)`, etc.
  - Connect the inputs to the hidden layers and the hidden layers to one another.

---

### Detailed Analysis

1. **Input to Hidden Layer Flow**:
   - `x^0` → `h^(0)` via `a^(0,h)`
   - `x^1` → `h^(0)` via `a^(1,h)`
   - `x^2` → `h^(0)` via `a^(2,h)`
   - `x^3` → `h^(0)` via `a^(3,h)` (implied by the pattern; this arrow is not labeled in the diagram)

2. **Inter-Layer Connections**:
   - `h^(0)` → `h^(1)` via `a^(0,h)` and `a^(1,h)`
   - `h^(1)` → `h^(2)` via `a^(0,h)` and `a^(1,h)`
   - `h^(0)` → `h^(2)` via `a^(2,h)` (a direct connection that bypasses `h^(1)`)

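3. **Parameter Labels**:
   - Arrows between hidden nodes reuse the labels `a^(0,h)`, `a^(1,h)`, and `a^(2,h)`. One reading is that `k` in `a^(k,h)` denotes the source layer and `h` the target layer, but this does not fit every arrow: `a^(0,h)` also labels `h^(1)` → `h^(2)`, whose source is `h^(1)`. The index `k` may therefore identify a parameter shared across several arrows rather than a layer.
   - Arrows from inputs to `h^(0)` use `a^(i,h)`, where `i` matches the input index.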
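The connections listed above can be written down as a directed edge list. The sketch below is a minimal illustration, not part of the original figure: node and label strings are copied from the description, the `inferred` flag marks the `x^3` arrow whose label is only implied, and the names `EDGES`, `predecessors`, and `evaluation_order` are hypothetical. It uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to confirm that the arrows form no loop and to derive an order in which every node comes after all of its sources.

```python
from collections import defaultdict
from graphlib import TopologicalSorter

# (source, target, label, inferred) for every arrow in the analysis.
# The x^3 -> h^(0) arrow is unlabeled in the figure; a^(3,h) is inferred.
EDGES = [
    ("x^0", "h^(0)", "a^(0,h)", False),
    ("x^1", "h^(0)", "a^(1,h)", False),
    ("x^2", "h^(0)", "a^(2,h)", False),
    ("x^3", "h^(0)", "a^(3,h)", True),
    ("h^(0)", "h^(1)", "a^(0,h)", False),
    ("h^(0)", "h^(1)", "a^(1,h)", False),
    ("h^(1)", "h^(2)", "a^(0,h)", False),
    ("h^(1)", "h^(2)", "a^(1,h)", False),
    ("h^(0)", "h^(2)", "a^(2,h)", False),
]


def predecessors(edges):
    """Map each node that has incoming arrows to the set of its source nodes."""
    preds = defaultdict(set)
    for src, dst, _label, _inferred in edges:
        preds[dst].add(src)
    return dict(preds)


def evaluation_order(edges):
    """List nodes so that each one appears after every node that feeds it."""
    # TopologicalSorter also registers source-only nodes (the inputs)
    # and raises graphlib.CycleError if the arrows form a loop.
    return list(TopologicalSorter(predecessors(edges)).static_order())


if __name__ == "__main__":
    for src, dst, label, inferred in EDGES:
        note = " (inferred)" if inferred else ""
        print(f"{src} -> {dst} via {label}{note}")
    print("evaluation order:", evaluation_order(EDGES))
```

Parallel arrows with different labels, such as the two `h^(0)` → `h^(1)` arrows, collapse to a single dependency here, because ordering only needs to know which nodes feed which.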

---

### Key Observations

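- **Layered Structure**: The system is organized into three hierarchical layers. Inputs feed into the lowest layer (`h^(0)`), and information propagates from there through `h^(1)` to `h^(2)`, with one arrow running directly from `h^(0)` to `h^(2)`.

- **Parameter Consistency**: Labels of the form `a^(k,h)` recur across connections, and the same label (for example `a^(0,h)`) appears on several arrows. This suggests a uniform or shared transformation rule (e.g., weights in a neural network).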

- **Missing Labels**: The arrow from `x^3` to `h^(0)` is not explicitly labeled in the diagram, but the pattern implies `a^(3,h)`.
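The two observations about labels can be checked mechanically. This hypothetical sketch stores the arrows with `None` for the unlabeled `x^3` arrow, fills that gap using the `a^(i,h)` pattern seen on the other input arrows, and then groups arrows by label so that reused labels become visible. The function names are illustrative, and reading a repeated label as a shared parameter is an assumption, not something the diagram states.

```python
import re
from collections import defaultdict

# (source, target, label); None marks an arrow drawn without a label.
EDGES = [
    ("x^0", "h^(0)", "a^(0,h)"),
    ("x^1", "h^(0)", "a^(1,h)"),
    ("x^2", "h^(0)", "a^(2,h)"),
    ("x^3", "h^(0)", None),  # unlabeled in the figure
    ("h^(0)", "h^(1)", "a^(0,h)"),
    ("h^(0)", "h^(1)", "a^(1,h)"),
    ("h^(1)", "h^(2)", "a^(0,h)"),
    ("h^(1)", "h^(2)", "a^(1,h)"),
    ("h^(0)", "h^(2)", "a^(2,h)"),
]

INPUT_NODE = re.compile(r"x\^(\d+)$")  # matches "x^0", "x^1", ...


def fill_missing_labels(edges):
    """Give an unlabeled arrow leaving input x^i the label a^(i,h)."""
    filled = []
    for src, dst, label in edges:
        match = INPUT_NODE.match(src)
        if label is None and match:
            label = f"a^({match.group(1)},h)"
        filled.append((src, dst, label))
    return filled


def edges_by_label(edges):
    """Group (source, target) pairs under each label to expose reuse."""
    groups = defaultdict(list)
    for src, dst, label in edges:
        groups[label].append((src, dst))
    return dict(groups)


if __name__ == "__main__":
    groups = edges_by_label(fill_missing_labels(EDGES))
    for label, pairs in sorted(groups.items()):
        print(label, pairs)
```

With these edges, `a^(0,h)` and `a^(1,h)` each label three arrows and `a^(2,h)` labels two, while the filled-in `a^(3,h)` labels only the `x^3` arrow.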

---

### Interpretation

This diagram likely represents a **multi-layered neural network** or **hierarchical model** where:

- **Inputs** (`x^0`, `x^1`, `x^2`, `x^3`) are processed through **hidden layers** (`h^(0)`, `h^(1)`, `h^(2)`).

- **Parameters** (`a^(i,j)`) define the relationships between nodes, possibly representing weights or coefficients in a computational model.

- The **flow direction** (bottom-to-top) suggests a bottom-up processing mechanism, common in deep learning architectures.
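Under the neural-network reading, the diagram can be evaluated as a small feed-forward computation. The sketch below is one possible interpretation that rests on assumptions the diagram does not confirm: each label `a^(k,h)` is treated as a single scalar weight shared by every arrow that carries it, `a^(3,h)` is taken from the inferred pattern, a node's value is `tanh` of the weighted sum of its incoming values, and the input values and weights in the usage example are made up for illustration. The function name `forward` is hypothetical.

```python
import math
from collections import defaultdict
from graphlib import TopologicalSorter

# (source, target, label) for each arrow in the description.
# a^(3,h) on the x^3 arrow is inferred from the pattern, not drawn.
EDGES = [
    ("x^0", "h^(0)", "a^(0,h)"),
    ("x^1", "h^(0)", "a^(1,h)"),
    ("x^2", "h^(0)", "a^(2,h)"),
    ("x^3", "h^(0)", "a^(3,h)"),
    ("h^(0)", "h^(1)", "a^(0,h)"),
    ("h^(0)", "h^(1)", "a^(1,h)"),
    ("h^(1)", "h^(2)", "a^(0,h)"),
    ("h^(1)", "h^(2)", "a^(1,h)"),
    ("h^(0)", "h^(2)", "a^(2,h)"),
]


def forward(inputs, weights, edges=EDGES, activation=math.tanh):
    """Evaluate each hidden node once all of its sources are known.

    Assumptions (not stated in the diagram): each label names one scalar
    weight shared by all arrows carrying it, and a node's value is
    activation(sum of weight * source value over its incoming arrows).
    """
    incoming = defaultdict(list)  # target -> [(source, label), ...]
    for src, dst, label in edges:
        incoming[dst].append((src, label))
    # graphlib expects {node: predecessors}; source-only nodes are added automatically.
    graph = {dst: {src for src, _ in arrows} for dst, arrows in incoming.items()}

    values = dict(inputs)
    for node in TopologicalSorter(graph).static_order():
        if node in values:
            continue  # value supplied by the caller
        if node not in incoming:
            raise KeyError(f"no value supplied for input node {node}")
        total = sum(weights[label] * values[src] for src, label in incoming[node])
        values[node] = activation(total)
    return values


if __name__ == "__main__":
    # Hypothetical inputs and weights, chosen only to exercise the code.
    x = {"x^0": 1.0, "x^1": 2.0, "x^2": 3.0, "x^3": 4.0}
    a = {"a^(0,h)": 0.1, "a^(1,h)": 0.2, "a^(2,h)": 0.3, "a^(3,h)": 0.4}
    print(forward(x, a))
```

Passing `activation=lambda v: v` turns this into a purely linear pass, which makes the weighted sums easy to check by hand: with the values above, `h^(0)` is 3.0, `h^(1)` is 0.9, and `h^(2)` is 1.17.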

The absence of explicit numerical values or trends indicates this is a **conceptual diagram** rather than a data-driven chart. The labels and structure emphasize **system design** over empirical data.