## Scatter Plot: Network Parameter Efficiency vs. Test Accuracy

### Overview

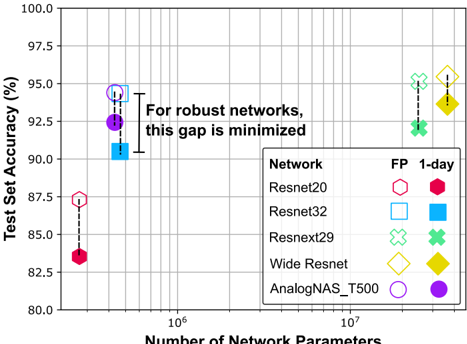

The image is a scatter plot comparing the test set accuracy (y-axis) against the number of network parameters (x-axis, logarithmic scale) for five different neural network architectures. Each architecture is represented by two data points: one for a full-precision ("FP") model and one for a "1-day" trained model. An annotation highlights a key finding regarding robust networks.

### Components/Axes

* **X-Axis:** "Number of Network Parameters". It is a logarithmic scale with major grid lines at 10^6 and 10^7. The visible range spans from approximately 2×10^5 to 4×10^7.

* **Y-Axis:** "Test Set Accuracy (%)". It is a linear scale ranging from 80.0 to 100.0, with major grid lines every 2.5 percentage points.

* **Legend:** Located in the bottom-right quadrant. It defines the shapes and colors for five network types, each with two variants:

    * **Network:** Resnet20, Resnet32, Resnext29, Wide Resnet, AnalogNAS_T500.

    * **FP (Full Precision):** Represented by open (unfilled) shapes.

    * **1-day:** Represented by solid (filled) shapes of the same color and form as their FP counterpart.

* **Annotation:** Text placed in the center of the plot area: "For robust networks, this gap is minimized". A dashed line connects the FP and 1-day markers for the Resnet32 network, visually indicating the "gap" referenced in the text.
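Because the x-axis is logarithmic, equal horizontal distances correspond to equal ratios rather than equal differences, which matters when estimating parameter counts from marker positions. The following is a minimal sketch of that conversion, assuming the approximate visible range given above (2×10^5 to 4×10^7); the function names are illustrative and not taken from the figure's source.

```python
import math

# Approximate visible x-range described above (an estimate, not an exact axis limit).
X_MIN, X_MAX = 2e5, 4e7


def log_axis_fraction(value, axis_min=X_MIN, axis_max=X_MAX):
    """Position of `value` on a log10 axis, from 0.0 (left edge) to 1.0 (right edge)."""
    span = math.log10(axis_max) - math.log10(axis_min)
    return (math.log10(value) - math.log10(axis_min)) / span


def value_at_fraction(fraction, axis_min=X_MIN, axis_max=X_MAX):
    """Inverse mapping: the value found at a fractional position along the axis."""
    span = math.log10(axis_max) - math.log10(axis_min)
    return 10 ** (math.log10(axis_min) + fraction * span)


if __name__ == "__main__":
    for label, params in [("10^6 grid line", 1e6), ("10^7 grid line", 1e7)]:
        print(f"{label}: {log_axis_fraction(params):.2f} of the axis width")
```

A marker read at a given fraction of the axis width can be turned back into a parameter estimate with `value_at_fraction`, which is how the approximate counts in this description should be interpreted.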

### Detailed Analysis

**Data Series and Points (Approximate Values):**

| Network | Variant | Shape & Color | Approx. Parameters | Approx. Accuracy (%) | Accuracy Drop, FP to 1-day (percentage points) |
| :--- | :--- | :--- | :--- | :--- | :--- |
| **Resnet20** | FP | Open Red Hexagon | ~2×10^5 | ~87.5 | ~4.0 |
| | 1-day | Solid Red Hexagon | ~2×10^5 | ~83.5 | |
| **Resnet32** | FP | Open Blue Square | ~4.5×10^5 | ~94.5 | ~3.5 |
| | 1-day | Solid Blue Square | ~4.5×10^5 | ~91.0 | |
| **Resnext29** | FP | Open Green Cross | ~2.5×10^7 | ~95.0 | ~2.5 |
| | 1-day | Solid Green Cross | ~2.5×10^7 | ~92.5 | |
| **Wide Resnet** | FP | Open Yellow Diamond | ~3.5×10^7 | ~96.5 | ~2.0 |
| | 1-day | Solid Yellow Diamond | ~3.5×10^7 | ~94.5 | |
| **AnalogNAS_T500** | FP | Open Purple Circle | ~4.5×10^5 | ~94.5 | ~2.0 |
| | 1-day | Solid Purple Circle | ~4.5×10^5 | ~92.5 | |
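The accuracy-drop column is simply the FP accuracy minus the 1-day accuracy. As a quick consistency check, the sketch below recomputes it from the approximate values in the table; the numbers are visual estimates from the plot, not exact measurements, and the variable names are illustrative.

```python
# Approximate (FP, 1-day) test accuracies in %, as estimated in the table above.
accuracies = {
    "Resnet20": (87.5, 83.5),
    "Resnet32": (94.5, 91.0),
    "Resnext29": (95.0, 92.5),
    "Wide Resnet": (96.5, 94.5),
    "AnalogNAS_T500": (94.5, 92.5),
}


def accuracy_drops(acc):
    """Return the FP-to-1-day drop in percentage points for each network."""
    return {name: round(fp - one_day, 1) for name, (fp, one_day) in acc.items()}


drops = accuracy_drops(accuracies)

if __name__ == "__main__":
    # List networks from smallest to largest gap.
    for name, drop in sorted(drops.items(), key=lambda kv: kv[1]):
        print(f"{name:15s} {drop:.1f} percentage points")
```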

### Key Observations

1. **Universal Accuracy Drop:** For all five networks, the "1-day" model has lower test set accuracy than its "FP" counterpart.

2. **Parameter Clustering:** The networks fall into two distinct clusters based on parameter count:

    * **Lower-Parameter Cluster (~4.5×10^5):** Resnet32 and AnalogNAS_T500.

    * **Higher-Parameter Cluster (~2.5-3.5×10^7):** Resnext29 and Wide Resnet.

    * Resnet20 is an outlier with the fewest parameters (~2×10^5).

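3. **Accuracy vs. Parameters:** The two largest networks (Resnext29, Wide Resnet) achieve the highest baseline (FP) accuracy (~95.0% and ~96.5%), while the smallest (Resnet20) has the lowest (~87.5%). The trend is not strict: Resnet32 and AnalogNAS_T500 reach ~94.5% with far fewer parameters (~4.5×10^5 versus ~2.5×10^7 for Resnext29).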

4. **The "Gap":** The vertical distance (accuracy drop) between FP and 1-day markers varies. The annotation suggests this gap is smaller for "robust networks." Visually, the gap is smallest for AnalogNAS_T500 and Wide Resnet (~2.0 percentage points each) and largest for Resnet20 (~4.0 percentage points).
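The clustering described in observation 2 can be reproduced by grouping networks whose parameter counts are close on the log scale. This is a minimal sketch under stated assumptions: the parameter counts are the approximate values read from the plot, and the threshold of 0.25 decades is a hypothetical choice made for illustration, not something specified by the figure.

```python
import math

# Approximate parameter counts read from the plot.
params = {
    "Resnet20": 2e5,
    "Resnet32": 4.5e5,
    "AnalogNAS_T500": 4.5e5,
    "Resnext29": 2.5e7,
    "Wide Resnet": 3.5e7,
}


def cluster_by_log_gap(param_counts, max_gap=0.25):
    """Group networks sorted by size; start a new group when the log10 gap
    to the previous network exceeds `max_gap` (a hypothetical threshold)."""
    ordered = sorted(param_counts.items(), key=lambda kv: kv[1])
    clusters = [[ordered[0][0]]]
    for (_, prev), (name, cur) in zip(ordered, ordered[1:]):
        if math.log10(cur) - math.log10(prev) <= max_gap:
            clusters[-1].append(name)
        else:
            clusters.append([name])
    return clusters


clusters = cluster_by_log_gap(params)

if __name__ == "__main__":
    for group in clusters:
        print(group)
```

The result is sensitive to the threshold: with a looser value such as 0.4 decades, Resnet20 would merge into the lower-parameter cluster instead of standing alone.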

### Interpretation

This chart investigates the trade-off between model size (parameters), model variant (FP vs. 1-day), and final accuracy. The core message, emphasized by the annotation, is that **the smaller the accuracy drop between a network's FP and 1-day models, the more robust the network is considered to be.** Robustness is likely defined here as the ability to maintain performance under the 1-day condition, which this description reads as constrained training.

* **What the data suggests:** The "1-day" training condition acts as a stress test. Networks that suffer a smaller accuracy degradation under this stress (e.g., AnalogNAS_T500, Wide Resnet) are implied to be more robust. The chart posits that robustness is a desirable property distinct from raw parameter count or peak FP accuracy.

* **Relationship between elements:** The plot connects three concepts: architectural efficiency (x-axis), peak performance (y-axis, FP points), and robustness (vertical gap). It argues that evaluating a network solely on its FP accuracy is insufficient; its resilience to training variations is also critical.

* **Notable patterns/anomalies:** AnalogNAS_T500 is particularly interesting. It matches Resnet32 in parameter count (~4.5×10^5) and FP accuracy (~94.5%) but shows a smaller gap (~2.0 vs. ~3.5 percentage points), suggesting its architecture (likely found via neural architecture search) is inherently more robust. Resnet20, with the smallest parameter count, is the least robust, hinting at a potential lower bound on model size for achieving stability, although a single data point cannot establish this. The chart implies that the goal for robust network design is to minimize the FP-to-1-day accuracy gap, regardless of the absolute parameter count.