## Heatmap: Neural Network Layer-Token Activation Analysis

### Overview

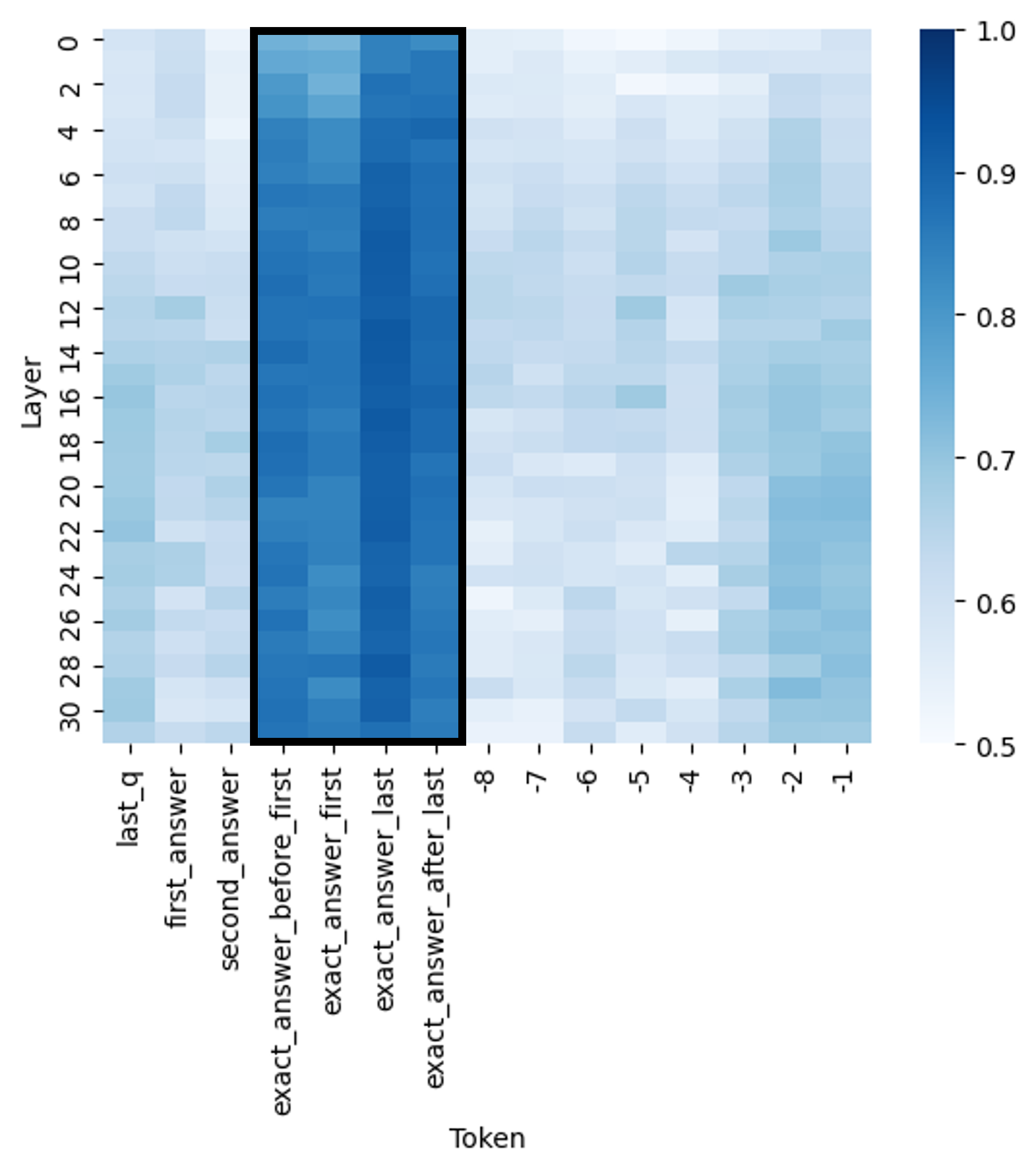

The image displays a heatmap visualizing numerical values (likely attention weights, activation strengths, or correlation scores) across different layers of a neural network (y-axis) and specific tokens or token positions (x-axis). A prominent vertical black rectangle highlights a specific region of interest on the x-axis. A color scale bar on the right indicates that values range from 0.5 (lightest blue/white) to 1.0 (darkest blue).

### Components/Axes

* **Chart Type:** Heatmap.

* **Y-Axis (Vertical):**

    * **Label:** "Layer"

    * **Scale:** Linear, numbered from 0 at the top to 30 at the bottom, with tick marks every 2 units (0, 2, 4, ..., 30).

* **X-Axis (Horizontal):**

    * **Label:** "Token"

    * **Categories (from left to right):**

        1. `last_q`

        2. `first_answer`

        3. `second_answer`

        4. `exact_answer_before_first` (Start of highlighted region)

        5. `exact_answer_first`

        6. `exact_answer_last`

        7. `exact_answer_after_last` (End of highlighted region)

        8. `-8`

        9. `-7`

        10. `-6`

        11. `-5`

        12. `-4`

        13. `-3`

        14. `-2`

        15. `-1`

* **Color Scale (Legend):**

    * **Position:** Right side of the chart.

    * **Range:** 0.5 to 1.0.

    * **Gradient:** Continuous gradient from very light blue/white (0.5) to dark blue (1.0).

    * **Tick Marks:** Labeled at 0.5, 0.6, 0.7, 0.8, 0.9, 1.0.

* **Highlighted Region:**

    * A thick black rectangle outlines a vertical band on the heatmap.

    * **Spatial Grounding:** This rectangle is positioned in the center-left of the chart, spanning from the x-axis category `exact_answer_before_first` to `exact_answer_after_last`. It covers all layers (0-30) vertically within this token range.
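The axis layout and the extent of the highlighted band described above can be written down as a small data structure. The following is a minimal sketch, not the code that produced the figure: `TOKENS` copies the x-axis categories from the list, while `NUM_LAYERS = 31` (rows 0 through 30) and the helper name `band_rectangle` are assumptions made for illustration. The actual layer count may differ, since the axis only labels every second tick.

```python
# Axis layout as described in this section; the layer count is an assumption.
TOKENS = [
    "last_q", "first_answer", "second_answer",
    "exact_answer_before_first", "exact_answer_first",
    "exact_answer_last", "exact_answer_after_last",
    "-8", "-7", "-6", "-5", "-4", "-3", "-2", "-1",
]
NUM_LAYERS = 31  # assumption: rows 0..30 inclusive


def band_rectangle(tokens, first, last, num_layers):
    """Return (x, y, width, height) of the outline in cell-edge coordinates.

    Hypothetical helper. Cell (row r, column c) is assumed to span
    [c - 0.5, c + 0.5] horizontally and [r - 0.5, r + 0.5] vertically,
    which is the usual convention for image-style heatmaps.
    """
    start = tokens.index(first)
    end = tokens.index(last)
    if end < start:
        raise ValueError("band end precedes band start")
    return (start - 0.5, -0.5, end - start + 1, num_layers)


rect = band_rectangle(
    TOKENS, "exact_answer_before_first", "exact_answer_after_last", NUM_LAYERS
)
print(rect)  # (2.5, -0.5, 4, 31)
```

The returned tuple has the shape a plotting library's rectangle patch typically expects (lower-left corner, width, height), so it could be used to redraw the black outline over a reproduced heatmap.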

### Detailed Analysis

* **General Pattern:** The heatmap shows a clear concentration of high values (dark blue) within the highlighted vertical band. Values outside this band are generally lower (lighter blue to white).

* **Highlighted Band Analysis (Tokens: `exact_answer_before_first` to `exact_answer_after_last`):**

    * **Trend Verification:** This entire vertical strip exhibits consistently high values across nearly all layers (0-30). The color is predominantly dark blue, indicating values frequently in the 0.8-1.0 range.

    * **Sub-Patterns:** Within this band, the columns for `exact_answer_first` and `exact_answer_last` appear to have the most intense and consistent dark blue coloring, suggesting these tokens may have the highest values. The columns `exact_answer_before_first` and `exact_answer_after_last` are also dark but show slightly more variation, with some lighter blue cells, particularly in the middle layers (approx. layers 10-20).

* **Non-Highlighted Regions Analysis:**

    * **Left Region (Tokens: `last_q`, `first_answer`, `second_answer`):** Values are generally low to moderate. The color is mostly light blue, corresponding to an approximate range of 0.5-0.7. There is no strong layer-wise trend; values are scattered.

    * **Right Region (Tokens: `-8` to `-1`):** Values are also generally low to moderate, similar to the left region. The color is predominantly light blue (0.5-0.7). There is a subtle pattern where the columns for `-2` and `-1` appear slightly darker (closer to 0.7-0.8) in the deeper layers (approx. layers 20-30, toward the bottom of the chart) than in the earlier layers.

* **Layer-wise Trends:**

    * There is no single, strong trend that applies to all tokens across layers. The most significant pattern is the stability of high values within the highlighted token band across all layers.

    * In the non-highlighted regions, the moderate values appear unstructured, with no clear increasing or decreasing trend from layer 0 to layer 30.
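The layer-wise reading above is made by eye from cell colors. If the underlying matrix were available, one simple way to check it would be to fit a least-squares slope of value against layer index for each token column. The sketch below is illustrative only: `layer_slope` is a hypothetical helper, and the two example columns are made-up placeholders, not values read from the figure.

```python
def layer_slope(column):
    """Least-squares slope of value against layer index for one token column.

    `column[i]` is the value at layer i. A slope near zero means no
    increasing or decreasing trend with depth.
    """
    n = len(column)
    if n < 2:
        raise ValueError("need at least two layers")
    mean_x = (n - 1) / 2
    mean_y = sum(column) / n
    cov = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(column))
    var = sum((i - mean_x) ** 2 for i in range(n))
    return cov / var


# Placeholder columns, NOT taken from the figure.
flat_column = [0.9, 0.9, 0.9, 0.9]    # stable across layers
rising_column = [0.5, 0.6, 0.7, 0.8]  # increases with depth

print(layer_slope(flat_column))
print(layer_slope(rising_column))
```

Under this check, a column such as `-1`, described as darkening toward layers 20-30, would be expected to show a small positive slope, while the columns in the highlighted band would be expected to stay near zero.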

### Key Observations

1. **Dominant Feature:** The most striking feature is the vertical band of high activation/values for the four tokens related to the "exact answer" (`exact_answer_before_first`, `exact_answer_first`, `exact_answer_last`, `exact_answer_after_last`). This is explicitly highlighted by the black rectangle.

2. **Token Specificity:** The tokens `exact_answer_first` and `exact_answer_last` within the highlighted band show the most consistently high values (darkest blue).

3. **Low Baseline:** Tokens outside the highlighted "exact answer" context (`last_q`, `first_answer`, `second_answer`, and the numbered tokens `-8` to `-1`) show noticeably lower values, mostly in the lower half of the scale (0.5-0.7).

4. **Depth-Dependent Anomaly:** The numbered tokens on the far right (`-8` to `-1`) show a slight increase in value in the deeper network layers (20-30, at the bottom of the chart), particularly for `-2` and `-1`.
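The contrast between the highlighted band and the baseline in observations 1 and 3 could be quantified by averaging each token column over layers and comparing the band columns with the rest. This is a minimal sketch under stated assumptions: the matrix layout `values[layer][token]`, the helper names, and the tiny example matrix are all hypothetical and are not data from the figure.

```python
def column_means(values):
    """Mean over layers for each token column; `values[layer][token]`."""
    num_layers = len(values)
    num_tokens = len(values[0])
    return [sum(row[c] for row in values) / num_layers for c in range(num_tokens)]


def band_vs_baseline(values, band_start, band_end):
    """Return (mean inside band, mean outside band), band columns inclusive."""
    means = column_means(values)
    band = means[band_start:band_end + 1]
    rest = means[:band_start] + means[band_end + 1:]
    return sum(band) / len(band), sum(rest) / len(rest)


# Placeholder 2-layer, 4-token matrix, NOT taken from the figure.
example = [
    [0.6, 0.9, 1.0, 0.5],
    [0.6, 0.9, 0.8, 0.7],
]
inside, outside = band_vs_baseline(example, band_start=1, band_end=2)
print(inside, outside)
```

For the chart described here, the band would correspond to column indices 3 through 6 (`exact_answer_before_first` to `exact_answer_after_last`), counting from 0.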

### Interpretation

This heatmap likely visualizes a metric like attention weight or hidden state activation strength within a transformer-based language model, analyzing how the model processes a specific question-answer pair.

* **What the data suggests:** Whatever the plotted metric is, it is strongly and consistently concentrated on the tokens immediately surrounding the "exact answer" across all layers from 0 to 30. The high values in the highlighted band suggest that these token positions matter most for the measured quantity at every layer, not only in the final ones.

* **How elements relate:** The stark contrast between the high-value "exact answer" band and the low-value surrounding tokens demonstrates a sharp contextual focus. The model appears to "lock onto" the precise answer span. The numbered tokens (`-8` to `-1`), which likely represent positions relative to the end of the sequence or a special token, show weaker and more diffuse activation, suggesting they play a less central role in this specific analysis.

* **Notable outliers/trends:** The slight increase in value for tokens `-2` and `-1` in the deeper layers (20-30) is a subtle but interesting anomaly. This could indicate that the final layers of the model pay slightly more attention to the very end of the input sequence, perhaps for tasks like determining when to stop generating or for final answer normalization.

* **Peircean investigative reading:** The heatmap is an indexical sign pointing to the model's internal focus. The highlighted rectangle is a direct index of the researcher's hypothesis that the "exact answer" tokens are key, and the visible pattern is consistent with that hypothesis. The chart is also a symbolic representation of the model's computational state, from which we can tentatively infer that the mechanism for answer extraction or verification is distributed across all layers but highly localized to specific token positions. The lack of a strong layer-wise gradient suggests this focus is established early in the processing stream for this input rather than emerging only in deep layers.