
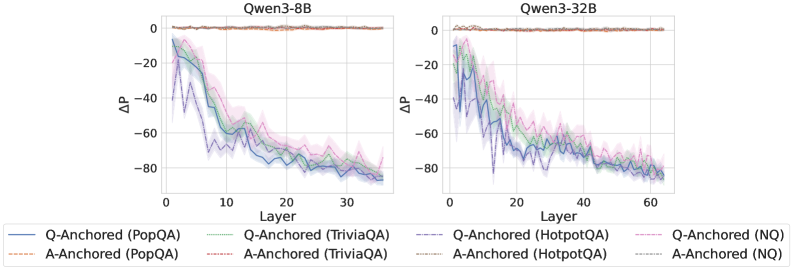

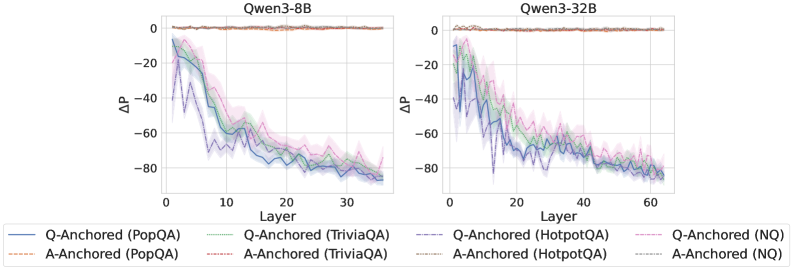

## Line Charts: Layer-wise ΔP for Q-Anchored vs. A-Anchored Methods on Qwen3 Models

### Overview

The image displays two side-by-side line charts comparing the metric ΔP across model layers for two large language models: **Qwen3-8B** (left chart) and **Qwen3-32B** (right chart). Each chart plots eight data series: two experimental methods ("Q-Anchored" and "A-Anchored"), each applied to the same four question-answering datasets. The charts share a common legend and axis labels.

### Components/Axes

* **Chart Titles:**
  * Left Chart: `Qwen3-8B`
  * Right Chart: `Qwen3-32B`

* **X-Axis (Both Charts):**
  * Label: `Layer`
  * Scale (Qwen3-8B): 0 to ~35, with major ticks at 0, 10, 20, 30.
  * Scale (Qwen3-32B): 0 to ~65, with major ticks at 0, 20, 40, 60.

* **Y-Axis (Both Charts):**
  * Label: `ΔP` (Delta P)
  * Scale: approximately -90 to +5, with major ticks at -80, -60, -40, -20, 0.

* **Legend (Bottom, spanning both charts):**
  * The legend is positioned at the bottom of the figure, below both charts.
  * It defines 8 data series using a combination of line color and style (solid vs. dashed).
  * **Q-Anchored (Solid Lines):**
    * Blue solid line: `Q-Anchored (PopQA)`
    * Green solid line: `Q-Anchored (TriviaQA)`
    * Purple solid line: `Q-Anchored (HotpotQA)`
    * Pink solid line: `Q-Anchored (NQ)`
  * **A-Anchored (Dashed Lines):**
    * Orange dashed line: `A-Anchored (PopQA)`
    * Red dashed line: `A-Anchored (TriviaQA)`
    * Gray dashed line: `A-Anchored (HotpotQA)`
    * Light blue dashed line: `A-Anchored (NQ)`

* **Visual Elements:** Each data series is plotted as a line with a shaded region around it, likely representing a confidence interval or standard deviation.
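When re-plotting or re-analyzing these series, it can help to capture the legend and the shaded bands in a small data structure. The sketch below is illustrative only: the names `SeriesStyle`, `LEGEND`, and `band` are hypothetical, and it assumes the shaded region is the per-layer mean plus or minus one standard deviation over several runs, which the figure does not confirm (it could equally be a confidence interval).

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass(frozen=True)
class SeriesStyle:
    """One legend entry: anchoring method, dataset, line color, and line style."""
    method: str     # "Q-Anchored" or "A-Anchored"
    dataset: str    # "PopQA", "TriviaQA", "HotpotQA", or "NQ"
    color: str
    linestyle: str  # "solid" for Q-Anchored, "dashed" for A-Anchored

    @property
    def label(self) -> str:
        return f"{self.method} ({self.dataset})"


# Colors as read off the legend; the line style depends only on the method family.
_COLORS = {
    "Q-Anchored": {"PopQA": "blue", "TriviaQA": "green", "HotpotQA": "purple", "NQ": "pink"},
    "A-Anchored": {"PopQA": "orange", "TriviaQA": "red", "HotpotQA": "gray", "NQ": "lightblue"},
}
_STYLES = {"Q-Anchored": "solid", "A-Anchored": "dashed"}

LEGEND = [
    SeriesStyle(method, dataset, color, _STYLES[method])
    for method, by_dataset in _COLORS.items()
    for dataset, color in by_dataset.items()
]


def band(runs: list[list[float]]) -> list[tuple[float, float, float]]:
    """Per-layer (lower, mean, upper) for a shaded band of mean +/- one standard deviation.

    `runs` holds one hypothetical ΔP curve per run; all curves must have the same
    number of layers, and at least two runs are needed for the sample stdev.
    """
    out = []
    for values in zip(*runs):  # values = ΔP at one layer across all runs
        m, s = mean(values), stdev(values)
        out.append((m - s, m, m + s))
    return out
```

For example, `LEGEND[0].label` is `"Q-Anchored (PopQA)"`, and every Q-Anchored entry carries `linestyle="solid"`, mirroring the figure's convention that line style encodes the method and color encodes the dataset.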

### Detailed Analysis

**Qwen3-8B Chart (Left):**

* **Q-Anchored Series (Solid Lines):** All four solid lines (PopQA, TriviaQA, HotpotQA, NQ) exhibit a strong, consistent downward trend.
  * They start at Layer 1 with ΔP values between approximately -10 and -20.
  * They decline steeply until around Layers 15-20, reaching values between -60 and -70.
  * The decline continues at a slower rate, ending near Layer 35 with values clustered around -80.
  * The lines are tightly grouped, with the blue (PopQA) and purple (HotpotQA) lines often at the lower edge of the cluster.

* **A-Anchored Series (Dashed Lines):** All four dashed lines remain very close to the ΔP = 0 baseline across all layers (0 to ~35). They show minimal fluctuation, staying within a narrow band roughly between -5 and +5.

**Qwen3-32B Chart (Right):**

* **Q-Anchored Series (Solid Lines):** The pattern is similar to the 8B model but extends over more layers.
  * They start near Layer 1 with ΔP values between -10 and -25.
  * A steep decline occurs until approximately Layers 25-30, where values reach between -60 and -75.
  * The decline persists, ending near Layer 65 with values tightly clustered around -80.
  * The lines are grouped so tightly that individual series are difficult to distinguish in the later layers.

* **A-Anchored Series (Dashed Lines):** As in the 8B chart, these lines hover consistently near ΔP = 0 across the entire layer range (0 to ~65).
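Because the two panels span different layer ranges (roughly 35 vs. 65 layers), one way to check whether the 32B curves really extend the same trend is to compare both models at matching *relative* depth. The sketch below rescales each curve's layer index to [0, 1] and linearly interpolates it onto a shared grid; the function names, the grid size, and the choice of linear interpolation are assumptions for illustration, not the procedure used to produce the figure.

```python
def to_relative_depth(curve: list[float], grid_size: int = 11) -> list[float]:
    """Resample a per-layer ΔP curve onto `grid_size` evenly spaced relative depths in [0, 1].

    Layer i of an n-layer curve sits at relative depth i / (n - 1); values between
    layers are linearly interpolated.
    """
    n = len(curve)
    if n < 2 or grid_size < 2:
        raise ValueError("need at least two layers and two grid points")
    resampled = []
    for k in range(grid_size):
        pos = k / (grid_size - 1) * (n - 1)  # fractional layer index for this grid point
        lo = int(pos)
        hi = min(lo + 1, n - 1)
        frac = pos - lo
        resampled.append(curve[lo] * (1 - frac) + curve[hi] * frac)
    return resampled


def max_gap(curve_a: list[float], curve_b: list[float], grid_size: int = 11) -> float:
    """Largest absolute ΔP difference between two curves at matched relative depth."""
    a = to_relative_depth(curve_a, grid_size)
    b = to_relative_depth(curve_b, grid_size)
    return max(abs(x - y) for x, y in zip(a, b))
```

For example, a linear 5-layer curve and a linear 9-layer curve with the same endpoints give a gap of essentially zero; a small `max_gap` between real 8B and 32B curves would be one quantitative reading of "the same pattern, stretched over more layers."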

### Key Observations

1. **Fundamental Dichotomy:** There is a stark, categorical difference between the behavior of Q-Anchored and A-Anchored methods. Q-Anchored methods show a large, layer-dependent negative ΔP, while A-Anchored methods show a ΔP near zero that is layer-invariant.

2. **Layer-Dependent Degradation:** For Q-Anchored methods, the metric ΔP degrades (becomes more negative) significantly as information propagates through deeper layers of the network. The most rapid change occurs in the first half of the layers.

3. **Model Scale Invariance of Pattern:** The qualitative pattern is the same for the 8B and 32B parameter models; the 32B model extends the trend over a greater number of layers.

4. **Dataset Similarity:** Within each anchoring method (Q or A), the ΔP curves for the four datasets (PopQA, TriviaQA, HotpotQA, NQ) are remarkably similar. The lines are tightly clustered, suggesting the observed effect is robust across these QA benchmarks.

5. **Convergence:** By the final layers, the Q-Anchored lines for all datasets converge to a very similar, low ΔP value (approx. -80).
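The observations above can be made quantitative with a few summary statistics per curve. The sketch below is hypothetical: the helper names, the ±5 tolerance (taken from the visual reading of the A-Anchored band), and any curves passed in are assumptions, not values from the figure. It checks whether a curve stays near zero (Observation 1), what fraction of its total change happens by the middle layer (Observation 2), and how far apart the dataset curves are at each layer (Observation 4).

```python
def stays_near_zero(curve: list[float], tol: float = 5.0) -> bool:
    """True if every layer's ΔP lies within [-tol, +tol] (the A-Anchored behavior)."""
    return all(abs(v) <= tol for v in curve)


def first_half_drop_share(curve: list[float]) -> float:
    """Fraction of the total first-to-last-layer change that has occurred by the middle layer.

    Values near 1 mean the decline is front-loaded in the early layers.
    """
    total = curve[-1] - curve[0]
    if total == 0:
        return 0.0
    mid = (len(curve) - 1) // 2
    return (curve[mid] - curve[0]) / total


def dataset_spread(curves: dict[str, list[float]]) -> list[float]:
    """Per-layer max-minus-min ΔP across datasets; small values mean tight clustering."""
    return [max(vals) - min(vals) for vals in zip(*curves.values())]
```

With a toy Q-Anchored-like curve such as `[-10.0, -50.0, -70.0, -75.0, -80.0]`, `first_half_drop_share` returns about 0.86, i.e. most of the decline happens early, which is the shape described in Observation 2.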

### Interpretation

This visualization presents a technical analysis of internal model behavior, likely probing how different "anchoring" techniques affect a model's internal probability distributions (ΔP) across its layers.

* **What the data suggests:** The "A-Anchored" method appears to leave the model's internal probabilities essentially unchanged, with ΔP ≈ 0 at every depth. In contrast, the "Q-Anchored" method is associated with a progressive and substantial negative shift in ΔP as information moves from early to late layers. One possible reading is that anchoring on the question (Q) causes the model to increasingly suppress or alter the measured probabilities in deeper layers, while anchoring on the answer (A) preserves them.

* **How elements relate:** The side-by-side comparison of two model sizes suggests that this is a property of the anchoring methods themselves rather than an artifact of a specific model scale, at least for these two Qwen3 models. The consistency across four datasets suggests that the finding generalizes within question answering, although only these four benchmarks are shown.

* **Notable anomalies/trends:** The most striking feature is the complete separation between the two method families: the solid and dashed lines do not overlap at any layer. For Q-Anchored methods, the trend is not merely a gradual decline; it is a steep drop that flattens in later layers and spans nearly the entire y-axis range. The tight clustering of datasets suggests the underlying mechanism being measured is highly consistent.

* **Peircean investigative reading:** The charts function as an *index* pointing to a strong relationship between the choice of anchoring technique (Q vs. A) and the layer-wise evolution of the model's internal state (ΔP). The consistency across models and datasets makes this a reliable sign of a core mechanistic difference, although the charts by themselves show an association rather than proving causation. A researcher might infer that "A-Anchoring" acts as a regularizer or stabilizer for internal probabilities, while "Q-Anchoring" allows or induces a significant transformation of those probabilities during deep processing. This has implications for model interpretability and for the design of probing experiments.
