## Diagram: Language Model Dataset Enrichment via API Calls

### Overview

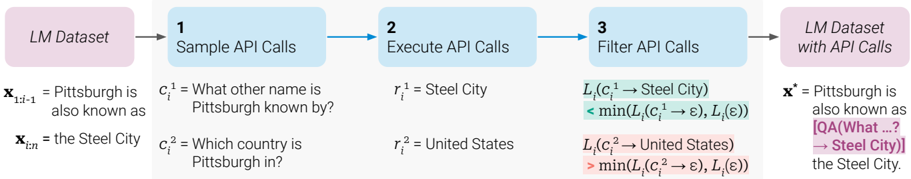

This diagram illustrates a three-step pipeline for enriching a Language Model (LM) dataset by sampling, executing, and filtering API calls. The process transforms a raw text sequence into an augmented version that contains an inline question-answer pair obtained from an API call.

### Components/Flow

The diagram is a linear flowchart moving from left to right, consisting of five main blocks connected by arrows.

1. **Initial State (Leftmost Block):**

* **Label:** `LM Dataset`

* **Content:** A text sequence `x_{1:t-1}` = "Pittsburgh is also known as" and `x_{t:n}` = "the Steel City".

2. **Step 1: Sample API Calls**

* **Label:** `1 Sample API Calls`

* **Content:** Two sampled API call candidates, `c_i^1` and `c_i^2`.

  * `c_i^1` = "What other name is Pittsburgh known by?"

  * `c_i^2` = "Which country is Pittsburgh in?"

3. **Step 2: Execute API Calls**

* **Label:** `2 Execute API Calls`

* **Content:** The results `r_i^1` and `r_i^2` from executing the sampled calls.

  * `r_i^1` = "Steel City"

  * `r_i^2` = "United States"

4. **Step 3: Filter API Calls**

* **Label:** `3 Filter API Calls`

* **Content:** A filtering logic based on a loss function `L_i`. Two comparisons are shown:

  * For the first call: `L_i(c_i^1 → Steel City) < min(L_i(c_i^1 → ε), L_i(ε))`

  * For the second call: `L_i(c_i^2 → United States) > min(L_i(c_i^2 → ε), L_i(ε))`

* **Note:** The first comparison is highlighted in green, indicating it passes the filter. The second is in red, indicating it fails.

5. **Final State (Rightmost Block):**

* **Label:** `LM Dataset with API Calls`

* **Content:** The enriched text sequence `x^1`. The original text is preserved, but a new segment is inserted, shown in purple: `[QA(What ...? → Steel City)]`.

* **Full Transcription:** `x^1` = "Pittsburgh is also known as [QA(What ...? → Steel City)] the Steel City."
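The final block can be reproduced with a few lines of string handling. The following is a minimal sketch, not the original implementation: the function names `format_call` and `augment` are hypothetical, and the bracketed `[QA(question → answer)]` layout is simply copied from the diagram.

```python
def format_call(tool: str, query: str, result: str) -> str:
    """Render an executed API call inline, following the diagram's layout."""
    return f"[{tool}({query} → {result})]"


def augment(prefix: str, continuation: str, call_text: str) -> str:
    """Insert the rendered call between the prefix and the continuation."""
    return f"{prefix} {call_text} {continuation}"


# Values taken from the diagram; the question is written out in full here,
# whereas the diagram abbreviates it as "What ...?".
prefix = "Pittsburgh is also known as"
continuation = "the Steel City."
call_text = format_call("QA", "What other name is Pittsburgh known by?", "Steel City")

augmented = augment(prefix, continuation, call_text)
print(augmented)
```

The original text is left untouched on both sides of the inserted segment, which matches the "original text is preserved" property described above.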

### Detailed Analysis

* **Process Logic:** The pipeline aims to identify which candidate API calls (questions) are useful for augmenting the training data. A call is kept if the loss with the call and its result inserted, `L_i(c_i^k → r_i^k)`, is lower than both alternatives shown in the diagram: the call without a result, `L_i(c_i^k → ε)`, and no call at all, `L_i(ε)`.

* **Mathematical Notation:**

  * `x_{1:t-1}`: The prefix context text.

  * `x_{t:n}`: The continuation text.

  * `c_i^k`: The k-th candidate API call (question) sampled at insertion point i.

  * `r_i^k`: The result (answer) returned for candidate call k.

  * `L_i(...)`: The model's loss given the content in parentheses, most plausibly measured on the continuation `x_{t:n}` when that content is inserted before it.

  * `ε`: The empty sequence. `L_i(ε)` is the loss with no API call, and `L_i(c_i^k → ε)` is the loss with the call but without its result.

  * `→`: Separates an API call from its result, as in `c_i^1 → Steel City`.

* **Filtering Outcome:** Only the first API call (`c_i^1`), about Pittsburgh's other name, is kept. Its result ("Steel City") matches the continuation `x_{t:n}`, so inserting it lowers the loss below both baselines. The second call, about the country, is filtered out: its result ("United States") does not lower the loss relative to the better of the two baselines, presumably because it carries no information about the continuation.
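Written out, the rule shown in the filter block reads as follows. The first line restates the diagram's comparison directly. The second line is an assumption about the form of `L_i` (an unweighted negative log-likelihood of the continuation under a model `p`), which the diagram itself does not specify.

```latex
% Keep a candidate call only if call + result beats both baselines (from the diagram)
\text{keep } c_i^k \iff
  L_i\!\left(c_i^k \to r_i^k\right)
  < \min\!\left( L_i\!\left(c_i^k \to \varepsilon\right),\; L_i(\varepsilon) \right)

% Assumed form of the loss: how hard the continuation is to predict
% once the inserted content z is placed after the prefix
L_i(z) = -\sum_{j=t}^{n} \log p\!\left(x_j \mid x_{1:t-1},\, z,\, x_{t:j-1}\right)
```

Under this reading, a call passes only when its result makes the continuation easier to predict than either omitting the result or omitting the call.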

### Key Observations

* The final output `[QA(What ...? → Steel City)]` is a structured segment inserted into the text stream. It encapsulates both the question (abbreviated as "What ...?") and its answer.

* The color-coding (green for pass, red for fail, purple for the inserted QA) is a critical visual aid for understanding the filtering step's result.

* The process is **self-referential**: it uses the model's own loss function (`L_i`) to decide which external knowledge calls are valuable for improving its own training data.
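The pass/fail decision behind the green and red comparisons can be sketched as a single predicate. The loss values below are made up for illustration and do not come from the diagram or from any real model; `keeps_call` is a hypothetical helper name.

```python
def keeps_call(loss_with_result: float, loss_call_only: float, loss_no_call: float) -> bool:
    """Return True if call + result gives a strictly lower loss than both baselines."""
    return loss_with_result < min(loss_call_only, loss_no_call)


# Hypothetical losses: (call + result, call without result, no call).
# These numbers are invented; only their ordering mirrors the diagram.
losses = {
    "What other name is Pittsburgh known by?": (0.6, 1.9, 1.8),
    "Which country is Pittsburgh in?": (1.9, 1.9, 1.8),
}

kept = [q for q, (with_r, call_only, no_call) in losses.items()
        if keeps_call(with_r, call_only, no_call)]
print(kept)
```

With these numbers only the first question survives, mirroring the green and red outcomes in the filter block.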

### Interpretation

This diagram depicts a method for **automated data augmentation** or **self-improvement** for language models. The core idea is to have the model generate candidate questions about a text, obtain answers by executing them against an external knowledge source (an API), and then programmatically decide which question-answer pairs are worth weaving back into the training data.
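The three steps can be wired together as a small function with pluggable components. This is a sketch under stated assumptions: `enrich`, `toy_sample`, `toy_execute`, and `toy_loss` are hypothetical names, the `[QA(question)]` rendering of a call without a result is invented for the example, and `toy_loss` is a crude word-overlap stand-in for a real model loss.

```python
import re
from typing import Callable, List

LossFn = Callable[[str, str, str], float]  # (prefix, inserted, continuation) -> loss


def enrich(prefix: str, continuation: str,
           sample_calls: Callable[[str], List[str]],
           execute: Callable[[str], str],
           loss_fn: LossFn) -> List[str]:
    """Sample calls, execute them, and keep those that beat both baselines."""
    no_call = loss_fn(prefix, "", continuation)
    augmented = []
    for question in sample_calls(prefix):          # Step 1: sample
        answer = execute(question)                 # Step 2: execute
        with_result = f"[QA({question} → {answer})]"
        call_only = f"[QA({question})]"            # hypothetical rendering
        loss_with = loss_fn(prefix, with_result, continuation)
        loss_without = loss_fn(prefix, call_only, continuation)
        if loss_with < min(loss_without, no_call):  # Step 3: filter
            augmented.append(f"{prefix} {with_result} {continuation}")
    return augmented


# Toy stand-ins for the model and the API (assumptions, not real components).
def toy_sample(prefix: str) -> List[str]:
    return ["What other name is Pittsburgh known by?",
            "Which country is Pittsburgh in?"]


def toy_execute(question: str) -> str:
    answers = {"What other name is Pittsburgh known by?": "Steel City",
               "Which country is Pittsburgh in?": "United States"}
    return answers[question]


def toy_loss(prefix: str, inserted: str, continuation: str) -> float:
    """Count continuation words absent from the inserted text (prefix is ignored)."""
    seen = set(re.findall(r"[a-z]+", inserted.lower()))
    words = re.findall(r"[a-z]+", continuation.lower())
    return float(sum(1 for w in words if w not in seen))


result = enrich("Pittsburgh is also known as", "the Steel City.",
                toy_sample, toy_execute, toy_loss)
print(result)
```

Passing the sampler, executor, and loss as arguments keeps the control flow of the three steps visible while leaving the model and the API unspecified.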

The key insight is the filtering criterion: an API call is valuable if inserting it together with its answer lowers the model's loss below both baselines, namely the call without an answer and no call at all. In this example, the answer "Steel City" makes the continuation "the Steel City" much easier to predict, so the QA pair passes. The answer "United States" gives no such help for this continuation, so the call about the country is discarded even though its answer is correct.

This approach could be used to create richer training examples that explicitly link questions to answers found within or inferred from the text, potentially improving the model's question-answering and reasoning capabilities. The procedure can also be read as abductive in the Peircean sense: the system generates hypotheses (API calls/questions), tests which ones best account for the given data (the original text), and keeps only those in the augmented dataset.