TECHNICAL ASSET FINGERPRINT

785241960937d14ad0499c1a



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


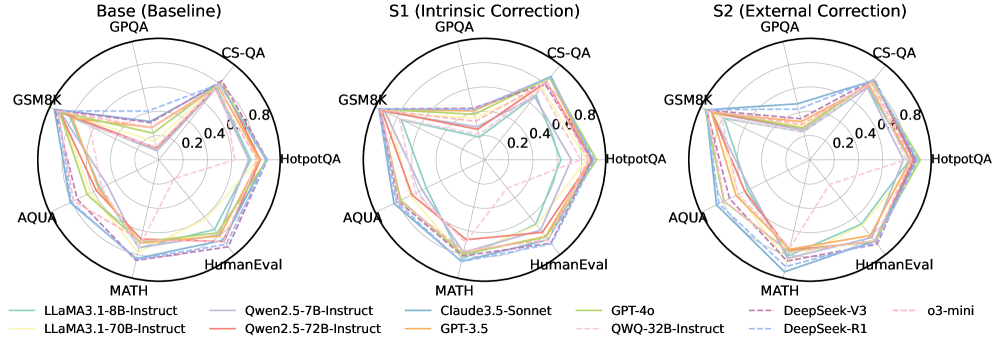

## Radar Charts: Model Performance on GPQA Benchmarks

### Overview

The image presents three radar charts, one per evaluation setting, each titled with the setting name followed by "GPQA": a baseline (Base), intrinsic correction (S1), and external correction (S2). Each chart plots the scores of multiple language models on six tasks: CS-QA, GSM8K, HotpotQA, AQUA, HumanEval, and MATH.

### Components/Axes

* **Chart Type**: Radar Charts (3 charts side-by-side)

* **Titles**:
  * Left Chart: "Base (Baseline) GPQA"
  * Middle Chart: "S1 (Intrinsic Correction) GPQA"
  * Right Chart: "S2 (External Correction) GPQA"

* **Axes**:
  * Radial Axis: Represents performance score, ranging from 0.0 to 0.8, with markers at 0.2, 0.4, 0.6, and 0.8.
  * Angular Axis: Represents different tasks/benchmarks: CS-QA, GSM8K, HotpotQA, AQUA, HumanEval, MATH. These are arranged clockwise around the circle.

* **Legend**: Located at the bottom of the image; lists the models and their corresponding line colors:
  * Light Blue: LLaMA3.1-8B-Instruct
  * Light Yellow: LLaMA3.1-70B-Instruct
  * Light Purple: Qwen2.5-7B-Instruct
  * Light Red: Qwen2.5-72B-Instruct
  * Darker Blue: Claude3.5-Sonnet
  * Orange: GPT-3.5
  * Green: GPT-4o
  * Dashed Light Purple: QWQ-32B-Instruct
  * Dashed Dark Blue: DeepSeek-V3
  * Dashed Light Blue: DeepSeek-R1
  * Dashed Light Pink: o3-mini
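Reading approximate values off these charts depends on how a radar chart places each point. As a minimal sketch, assuming the six spokes are evenly spaced, that the first listed task (CS-QA) sits at the top, and that the order runs clockwise as described above, a score r on spoke k lands at distance r from the center, at an angle of 2πk/6 measured clockwise from vertical. The helper names `radar_point` and `polygon` are hypothetical and not part of any charting library.

```python
import math

# Spoke order as transcribed in this section; the clockwise-from-top layout is an assumption.
TASKS = ["CS-QA", "GSM8K", "HotpotQA", "AQUA", "HumanEval", "MATH"]


def radar_point(spoke: int, score: float, n_spokes: int = len(TASKS)) -> tuple[float, float]:
    """Return the (x, y) position of `score` on spoke number `spoke`.

    Spoke 0 points straight up (+y); each later spoke is rotated clockwise
    by 2*pi / n_spokes. The distance from the center equals the score.
    """
    theta = spoke * 2 * math.pi / n_spokes  # clockwise angle from vertical
    return (score * math.sin(theta), score * math.cos(theta))


def polygon(scores: list[float]) -> list[tuple[float, float]]:
    """Vertices of one model's outline, one vertex per spoke, in spoke order."""
    return [radar_point(i, s, len(scores)) for i, s in enumerate(scores)]


if __name__ == "__main__":
    # Approximate Base-chart values transcribed for LLaMA3.1-8B-Instruct.
    base_llama_8b = [0.6, 0.7, 0.5, 0.4, 0.3, 0.2]
    for task, (x, y) in zip(TASKS, polygon(base_llama_8b)):
        print(f"{task:>9}: x={x:+.3f}  y={y:+.3f}")
```

Because the radius equals the score, a vertex's distance from the center (`math.hypot(x, y)`) recovers the plotted value, which is what estimating a reading against the 0.2 to 0.8 rings amounts to.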

### Detailed Analysis

**Chart 1: Base (Baseline) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.5 on CS-QA, 0.6 on GSM8K, 0.4 on HotpotQA, 0.3 on AQUA, 0.2 on HumanEval, and 0.1 on MATH.

**Chart 2: S1 (Intrinsic Correction) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.8 on CS-QA, 0.85 on GSM8K, 0.7 on HotpotQA, 0.6 on AQUA, 0.5 on HumanEval, and 0.4 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

**Chart 3: S2 (External Correction) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.8 on CS-QA, 0.9 on GSM8K, 0.7 on HotpotQA, 0.6 on AQUA, 0.5 on HumanEval, and 0.4 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

### Key Observations

* **Task Performance**: Models generally perform best on GSM8K and CS-QA, and worst on MATH and HumanEval.

* **Model Comparison**: GPT-4o (Green) matches or exceeds every other model on every task in all three settings; in the Base chart it ties with Qwen2.5-72B-Instruct and Claude3.5-Sonnet, while in S1 and S2 it is the sole leader. o3-mini (Dashed Light Pink) scores lowest in every setting.

* **Correction Impact**: Intrinsic (S1) and External (S2) corrections raise or maintain every model's scores relative to the baseline (Base). S2 differs from S1 only in GPT-4o's GSM8K score (about 0.9 versus 0.85), so its edge over S1 is marginal.
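The observations above can be checked against the transcribed values. The sketch below is a minimal encoding under stated assumptions: it stores the approximate per-task readings from this section (which reuse a few recurring score profiles) and computes per-task and per-model means. The names `SCORES`, `task_means`, `model_means`, `leaders`, and `differences` are hypothetical, and the numbers are only as precise as the visual estimates they come from.

```python
from statistics import mean

TASKS = ("CS-QA", "GSM8K", "HotpotQA", "AQUA", "HumanEval", "MATH")
MODELS = ("LLaMA3.1-8B-Instruct", "LLaMA3.1-70B-Instruct", "Qwen2.5-7B-Instruct",
          "Qwen2.5-72B-Instruct", "Claude3.5-Sonnet", "GPT-3.5", "GPT-4o",
          "QWQ-32B-Instruct", "DeepSeek-V3", "DeepSeek-R1", "o3-mini")

# Score profiles that recur in the transcription (approximate readings).
LOW = (0.6, 0.7, 0.5, 0.4, 0.3, 0.2)
MID = (0.7, 0.75, 0.6, 0.5, 0.4, 0.3)
HIGH = (0.7, 0.8, 0.6, 0.5, 0.4, 0.3)

SCORES = {
    "Base": dict(zip(MODELS, (LOW, MID, MID, HIGH, HIGH, MID, HIGH, LOW, MID, MID,
                              (0.5, 0.6, 0.4, 0.3, 0.2, 0.1)))),
    "S1": {**dict.fromkeys(MODELS, HIGH),
           "GPT-4o": (0.8, 0.85, 0.7, 0.6, 0.5, 0.4), "o3-mini": LOW},
    "S2": {**dict.fromkeys(MODELS, HIGH),
           "GPT-4o": (0.8, 0.9, 0.7, 0.6, 0.5, 0.4), "o3-mini": LOW},
}


def task_means(setting: str) -> dict[str, float]:
    """Mean score on each task across all models in one setting."""
    rows = list(SCORES[setting].values())
    return {t: mean(row[i] for row in rows) for i, t in enumerate(TASKS)}


def model_means(setting: str) -> dict[str, float]:
    """Mean score of each model across the six tasks in one setting."""
    return {m: mean(row) for m, row in SCORES[setting].items()}


def leaders(setting: str) -> list[str]:
    """All models sharing the highest mean score (ties are kept)."""
    means = model_means(setting)
    best = max(means.values())
    return sorted(m for m, v in means.items() if v == best)


def differences(a: str, b: str) -> list[tuple[str, str, float, float]]:
    """(model, task, score_a, score_b) wherever settings `a` and `b` disagree."""
    return [(m, t, SCORES[a][m][i], SCORES[b][m][i])
            for m in MODELS for i, t in enumerate(TASKS)
            if SCORES[a][m][i] != SCORES[b][m][i]]


if __name__ == "__main__":
    for setting in SCORES:
        tm = task_means(setting)
        means = model_means(setting)
        print(setting,
              "| best task:", max(tm, key=tm.get),
              "| worst task:", min(tm, key=tm.get),
              "| leaders:", leaders(setting),
              "| lowest:", min(means, key=means.get))
    print("S1 vs S2:", differences("S1", "S2"))
```

Keeping ties explicit in `leaders` matters here, because several models share identical transcribed profiles and a plain `max` would silently pick one of them.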

### Interpretation

The radar charts provide a visual comparison of language model performance across question-answering, math, and code-generation tasks. The data suggests that:

* **Task Difficulty**: Some tasks are inherently more challenging for these models, as evidenced by the consistently lower scores on MATH and HumanEval.

* **Model Superiority**: GPT-4o ties for the lead in the baseline and leads outright in both corrected settings, suggesting strong capabilities in handling diverse question types.

* **Effectiveness of Corrections**: Both intrinsic and external correction methods enhance model performance, suggesting that these techniques are valuable for improving accuracy and reliability. The slight advantage of external correction (S2) may indicate that providing additional context or information during the correction process is beneficial.

* **Model Consistency**: The relative performance of models remains consistent across different correction settings. Models that perform well in the baseline setting tend to maintain their relative advantage in the corrected settings.
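The "Model Consistency" point can be made precise as a pairwise check: a reversal occurs when model X scores strictly above model Y in one setting but strictly below it in another. The sketch below assumes each model is summarized by its mean over the six transcribed task scores; that summary and the names `PROFILES`, `summary`, and `compare_orders` are illustrative choices, not anything the chart defines.

```python
from itertools import combinations
from statistics import mean

LOW = (0.6, 0.7, 0.5, 0.4, 0.3, 0.2)
MID = (0.7, 0.75, 0.6, 0.5, 0.4, 0.3)
HIGH = (0.7, 0.8, 0.6, 0.5, 0.4, 0.3)

# Per-model task scores (CS-QA, GSM8K, HotpotQA, AQUA, HumanEval, MATH)
# for Base, S1, and S2, as transcribed in this section (approximate).
PROFILES = {
    "LLaMA3.1-8B-Instruct": (LOW, HIGH, HIGH),
    "LLaMA3.1-70B-Instruct": (MID, HIGH, HIGH),
    "Qwen2.5-7B-Instruct": (MID, HIGH, HIGH),
    "Qwen2.5-72B-Instruct": (HIGH, HIGH, HIGH),
    "Claude3.5-Sonnet": (HIGH, HIGH, HIGH),
    "GPT-3.5": (MID, HIGH, HIGH),
    "GPT-4o": (HIGH, (0.8, 0.85, 0.7, 0.6, 0.5, 0.4), (0.8, 0.9, 0.7, 0.6, 0.5, 0.4)),
    "QWQ-32B-Instruct": (LOW, HIGH, HIGH),
    "DeepSeek-V3": (MID, HIGH, HIGH),
    "DeepSeek-R1": (MID, HIGH, HIGH),
    "o3-mini": ((0.5, 0.6, 0.4, 0.3, 0.2, 0.1), LOW, LOW),
}
SETTINGS = ("Base", "S1", "S2")


def summary(setting: str) -> dict[str, float]:
    """Mean task score per model in one setting."""
    k = SETTINGS.index(setting)
    return {m: mean(p[k]) for m, p in PROFILES.items()}


def compare_orders(a: str, b: str) -> dict[str, int]:
    """Classify every pair of models that is strictly ordered in setting `a`.

    'kept': same strict order in `b`; 'tied': equal in `b`;
    'reversed': opposite strict order in `b`.
    """
    sa, sb = summary(a), summary(b)
    counts = {"kept": 0, "tied": 0, "reversed": 0}
    for x, y in combinations(PROFILES, 2):
        da, db = sa[x] - sa[y], sb[x] - sb[y]
        if da == 0:
            continue  # not strictly ordered in `a`, so there is no order to preserve
        if db == 0:
            counts["tied"] += 1
        elif (da > 0) == (db > 0):
            counts["kept"] += 1
        else:
            counts["reversed"] += 1
    return counts


if __name__ == "__main__":
    for a, b in (("Base", "S1"), ("Base", "S2"), ("S1", "S2")):
        print(f"{a} -> {b}:", compare_orders(a, b))
```

With the transcribed values, the check finds no reversals between any two settings, although many pairs that differ in Base end up tied in S1 and S2 because most models share one profile there.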


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Radar Charts: Model Performance Comparison Across Benchmarks

### Overview

The image presents three radar charts comparing the performance of several language models across six different benchmarks: GSM8K, MATH, HumanEval, HotpotQA, CS-QA, and AQUA. Each chart represents a different evaluation setting: "Base (Baseline) GPQA", "S1 (Intrinsic Correction) GPQA", and "S2 (External Correction) GPQA". The performance is measured on a scale from approximately 0 to 0.8, indicated by concentric circles. Each line on the radar chart represents a different language model.

### Components/Axes

* **Benchmarks (Axes):** GSM8K, MATH, HumanEval, HotpotQA, CS-QA, AQUA. These are evenly spaced around the circular charts.

* **Radial Scale:** The scale ranges from approximately 0.0 to 0.8, with markings at 0.2, 0.4, 0.6, and 0.8.

* **Models (Lines):**
  * LLaMA3.1-8B-Instruct (Dark Blue, dashed)
  * LLaMA3.1-70B-Instruct (Dark Blue, solid)
  * Qwen2.5-7B-Instruct (Orange)
  * Qwen2.5-72B-Instruct (Pink)
  * Claude3.5-Sonnet (Green)
  * GPT-3.5 (Light Orange)
  * GPT-4o (Light Green)
  * QWQ-32B-Instruct (Cyan)
  * DeepSeek-V3 (Purple, dashed)
  * DeepSeek-R1 (Purple, solid)
  * o3-mini (Red)

* **Titles:** Each chart has a title indicating the evaluation setting: "Base (Baseline) GPQA", "S1 (Intrinsic Correction) GPQA", "S2 (External Correction) GPQA".

* **Legend:** Located at the bottom of the image, the legend maps each color and line style to a specific language model.

### Detailed Analysis

**Base (Baseline) GPQA:**

* **LLaMA3.1-8B-Instruct (Dark Blue, dashed):** Shows relatively low performance across all benchmarks, with a peak around 0.3-0.4 for CS-QA and GSM8K.

* **LLaMA3.1-70B-Instruct (Dark Blue, solid):** Performs better than the 8B version, peaking around 0.5-0.6 for CS-QA and GSM8K.

* **Qwen2.5-7B-Instruct (Orange):** Exhibits moderate performance, peaking around 0.4 for GSM8K and CS-QA.

* **Qwen2.5-72B-Instruct (Pink):** Shows higher performance than the 7B version, peaking around 0.5-0.6 for GSM8K and CS-QA.

* **Claude3.5-Sonnet (Green):** Performs well, peaking around 0.6-0.7 for GSM8K and CS-QA.

* **GPT-3.5 (Light Orange):** Shows moderate performance, peaking around 0.4-0.5 for GSM8K and CS-QA.

* **GPT-4o (Light Green):** Exhibits the highest performance, peaking around 0.7-0.8 for GSM8K and CS-QA.

* **QWQ-32B-Instruct (Cyan):** Shows moderate performance, peaking around 0.4-0.5 for GSM8K and CS-QA.

* **DeepSeek-V3 (Purple, dashed):** Exhibits moderate performance, peaking around 0.4-0.5 for GSM8K and CS-QA.

* **DeepSeek-R1 (Purple, solid):** Shows higher performance than the V3 version, peaking around 0.5-0.6 for GSM8K and CS-QA.

* **o3-mini (Red):** Shows relatively low performance across all benchmarks, peaking around 0.3-0.4 for CS-QA and GSM8K.

**S1 (Intrinsic Correction) GPQA:**

* The overall trend is similar to the "Base" chart, but most models show slightly improved performance. GPT-4o continues to lead, and the LLaMA models show modest gains.

* The performance differences between the models are more pronounced in this setting.

**S2 (External Correction) GPQA:**

* Again, the trend is similar to the "Base" chart, with most models showing slightly improved performance. GPT-4o remains the top performer.

* The performance differences between the models are further amplified in this setting.

### Key Observations

* GPT-4o consistently outperforms all other models across all benchmarks and evaluation settings.

* Larger models (e.g., LLaMA3.1-70B and Qwen2.5-72B) generally perform better than their smaller counterparts (the 8B and 7B versions).

* The "Intrinsic Correction" (S1) and "External Correction" (S2) methods generally lead to slight performance improvements across most models.

* The performance variations across benchmarks are significant. Models tend to perform better on GSM8K and CS-QA compared to HumanEval and AQUA.
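The size comparison in the observations above can be expressed with the peak ranges transcribed for the Base chart. This is a minimal sketch under two assumptions: each range is summarized by its midpoint, and a single value such as "around 0.4" is treated as the range 0.4 to 0.4. `PEAKS`, `SIZE_PAIRS`, `midpoint`, `overlaps`, and `size_gaps` are hypothetical names.

```python
# Approximate Base-chart peak ranges (GSM8K / CS-QA) as transcribed above.
PEAKS = {
    "LLaMA3.1-8B-Instruct": (0.3, 0.4),
    "LLaMA3.1-70B-Instruct": (0.5, 0.6),
    "Qwen2.5-7B-Instruct": (0.4, 0.4),
    "Qwen2.5-72B-Instruct": (0.5, 0.6),
    "Claude3.5-Sonnet": (0.6, 0.7),
    "GPT-3.5": (0.4, 0.5),
    "GPT-4o": (0.7, 0.8),
    "QWQ-32B-Instruct": (0.4, 0.5),
    "DeepSeek-V3": (0.4, 0.5),
    "DeepSeek-R1": (0.5, 0.6),
    "o3-mini": (0.3, 0.4),
}

# (smaller, larger) variants of the same model family.
SIZE_PAIRS = [
    ("LLaMA3.1-8B-Instruct", "LLaMA3.1-70B-Instruct"),
    ("Qwen2.5-7B-Instruct", "Qwen2.5-72B-Instruct"),
]


def midpoint(rng: tuple[float, float]) -> float:
    low, high = rng
    return (low + high) / 2


def overlaps(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True if two closed ranges share at least one value."""
    return a[0] <= b[1] and b[0] <= a[1]


def size_gaps() -> list[tuple[str, str, float, bool]]:
    """(smaller, larger, midpoint gap, ranges overlap?) for each size pair."""
    return [(small, large,
             midpoint(PEAKS[large]) - midpoint(PEAKS[small]),
             overlaps(PEAKS[small], PEAKS[large]))
            for small, large in SIZE_PAIRS]


if __name__ == "__main__":
    for small, large, gap, overlap in size_gaps():
        print(f"{large} vs {small}: midpoint gap {gap:+.2f}, ranges overlap: {overlap}")
    print("highest midpoint:", max(PEAKS, key=lambda m: midpoint(PEAKS[m])))
```

Checking for non-overlapping ranges, not just a positive midpoint gap, makes the comparison somewhat more robust, since every value is a visual estimate.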

### Interpretation

The radar charts show the relative strengths and weaknesses of different language models across a variety of challenging benchmarks. The consistent lead of GPT-4o suggests stronger capabilities in reasoning, knowledge, and problem-solving. The gains observed with the "Intrinsic Correction" and "External Correction" methods indicate that these techniques can effectively enhance model performance. The varying performance across benchmarks highlights the importance of evaluating models on a diverse set of tasks to obtain a comprehensive understanding of their capabilities. Model size appears to be a significant factor, but differences in training data, model architecture, and optimization strategies likely also contribute to the gaps between models. The data suggests that the GPQA framework, with and without corrections, is a useful tool for evaluating and comparing language models.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Radar Chart Comparison: AI Model Performance Across Benchmarks

### Overview

The image displays three radar charts (spider plots) comparing the performance of 11 different large language models (LLMs) across six standardized benchmarks. The charts are organized to show performance under three different conditions: a baseline ("Base"), an "Intrinsic Correction" condition ("S1"), and an "External Correction" condition ("S2"). Each chart plots the same set of models on the same six axes, allowing for a direct visual comparison of how model performance changes across the three conditions.

### Components/Axes

* **Chart Titles (Top Center):**
  * Left Chart: `Base (Baseline)`
  * Middle Chart: `S1 (Intrinsic Correction)`
  * Right Chart: `S2 (External Correction)`

* **Axes (Radial Spokes):** Six benchmarks are plotted as axes radiating from the center. The axes are labeled at their outer ends. Clockwise from the top:
  1. `GPQA`
  2. `CS-QA`
  3. `HotpotQA`
  4. `HumanEval`
  5. `MATH`
  6. `AQUA`

* **Scale (Concentric Circles):** The radial scale is marked by concentric circles representing performance scores. The innermost circle is labeled `0.2`, the next `0.4`, the next `0.6`, and the outermost labeled `0.8`. The center point represents a score of 0.

* **Legend (Bottom):** A legend below the three charts maps line colors and styles to the 11 models, arranged in columns:
  * **Column 1:**
    * `LLaMA3.1-8B-Instruct` (Solid, light teal line)
    * `LLaMA3.1-70B-Instruct` (Solid, light yellow-green line)
  * **Column 2:**
    * `Qwen2.5-7B-Instruct` (Solid, light purple line)
    * `Qwen2.5-72B-Instruct` (Solid, salmon pink line)
  * **Column 3:**
    * `Claude3.5-Sonnet` (Solid, medium blue line)
    * `GPT-3.5` (Solid, orange line)
  * **Column 4:**
    * `GPT-4o` (Solid, light green line)
    * `QWQ-32B-Instruct` (Dashed, pink line)
  * **Column 5:**
    * `DeepSeek-V3` (Dashed, purple line)
    * `DeepSeek-R1` (Dashed, light blue line)
  * **Column 6:**
    * `o3-mini` (Dashed, light pink line)

### Detailed Analysis

**1. Base (Baseline) Chart:**

* **Trend:** Most models show a similar, somewhat irregular hexagonal shape, indicating varied performance across benchmarks. Performance is generally strongest on `GPQA` and `CS-QA` (closer to the 0.8 ring) and weakest on `MATH` and `AQUA` (often between 0.4 and 0.6).

* **Key Data Points (Approximate):**

* **Top Performers (Outermost lines):** `Claude3.5-Sonnet` (blue) and `GPT-4o` (green) consistently form the outermost shape, indicating the highest overall scores. They approach or exceed 0.8 on `GPQA` and `CS-QA`.

* **Mid-Tier:** `Qwen2.5-72B-Instruct` (salmon), `LLaMA3.1-70B-Instruct` (yellow-green), and `GPT-3.5` (orange) form a cluster just inside the top performers.

* **Lower-Tier:** `LLaMA3.1-8B-Instruct` (teal) and `Qwen2.5-7B-Instruct` (purple) are generally the innermost lines, indicating lower scores, particularly on `MATH` and `AQUA` where they dip near or below 0.4.

* **Notable Outlier:** The dashed pink line for `QWQ-32B-Instruct` shows a very distinct shape. It has a pronounced spike towards `HotpotQA` (near 0.8) but is the innermost line on `MATH` and `AQUA` (below 0.4), indicating highly specialized performance.

**2. S1 (Intrinsic Correction) Chart:**

* **Trend:** The overall shapes expand outward compared to the Base chart, suggesting a general improvement in scores across most models and benchmarks after intrinsic correction. The relative ordering of models remains similar.

* **Key Changes:**

* The gap between the top performers (`Claude3.5-Sonnet`, `GPT-4o`) and the mid-tier narrows slightly.

* The lower-tier models (`LLaMA3.1-8B-Instruct`, `Qwen2.5-7B-Instruct`) show noticeable improvement, moving further from the center.

* The specialized shape of `QWQ-32B-Instruct` (dashed pink) becomes less extreme; its low scores on `MATH`/`AQUA` improve, while its high score on `HotpotQA` remains strong.

**3. S2 (External Correction) Chart:**

* **Trend:** This chart shows the most significant expansion and convergence of shapes. The performance of nearly all models improves further, and the differences between them become much smaller. The lines are tightly clustered near the outer edge of the chart.

* **Key Changes:**

* **Massive Convergence:** Almost all models now score between approximately 0.7 and 0.9 on all six benchmarks. The distinct performance profiles seen in the Base chart are largely erased.

* **Top Cluster:** `Claude3.5-Sonnet`, `GPT-4o`, `Qwen2.5-72B-Instruct`, `LLaMA3.1-70B-Instruct`, and `GPT-3.5` are nearly indistinguishable at the top.

* **Dramatic Improvement:** The smaller models (`LLaMA3.1-8B-Instruct`, `Qwen2.5-7B-Instruct`) and the specialized `QWQ-32B-Instruct` show the most dramatic gains, now performing at a level comparable to the much larger models in the baseline.

* **Dashed-Line Models:** The dashed lines for `DeepSeek-V3`, `DeepSeek-R1`, and `o3-mini` also fall within this cluster, indicating high performance under the S2 condition.

### Key Observations

1. **Performance Hierarchy:** In the baseline, a clear hierarchy exists: proprietary models (Claude, GPT-4o) > large open-source models (70B/72B) > smaller open-source models (7B/8B).

2. **Benchmark Difficulty:** `MATH` and `AQUA` appear to be the most challenging benchmarks for all models in the baseline, as scores are consistently lowest on these axes.

3. **Specialization:** The `QWQ-32B-Instruct` model exhibits a unique performance profile in the baseline, excelling at `HotpotQA` but struggling with `MATH` and `AQUA`.

4. **Correction Impact:** Both "Intrinsic" (S1) and especially "External" (S2) correction methods lead to substantial performance gains. The S2 condition acts as a powerful equalizer, dramatically reducing the performance gap between model sizes and architectures.

5. **Larger Gain from S2:** The improvement from Base to S1 is significant, but the leap from S1 to S2 is even more pronounced, suggesting the external correction method is highly effective.
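The "convergence" and "equalizer" effect described for S2 can be quantified as the gap between the best and worst model on each axis, averaged over the axes. The sketch below is one possible measure, not one the chart itself reports; `axis_spreads`, `mean_spread`, and `converges` are hypothetical names, and real inputs would be per-model scores read off each panel in a shared axis order. The demo values are toy numbers, not readings from the figure.

```python
from statistics import mean


def axis_spreads(scores: dict[str, list[float]]) -> list[float]:
    """Best-minus-worst score on each axis.

    `scores` maps a model name to its scores, one per axis, all listed in
    the same axis order (e.g. GPQA, CS-QA, HotpotQA, HumanEval, MATH, AQUA).
    """
    rows = list(scores.values())
    if not rows:
        raise ValueError("need at least one model")
    n_axes = len(rows[0])
    if any(len(row) != n_axes for row in rows):
        raise ValueError("every model needs one score per axis")
    return [max(row[i] for row in rows) - min(row[i] for row in rows)
            for i in range(n_axes)]


def mean_spread(scores: dict[str, list[float]]) -> float:
    """Average best-to-worst gap across all axes."""
    return mean(axis_spreads(scores))


def converges(before: dict[str, list[float]], after: dict[str, list[float]]) -> bool:
    """True if the average gap between models shrinks from `before` to `after`."""
    return mean_spread(after) < mean_spread(before)


if __name__ == "__main__":
    # Toy two-model, two-axis inputs for illustration only (not chart readings).
    toy_before = {"model_a": [0.8, 0.4], "model_b": [0.6, 0.2]}
    toy_after = {"model_a": [0.85, 0.8], "model_b": [0.8, 0.75]}
    print(f"mean spread before: {mean_spread(toy_before):.2f}")
    print(f"mean spread after:  {mean_spread(toy_after):.2f}")
    print("converges:", converges(toy_before, toy_after))
```

A per-axis measure is used rather than a single overall average so that a shrinking gap on `MATH` and `AQUA` is not masked by axes where models were already close.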

### Interpretation

This visualization demonstrates the profound impact of correction techniques on LLM benchmark performance. The data suggests that:

* **Raw Capability vs. Corrected Performance:** The baseline ("Base") chart reflects the raw, unaided reasoning and knowledge capabilities of the models, where scale (parameter count) and training data quality create a clear performance stratification.

* **The Power of External Tools/Methods:** The dramatic convergence in the "S2 (External Correction)" chart implies that when models are augmented with external correction mechanisms (which could involve tools, retrieval-augmented generation, or specialized verification modules), their inherent limitations in specific domains (like mathematical reasoning) can be largely overcome. This narrows the gap between smaller and larger models.

* **Benchmark Sensitivity:** The consistent difficulty of `MATH` and `AQUA` in the baseline highlights these as areas where model reasoning is most fragile without assistance. The fact that correction methods most dramatically improve scores on these axes underscores their value for practical applications requiring robust reasoning.

* **Strategic Implication:** For developers, this indicates that investing in external correction systems (S2) may yield greater performance improvements and cost-efficiency (by enabling smaller models to perform like larger ones) than simply scaling up model size alone. The charts argue for a paradigm where model capability is a combination of base model intelligence and the sophistication of its supporting correction ecosystem.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Radar Chart: Model Performance Across Evaluation Metrics

### Overview

The image contains three horizontally aligned radar charts comparing the performance of multiple AI models across seven evaluation metrics: GPQA, CS-QA, HotpotQA, GSM8K, AQUA, MATH, and HumanEval. Each chart represents a different evaluation framework: "Base (Baseline)", "S1 (Intrinsic Correction)", and "S2 (External Correction)". The charts use a circular layout with radial axes scaled from 0.2 to 0.8, and models are represented by colored lines connecting their scores across metrics.

### Components/Axes

- **Radial Axes**:
  - GPQA (top)
  - CS-QA (top-right)
  - HotpotQA (right)
  - GSM8K (bottom-right)
  - AQUA (bottom)
  - MATH (bottom-left)
  - HumanEval (left)

- **Legends**:
  - **Base (Baseline)**:
    - LLaMA3.1-8B-Instruct (teal)
    - Qwen2.5-7B-Instruct (purple)
    - Claude3.5-Sonnet (blue)
    - GPT-4o (green)
    - DeepSeek-V3 (dark purple)
    - o3-mini (pink)
  - **S1 (Intrinsic Correction)**:
    - LLaMA3.1-70B-Instruct (yellow)
    - Qwen2.5-72B-Instruct (orange)
    - GPT-3.5 (red)
    - QWQ-32B-Instruct (dashed pink)
    - DeepSeek-R1 (dashed blue)
  - **S2 (External Correction)**: Same models as S1 but with updated performance values.

- **Scale**: Radial axes marked at 0.2, 0.4, 0.6, 0.8.

### Detailed Analysis

#### Base (Baseline)

- **LLaMA3.1-8B-Instruct** (teal): Peaks at GPQA (~0.75), lowest in MATH (~0.45).

- **Qwen2.5-7B-Instruct** (purple): Strong in CS-QA (~0.7), weaker in GSM8K (~0.55).

- **Claude3.5-Sonnet** (blue): Balanced performance, highest in HumanEval (~0.7).

- **GPT-4o** (green): Highest in MATH (~0.75), moderate in GPQA (~0.65).

- **DeepSeek-V3** (dark purple): Strong in CS-QA (~0.7), lower in AQUA (~0.5).

- **o3-mini** (pink): Highest in HumanEval (~0.75), lowest in GSM8K (~0.5).

#### S1 (Intrinsic Correction)

- **LLaMA3.1-70B-Instruct** (yellow): Improved in MATH (~0.7), slight drop in GPQA (~0.65).

- **Qwen2.5-72B-Instruct** (orange): Increased CS-QA (~0.75), stable HumanEval (~0.65).

- **GPT-3.5** (red): Minimal changes, peaks in AQUA (~0.6).

- **QWQ-32B-Instruct** (dashed pink): New entry, strong in CS-QA (~0.7), weak in MATH (~0.4).

- **DeepSeek-R1** (dashed blue): Improved HumanEval (~0.7), slight drop in GSM8K (~0.55).

#### S2 (External Correction)

- **LLaMA3.1-70B-Instruct** (yellow): Further gains in MATH (~0.75), stable GPQA (~0.65).

- **Qwen2.5-72B-Instruct** (orange): CS-QA peaks at ~0.8, HumanEval drops to ~0.6.

- **GPT-3.5** (red): Slight improvement in AQUA (~0.65).

- **QWQ-32B-Instruct** (dashed pink): CS-QA remains ~0.7, MATH improves to ~0.45.

- **DeepSeek-R1** (dashed blue): HumanEval peaks at ~0.75, GSM8K drops to ~0.5.

### Key Observations

1. **Model Specialization**:
   - GPT-4o and LLaMA3.1-70B-Instruct dominate MATH.
   - Qwen2.5-72B-Instruct and DeepSeek-R1 excel in CS-QA and HumanEval.

2. **Correction Impact**:
   - S1 and S2 show mixed results: some models improve in specific metrics (e.g., Qwen2.5-72B-Instruct in CS-QA) while others decline (e.g., LLaMA3.1-70B-Instruct in GPQA).
   - External correction (S2) amplifies performance gaps between models.

3. **Outliers**:
   - o3-mini underperforms in GSM8K across all frameworks.
   - QWQ-32B-Instruct shows inconsistent results, excelling in CS-QA but struggling in MATH.
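The "mixed results" observation can be checked against the S1 and S2 values this section reports. The sketch below covers only the model/metric pairs for which both an S1 and an S2 reading are given above, and it treats changes no larger than a tolerance of 0.01 as flat; that tolerance is an assumption chosen because the readings are rounded to about 0.05. `S1_TO_S2`, `classify`, `labels`, and `models_with_trade_offs` are hypothetical names.

```python
from collections import Counter

# (model, metric) -> (approximate S1 reading, approximate S2 reading), as transcribed above.
S1_TO_S2 = {
    ("LLaMA3.1-70B-Instruct", "MATH"): (0.70, 0.75),
    ("LLaMA3.1-70B-Instruct", "GPQA"): (0.65, 0.65),
    ("Qwen2.5-72B-Instruct", "CS-QA"): (0.75, 0.80),
    ("Qwen2.5-72B-Instruct", "HumanEval"): (0.65, 0.60),
    ("GPT-3.5", "AQUA"): (0.60, 0.65),
    ("QWQ-32B-Instruct", "CS-QA"): (0.70, 0.70),
    ("QWQ-32B-Instruct", "MATH"): (0.40, 0.45),
    ("DeepSeek-R1", "HumanEval"): (0.70, 0.75),
    ("DeepSeek-R1", "GSM8K"): (0.55, 0.50),
}


def classify(before: float, after: float, tol: float = 0.01) -> str:
    """Label a change as 'up', 'down', or 'flat' (|change| <= tol)."""
    delta = after - before
    if delta > tol:
        return "up"
    if delta < -tol:
        return "down"
    return "flat"


def labels() -> dict[tuple[str, str], str]:
    """Direction of change from S1 to S2 for every transcribed (model, metric) pair."""
    return {key: classify(*vals) for key, vals in S1_TO_S2.items()}


def models_with_trade_offs() -> set[str]:
    """Models that gain on at least one metric and lose on another."""
    by_model: dict[str, set[str]] = {}
    for (model, _metric), label in labels().items():
        by_model.setdefault(model, set()).add(label)
    return {m for m, seen in by_model.items() if {"up", "down"} <= seen}


if __name__ == "__main__":
    print(Counter(labels().values()))
    print("trade-offs:", sorted(models_with_trade_offs()))
```

With these readings, Qwen2.5-72B-Instruct and DeepSeek-R1 each gain on one metric while losing on another between S1 and S2, which is the trade-off pattern the interpretation below describes.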

### Interpretation

The charts suggest that correction frameworks (S1/S2) do not universally improve model performance. Instead, gains in one metric (e.g., CS-QA for Qwen2.5-72B-Instruct) often come at the cost of others (e.g., HumanEval). The baseline models (Base) exhibit more balanced performance, while larger models (e.g., LLaMA3.1-70B-Instruct) show greater specialization. The data implies that correction methods may introduce trade-offs, highlighting the need for context-specific evaluation. Notably, HumanEval scores remain relatively stable across frameworks, suggesting it is less sensitive to correction techniques compared to task-specific metrics like MATH or CS-QA.
