## Diagram: Parallel Neural Network Processing Architecture

### Overview

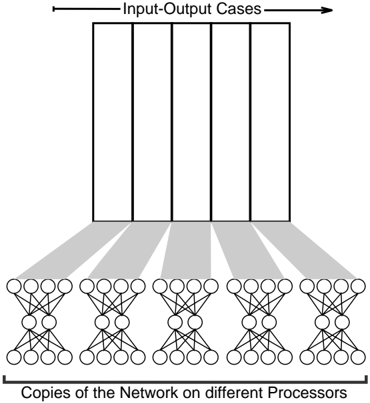

The image is a black-and-white schematic diagram illustrating a parallel computing architecture for neural networks. It depicts a data parallelism strategy where multiple copies of the same neural network model are executed simultaneously on different processors, each processing a distinct subset of input-output cases.

### Components

The diagram is organized into two primary horizontal sections connected by shaded pathways.

1. **Top Section (Data Layer):**

   * **Label:** "Input-Output Cases" (centered above the section).

   * **Components:** Five identical, vertically oriented rectangles arranged side-by-side. These represent distinct batches or partitions of the training/validation dataset.

2. **Bottom Section (Processing Layer):**

   * **Label:** "Copies of the Network on different Processors" (centered below the section).

   * **Components:** Five identical neural network diagrams arranged side-by-side. Each network is a standard fully connected (dense) feedforward architecture with:

     * An **input layer** of 5 nodes (circles).

     * A **hidden layer** of 3 nodes.

     * An **output layer** of 5 nodes.

     * Lines connecting every node in one layer to every node in the next, indicating a fully connected topology.

3. **Connections (Data Flow):**

   * Five gray, shaded, trapezoidal pathways connect the top and bottom sections.

   * Each pathway originates from the base of one "Input-Output Cases" rectangle and fans out to connect to the entirety of one corresponding neural network copy below. This visually represents the distribution of a specific data partition to a dedicated processor running a model copy.
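The one-to-one distribution of cases to processors can be sketched in Python as follows. This is a minimal illustration, not something the figure specifies: the contiguous, near-equal split and the `partition` helper are assumptions (real systems often shuffle cases before sharding), and the 12-case dataset is hypothetical.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def partition(cases: Sequence[T], num_processors: int) -> List[List[T]]:
    """Hypothetical helper: split cases into contiguous shards whose sizes differ by at most one."""
    if num_processors <= 0:
        raise ValueError("num_processors must be positive")
    base, extra = divmod(len(cases), num_processors)
    shards: List[List[T]] = []
    start = 0
    for i in range(num_processors):
        size = base + (1 if i < extra else 0)  # the first `extra` shards get one more case
        shards.append(list(cases[start:start + size]))
        start += size
    return shards


# Hypothetical data: 12 (input, target) cases for the 5 processors in the figure.
cases = [(x, 2 * x) for x in range(12)]
for processor_id, shard in enumerate(partition(cases, 5)):
    # Shard i goes only to processor i: the one-to-one pathways in the diagram.
    print(processor_id, [x for x, _ in shard])
```

With 12 cases and 5 processors, the shard sizes come out as 3, 3, 2, 2, 2, and every case lands in exactly one shard.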

### Detailed Analysis

* **Spatial Grounding:** The "Input-Output Cases" label and rectangles occupy the top ~40% of the diagram. The "Copies of the Network..." label and network diagrams occupy the bottom ~40%. The central ~20% is dedicated to the shaded connection pathways.

* **Component Isolation:**

  * **Header Region:** Contains the title "Input-Output Cases" and the five data partition rectangles.

  * **Main Flow Region:** Contains the five shaded pathways illustrating the one-to-one mapping of data partitions to network copies.

  * **Footer Region:** Contains the five neural network diagrams and the title "Copies of the Network on different Processors."

* **Network Topology Detail:** The five network copies are structurally identical, consistent with the "same model" premise of data parallelism. Every copy has the same layer sizes: 5 (input) → 3 (hidden) → 5 (output).
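A single copy of the 5 → 3 → 5 network can be sketched with plain dense layers. The figure shows only nodes and edges, so the biases, the `tanh` hidden activation, the linear output layer, and the random initialization below are all assumptions, and the helper names are hypothetical. Deep-copying the parameters five times mirrors the five structurally identical copies.

```python
import copy
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]  # (weights[out][in], biases[out])


def init_layer(n_in: int, n_out: int, rng: random.Random) -> Layer:
    # One weight per edge between the two layers, plus one (assumed) bias per output node.
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x: Vector, layer: Layer) -> Vector:
    # Each output node sums over every input node: the fully connected lines in the figure.
    weights, biases = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]


def forward(x: Vector, params: List[Layer]) -> Vector:
    hidden = [math.tanh(h) for h in dense(x, params[0])]  # tanh is an assumed activation
    return dense(hidden, params[1])  # linear output layer (assumption)


def count_params(params: List[Layer]) -> int:
    return sum(len(w) * len(w[0]) + len(b) for w, b in params)


rng = random.Random(0)
params = [init_layer(5, 3, rng), init_layer(3, 5, rng)]  # 5 -> 3 -> 5
replicas = [copy.deepcopy(params) for _ in range(5)]  # five copies, one per processor

x = [1.0, 0.0, -1.0, 0.5, 0.2]
outputs = [forward(x, r) for r in replicas]
print(count_params(params))  # 38 = (5*3 + 3) + (3*5 + 5)
print(all(o == outputs[0] for o in outputs))  # True: identical copies give identical outputs
```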

### Key Observations

1. **Perfect Symmetry:** The diagram is perfectly symmetrical along a vertical axis, emphasizing the uniform and independent nature of the parallel processes.

2. **One-to-One Mapping:** There is a strict, unambiguous one-to-one correspondence between an input-output case partition and a network copy. No sharing of data or model instances is depicted.

3. **Abstraction Level:** The diagram is a high-level conceptual model. It abstracts away details of the processors themselves, the synchronization mechanism (e.g., gradient averaging), and the specific neural network operation, focusing solely on the data flow and replication pattern.

### Interpretation

This diagram is a canonical representation of **data parallelism** in distributed deep learning. The core concept it demonstrates is the partitioning of a large dataset ("Input-Output Cases") into smaller, independent chunks. Each chunk is processed by an identical copy of a neural network model running on a separate processor.
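To make the "separate processor" idea concrete, the sketch below runs the same model function on each chunk concurrently and stitches the predictions back together in order. It assumes a toy `model` (a hypothetical stand-in for one network copy) and uses threads from Python's `concurrent.futures` to stand in for processors; for CPU-bound Python work, a real system would use separate processes or accelerators instead.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def model(x: float) -> float:
    # Hypothetical stand-in for one network copy; every worker runs the same function.
    return 3.0 * x + 1.0


def run_copy(shard: Sequence[float]) -> List[float]:
    # One "processor": apply its model copy to its own chunk only.
    return [model(x) for x in shard]


def data_parallel_predict(inputs: Sequence[float], num_workers: int) -> List[float]:
    if not inputs:
        return []
    chunk = -(-len(inputs) // num_workers)  # ceiling division
    shards = [inputs[i:i + chunk] for i in range(0, len(inputs), chunk)]
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # map() yields results in shard order, so concatenation restores input order.
        per_shard = list(pool.map(run_copy, shards))
    return [y for outputs in per_shard for y in outputs]


inputs = [float(i) for i in range(10)]
print(data_parallel_predict(inputs, 5) == [model(x) for x in inputs])  # True
```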

The visual flow, from five data partitions at the top, through five dedicated pathways, to five identical models at the bottom, communicates the strategy's purpose: to accelerate training or inference by dividing the computational workload across processors. The diagram does not show what happens afterward. For inference, each copy's predictions can simply be collected; for training, the copies stay identical only if their results are combined, typically by averaging the gradients computed on each partition so that every copy applies the same weight update.
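The aggregation step the diagram leaves out can be sketched as synchronous data-parallel gradient descent. This is a minimal sketch under stated assumptions: a linear model with weight `w` and bias `b` and a squared-error loss replace the neural network, a plain average replaces a real all-reduce, and the data are hypothetical. Because the five shards are equal in size, the averaged gradient equals the full-batch gradient; with unequal shards, each local gradient would need to be weighted by its shard size.

```python
from typing import List, Sequence, Tuple

Case = Tuple[float, float]  # (input, target)


def grad(w: float, b: float, shard: Sequence[Case]) -> Tuple[float, float]:
    # Gradient of the mean of 0.5 * (w*x + b - y)**2 over one shard.
    n = len(shard)
    dw = sum((w * x + b - y) * x for x, y in shard) / n
    db = sum((w * x + b - y) for x, y in shard) / n
    return dw, db


def averaged_gradient(w: float, b: float, shards: List[List[Case]]) -> Tuple[float, float]:
    # Each copy computes a local gradient on its own shard (in parallel, in a real system).
    local = [grad(w, b, s) for s in shards]
    # Stand-in for an all-reduce: average the local gradients across copies.
    k = len(local)
    return sum(g[0] for g in local) / k, sum(g[1] for g in local) / k


def sync_step(w: float, b: float, shards: List[List[Case]], lr: float) -> Tuple[float, float]:
    # Every copy applies the same averaged update, so all copies remain identical.
    dw, db = averaged_gradient(w, b, shards)
    return w - lr * dw, b - lr * db


# Hypothetical data from y = 2x + 1, split into five equal shards of four cases.
cases = [(i / 10, 2.0 * (i / 10) + 1.0) for i in range(20)]
shards = [cases[i:i + 4] for i in range(0, 20, 4)]

w, b = 0.0, 0.0
for _ in range(500):
    w, b = sync_step(w, b, shards, lr=0.5)
print(round(w, 3), round(b, 3))  # 2.0 1.0
```

Because every copy applies the same averaged update, the copies never diverge, which is what the identical networks in the diagram presuppose.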

The choice of a small, fully connected network as the icon for "the network" is illustrative rather than prescriptive: it stands in for any neural network architecture (e.g., CNN, Transformer) that would be replicated in a real-world implementation. The diagram's strength is the clarity with which it illustrates this fundamental architectural pattern, which makes it a useful explanatory tool for concepts in parallel computing and scalable machine learning systems.