
## Diagram: Experimental Conditions & Outcomes of AI Interaction

### Overview

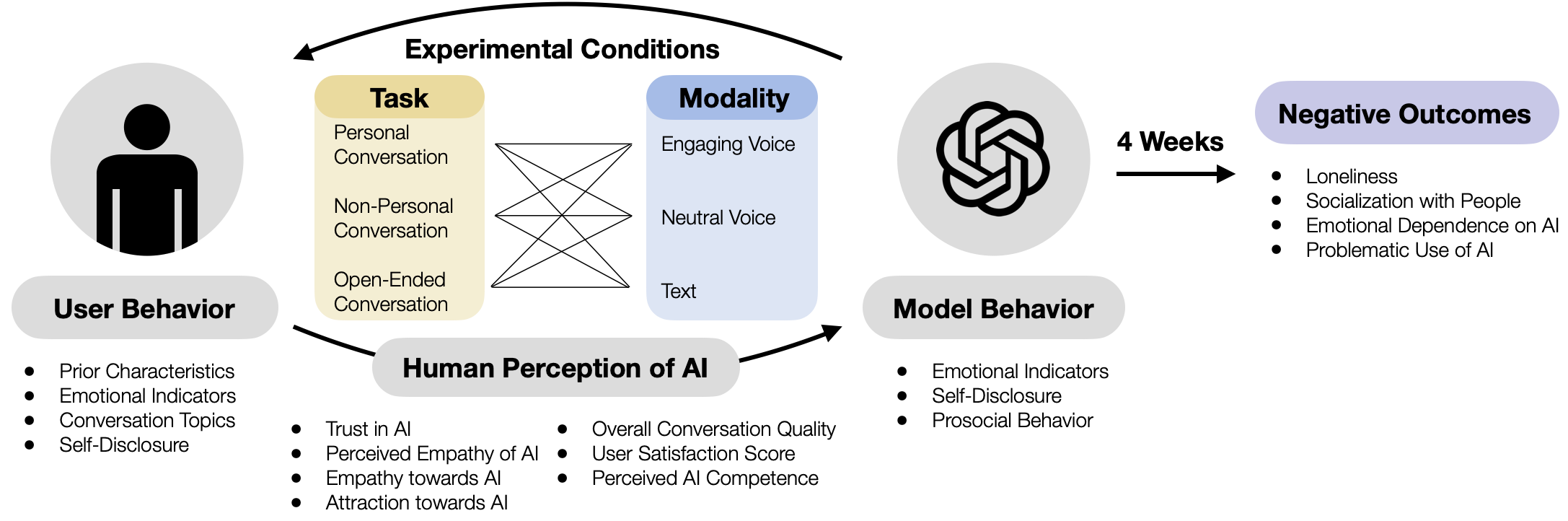

This diagram illustrates the experimental conditions and potential negative outcomes of human interaction with Artificial Intelligence (AI). It depicts a flow from user behavior, through experimental conditions, to model behavior, and ultimately to negative outcomes over a period of 4 weeks. The central element is a network of interactions defined by "Task" and "Modality".

### Components/Axes

The diagram consists of the following components:

* **User Behavior:** Located at the bottom-left, listing characteristics observed in users.

* **Experimental Conditions:** Positioned at the top-center, defined by "Task" and "Modality".

* **Human Perception of AI:** Located in the center, bridging User and Model Behavior.

* **Model Behavior:** Positioned at the bottom-right, listing characteristics of the AI model.

* **Negative Outcomes:** Located at the top-right, listing potential consequences of the interaction.

* **Timeframe:** A "4 Weeks" label indicating the duration of the experiment.

The "Task" dimension includes:

* Personal Conversation

* Non-Personal Conversation

* Open-Ended Conversation

The "Modality" dimension includes:

* Engaging Voice

* Neutral Voice

* Text

### Detailed Analysis / Content Details

**User Behavior:**

* Prior Characteristics

* Emotional Indicators

* Conversation Topics

* Self-Disclosure

**Human Perception of AI:**

* Trust in AI

* Perceived Empathy of AI

* Empathy towards AI

* Attraction towards AI

* Overall Conversation Quality

* User Satisfaction Score

* Perceived AI Competence

**Model Behavior:**

* Emotional Indicators

* Self-Disclosure

* Prosocial Behavior

**Negative Outcomes:**

* Loneliness

* Socialization with People

* Emotional Dependence on AI

* Problematic Use of AI

The diagram shows connections between the "Task" and "Modality" dimensions, forming a network. Each of the three tasks ("Personal Conversation", "Non-Personal Conversation", and "Open-Ended Conversation") connects to each of the three modalities ("Engaging Voice", "Neutral Voice", and "Text"), giving nine Task-Modality combinations. These connections lead to "Human Perception of AI", which then influences "Model Behavior", and finally to "Negative Outcomes" after 4 weeks.
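The Task-to-Modality connections described here can be enumerated as a fully crossed grid. The following is a minimal sketch in Python, not code from the study: the labels are taken from the diagram, while the names `TASKS`, `MODALITIES`, and `enumerate_conditions` are hypothetical and introduced only for illustration.

```python
from itertools import product

# Labels copied from the diagram's "Task" and "Modality" dimensions.
TASKS = [
    "Personal Conversation",
    "Non-Personal Conversation",
    "Open-Ended Conversation",
]
MODALITIES = ["Engaging Voice", "Neutral Voice", "Text"]


def enumerate_conditions(tasks, modalities):
    """Return every (task, modality) pair, assuming a fully crossed network."""
    return list(product(tasks, modalities))


conditions = enumerate_conditions(TASKS, MODALITIES)

# Print one line per Task-Modality combination.
for task, modality in conditions:
    print(f"{task} x {modality}")
```

The sketch assumes every task is paired with every modality, which is what the connections in the diagram indicate. It says nothing about how participants were assigned to combinations.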

### Key Observations

The diagram highlights the interplay between the type of conversation (Task) and the way the AI communicates (Modality) in shaping human perception and, ultimately, potential negative consequences. The network structure suggests that multiple combinations of Task and Modality can lead to the same perceptions and outcomes. The "4 Weeks" label suggests a longitudinal study design.

### Interpretation

This diagram represents a conceptual model for investigating the psychological effects of interacting with AI. It suggests that the way an AI is designed to converse (Task and Modality) can affect how users perceive it, and that these perceptions can contribute to negative outcomes such as loneliness and emotional dependence. The diagram implies a hypothesis that certain combinations of Task and Modality are more likely than others to lead to these outcomes. "Human Perception of AI" sits between the other components as a mediating factor, which indicates that the impact of AI is not modeled as direct but as filtered through individual interpretation and emotional response. The diagram is a high-level framework for research and does not provide specific data or quantitative relationships.