TECHNICAL ASSET FINGERPRINT

794d292a324e4abbfe2e676c



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Bar Charts: LLM Performance Comparison

### Overview

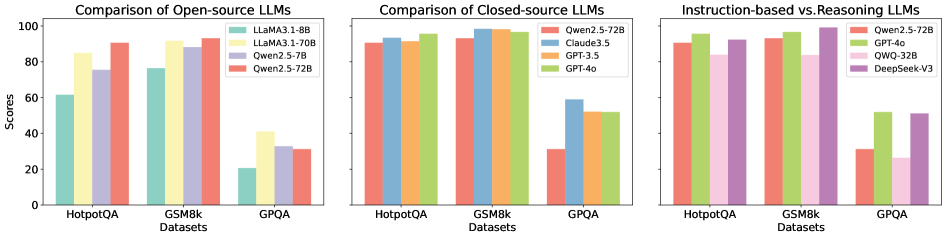

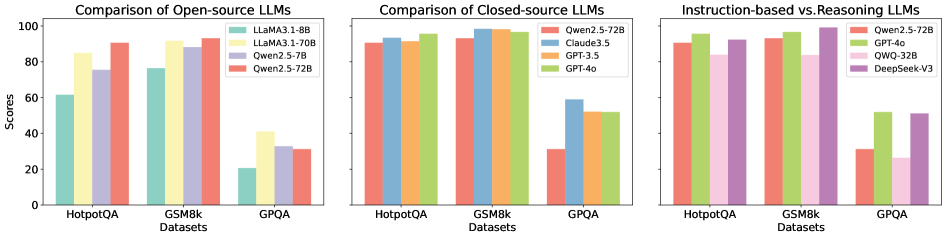

The image presents three bar charts comparing the performance of different Large Language Models (LLMs) on three datasets: HotpotQA, GSM8k, and GPQA. The charts are grouped by LLM type: Open-source, Closed-source, and Instruction-based vs. Reasoning. The y-axis represents scores, and the x-axis represents the datasets.

### Components/Axes

**General Chart Elements:**

* **Title (Left Chart):** Comparison of Open-source LLMs

* **Title (Middle Chart):** Comparison of Closed-source LLMs

* **Title (Right Chart):** Instruction-based vs. Reasoning LLMs

* **Y-axis Label:** Scores

* **Y-axis Scale:** 0 to 100, with tick marks at 20, 40, 60, 80, and 100.

* **X-axis Label:** Datasets

* **X-axis Categories:** HotpotQA, GSM8k, GPQA

**Legends:**

* **Left Chart (Open-source LLMs):** Located in the top-right corner of the chart.

* Light Green: LLaMA3.1-8B

* Yellow: LLaMA3.1-70B

* Lavender: Qwen2.5-7B

* Salmon: Qwen2.5-72B

* **Middle Chart (Closed-source LLMs):** Located in the top-right corner of the chart.

* Salmon: Qwen2.5-72B

* Light Blue: Claude3.5

* Orange: GPT-3.5

* Green: GPT-4o

* **Right Chart (Instruction-based vs. Reasoning LLMs):** Located in the top-right corner of the chart.

* Salmon: Qwen2.5-72B

* Green: GPT-4o

* Pink: QWQ-32B

* Purple: DeepSeek-V3

### Detailed Analysis

**1. Comparison of Open-source LLMs:**

* **LLaMA3.1-8B (Light Green):**

* HotpotQA: ~62

* GSM8k: ~77

* GPQA: ~20

* **LLaMA3.1-70B (Yellow):**

* HotpotQA: ~85

* GSM8k: ~92

* GPQA: ~25

* **Qwen2.5-7B (Lavender):**

* HotpotQA: ~75

* GSM8k: ~88

* GPQA: ~28

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~92

* GSM8k: ~94

* GPQA: ~30

**2. Comparison of Closed-source LLMs:**

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~92

* GSM8k: ~95

* GPQA: ~30

* **Claude3.5 (Light Blue):**

* HotpotQA: ~93

* GSM8k: ~97

* GPQA: ~58

* **GPT-3.5 (Orange):**

* HotpotQA: ~95

* GSM8k: ~98

* GPQA: ~52

* **GPT-4o (Green):**

* HotpotQA: ~97

* GSM8k: ~98

* GPQA: ~52

**3. Instruction-based vs. Reasoning LLMs:**

* **Qwen2.5-72B (Salmon):**

* HotpotQA: ~92

* GSM8k: ~95

* GPQA: ~30

* **GPT-4o (Green):**

* HotpotQA: ~97

* GSM8k: ~98

* GPQA: ~52

* **QWQ-32B (Pink):**

* HotpotQA: ~90

* GSM8k: ~92

* GPQA: ~28

* **DeepSeek-V3 (Purple):**

* HotpotQA: ~92

* GSM8k: ~94

* GPQA: ~32

### Key Observations

* **Dataset Difficulty:** All models generally perform best on GSM8k and HotpotQA, and significantly worse on GPQA.

* **Open-source Performance:** The 72B parameter version of Qwen2.5 consistently outperforms the other open-source models across all datasets. LLaMA3.1-8B performs the worst.

* **Closed-source Performance:** GPT-4o and GPT-3.5 show very high performance on HotpotQA and GSM8k, with GPT-4o slightly edging out GPT-3.5. Claude3.5 also performs well and has the highest GPQA score in this chart (~58).

* **Instruction vs. Reasoning:** GPT-4o generally outperforms Qwen2.5-72B, QWQ-32B, and DeepSeek-V3, especially on GPQA.
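To make the "Dataset Difficulty" observation concrete, the sketch below encodes the approximate Chart 1 readings listed under Detailed Analysis (eyeballed bar heights, not published scores) and computes how many points each model loses on GPQA relative to its better score on HotpotQA or GSM8k. The dictionary layout and the `gpqa_drop` helper are illustrative choices, not part of the original analysis.

```python
# Approximate Chart 1 (open-source) readings from the Detailed Analysis;
# these are eyeballed bar heights, not official benchmark results.
chart1 = {
    "LLaMA3.1-8B": {"HotpotQA": 62, "GSM8k": 77, "GPQA": 20},
    "LLaMA3.1-70B": {"HotpotQA": 85, "GSM8k": 92, "GPQA": 25},
    "Qwen2.5-7B": {"HotpotQA": 75, "GSM8k": 88, "GPQA": 28},
    "Qwen2.5-72B": {"HotpotQA": 92, "GSM8k": 94, "GPQA": 30},
}


def gpqa_drop(scores):
    """Hypothetical helper: GPQA score subtracted from each model's best HotpotQA/GSM8k score."""
    return {
        model: max(s["HotpotQA"], s["GSM8k"]) - s["GPQA"]
        for model, s in scores.items()
    }


for model, drop in gpqa_drop(chart1).items():
    print(f"{model}: {drop} points lower on GPQA")
```

On these readings every open-source model loses between 57 and 67 points on GPQA, which is the size of the gap behind "significantly worse".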

### Interpretation

The data suggests that model size (parameter count) is a significant factor in performance for open-source models, as evidenced by the difference between LLaMA3.1-8B and LLaMA3.1-70B. Closed-source models generally outperform open-source models, particularly on the GPQA dataset, which may indicate better reasoning capabilities. The performance differences between instruction-based and reasoning LLMs on GPQA suggest that some models are better suited for complex reasoning tasks. The consistently low scores on GPQA across all model types indicate that this dataset is particularly challenging.
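The size argument in the paragraph above can be checked the same way. This sketch, again using the approximate Chart 1 readings and a hypothetical `scaling_gain` helper, subtracts each family's ~7-8B score from its ~70-72B score on every dataset.

```python
# Approximate Chart 1 readings, grouped by model family (eyeballed, not exact).
readings = {
    "LLaMA3.1": {
        "small": {"HotpotQA": 62, "GSM8k": 77, "GPQA": 20},  # LLaMA3.1-8B
        "large": {"HotpotQA": 85, "GSM8k": 92, "GPQA": 25},  # LLaMA3.1-70B
    },
    "Qwen2.5": {
        "small": {"HotpotQA": 75, "GSM8k": 88, "GPQA": 28},  # Qwen2.5-7B
        "large": {"HotpotQA": 92, "GSM8k": 94, "GPQA": 30},  # Qwen2.5-72B
    },
}


def scaling_gain(family):
    """Hypothetical helper: points gained per dataset by moving from the small to the large model."""
    small, large = family["small"], family["large"]
    return {ds: large[ds] - small[ds] for ds in small}


for name, family in readings.items():
    print(name, scaling_gain(family))
```

With these values the gain from scaling is largest on HotpotQA and smallest on GPQA for both families (+5 for LLaMA3.1, +2 for Qwen2.5), so size helps most on the datasets where the models already do reasonably well.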


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Bar Chart: LLM Performance Comparison

### Overview

The image presents a comparative analysis of Large Language Models (LLMs) across three datasets: HotpotQA, GSM8k, and GPQA. The comparison is segmented into three charts: Open-source LLMs, Closed-source LLMs, and a comparison of Instruction-based vs. Reasoning LLMs. The y-axis represents "Scores" ranging from 0 to 100, while the x-axis represents the datasets. Each chart uses grouped bar graphs to display the performance of different LLMs on each dataset.

### Components/Axes

* **Y-axis:** "Scores" (Scale: 0 to 100, increments of 20)

* **X-axis:** "Datasets" (Categories: HotpotQA, GSM8k, GPQA)

* **Chart 1 (Open-source LLMs):**

* Legend:

* LLaMA3.1-8B (Light Blue)

* LLaMA3.1-70B (Pale Green)

* Qwen2.5-7B (Light Orange)

* Qwen2.5-72B (Light Red)

* **Chart 2 (Closed-source LLMs):**

* Legend:

* Qwen2.5-72B (Light Blue)

* Claude3.5 (Pale Green)

* GPT-3.5 (Light Orange)

* GPT-4o (Light Red)

* **Chart 3 (Instruction-based vs. Reasoning LLMs):**

* Legend:

* Qwen2.5-72B (Light Blue)

* GPT-4o (Pale Green)

* QWQ-32B (Light Orange)

* DeepSeek-V3 (Light Red)

### Detailed Analysis

**Chart 1: Comparison of Open-source LLMs**

* **HotpotQA:**

* LLaMA3.1-8B: Approximately 62

* LLaMA3.1-70B: Approximately 86

* Qwen2.5-7B: Approximately 82

* Qwen2.5-72B: Approximately 88

* **GSM8k:**

* LLaMA3.1-8B: Approximately 78

* LLaMA3.1-70B: Approximately 92

* Qwen2.5-7B: Approximately 88

* Qwen2.5-72B: Approximately 90

* **GPQA:**

* LLaMA3.1-8B: Approximately 22

* LLaMA3.1-70B: Approximately 32

* Qwen2.5-7B: Approximately 28

* Qwen2.5-72B: Approximately 30

**Chart 2: Comparison of Closed-source LLMs**

* **HotpotQA:**

* Qwen2.5-72B: Approximately 92

* Claude3.5: Approximately 94

* GPT-3.5: Approximately 90

* GPT-4o: Approximately 96

* **GSM8k:**

* Qwen2.5-72B: Approximately 94

* Claude3.5: Approximately 96

* GPT-3.5: Approximately 92

* GPT-4o: Approximately 98

* **GPQA:**

* Qwen2.5-72B: Approximately 30

* Claude3.5: Approximately 34

* GPT-3.5: Approximately 28

* GPT-4o: Approximately 36

**Chart 3: Instruction-based vs. Reasoning LLMs**

* **HotpotQA:**

* Qwen2.5-72B: Approximately 92

* GPT-4o: Approximately 96

* QWQ-32B: Approximately 88

* DeepSeek-V3: Approximately 86

* **GSM8k:**

* Qwen2.5-72B: Approximately 94

* GPT-4o: Approximately 98

* QWQ-32B: Approximately 92

* DeepSeek-V3: Approximately 90

* **GPQA:**

* Qwen2.5-72B: Approximately 30

* GPT-4o: Approximately 36

* QWQ-32B: Approximately 26

* DeepSeek-V3: Approximately 28

### Key Observations

* GPT-4o consistently achieves the highest scores across all datasets in the Closed-source and Instruction-based vs. Reasoning LLMs charts.

* LLaMA3.1-70B and Qwen2.5-72B generally outperform LLaMA3.1-8B and Qwen2.5-7B in the Open-source LLMs chart.

* Performance on GPQA is significantly lower than on HotpotQA and GSM8k for all models.

* The gap in performance between open-source and closed-source models is noticeable, with closed-source models generally achieving higher scores.
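The closed-versus-open gap in the last observation can be quantified from this description's own readings. The sketch below averages the approximate Chart 1 scores (all four open-source models) and the Chart 2 scores for Claude3.5, GPT-3.5, and GPT-4o. Excluding the open-source Qwen2.5-72B baseline from the closed-source group is an assumption made here, not something the chart specifies; `mean_gap` is a hypothetical helper.

```python
from statistics import mean

# Approximate readings from the Detailed Analysis (eyeballed, not exact scores).
open_source = {  # Chart 1: LLaMA3.1-8B, LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B
    "HotpotQA": [62, 86, 82, 88],
    "GSM8k": [78, 92, 88, 90],
    "GPQA": [22, 32, 28, 30],
}
closed_source = {  # Chart 2: Claude3.5, GPT-3.5, GPT-4o (Qwen2.5-72B baseline excluded)
    "HotpotQA": [94, 90, 96],
    "GSM8k": [96, 92, 98],
    "GPQA": [34, 28, 36],
}


def mean_gap(closed, open_):
    """Closed-source mean minus open-source mean for each dataset, rounded to 0.1 points."""
    return {ds: round(mean(closed[ds]) - mean(open_[ds]), 1) for ds in closed}


print(mean_gap(closed_source, open_source))
```

On these readings the closed-source advantage is about 14 points on HotpotQA, 8 on GSM8k, and 5 on GPQA.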

### Interpretation

The data suggests that GPT-4o is the strongest of the models compared on these datasets. The larger models (e.g., LLaMA3.1-70B, Qwen2.5-72B) consistently outperform their smaller counterparts within the open-source category. The lower scores on GPQA indicate that this dataset, made up of graduate-level science questions, presents a greater challenge for all models. The outperformance of closed-source models may reflect larger training datasets or more sophisticated, often proprietary, architectures; the chart does not isolate either factor. The comparison between instruction-based and reasoning LLMs shows that both types of models can achieve high performance, but GPT-4o still leads in this category. Because the same ordering recurs across all three datasets, the observed differences are unlikely to be an artifact of any single benchmark.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha



## Comparative Analysis of Large Language Model (LLM) Performance Across Datasets

### Overview

The image is a composite of three bar charts comparing the performance of various Large Language Models (LLMs) on three distinct benchmark datasets: HotpotQA, GSM8k, and GPQA. The charts are organized by model type: open-source, closed-source, and a comparison of instruction-based versus reasoning-based models. All charts share a common y-axis labeled "Scores" (0-100) and x-axis labeled "Datasets."

### Components/Axes

* **Common Elements:**

* **Y-Axis:** Labeled "Scores," with major tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis:** Labeled "Datasets," with three categorical groups: "HotpotQA," "GSM8k," and "GPQA."

* **Legend:** Each chart has a legend in the top-right corner, mapping colors to specific model names.

* **Chart 1 (Left): "Comparison of Open-source LLMs"**

* **Legend (Top-Right):**

* Teal: `LLaMA3.1-8B`

* Yellow: `LLaMA3.1-70B`

* Light Purple: `Qwen2.5-7B`

* Salmon: `Qwen2.5-72B`

* **Chart 2 (Center): "Comparison of Closed-source LLMs"**

* **Legend (Top-Right):**

* Salmon: `Qwen2.5-72B`

* Blue: `Claude3.5`

* Orange: `GPT-3.5`

* Green: `GPT-4o`

* **Chart 3 (Right): "Instruction-based vs Reasoning-based"**

* **Legend (Top-Right):**

* Salmon: `Qwen2.5-72B`

* Green: `GPT-4o`

* Pink: `QWQ-32B`

* Purple: `DeepSeek-V3`

### Detailed Analysis

#### Chart 1: Comparison of Open-source LLMs

* **HotpotQA:** Performance increases with model size. `LLaMA3.1-8B` scores ~60, `LLaMA3.1-70B` ~85, `Qwen2.5-7B` ~75, and `Qwen2.5-72B` ~90.

* **GSM8k:** All models perform well. `LLaMA3.1-8B` ~78, `LLaMA3.1-70B` ~92, `Qwen2.5-7B` ~88, `Qwen2.5-72B` ~95 (highest in this chart).

* **GPQA:** This is the most challenging dataset for these models. `LLaMA3.1-8B` scores ~20, `LLaMA3.1-70B` ~40, `Qwen2.5-7B` ~32, `Qwen2.5-72B` ~30. Notably, the 70B LLaMA model outperforms the 72B Qwen model here.

#### Chart 2: Comparison of Closed-source LLMs

* **HotpotQA:** All models score very high and similarly, clustered between ~90 and ~95.

* **GSM8k:** Performance remains high and consistent across models, all scoring between ~92 and ~96.

* **GPQA:** A significant performance drop is observed for all models. `Qwen2.5-72B` scores ~30, `Claude3.5` ~60, `GPT-3.5` ~50, and `GPT-4o` ~52. `Claude3.5` shows the strongest performance on this difficult dataset.

#### Chart 3: Instruction-based vs Reasoning-based

* **HotpotQA:** `Qwen2.5-72B` and `GPT-4o` score ~90-92. `QWQ-32B` and `DeepSeek-V3` score slightly lower, around 85.

* **GSM8k:** `Qwen2.5-72B` and `GPT-4o` again lead with scores ~95. `QWQ-32B` scores ~85, and `DeepSeek-V3` ~88.

* **GPQA:** Performance is lower across the board. `Qwen2.5-72B` ~30, `GPT-4o` ~52, `QWQ-32B` ~28, `DeepSeek-V3` ~52. `GPT-4o` and `DeepSeek-V3` show a notable advantage over the other two models on this dataset.

### Key Observations

1. **Dataset Difficulty:** GPQA is consistently the most challenging benchmark, causing a dramatic performance drop for all models compared to HotpotQA and GSM8k.

2. **Model Scaling:** In the open-source chart, larger models (70B/72B) generally outperform smaller ones (7B/8B). The exception is GPQA, where `Qwen2.5-72B` (~30) scores below both `LLaMA3.1-70B` (~40) and its smaller sibling `Qwen2.5-7B` (~32).

3. **Closed-source Dominance:** Closed-source models (Chart 2) show less variance and maintain higher scores on the easier datasets (HotpotQA, GSM8k) compared to the open-source models.

4. **Performance Clustering:** On HotpotQA and GSM8k, top-tier models from all categories cluster in the 85-95 score range, suggesting these tasks may be approaching saturation for advanced LLMs.

5. **GPQA as a Discriminator:** The GPQA dataset effectively differentiates model capabilities, with `Claude3.5`, `GPT-4o`, and `DeepSeek-V3` showing a clear lead over others.
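Observations 4 and 5 both rest on how widely scores spread within a dataset. As a rough check, the sketch below takes the Chart 2 readings from the Detailed Analysis and measures each dataset's spread as max minus min. For HotpotQA and GSM8k the description only gives cluster bounds, so those bounds stand in for the individual bars; `spread` is a hypothetical helper, and max minus min is a deliberately crude measure.

```python
# Chart 2 (closed-source) readings as reported in the Detailed Analysis.
# HotpotQA and GSM8k were only described by their cluster bounds.
chart2 = {
    "HotpotQA": [90, 95],      # "clustered between ~90 and ~95"
    "GSM8k": [92, 96],         # "between ~92 and ~96"
    "GPQA": [30, 60, 50, 52],  # Qwen2.5-72B, Claude3.5, GPT-3.5, GPT-4o
}


def spread(scores):
    """Max minus min score: a crude measure of how well a dataset separates models."""
    return max(scores) - min(scores)


spreads = {ds: spread(values) for ds, values in chart2.items()}
print(spreads)
```

The GPQA spread (30 points) is several times the HotpotQA (5) and GSM8k (4) spreads, consistent with GPQA acting as the discriminating benchmark.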

### Interpretation

The data suggests a clear hierarchy of task difficulty for current LLMs, with GPQA representing a frontier challenge likely requiring deeper reasoning or specialized knowledge. The strong performance of closed-source models, particularly on the harder GPQA task, indicates potential advantages in training data, architecture, or post-training refinement.

The comparison between instruction-based and reasoning-based models (Chart 3) is less clear-cut from the labels alone, but the data shows that model performance is highly dataset-dependent. A model's strength on one benchmark (e.g., GSM8k) does not guarantee proportional strength on another (e.g., GPQA). The outlier performance of `LLaMA3.1-70B` on GPQA compared to the slightly larger `Qwen2.5-72B` suggests that raw parameter count is not the sole determinant of capability; training methodology and data quality are likely critical factors.

Overall, the charts demonstrate that while many models excel on standard benchmarks, the development of robust models that perform well across diverse and challenging tasks like GPQA remains an active area of competition and research.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Chart: Comparison of LLMs Across Datasets

### Overview

The image is a grouped bar chart comparing the performance of various Large Language Models (LLMs) across three datasets: **HotpotQA**, **GSM8k**, and **GPQA**. The chart is divided into three sections:

1. **Open-source LLMs**

2. **Closed-source LLMs**

3. **Instruction-based vs. Reasoning LLMs**

Each section color-codes its models, and scores are plotted on a 0 to 100 scale.

---

### Components/Axes

- **X-axis (Datasets)**:

- HotpotQA

- GSM8k

- GPQA

- **Y-axis (Scores)**:

- Scale: 0 to 100, with tick marks every 20.

- **Legends**:

- **Open-source LLMs**:

- LLaMA3.1-8B (teal)

- LLaMA3.1-70B (yellow)

- Qwen2.5-72B (red)

- **Closed-source LLMs**:

- Qwen2.5-72B (red)

- Claude3.5 (blue)

- GPT-3.5 (orange)

- GPT-4o (green)

- **Instruction-based vs. Reasoning LLMs**:

- Qwen2.5-72B (red)

- GPT-4o (green)

- QWQ-32B (pink)

- DeepSeek-V3 (purple)

---

### Detailed Analysis

#### Open-source LLMs

- **HotpotQA**:

- LLaMA3.1-8B: ~60

- LLaMA3.1-70B: ~85

- Qwen2.5-72B: ~90

- **GSM8k**:

- LLaMA3.1-8B: ~75

- LLaMA3.1-70B: ~88

- Qwen2.5-72B: ~92

- **GPQA**:

- LLaMA3.1-8B: ~20

- LLaMA3.1-70B: ~40

- Qwen2.5-72B: ~30

#### Closed-source LLMs

- **HotpotQA**:

- Qwen2.5-72B: ~90

- Claude3.5: ~92

- GPT-3.5: ~91

- GPT-4o: ~95

- **GSM8k**:

- Qwen2.5-72B: ~93

- Claude3.5: ~95

- GPT-3.5: ~94

- GPT-4o: ~97

- **GPQA**:

- Qwen2.5-72B: ~30

- Claude3.5: ~58

- GPT-3.5: ~52

- GPT-4o: ~53

#### Instruction-based vs. Reasoning LLMs

- **HotpotQA**:

- Qwen2.5-72B: ~90

- GPT-4o: ~95

- QWQ-32B: ~85

- DeepSeek-V3: ~98

- **GSM8k**:

- Qwen2.5-72B: ~93

- GPT-4o: ~97

- QWQ-32B: ~88

- DeepSeek-V3: ~100

- **GPQA**:

- Qwen2.5-72B: ~30

- GPT-4o: ~52

- QWQ-32B: ~25

- DeepSeek-V3: ~50

---

### Key Observations

1. **Open-source LLMs**:

- Qwen2.5-72B outperforms both LLaMA variants on HotpotQA and GSM8k, but on GPQA LLaMA3.1-70B (~40) leads Qwen2.5-72B (~30).

- LLaMA3.1-8B struggles significantly on GPQA (~20); LLaMA3.1-70B doubles that score (~40).

2. **Closed-source LLMs**:

- GPT-4o has the highest HotpotQA (~95) and GSM8k (~97) scores, but Claude3.5 leads on GPQA (~58 vs. ~53).

- Claude3.5 and GPT-3.5 show similar performance, with Claude3.5 slightly ahead in HotpotQA.

3. **Instruction-based vs. Reasoning LLMs**:

- **Instruction-based models** (Qwen2.5-72B, GPT-4o) do well on **GSM8k** (math word problems), scoring ~93 and ~97.

- **DeepSeek-V3** has the highest HotpotQA (~98) and GSM8k (~100) scores in this section but drops to ~50 on GPQA.

- QWQ-32B, the reasoning-oriented model in this group, has the lowest GPQA score (~25).
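The closed-source claims can be checked directly against the readings in the Detailed Analysis. This sketch, using a hypothetical `leader_per_dataset` helper, returns the top-scoring model for each dataset from those approximate values.

```python
# Approximate Closed-source readings from the Detailed Analysis (eyeballed).
closed = {
    "Qwen2.5-72B": {"HotpotQA": 90, "GSM8k": 93, "GPQA": 30},
    "Claude3.5": {"HotpotQA": 92, "GSM8k": 95, "GPQA": 58},
    "GPT-3.5": {"HotpotQA": 91, "GSM8k": 94, "GPQA": 52},
    "GPT-4o": {"HotpotQA": 95, "GSM8k": 97, "GPQA": 53},
}


def leader_per_dataset(scores):
    """Return the highest-scoring model for each dataset."""
    datasets = next(iter(scores.values())).keys()
    return {ds: max(scores, key=lambda model: scores[model][ds]) for ds in datasets}


print(leader_per_dataset(closed))
```

With these approximate values GPT-4o leads HotpotQA and GSM8k, while Claude3.5 leads GPQA.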

---

### Interpretation

- **Model Type Impact**:

- Closed-source models (e.g., GPT-4o) generally outperform open-source models, suggesting proprietary architectures or training data advantages.

- Instruction-based models (e.g., Qwen2.5-72B) score highly on math word problems (GSM8k) but much lower on GPQA.

- **Dataset-Specific Trends**:

- **GSM8k** (grade-school math word problems): Instruction-based models (Qwen2.5-72B, GPT-4o) achieve high scores (~93 to ~97).

- **GPQA** (graduate-level science questions): Open-source models (LLaMA3.1-8B) perform poorly (~20), while closed-source models (GPT-4o) achieve moderate scores (~53).

- **Anomalies**:

- DeepSeek-V3 achieves the highest GSM8k score in its section (~100) but only ~50 on GPQA, suggesting that strength on math word problems does not fully carry over to graduate-level science questions.

- QWQ-32B, the reasoning-oriented model in this group, has the lowest GPQA score in its section (~25).

- **Implications**:

- Closed-source models may offer better reliability for high-stakes applications.

- Instruction-based models score well on structured math problems (GSM8k) but less consistently on harder, knowledge-intensive questions (GPQA).

---

### Spatial Grounding & Trend Verification

- **Legend Placement**:

- Open-source legend: Top-left of the first section.

- Closed-source legend: Top-left of the second section.

- Instruction-based vs. Reasoning legend: Top-left of the third section.

- **Color Consistency**:

- Red consistently represents Qwen2.5-72B across all sections.

- Green represents GPT-4o in closed-source and instruction-based sections.

- **Trend Validation**:

- In Open-source LLMs, Qwen2.5-72B (red) has the tallest bars on HotpotQA and GSM8k but falls to ~30 on GPQA, below LLaMA3.1-70B.

- In Closed-source LLMs, GPT-4o (green) stays high on HotpotQA and GSM8k (~95 to ~97) and drops to ~53 on GPQA.

---

### Content Details

- **Textual Elements**:

- No non-English text detected.

- Dataset labels and model names are explicitly annotated in legends.

- **Data Table Reconstruction**:

| Dataset | Model | Score |
|-------------|---------------------|-------|
| HotpotQA | LLaMA3.1-8B | ~60 |
| HotpotQA | LLaMA3.1-70B | ~85 |
| HotpotQA | Qwen2.5-72B | ~90 |
| GSM8k | LLaMA3.1-8B | ~75 |
| GSM8k | LLaMA3.1-70B | ~88 |
| GSM8k | Qwen2.5-72B | ~92 |
| GPQA | LLaMA3.1-8B | ~20 |
| GPQA | LLaMA3.1-70B | ~40 |
| GPQA | Qwen2.5-72B | ~30 |
| ... (repeated for closed-source and instruction-based sections) | | |

---
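The reconstructed table in Content Details uses a long format (one row per dataset-model pair). A minimal sketch, assuming the same approximate open-source values, pivots those rows into a model-by-dataset grid with `collections.defaultdict`; the `pivot` function is illustrative, and the closed-source and instruction-based rows that the table elides are not filled in.

```python
from collections import defaultdict

# Rows of the reconstructed open-source table: (dataset, model, approximate score).
rows = [
    ("HotpotQA", "LLaMA3.1-8B", 60), ("HotpotQA", "LLaMA3.1-70B", 85), ("HotpotQA", "Qwen2.5-72B", 90),
    ("GSM8k", "LLaMA3.1-8B", 75), ("GSM8k", "LLaMA3.1-70B", 88), ("GSM8k", "Qwen2.5-72B", 92),
    ("GPQA", "LLaMA3.1-8B", 20), ("GPQA", "LLaMA3.1-70B", 40), ("GPQA", "Qwen2.5-72B", 30),
]


def pivot(long_rows):
    """Turn long-format (dataset, model, score) rows into {model: {dataset: score}}."""
    wide = defaultdict(dict)
    for dataset, model, score in long_rows:
        wide[model][dataset] = score
    return dict(wide)


datasets = ["HotpotQA", "GSM8k", "GPQA"]
table = pivot(rows)
print(f"{'Model':<14}" + "".join(f"{d:>10}" for d in datasets))
for model, scores in table.items():
    print(f"{model:<14}" + "".join(f"{scores[d]:>10}" for d in datasets))
```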

### Final Notes

The chart highlights trade-offs between model openness, model size, and task type. While closed-source models lead overall, open-source models like Qwen2.5-72B come close on HotpotQA and GSM8k. Further analysis could explore training data size, computational resources, or fine-tuning strategies to explain these disparities.
